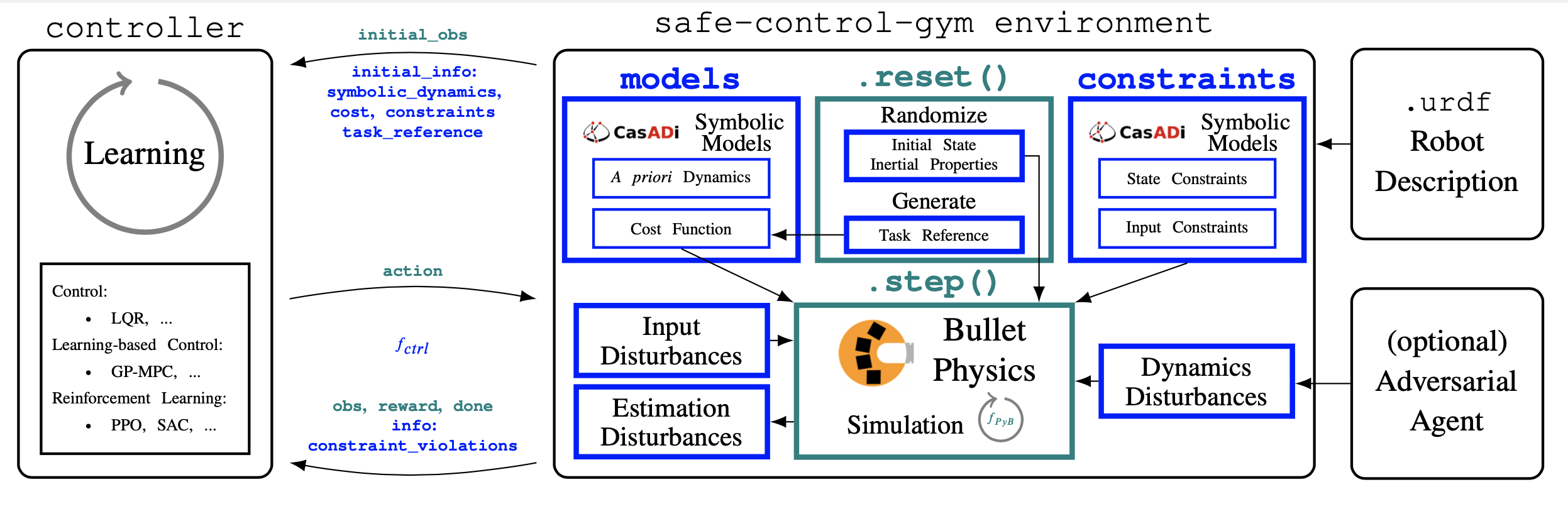

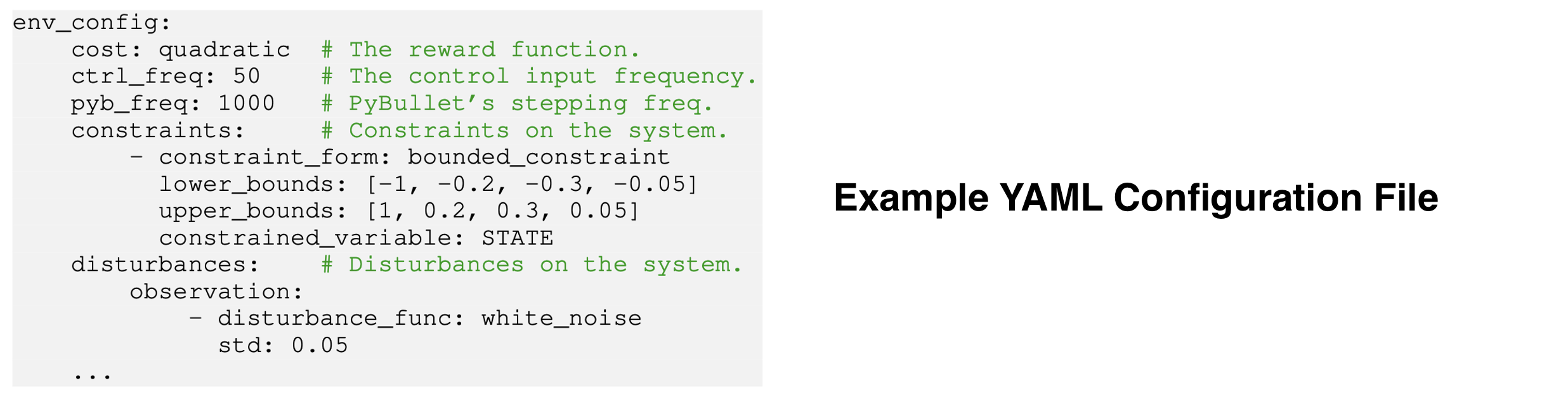

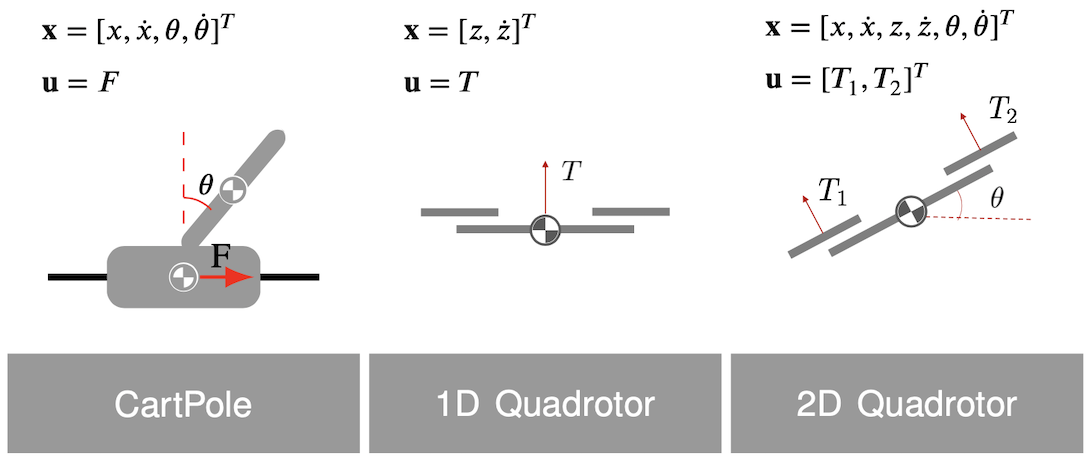

Physics-based CartPole and Quadrotor Gym environments (using PyBullet) with symbolic a priori dynamics (using CasADi) for learning-based control, and model-free and model-based reinforcement learning (RL).

These environments include (and evaluate) symbolic safety constraints and implement input, parameter, and dynamics disturbances to test the robustness and generalizability of control approaches. [PDF]
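As a rough illustration of these two ideas, the sketch below evaluates a box constraint written in the form g(x) <= 0 and adds Gaussian noise to an action as an input disturbance. It uses only the Python standard library; the function names (`box_constraint`, `is_satisfied`, `disturb_input`) and all numeric values are hypothetical and are not part of safe-control-gym's API.

```python
import random


def box_constraint(state, lower, upper):
    """Return g(x) values; the constraint holds when every entry is <= 0."""
    values = []
    for x, lo, hi in zip(state, lower, upper):
        values.append(lo - x)  # x >= lo  is equivalent to  lo - x <= 0
        values.append(x - hi)  # x <= hi  is equivalent to  x - hi <= 0
    return values


def is_satisfied(constraint_values):
    """True when no entry of g(x) is positive."""
    return all(v <= 0.0 for v in constraint_values)


def disturb_input(action, std, rng):
    """Add zero-mean Gaussian noise to each action entry (an input disturbance)."""
    return [a + rng.gauss(0.0, std) for a in action]


rng = random.Random(0)  # seeded so repeated runs draw the same noise

# Hypothetical 2D state whose second entry lies outside its bounds.
state = [0.1, -0.3]
g = box_constraint(state, lower=[-1.0, -0.2], upper=[1.0, 0.2])
print("constraint satisfied:", is_satisfied(g))

# Hypothetical scalar action perturbed by noise with standard deviation 0.05.
noisy_action = disturb_input([0.5], std=0.05, rng=rng)
print("disturbed action:", noisy_action)
```

In the environments themselves, the constraints are symbolic rather than plain Python functions, so treat this only as a picture of what "evaluating a constraint" and "disturbing an input" mean.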

@article{brunke2021safe,
  title={Safe Learning in Robotics: From Learning-Based Control to Safe Reinforcement Learning},
  author={Lukas Brunke and Melissa Greeff and Adam W. Hall and Zhaocong Yuan and Siqi Zhou and Jacopo Panerati and Angela P. Schoellig},
  journal={Annual Review of Control, Robotics, and Autonomous Systems},
  year={2021},
  url={https://arxiv.org/abs/2108.06266}}

To reproduce the results in the article, see branch ar.

@misc{yuan2021safecontrolgym,
  title={safe-control-gym: a Unified Benchmark Suite for Safe Learning-based Control and Reinforcement Learning},
  author={Zhaocong Yuan and Adam W. Hall and Siqi Zhou and Lukas Brunke and Melissa Greeff and Jacopo Panerati and Angela P. Schoellig},
  year={2021},
  eprint={2109.06325},
  archivePrefix={arXiv},
  primaryClass={cs.RO}}

To reproduce the results in the article, see branch submission.

git clone https://github.com/utiasDSL/safe-control-gym.git

cd safe-control-gym

Create and access a Python 3.10 environment using conda:

conda create -n safe python=3.10

conda activate safe

Install the safe-control-gym repository:

pip install --upgrade pip

pip install -e .

You may need to separately install gmp, a dependency of pycddlib:

conda install -c anaconda gmpor

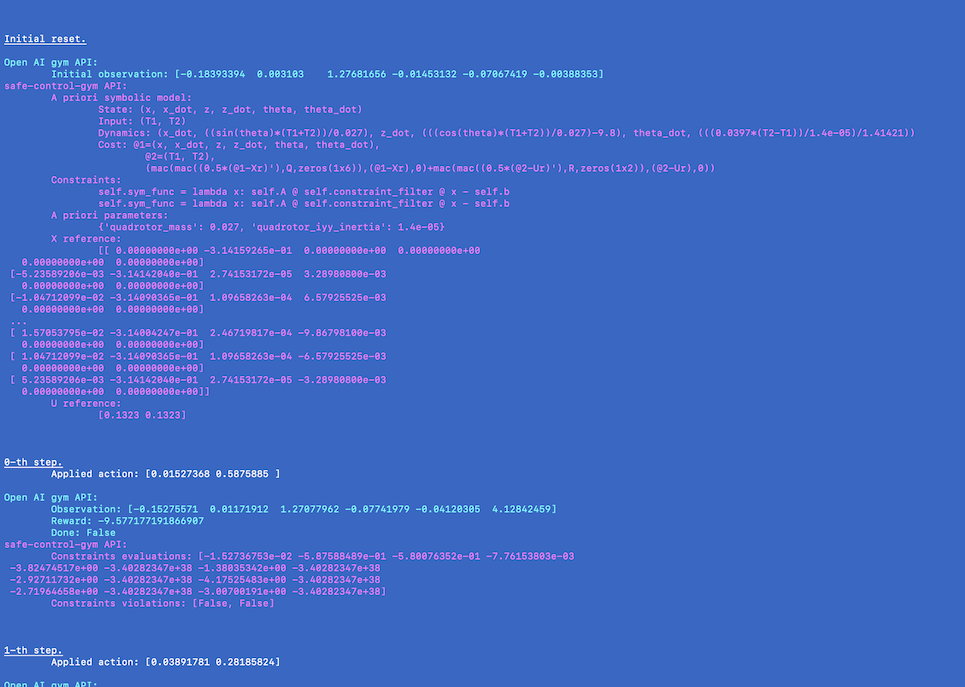

sudo apt-get install libgmp-devOverview of safe-control-gym's API:

Familiarize yourself with the APIs and environments using the scripts in examples/.
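The example commands in this section pass one or more YAML files through `--overrides` and a dotted `key=value` pair through `--kv_overrides`. The sketch below shows one plausible way such layered overrides can be combined, assuming that later sources take precedence over earlier ones. It is not the repository's implementation: the helpers `merge_nested`, `parse_value`, and `apply_kv_override` are hypothetical, and the dictionary contents (apart from `algo_config.training`) are made-up stand-ins for the YAML files.

```python
import copy


def merge_nested(base, override):
    """Recursively merge override into a copy of base; override wins on conflicts."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_nested(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


def parse_value(text):
    """Interpret 'True'/'False' and numbers; fall back to the raw string."""
    if text in ("True", "False"):
        return text == "True"
    for cast in (int, float):
        try:
            return cast(text)
        except ValueError:
            pass
    return text


def apply_kv_override(config, assignment):
    """Apply a dotted 'a.b.c=value' assignment to a copy of config."""
    dotted_key, raw_value = assignment.split("=", 1)
    nested = parse_value(raw_value)
    # Wrap the value from the innermost key outwards: a.b=1 becomes {"a": {"b": 1}}.
    for part in reversed(dotted_key.split(".")):
        nested = {part: nested}
    return merge_nested(config, nested)


# Hypothetical contents standing in for a task override file and an algorithm override file.
task_overrides = {"task_config": {"ctrl_freq": 50, "gui": False}}
algo_overrides = {"algo_config": {"training": True, "hidden_dim": 128}}

config = merge_nested(task_overrides, algo_overrides)
config = apply_kv_override(config, "algo_config.training=False")
print(config["algo_config"])
```

Under this assumed precedence, the key-value pair flips only `training` and leaves the other settings loaded from the files untouched.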

cd ./examples/ # Navigate to the examples folder

python3 pid/pid_experiment.py \
    --algo pid \
    --task quadrotor \
    --overrides \
        ./pid/config_overrides/quadrotor_3D/quadrotor_3D_tracking.yaml

cd ./examples/ # Navigate to the examples folder

python3 lqr/lqr_experiment.py \
    --algo lqr \
    --task cartpole \
    --overrides \
        ./lqr/config_overrides/cartpole/cartpole_stabilization.yaml \
        ./lqr/config_overrides/cartpole/lqr_cartpole_stabilization.yaml

cd ./examples/rl/ # Navigate to the RL examples folder

python3 rl_experiment.py \
    --algo ppo \
    --task quadrotor \
    --overrides \
        ./config_overrides/quadrotor_2D/quadrotor_2D_track.yaml \
        ./config_overrides/quadrotor_2D/ppo_quadrotor_2D.yaml \
    --kv_overrides \
        algo_config.training=False

cd ./examples/ # Navigate to the examples folder

python3 no_controller/verbose_api.py \
    --task cartpole \
    --overrides no_controller/verbose_api.yaml

We compare the sample efficiency of safe-control-gym with the original OpenAI Cartpole and PyBullet Gym's Inverted Pendulum, as well as gym-pybullet-drones.

We choose the default physics simulation integration step of each project.

We report performance results for open-loop, random action inputs.

Note that the Bullet engine frequency reported for safe-control-gym is typically much finer grained for improved fidelity.

The safe-control-gym quadrotor environment is not as lightweight as gym-pybullet-drones, but it provides a speed-up of the same order of magnitude and several additional safety features and symbolic models.

| Environment | GUI | Control Freq. | PyBullet Freq. | Constraints & Disturbances^ | Speed-Up^^ |
|---|---|---|---|---|---|
| Gym cartpole | True | 50Hz | N/A | No | 1.16x |
| InvPenPyBulletEnv | False | 60Hz | 60Hz | No | 158.29x |
| cartpole | True | 50Hz | 50Hz | No | 0.85x |
| cartpole | False | 50Hz | 1000Hz | No | 24.73x |
| cartpole | False | 50Hz | 1000Hz | Yes | 22.39x |
| gym-pyb-drones | True | 48Hz | 240Hz | No | 2.43x |
| gym-pyb-drones | False | 50Hz | 1000Hz | No | 21.50x |
| quadrotor | True | 60Hz | 240Hz | No | 0.74x |
| quadrotor | False | 50Hz | 1000Hz | No | 9.28x |
| quadrotor | False | 50Hz | 1000Hz | Yes | 7.62x |

^ Whether the environment includes a default set of constraints and disturbances

^^ Speed-up = Elapsed Simulation Time / Elapsed Wall Clock Time; on a 2.30GHz Quad-Core i7-1068NG7 with 32GB 3733MHz LPDDR4X; no GPU
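The speed-up definition above can be sketched in a few lines of Python. This is a minimal illustration, not the benchmarking script used for the table: `measure_speed_up`, `physics_substeps`, and `dummy_step` are hypothetical names, the dummy step stands in for an environment step with a random action, and the sketch assumes that each control step advances simulated time by 1 / (control frequency) and that the PyBullet frequency is an integer multiple of the control frequency.

```python
import time


def physics_substeps(ctrl_freq, pyb_freq):
    """Number of physics steps per control step, assuming an integer ratio."""
    if pyb_freq % ctrl_freq != 0:
        raise ValueError("pyb_freq must be an integer multiple of ctrl_freq")
    return pyb_freq // ctrl_freq


def measure_speed_up(step_fn, num_steps, ctrl_freq):
    """Return (simulated seconds, wall-clock seconds, speed-up factor)."""
    start = time.perf_counter()
    for _ in range(num_steps):
        step_fn()
    wall_time = time.perf_counter() - start
    sim_time = num_steps / ctrl_freq  # each control step covers 1 / ctrl_freq seconds
    speed_up = sim_time / wall_time if wall_time > 0 else float("inf")
    return sim_time, wall_time, speed_up


def dummy_step():
    """Placeholder workload standing in for one environment step."""
    sum(i * i for i in range(100))


substeps = physics_substeps(ctrl_freq=50, pyb_freq=1000)
sim_time, wall_time, speed_up = measure_speed_up(dummy_step, num_steps=500, ctrl_freq=50)
print(f"{substeps} physics steps per control step")
print(f"simulated {sim_time:.1f}s in {wall_time:.4f}s of wall-clock time: {speed_up:.2f}x")
```

A value above 1x means the simulation runs faster than real time. The printed factor depends entirely on the machine and the workload, so it is not comparable to the figures in the table.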

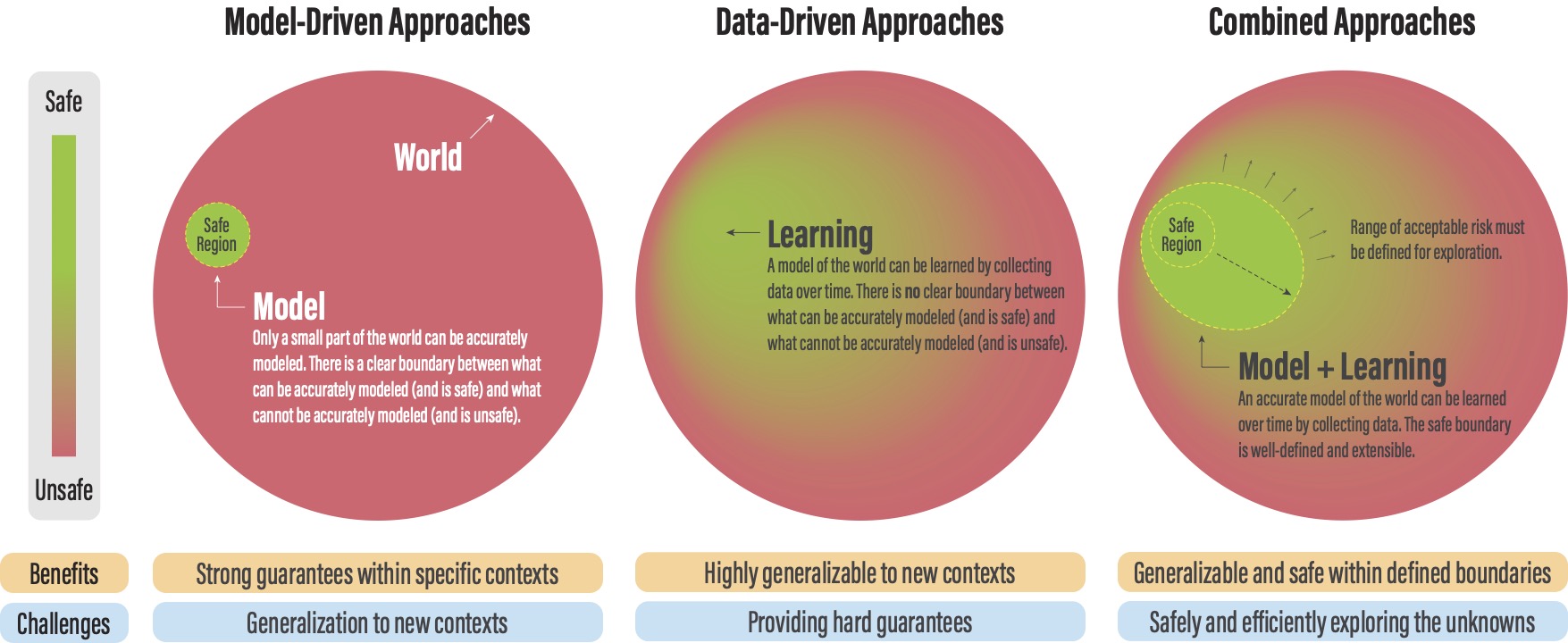

- Brunke, L., Greeff, M., Hall, A. W., Yuan, Z., Zhou, S., Panerati, J., & Schoellig, A. P. (2022). Safe learning in robotics: From learning-based control to safe reinforcement learning. Annual Review of Control, Robotics, and Autonomous Systems, 5, 411-444.

- Yuan, Z., Hall, A. W., Zhou, S., Brunke, L., Greeff, M., Panerati, J., & Schoellig, A. P. (2022). safe-control-gym: A unified benchmark suite for safe learning-based control and reinforcement learning in robotics. IEEE Robotics and Automation Letters, 7(4), 11142-11149.

- gym-pybullet-drones: single and multi-quadrotor environments
- stable-baselines3: PyTorch reinforcement learning algorithms
- bullet3: multi-physics simulation engine
- gym: OpenAI reinforcement learning toolkit
- casadi: symbolic framework for numeric optimization
- safety-gym: environments for safe exploration in RL
- realworldrl_suite: real-world RL challenge framework
- gym-marl-reconnaissance: multi-agent heterogeneous (UAV/UGV) environments

University of Toronto's Dynamic Systems Lab / Vector Institute for Artificial Intelligence