Archiving mempool transactions in Parquet and CSV format.

The data is freely available at https://mempool-dumpster.flashbots.net

Overview:

- Data is published under the CC-0 public domain license

- Saving about 1M - 2M unique transactions per day

- This project is under active development and the codebase might change significantly without notice. The functionality itself is pretty stable.

- Related tooling: https://github.com/dvush/mempool-dumpster-rs

- Introduction & guide: https://collective.flashbots.net/t/mempool-dumpster-a-free-mempool-transaction-archive/2401

- Generic EL nodes - go-ethereum, Infura, etc. (Websockets, using newPendingTransactions)

- Alchemy (Websockets, using alchemy_pendingTransactions, warning - burns a lot of credits)

- bloXroute (Websockets and gRPC)

- Chainbound Fiber (gRPC)

- Eden (Websockets)

Note: Some sources send transactions that are already included on-chain, which are discarded (not added to archive or summary)

Daily files uploaded by mempool-dumpster (e.g. for September 2023):

- Parquet file with transaction metadata and raw transactions (~800MB/day, e.g. 2023-09-08.parquet)

- CSV file with only the transaction metadata (~100MB/day zipped, e.g. 2023-09-08.csv.zip)

- CSV file with details about when each transaction was received by any source (~100MB/day zipped, e.g. 2023-09-08_sourcelog.csv.zip)

- Summary in text format (~2kB, e.g. 2023-09-08_summary.txt)

- When is the data uploaded? ... The data for the previous day is uploaded daily between 4:00 and 4:30 UTC.

- What are exclusive transactions? ... An exclusive transaction is one that was provided by a single source only and seen from no other source. These might include recycled transactions (which were already seen long ago but not included, and were later resent by a transaction source).

- What does "XOF" stand for? ... XOF stands for "exclusive orderflow" (i.e. exclusive transactions).

- What is a-pool? ... A-Pool is a regular geth node with some optimized peering settings, subscribed to over the network.

- gRPC vs Websockets? ... bloXroute and Chainbound are connected with gRPC; all other sources are connected with Websockets (note that gRPC has a lower latency than Websockets).
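The definition of exclusive transactions above can be illustrated with a minimal Go sketch. It is not taken from the mempool-dumpster codebase: the function names and the source names "local" and "chainbound" are assumptions made for this example, and the only rule it encodes is the one stated in the FAQ (a transaction is exclusive when exactly one source provided it).

```go
package main

import "fmt"

// exclusiveSource returns the single source of a transaction and true if the
// transaction was seen from exactly one source; otherwise it returns "" and false.
func exclusiveSource(sources []string) (string, bool) {
	if len(sources) == 1 {
		return sources[0], true
	}
	return "", false
}

// countExclusive tallies exclusive transactions per source.
// txSources maps a transaction hash to the sources that reported it
// (a hypothetical in-memory stand-in for the "sources" column).
func countExclusive(txSources map[string][]string) map[string]int {
	counts := make(map[string]int)
	for _, sources := range txSources {
		if src, ok := exclusiveSource(sources); ok {
			counts[src]++
		}
	}
	return counts
}

func main() {
	// Source names are illustrative assumptions.
	txSources := map[string][]string{
		"0xaaa": {"bloxroute"},
		"0xbbb": {"bloxroute", "local"},
		"0xccc": {"chainbound"},
	}
	fmt.Println(countExclusive(txSources))
}
```

This mirrors the `length(sources) == 1` filter used in the ClickHouse queries in this document, just expressed over in-memory data.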

Apache Parquet is a column-oriented data file format designed for efficient data storage and retrieval. It provides efficient data compression and encoding schemes with enhanced performance to handle complex data in bulk.

We recommend using ClickHouse local (as well as DuckDB) to work with Parquet files. It makes it easy to run queries like:

# show the schema

$ clickhouse local -q "DESCRIBE TABLE 'transactions.parquet';"

timestamp Nullable(DateTime64(3))

hash Nullable(String)

chainId Nullable(String)

from Nullable(String)

to Nullable(String)

value Nullable(String)

nonce Nullable(String)

gas Nullable(String)

gasPrice Nullable(String)

gasTipCap Nullable(String)

gasFeeCap Nullable(String)

dataSize Nullable(Int64)

data4Bytes Nullable(String)

sources Array(Nullable(String))

includedAtBlockHeight Nullable(Int64)

includedBlockTimestamp Nullable(DateTime64(3))

inclusionDelayMs Nullable(Int64)

rawTx Nullable(String)

# count rows

$ clickhouse local -q "SELECT count(*) FROM 'transactions.parquet' LIMIT 1;"

# get the first hash+rawTx

$ clickhouse local -q "SELECT hash,hex(rawTx) FROM 'transactions.parquet' LIMIT 1;"

# get details of a particular hash

$ clickhouse local -q "SELECT timestamp,hash,from,to,hex(rawTx) FROM 'transactions.parquet' WHERE hash='0x152065ad73bcf63f68572f478e2dc6e826f1f434cb488b993e5956e6b7425eed';"

# get exclusive transactions from bloxroute

$ clickhouse local -q "SELECT COUNT(*) FROM 'transactions.parquet' WHERE length(sources) == 1 AND sources[1] == 'bloxroute';"

# get count of landed vs not-landed exclusive transactions, by source

$ clickhouse local -q "WITH includedBlockTimestamp!=0 as included SELECT sources[1], included, count(included) FROM 'transactions.parquet' WHERE length(sources) == 1 GROUP BY sources[1], included;"

# get uniswap v2 transactions

$ clickhouse local -q "SELECT COUNT(*) FROM 'transactions.parquet' WHERE to='0x7a250d5630b4cf539739df2c5dacb4c659f2488d';"

# get uniswap v2 transactions and separate by included/not-included

$ clickhouse local -q "WITH includedBlockTimestamp!=0 as included SELECT included, COUNT(included) FROM 'transactions.parquet' WHERE to='0x7a250d5630b4cf539739df2c5dacb4c659f2488d' GROUP BY included;"

# get inclusion delay for uniswap v2 transactions (time between receiving and being included on-chain)

$ clickhouse local -q "WITH inclusionDelayMs/1000 as incdelay SELECT quantiles(0.5, 0.9, 0.99)(incdelay), avg(incdelay) as avg FROM 'transactions.parquet' WHERE to='0x7a250d5630b4cf539739df2c5dacb4c659f2488d' AND includedBlockTimestamp!=0;"

# count uniswap v2 contract methods

$ clickhouse local -q "SELECT data4Bytes, COUNT(data4Bytes) FROM 'transactions.parquet' WHERE to='0x7a250d5630b4cf539739df2c5dacb4c659f2488d' GROUP BY data4Bytes;"

See this post for more details: https://collective.flashbots.net/t/mempool-dumpster-a-free-mempool-transaction-archive/2401

- Number of transactions that eventually land on-chain + inclusionDelay (by source)

- Transaction quality (e.g. for high-volume XOF sources)

- Trash transactions

Feel free to continue the conversation in the Flashbots Forum!

You can easily run the included analyzer to create summaries like 2023-09-22_summary.txt:

- First, download the parquet and sourcelog files from https://mempool-dumpster.flashbots.net/ethereum/mainnet/2023-09

- Then run the analyzer:

go run cmd/analyze/* \

--out summary.txt \

--input-parquet /mnt/data/mempool-dumpster/2023-09-22/2023-09-22.parquet \

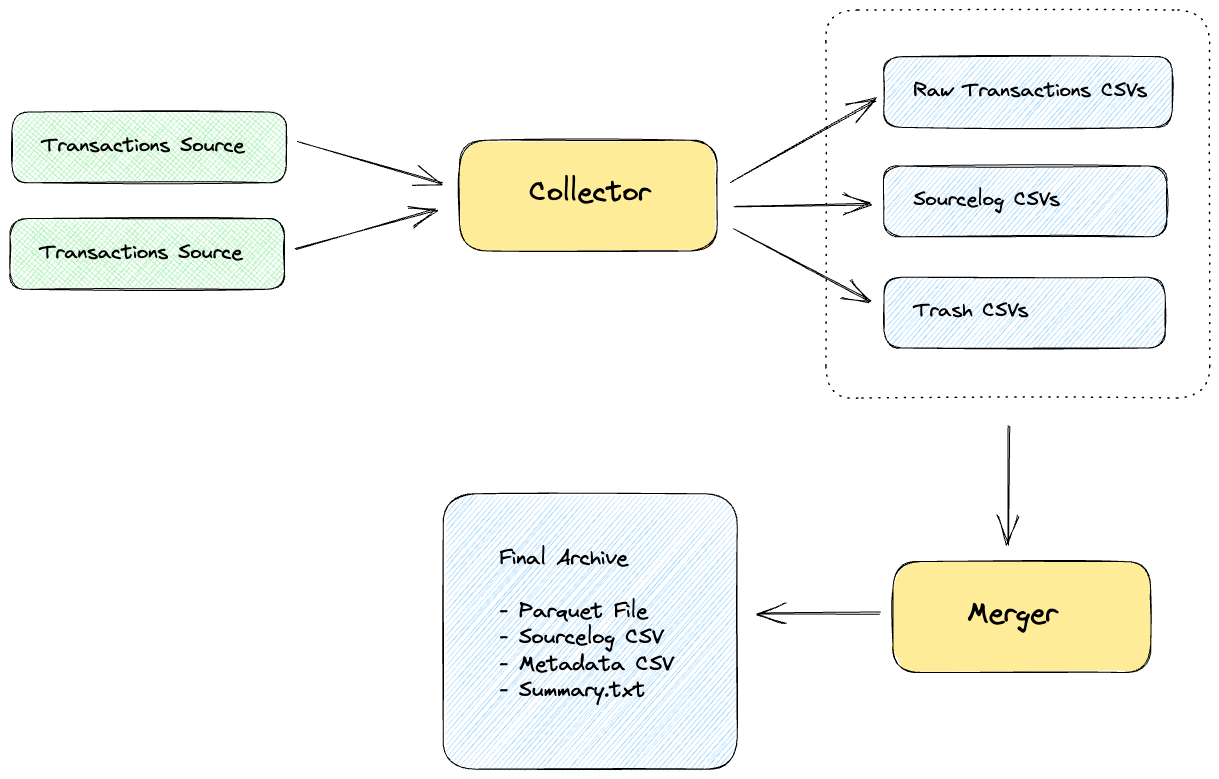

--input-sourcelog /mnt/data/mempool-dumpster/2023-09-22/2023-09-22_sourcelog.csv.zip

- Collector: Connects to EL nodes and writes new mempool transactions and sourcelog to hourly CSV files. Multiple collector instances can run without colliding.

- Merger: Takes collector CSV files as input, de-duplicates, checks transaction inclusion status, sorts by timestamp and writes output files (Parquet, CSV and Summary).

- Analyzer: Analyzes sourcelog CSV files and produces summary report.

- Website: Website dev-mode as well as build + upload.

- Subscribes to new pending transactions at various data sources

- Writes 3 files:

  - Transactions CSV: timestamp_ms, hash, raw_tx (one file per hour by default)

  - Sourcelog CSV: timestamp_ms, hash, source (one entry for every single transaction received by any source)

  - Trash CSV: timestamp_ms, hash, source, reason, note (trash transactions received by any source, these are not added to the transactions CSV; currently only if already included in a previous block)

- Note: the collector can store transactions repeatedly, and only the merger will properly deduplicate them later

Default filenames:

Transactions

- Schema: <out_dir>/<date>/transactions/txs_<date>_<uid>.csv

- Example: out/2023-08-07/transactions/txs_2023-08-07-10-00_collector1.csv

Sourcelog

- Schema: <out_dir>/<date>/sourcelog/src_<date>_<uid>.csv

- Example: out/2023-08-07/sourcelog/src_2023-08-07-10-00_collector1.csv

Trash

- Schema: <out_dir>/<date>/trash/trash_<date>_<uid>.csv

- Example: out/2023-08-07/trash/trash_2023-08-07-10-00_collector1.csv

Running the mempool collector:

# print help

go run cmd/collect/main.go -help

# Connect to ws://localhost:8546 and write CSVs into ./out

go run cmd/collect/main.go -out ./out

# Connect to multiple nodes

go run cmd/collect/main.go -out ./out -nodes ws://server1.com:8546,ws://server2.com:8546

- Iterates over collector output directory / CSV files

- Deduplicates transactions, sorts them by timestamp
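The deduplicate-and-sort step can be sketched in Go as follows. This is an illustration only, not the merger's implementation: the `txRecord` type and `mergeRecords` function are hypothetical, and keeping the earliest timestamp for a duplicated hash is an assumption (the text above only says that duplicates are removed and the result is sorted by timestamp).

```go
package main

import (
	"fmt"
	"sort"
)

// txRecord is a hypothetical in-memory form of one row of the
// transactions CSV (timestamp_ms, hash, raw_tx).
type txRecord struct {
	TimestampMs int64
	Hash        string
	RawTx       string
}

// mergeRecords combines the output of several collectors, keeps one record
// per hash (assumption: the one with the earliest timestamp), and returns
// the records sorted by timestamp, with the hash as a tie-breaker.
func mergeRecords(inputs ...[]txRecord) []txRecord {
	earliest := make(map[string]txRecord)
	for _, records := range inputs {
		for _, r := range records {
			if prev, seen := earliest[r.Hash]; !seen || r.TimestampMs < prev.TimestampMs {
				earliest[r.Hash] = r
			}
		}
	}
	merged := make([]txRecord, 0, len(earliest))
	for _, r := range earliest {
		merged = append(merged, r)
	}
	sort.Slice(merged, func(i, j int) bool {
		if merged[i].TimestampMs != merged[j].TimestampMs {
			return merged[i].TimestampMs < merged[j].TimestampMs
		}
		return merged[i].Hash < merged[j].Hash
	})
	return merged
}

func main() {
	collector1 := []txRecord{{TimestampMs: 30, Hash: "0xb"}, {TimestampMs: 20, Hash: "0xa"}}
	collector2 := []txRecord{{TimestampMs: 10, Hash: "0xb"}}
	for _, r := range mergeRecords(collector1, collector2) {
		fmt.Println(r.TimestampMs, r.Hash)
	}
}
```

Deduplicating in the merger rather than in the collector is what allows collectors to store the same transaction repeatedly without coordinating with each other.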

go run cmd/merge/main.go -h

- Keep it simple and stupid

- Vendor-agnostic (main flow should work on any server, independent of a cloud provider)

- Downtime-resilience to minimize any gaps in the archive

- Multiple collector instances can run concurrently, without getting in each other's way

- Merger produces the final archive (based on the input of multiple collector outputs)

- The final archive:

- Includes (1) parquet file with transaction metadata, and (2) compressed file of raw transaction CSV files

- Compatible with ClickHouse and S3 Select (Parquet using gzip compression)

- Easily distributable as torrent

NodeConnection

- One for each EL connection

- New pending transactions are sent to TxProcessor via a channel

TxProcessor

- Check if it already processed that tx

- Store it in the output directory
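The NodeConnection and TxProcessor roles described above follow a common Go fan-in pattern, sketched below. The names `pendingTx`, `runNodeConnection`, `processTxs`, and `collect` are hypothetical and do not come from the project's code; real connections would read from a Websocket or gRPC subscription, and "storing" is reduced here to appending to a slice.

```go
package main

import (
	"fmt"
	"sync"
)

// pendingTx is a hypothetical message passed from a connection to the processor.
type pendingTx struct {
	Hash   string
	Source string
}

// runNodeConnection stands in for one EL connection: it forwards every
// transaction hash it "receives" to the shared channel.
func runNodeConnection(source string, hashes []string, txC chan<- pendingTx, wg *sync.WaitGroup) {
	defer wg.Done()
	for _, h := range hashes {
		txC <- pendingTx{Hash: h, Source: source}
	}
}

// processTxs drains the channel, skips hashes it has already processed, and
// returns the unique hashes in the order they were first seen.
func processTxs(txC <-chan pendingTx) []string {
	seen := make(map[string]bool)
	var stored []string
	for tx := range txC {
		if seen[tx.Hash] {
			continue
		}
		seen[tx.Hash] = true
		stored = append(stored, tx.Hash) // placeholder for writing to the output directory
	}
	return stored
}

// collect starts one goroutine per connection and a single processor.
func collect(feeds map[string][]string) []string {
	txC := make(chan pendingTx, 16)
	var wg sync.WaitGroup
	for source, hashes := range feeds {
		wg.Add(1)
		go runNodeConnection(source, hashes, txC, &wg)
	}
	// Close the channel once all connections are done so the processor can return.
	go func() {
		wg.Wait()
		close(txC)
	}()
	return processTxs(txC)
}

func main() {
	stored := collect(map[string][]string{
		"node1": {"0x01", "0x02"},
		"node2": {"0x02", "0x03"},
	})
	fmt.Println(len(stored), "unique transactions stored")
}
```

Keeping the "already processed" set inside a single processor goroutine avoids the need for a mutex around it, since only that goroutine reads or writes the map.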

- Uses https://github.com/xitongsys/parquet-go to write Parquet format

- encoding transactions in typed EIP-2718 envelopes:

- currently using: https://github.com/HdrHistogram/hdrhistogram-go/

- possibly more versatile: https://github.com/montanaflynn/stats

- see also:

Install dependencies

go install mvdan.cc/gofumpt@latest

go install honnef.co/go/tools/cmd/staticcheck@latest

go install github.com/golangci/golangci-lint/cmd/golangci-lint@latest

go install github.com/daixiang0/gci@latest

Lint, test, format

make lint

make test

make fmt

- Discussion about compression and storage

- Forum post: https://collective.flashbots.net/t/mempool-dumpster-a-free-mempool-transaction-archive/2401

- Code: MIT

- Data: CC-0 public domain