pip install keract

You have just found an easy way to get the activations (outputs) and gradients for each layer of your Keras model (LSTM, conv nets, ...).

from keract import get_activations

get_activations(model, x)

Inputs are:

- `model` is a `keras.models.Model` object.
- `x` is a numpy array to feed to the model as input. In the case of multi-input, `x` is a list. We use the Keras convention (as used in `predict`, `fit`, ...).

The output is a dictionary containing the activations for each layer of model for the input x:

{

'conv2d_1/Relu:0': np.array(...),

'conv2d_2/Relu:0': np.array(...),

...,

'dense_2/Softmax:0': np.array(...)

}

Each key is the name of a layer's output (for example, `conv2d_1/Relu:0`) and each value is the corresponding output of that layer for the given input x.
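To make the structure of this result concrete, here is a minimal NumPy sketch of the idea: run a forward pass and record every layer's output under its name. It is not keract's implementation and does not use Keras. The two-layer network, the random weights, and the names `dense_1/Relu:0` and `dense_2/Softmax:0` are assumptions made up for illustration.

```python
import numpy as np


def forward_with_activations(x, weights):
    """Run a tiny dense network and record each layer's output by name."""
    activations = {}
    # First layer: affine transform followed by ReLU.
    hidden = np.maximum(x @ weights["w1"] + weights["b1"], 0.0)
    activations["dense_1/Relu:0"] = hidden
    # Second layer: affine transform followed by a numerically stable softmax.
    logits = hidden @ weights["w2"] + weights["b2"]
    exp = np.exp(logits - logits.max(axis=1, keepdims=True))
    activations["dense_2/Softmax:0"] = exp / exp.sum(axis=1, keepdims=True)
    return activations


# Hypothetical weights and inputs, only for illustration.
rng = np.random.default_rng(0)
weights = {
    "w1": rng.normal(size=(4, 8)), "b1": np.zeros(8),
    "w2": rng.normal(size=(8, 3)), "b2": np.zeros(3),
}
x = rng.normal(size=(5, 4))  # batch of 5 inputs with 4 features each

activations = forward_with_activations(x, weights)
for name, value in activations.items():
    print(name, value.shape)
```

Every value keeps the batch dimension first, so an entry can be indexed by sample to inspect what a single input produced at that layer.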

To get the gradients of the trainable weights, the inputs are:

- `model` is a `keras.models.Model` object.
- `x`: Input data (numpy array). Keras convention.
- `y`: Labels (numpy array). Keras convention.

from keract import get_gradients_of_trainable_weights

get_gradients_of_trainable_weights(model, x, y)

The output is a dictionary mapping each trainable weight to the values of its gradients (computed for the given x and y).
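As an illustration of what such a dictionary holds, the sketch below computes the gradients by hand for a single linear layer with a mean squared error loss. This is plain NumPy, not keract's code. The model, the loss, and the weight names `dense_1/kernel:0` and `dense_1/bias:0` are assumptions chosen for the example.

```python
import numpy as np


def gradients_of_weights(weights, x, y):
    """Return {weight name: dLoss/dWeight} for a linear model with MSE loss."""
    pred = x @ weights["dense_1/kernel:0"] + weights["dense_1/bias:0"]
    # Derivative of mean((pred - y) ** 2) with respect to pred.
    d_pred = 2.0 * (pred - y) / pred.size
    return {
        # Chain rule through pred = x @ kernel + bias.
        "dense_1/kernel:0": x.T @ d_pred,
        "dense_1/bias:0": d_pred.sum(axis=0),
    }


# Hypothetical weights, inputs and labels, only for illustration.
rng = np.random.default_rng(0)
weights = {
    "dense_1/kernel:0": rng.normal(size=(4, 2)),
    "dense_1/bias:0": np.zeros(2),
}
x = rng.normal(size=(5, 4))
y = rng.normal(size=(5, 2))

grads = gradients_of_weights(weights, x, y)
for name, value in grads.items():
    print(name, value.shape)
```

Each gradient has the same shape as the weight it belongs to, which is what makes a per-weight dictionary convenient to inspect.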

To get the gradients of the activations, the inputs are:

- `model` is a `keras.models.Model` object.
- `x`: Input data (numpy array). Keras convention.
- `y`: Labels (numpy array). Keras convention.

from keract import get_gradients_of_activations

get_gradients_of_activations(model, x, y)

The output is a dictionary mapping each layer to the values of the gradients of its output (computed for the given x and y).
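The difference from weight gradients is that the derivative is taken with respect to each layer's output rather than its parameters. A rough NumPy sketch, assuming a two-layer linear network with a mean squared error loss (the network, the loss, and the layer names `dense_1` and `dense_2` are hypothetical and unrelated to keract's internals):

```python
import numpy as np


def gradients_of_activations(w1, w2, x, y):
    """Return {layer name: dLoss/dActivation} for a two-layer linear network."""
    hidden = x @ w1       # output of the first layer
    output = hidden @ w2  # output of the second layer
    # Derivative of mean((output - y) ** 2) with respect to output.
    d_output = 2.0 * (output - y) / output.size
    # Chain rule: propagate the gradient back through the second layer.
    d_hidden = d_output @ w2.T
    return {"dense_1": d_hidden, "dense_2": d_output}


# Hypothetical weights, inputs and labels, only for illustration.
rng = np.random.default_rng(0)
w1 = rng.normal(size=(4, 6))
w2 = rng.normal(size=(6, 2))
x = rng.normal(size=(5, 4))
y = rng.normal(size=(5, 2))

activation_grads = gradients_of_activations(w1, w2, x, y)
for name, value in activation_grads.items():
    print(name, value.shape)
```

Each gradient has the same shape as the activation it refers to, including the batch dimension.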

Examples are provided for:

- `keras.models.Sequential` - mnist.py
- `keras.models.Model` - multi_inputs.py
- Recurrent networks - recurrent.py

In the case of MNIST with LeNet, we are able to fetch the activations for a batch of size 128:

- `conv2d_1/Relu:0`: (128, 26, 26, 32)
- `conv2d_2/Relu:0`: (128, 24, 24, 64)
- `max_pooling2d_1/MaxPool:0`: (128, 12, 12, 64)
- `dropout_1/cond/Merge:0`: (128, 12, 12, 64)
- `flatten_1/Reshape:0`: (128, 9216)
- `dense_1/Relu:0`: (128, 128)
- `dropout_2/cond/Merge:0`: (128, 128)
- `dense_2/Softmax:0`: (128, 10)

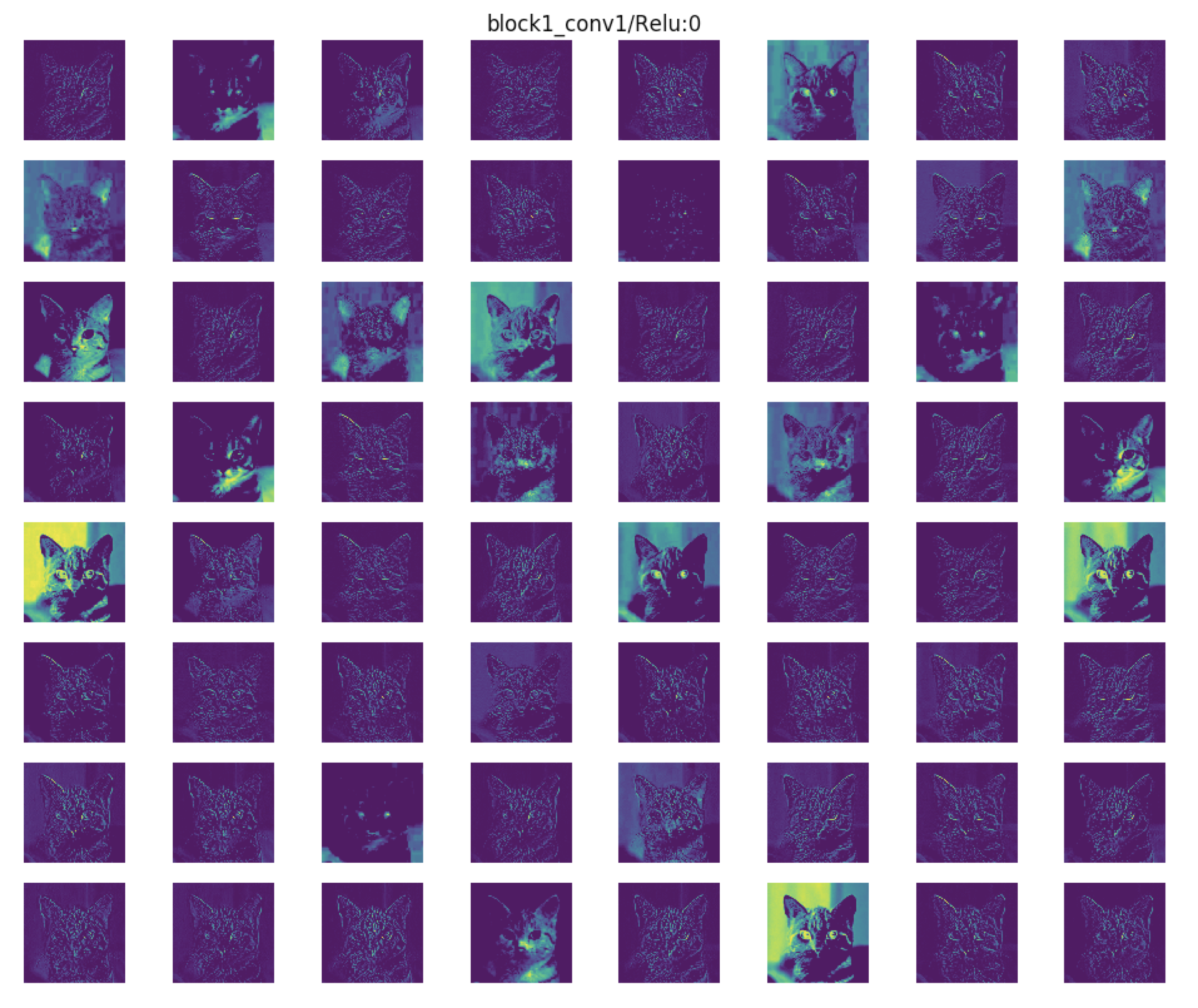

We can visualise the activations. Here's another example using VGG16:

cd examples

python vgg16.py

Outputs of the first convolutional layer of VGG16.

Also, we can visualise the heatmaps of the activations:

cd examples

python heat_map.py