This quickstart consists of one microservice:

jupyter: Runs a Jupyter notebook server with scipy (and other data analysis libraries) installed, for building, analysing and visualising models interactively.

Follow along below to get the setup working on your cluster.

- Ensure that you have the hasura CLI tool installed on your system.

$ hasura version

Once you have installed the hasura CLI tool, log in to your Hasura account:

$ # Login if you haven't already

$ hasura login

- You should also have git installed.

$ git --version

$ # Run the quickstart command to get the project

$ hasura quickstart hasura/kaggle-python-notebook

$ # Navigate into the Project

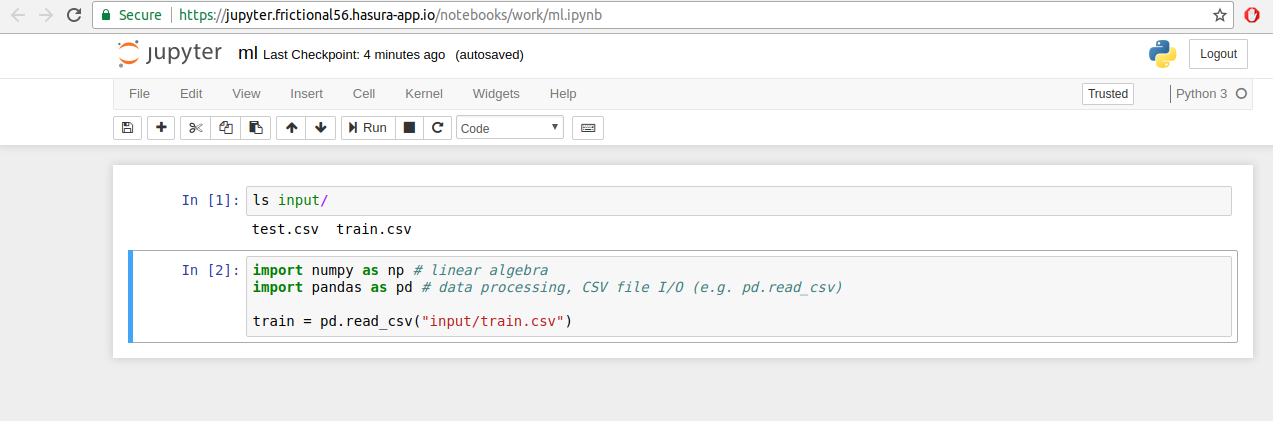

$ cd kaggle-python-notebookDownload any Kaggle dataset from Kaggle. Then, copy the dataset into the input folder in the jupyter microservice.

$ # Ensure that you are in the kaggle-python-notebook directory

$ cp ~/Downloads/train.csv microservices/jupyter/input/

$ cp ~/Downloads/test.csv microservices/jupyter/input/

If the dataset is downloaded as a zip archive, extract it first and then copy the files as above.
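If you prefer to script the extract-and-copy step, here is a minimal sketch using only the Python standard library. It assumes you run it from the kaggle-python-notebook directory and that the archive sits somewhere like `~/Downloads/dataset.zip` (a hypothetical name; use whatever file you downloaded). The helper name `copy_dataset` is illustrative, not part of the quickstart.

```python
import itertools  # noqa: F401  (not needed here; kept out of the logic below)
import shutil
import tempfile
import zipfile
from pathlib import Path


def copy_dataset(zip_path, input_dir):
    """Extract a downloaded Kaggle archive and copy its CSV files into input_dir.

    Returns the sorted list of CSV file names that were copied.
    """
    zip_path = Path(zip_path).expanduser()
    input_dir = Path(input_dir)
    input_dir.mkdir(parents=True, exist_ok=True)

    copied = []
    # Extract into a temporary directory so nothing is left behind next to the zip.
    with tempfile.TemporaryDirectory() as tmp:
        with zipfile.ZipFile(zip_path) as archive:
            archive.extractall(tmp)
        # Some archives keep files in sub-folders, so search recursively.
        for csv_file in Path(tmp).rglob("*.csv"):
            shutil.copy2(csv_file, input_dir / csv_file.name)
            copied.append(csv_file.name)
    return sorted(copied)
```

For example, `copy_dataset("~/Downloads/dataset.zip", "microservices/jupyter/input")` copies every CSV in the archive into the jupyter input folder. Non-CSV files are skipped, and files with the same name in different sub-folders would overwrite each other.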

$ # Ensure that you are in the kaggle-python-notebook directory

$ # Git add, commit & push to deploy to your cluster

$ git add .

$ git commit -m 'First commit'

$ git push hasura master

Once the above commands complete successfully, your cluster will have the jupyter service running. To get the service URLs:

$ # Run this in the kaggle-python-notebook directory

$ hasura microservice list

• Getting microservices...

• Custom microservices:

NAME STATUS INTERNAL-URL EXTERNAL-URL

jupyter Running jupyter.default http://jupyter.boomerang68.hasura-app.io

• Hasura microservices:

NAME STATUS INTERNAL-URL EXTERNAL-URL

auth Running auth.hasura http://auth.boomerang68.hasura-app.io

data Running data.hasura http://data.boomerang68.hasura-app.io

filestore Running filestore.hasura http://filestore.boomerang68.hasura-app.io

gateway Running gateway.hasura

le-agent Running le-agent.hasura

notify Running notify.hasura http://notify.boomerang68.hasura-app.io

platform-sync Running platform-sync.hasura

postgres Running postgres.hasura

session-redis Running session-redis.hasura

sshd Running sshd.hasura

You can access the services at the EXTERNAL-URL for the respective service.
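Because the listing is plain text, a small helper can pick out a service's EXTERNAL-URL, for instance in a setup script. This sketch assumes the whitespace-separated layout shown above (NAME, STATUS, INTERNAL-URL and an optional EXTERNAL-URL); `external_urls` is a hypothetical helper, not part of the hasura CLI, and the output format may change between CLI versions.

```python
def external_urls(listing):
    """Map microservice names to EXTERNAL-URLs parsed from `hasura microservice list` text.

    Assumes rows of the form: NAME STATUS INTERNAL-URL [EXTERNAL-URL].
    Services without an external URL are left out of the result.
    """
    urls = {}
    for line in listing.splitlines():
        fields = line.split()
        # Skip blank lines, section titles ("• Custom microservices:") and header rows.
        if len(fields) != 4 or fields[0] == "NAME":
            continue
        name, _status, _internal, external = fields
        if external.startswith(("http://", "https://")):
            urls[name] = external
    return urls
```

Fed the listing above, `external_urls(text)["jupyter"]` returns `http://jupyter.boomerang68.hasura-app.io`, while services such as gateway or postgres, which have no external URL, do not appear in the result.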

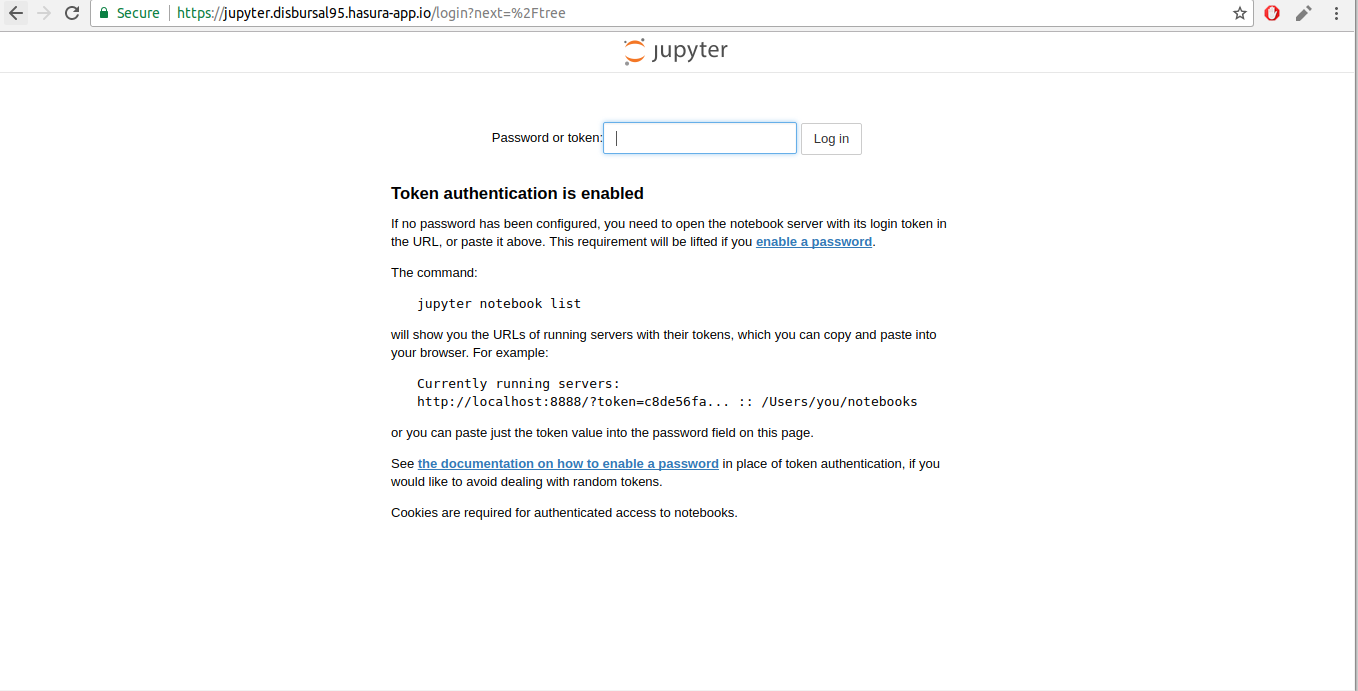

Head over to the EXTERNAL-URL of your jupyter service.

$ # Run this in the kaggle-python-notebook directory

$ hasura ms logs jupyter

Copy the authentication token from the logs (the string after token=) and enter it in the Jupyter login page shown above.

Executing the command: jupyter notebook

[I 07:14:46.914 NotebookApp] Writing notebook server cookie secret to /home/jovyan/.local/share/jupyter/runtime/notebook_cookie_secret

[W 07:14:47.379 NotebookApp] WARNING: The notebook server is listening on all IP addresses and not using encryption. This is not recommended.

[I 07:14:47.428 NotebookApp] JupyterLab alpha preview extension loaded from /opt/conda/lib/python3.6/site-packages/jupyterlab

[I 07:14:47.428 NotebookApp] JupyterLab application directory is /opt/conda/share/jupyter/lab

[I 07:14:47.434 NotebookApp] Serving notebooks from local directory: /home/jovyan

[I 07:14:47.434 NotebookApp] 0 active kernels

[I 07:14:47.434 NotebookApp] The Jupyter Notebook is running at:

[I 07:14:47.434 NotebookApp] http://[all ip addresses on your system]:8888/?token=cd596a9b5e90a83283e4c9d6b792b4a58cac38e06153fd12

[I 07:14:47.434 NotebookApp] Use Control-C to stop this server and shut down all kernels (twice to skip confirmation).

[C 07:14:47.435 NotebookApp]

Copy/paste this URL into your browser when you connect for the first time,

to login with a token:

http://localhost:8888/?token=cd596a9b5e90a83283e4c9d6b792b4a58cac38e06153fd12
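Instead of copying the token by hand, you can pull it out of the log text. This is a minimal sketch, assuming the logs contain a URL of the form `...?token=<hex string>` as in the lines above; `extract_token` and `login_url` are hypothetical helpers. `login_url` appends the token to the jupyter EXTERNAL-URL in the same form Jupyter prints for localhost; whether that logs you in directly through the cluster gateway is an assumption, so pasting the token into the login page remains the documented route.

```python
import re

# Jupyter prints its login URL as ".../?token=<hex string>".
TOKEN_PATTERN = re.compile(r"[?&]token=([0-9a-f]+)")


def extract_token(log_text):
    """Return the first Jupyter login token found in the log text, or None."""
    match = TOKEN_PATTERN.search(log_text)
    return match.group(1) if match else None


def login_url(external_url, token):
    """Build a login URL in the same form Jupyter prints: base URL + /?token=..."""
    return f"{external_url.rstrip('/')}/?token={token}"
```

With the logs above, `extract_token` returns `cd596a9b5e90a83283e4c9d6b792b4a58cac38e06153fd12`.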

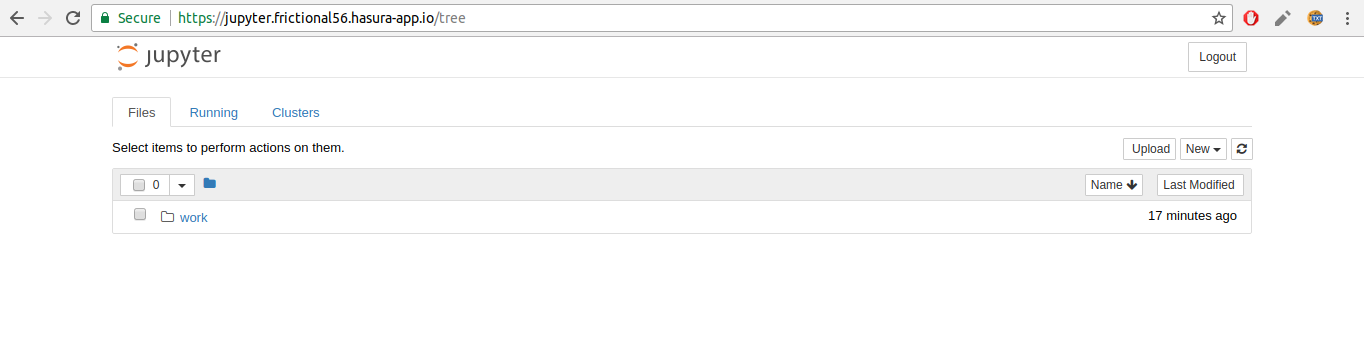

Once you have authenticated with Jupyter, you should see a work directory. This is where your data, including the input dataset, is persisted. Create your notebooks in this directory so that they and your data remain available even if the service restarts.
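A quick sanity check in the first notebook cell can confirm that the dataset actually reached the container. The sketch below assumes the copied files appear under `work/input` inside the notebook server's directory (`/home/jovyan` in the logs above); adjust `INPUT_DIR` if your layout differs. `preview_inputs` is a hypothetical helper that uses only the standard library.

```python
import csv
import itertools
from pathlib import Path

# Assumed location of the dataset inside the container; adjust if needed.
INPUT_DIR = Path("/home/jovyan/work/input")


def preview_inputs(input_dir=INPUT_DIR, n_rows=3):
    """Return {file name: (header, first n_rows data rows)} for each CSV in input_dir."""
    previews = {}
    for path in sorted(Path(input_dir).glob("*.csv")):
        with path.open(newline="") as f:
            reader = csv.reader(f)
            header = next(reader, [])
            # Read only the first few rows so large Kaggle files stay cheap to inspect.
            rows = list(itertools.islice(reader, n_rows))
        previews[path.name] = (header, rows)
    return previews
```

An empty result usually means the files are in a different folder than assumed. Once the preview looks right, and if pandas is among the installed libraries, `pandas.read_csv` can load each file in full for modelling.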

And that's it! Start building your models.