CapsNet-Pytorch

A PyTorch implementation of the paper Dynamic Routing Between Capsules (Sabour, Frosst and Hinton, 2017).

Some CapsNet implementations available online have subtle problems. These bugs are hard to notice because MNIST is simple enough that even a flawed implementation can reach satisfying accuracy.

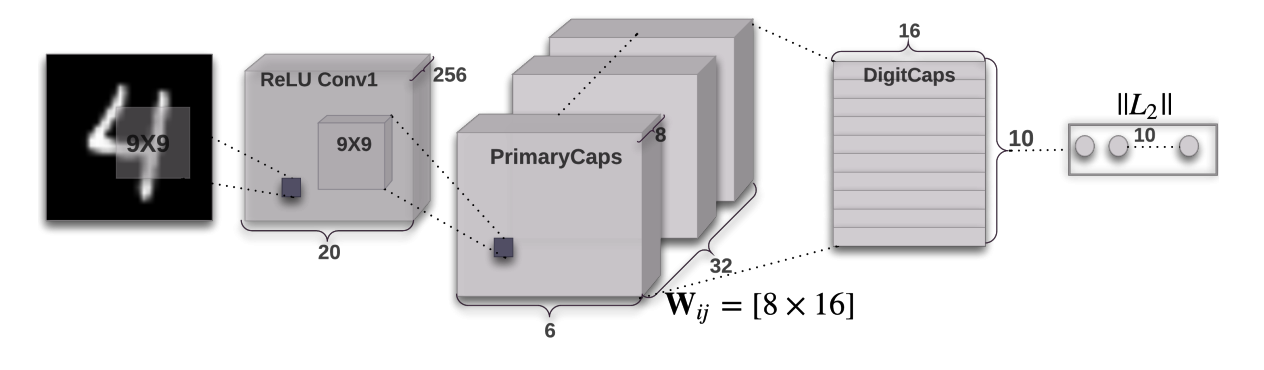

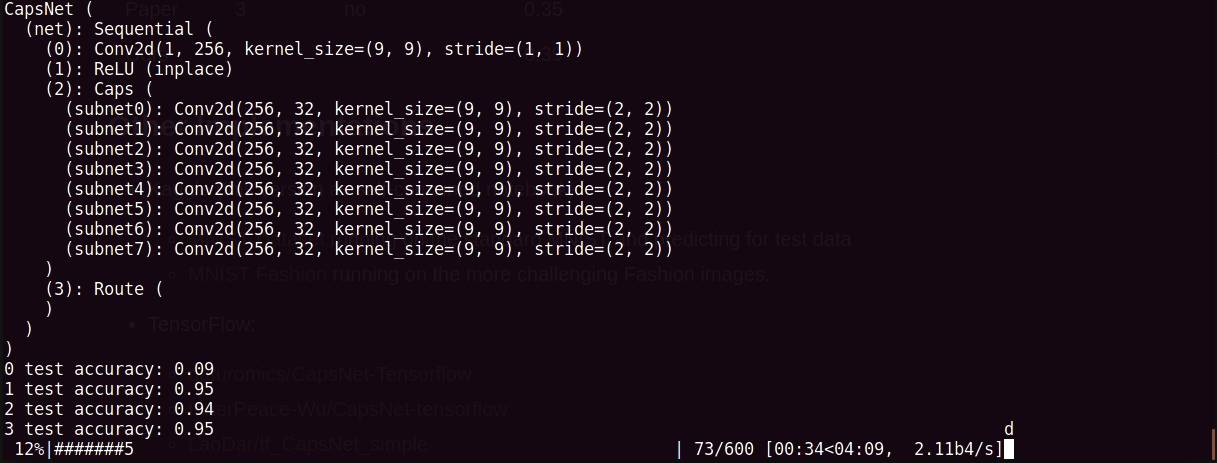

Network

The pipeline is: Input > Conv1 > Caps (with a CNN sub-network inside each capsule layer) > Route > Loss
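The sketch below makes the tensor sizes along this pipeline concrete. It assumes the MNIST hyperparameters from the paper: Conv1 uses 9x9 kernels with stride 1, and the primary capsules use 9x9 kernels with stride 2, 32 channels and 8-D capsules, with no padding. This repository's settings may differ. `conv_out` is a hypothetical helper.

```python
def conv_out(size: int, kernel: int, stride: int) -> int:
    """Spatial output size of an unpadded ('valid') 2-D convolution."""
    return (size - kernel) // stride + 1

# Assumed hyperparameters (the paper's MNIST architecture, not read from this repo).
INPUT = 28              # MNIST images are 28x28
CONV1 = (9, 1)          # Conv1: 9x9 kernel, stride 1 (256 channels in the paper)
PRIMARY = (9, 2)        # primary capsules: 9x9 kernel, stride 2
PRIMARY_CHANNELS = 32   # 32 capsule channels
CAPS_DIM = 8            # each primary capsule is an 8-D vector

conv1_size = conv_out(INPUT, *CONV1)        # 28 -> 20
caps_size = conv_out(conv1_size, *PRIMARY)  # 20 -> 6
num_capsules = caps_size * caps_size * PRIMARY_CHANNELS

print(conv1_size, caps_size, num_capsules)  # → 20 6 1152
```

Under these assumptions, the routing stage receives 1152 capsules of dimension 8 per image.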

Screenshots

Highlights

- Highly abstracted `CapsLayer`: by rewriting the method `create_cell_fn`, you can implement your own sub-network inside `CapsLayer`:

```python
import torch.nn as nn

def create_cell_fn(self):
    """
    Create the sub-network inside a capsule (a method of CapsLayer).

    :return: the sub-network module (here a single convolution)
    """
    conv1 = nn.Conv2d(self.conv1_kernel_num, self.caps1_conv_kernel_num,
                      kernel_size=self.caps1_conv_kernel_size,
                      stride=self.caps1_conv1_stride)
    # Optionally add a nonlinearity after the convolution:
    # relu = nn.ReLU(inplace=True)
    # net = nn.Sequential(conv1, relu)
    return conv1
```

- Highly abstracted routing layer through the class `Route`: you can combine `CapsLayer` and `RouteLayer` to construct any type of network.
- No DigitCaps layer: we simply use the output of the `Route` layer.
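To check the routing step against the paper, the sketch below implements routing by agreement (Procedure 1 of the paper) in plain Python for a single example. It has no batching and no learned weights. It is not the code of the `Route` class. `squash`, `softmax` and `dynamic_routing` are hypothetical names, and `u_hat` is assumed to already hold the prediction vectors u_hat[i][j] from input capsule i to output capsule j.

```python
import math

def squash(s):
    """v = (|s|^2 / (1 + |s|^2)) * s / |s|; maps any vector to length < 1."""
    sq_norm = sum(x * x for x in s)
    if sq_norm == 0.0:
        return [0.0 for _ in s]
    scale = sq_norm / (1.0 + sq_norm) / math.sqrt(sq_norm)
    return [scale * x for x in s]

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dynamic_routing(u_hat, num_iters=3):
    """Return the output capsule vectors v_j after num_iters routing iterations."""
    n_in, n_out, dim = len(u_hat), len(u_hat[0]), len(u_hat[0][0])
    b = [[0.0] * n_out for _ in range(n_in)]  # routing logits b_ij start at zero
    v = []
    for it in range(num_iters):
        c = [softmax(b_i) for b_i in b]  # coupling coefficients, softmax over j
        v = []
        for j in range(n_out):
            # s_j = sum_i c_ij * u_hat_{j|i}, then squash
            s_j = [sum(c[i][j] * u_hat[i][j][d] for i in range(n_in))
                   for d in range(dim)]
            v.append(squash(s_j))
        if it < num_iters - 1:  # the last logit update would be unused
            for i in range(n_in):
                for j in range(n_out):
                    # agreement: b_ij += u_hat_{j|i} . v_j
                    b[i][j] += sum(a * w for a, w in zip(u_hat[i][j], v[j]))
    return v

# Two input capsules agree on output 0 and disagree on output 1.
u_hat = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[1.0, 0.0], [0.0, -1.0]],
]
v = dynamic_routing(u_hat, num_iters=3)
lengths = [math.sqrt(sum(x * x for x in v_j)) for v_j in v]
print(lengths)
```

In this toy case, routing shifts coupling toward the output that the inputs agree on, so output 0 ends up with a long vector and output 1 with a zero-length one.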

Status

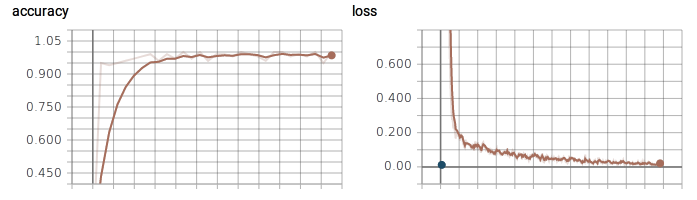

- We currently train the model for only 30 epochs, so accuracy may improve further with longer training.

- We do not use the reconstruction loss yet; it will be added later.

- The critical parts of the code are commented with the tensor dimension changes at each step, so you can follow the comments to understand the routing mechanism.
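Because the reconstruction loss is not used yet, training relies on the classification loss alone. Assuming this follows the paper's margin loss, L_k = T_k max(0, m+ - ||v_k||)^2 + λ (1 - T_k) max(0, ||v_k|| - m-)^2 with m+ = 0.9, m- = 0.1 and λ = 0.5, a per-example version looks like the sketch below. `margin_loss` is a hypothetical helper, not the repository's loss function.

```python
def margin_loss(lengths, target, m_plus=0.9, m_minus=0.1, lam=0.5):
    """Margin loss for one example (assumed to match the paper's definition).

    lengths: output capsule lengths ||v_k||, one per class.
    target:  index of the true class.
    """
    loss = 0.0
    for k, length in enumerate(lengths):
        if k == target:
            # true class: penalize if its capsule is shorter than m_plus
            loss += max(0.0, m_plus - length) ** 2
        else:
            # other classes: down-weighted penalty if longer than m_minus
            loss += lam * max(0.0, length - m_minus) ** 2
    return loss

print(margin_loss([0.95, 0.05], target=0))  # confident and correct: 0.0
print(margin_loss([0.5, 0.5], target=0))    # about 0.16 + 0.5 * 0.16 = 0.24
```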

TODO

- add reconstruction loss

- test on more convincing datasets, such as ImageNet

About me

I'm a Research Assistant at the National University of Singapore. Before joining NUS, I was a first-year PhD candidate at Zhejiang University, which I later left. Contact me by email: dcslong@nus.edu.sg or WeChat: dragen1860

Usage

Step 1. Install Conda, CUDA, cuDNN and PyTorch

```
conda install pytorch torchvision cuda80 -c soumith
```

Step 2. Clone the repository locally

```
git clone https://github.com/dragen1860/CapsNet-Pytorch.git
cd CapsNet-Pytorch
```

Step 3. Train CapsNet on MNIST

- Set the variable `glo_batch_size = 125` to a size that fits your GPU memory.

- Run:

```
$ python main.py
```

- Start TensorBoard:

```
$ tensorboard --logdir runs
```

Step 4. Validate CapsNet on MNIST

Alternatively, you can comment out the training code and evaluate the pretrained model stored in the mdl file.

Results

| Model | Routing iterations | Reconstruction | MNIST test error (%) |
|---|---|---|---|
| Baseline | - | - | 0.39 |
| Paper | 3 | no | 0.35 |
| Ours | 3 | no | 0.34 |

Training takes about 150 s per epoch on a single GTX 970 (4 GB) card.
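As a rough sanity check on this timing, the arithmetic below converts it into per-iteration and total training time. It assumes that each epoch covers all 60,000 MNIST training images and that the reported 150 s was measured with the default `glo_batch_size = 125`. Neither assumption is stated explicitly above.

```python
MNIST_TRAIN_IMAGES = 60_000  # size of the standard MNIST training set
batch_size = 125             # assumed: the default glo_batch_size
seconds_per_epoch = 150      # timing reported above (single GTX 970)
epochs = 30                  # training length reported under Status

iters_per_epoch = MNIST_TRAIN_IMAGES // batch_size      # 480 iterations
seconds_per_iter = seconds_per_epoch / iters_per_epoch  # about 0.31 s each
total_minutes = seconds_per_epoch * epochs / 60         # whole 30-epoch run

print(iters_per_epoch, seconds_per_iter, total_minutes)  # → 480 0.3125 75.0
```

Under these assumptions, a full 30-epoch run takes a little over an hour on that card.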

Other Implementations

- Keras:
  - XifengGuo/Capsnet-Keras: well written.
- TensorFlow:
  - naturomics/CapsNet-Tensorflow: the first implementation online.
  - InnerPeace-Wu/CapsNet-tensorflow
  - LaoDar/tf_CapsNet_simple
- MXNet:
- Lasagne (Theano):
- Chainer: