Link to repo: tnwei/vqgan-clip-app.

VQGAN-CLIP has been in vogue for generating art using deep learning. Searching the r/deepdream subreddit for VQGAN-CLIP yields quite a number of results. Basically, VQGAN can generate pretty high-fidelity images, while CLIP can judge how well a caption matches an image. Combined, VQGAN-CLIP can take prompts from human input and iterate to generate images that fit the prompts.
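To make the "iterate until the image fits the prompt" idea concrete, here is a toy sketch of that loop. It is not the real method: a fixed random linear map stands in for VQGAN, a random vector stands in for the prompt embedding, and plain cosine similarity stands in for CLIP scoring. All names (`toy_generator`, `optimise_latent`) are made up for illustration. Only the shape of the loop carries over: generate, score against the prompt, nudge the latent, repeat.

```python
import math
import random


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def toy_generator(latent, weights):
    """Stand-in for VQGAN: a fixed linear map from latent to 'image features'."""
    return [sum(w * z for w, z in zip(row, latent)) for row in weights]


def optimise_latent(prompt_embedding, weights, latent, steps=200, lr=0.05, eps=1e-4):
    """Gradient ascent on the similarity score, using finite differences."""
    history = []
    for _ in range(steps):
        score = cosine_similarity(toy_generator(latent, weights), prompt_embedding)
        history.append(score)
        grad = []
        for i in range(len(latent)):
            bumped = list(latent)
            bumped[i] += eps
            bumped_score = cosine_similarity(toy_generator(bumped, weights), prompt_embedding)
            grad.append((bumped_score - score) / eps)
        # Move the latent in the direction that raises the score.
        latent = [z + lr * g for z, g in zip(latent, grad)]
    history.append(cosine_similarity(toy_generator(latent, weights), prompt_embedding))
    return latent, history


rng = random.Random(0)
weights = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(6)]  # toy "generator"
prompt_embedding = [rng.gauss(0, 1) for _ in range(6)]             # toy "prompt"
start = [rng.gauss(0, 1) for _ in range(4)]                        # initial latent
_, history = optimise_latent(prompt_embedding, weights, start)
print(f"similarity: {history[0]:.3f} -> {history[-1]:.3f}")
```

In the real setup the generator and the scorer are large neural networks and the gradients come from backpropagation, which is where the GPU memory requirements come from.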

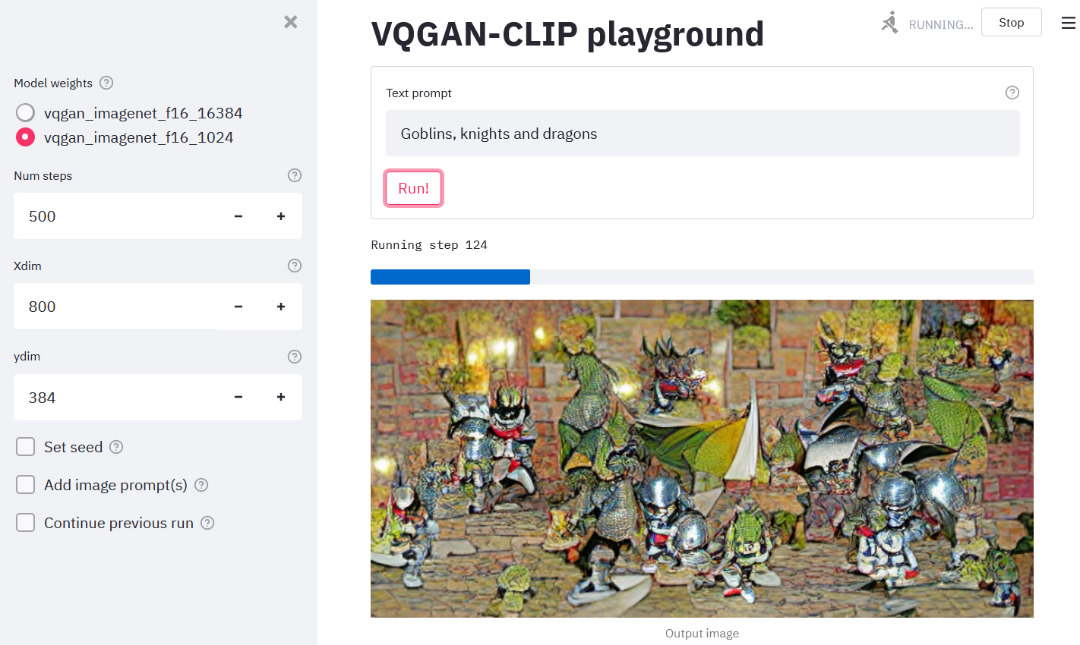

Thanks to the generosity of creators sharing notebooks on Google Colab, the VQGAN-CLIP technique has seen widespread circulation. However, for regular usage across multiple sessions, I prefer a local setup that can be started up rapidly. Thus, this simple Streamlit app for generating VQGAN-CLIP images on a local environment. Screenshot of the UI as below:

Be advised that you need a beefy GPU with lots of VRAM to generate images large enough to be interesting (hello, Quadro owners!). For reference, an RTX 2060 can barely manage a 300x300 image. Otherwise, you are best served using the notebooks on Colab.

The reference is this Colab notebook originally by Katherine Crowson. The notebook can also be found in this repo hosted by EleutherAI.

In mid-2021, OpenAI released Diffusion Models Beat GANs on Image Synthesis, with corresponding source code and model checkpoints released on GitHub. The cadre of people that brought us VQGAN-CLIP worked their magic, and shared CLIP guided diffusion notebooks for public use. CLIP guided diffusion uses more GPU VRAM, runs slower, and has fixed output sizes depending on the trained model checkpoints, but is capable of producing more breathtaking images.

Here are a few examples using the prompt "Flowery fragrance intertwined with the freshness of the ocean breeze by Greg Rutkowski", run on the 512x512 HQ Uncond model:

The implementation of CLIP guided diffusion in this repo is based on notebooks from the same EleutherAI/vqgan-clip repo.

- Install the required Python libraries. Using `conda`, run `conda env create -f environment.yml`.
- Git clone this repo. After that, `cd` into the repo and run:
- Download the pretrained weights and config files using the provided links in the files listed below. Note that all of the links are commented out by default. Downloading them one by one is recommended, as some of the downloads can take a while.
    - For VQGAN-CLIP: `download-weights.sh`. You'll want to at least have both the ImageNet weights, which are used in the reference notebook.
    - For CLIP guided diffusion: `download-diffusion-weights.sh`.

- VQGAN-CLIP: `streamlit run app.py`, launches web app on `localhost:8501` if available
- CLIP guided diffusion: `streamlit run diffusion_app.py`, launches web app on `localhost:8501` if available
- Image gallery: `python gallery.py`, launches a gallery viewer on `localhost:5000`. More on this below.

In the web app, select settings on the sidebar, key in the text prompt, and click run to generate images using VQGAN-CLIP. When done, the web app will display the output image as well as a video compilation showing progression of image generation. You can save them directly through the browser's right-click menu.

A one-time download of additional pre-trained weights will occur before generating the first image. Might take a few minutes depending on your internet connection.

If you have multiple GPUs, specify the GPU you want to use by adding `-- --gpu X`. An extra double dash is required to bypass Streamlit argument parsing. Example commands:

    # Use 2nd GPU
    streamlit run app.py -- --gpu 1

    # Use 3rd GPU
    streamlit run diffusion_app.py -- --gpu 2

See: tips and tricks
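On the double dash used in the GPU commands: Streamlit consumes its own options first, and whatever follows `--` is handed to the script as ordinary command-line arguments. A minimal sketch of how a script could then read such a flag with `argparse` is shown here. The function names `parse_gpu_arg` and `device_string` are hypothetical and not taken from this repo, so the actual argument handling in `app.py` may well differ.

```python
import argparse


def parse_gpu_arg(argv):
    """Parse the script-level arguments that follow the `--` separator."""
    parser = argparse.ArgumentParser(description="Hypothetical script-level options")
    # Index of the GPU to use; None means "no explicit choice".
    parser.add_argument("--gpu", type=int, default=None,
                        help="GPU index, e.g. 1 for the 2nd GPU")
    return parser.parse_args(argv)


def device_string(gpu_index):
    """Map a GPU index to a PyTorch-style device name."""
    return "cuda" if gpu_index is None else f"cuda:{gpu_index}"


# Example: the arguments after `--` in `streamlit run app.py -- --gpu 1`
args = parse_gpu_arg(["--gpu", "1"])
print(device_string(args.gpu))  # cuda:1
```

GPU indices are zero-based, which is why `--gpu 1` selects the second GPU.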

Each run's metadata and output is saved to the output/ directory, organized into subfolders named using the timestamp when a run is launched, as well as a unique run ID. Example output dir:

$ tree output

├── 20210920T232927-vTf6Aot6

│ ├── anim.mp4

│ ├── details.json

│ └── output.PNG

└── 20210920T232935-9TJ9YusD

├── anim.mp4

├── details.json

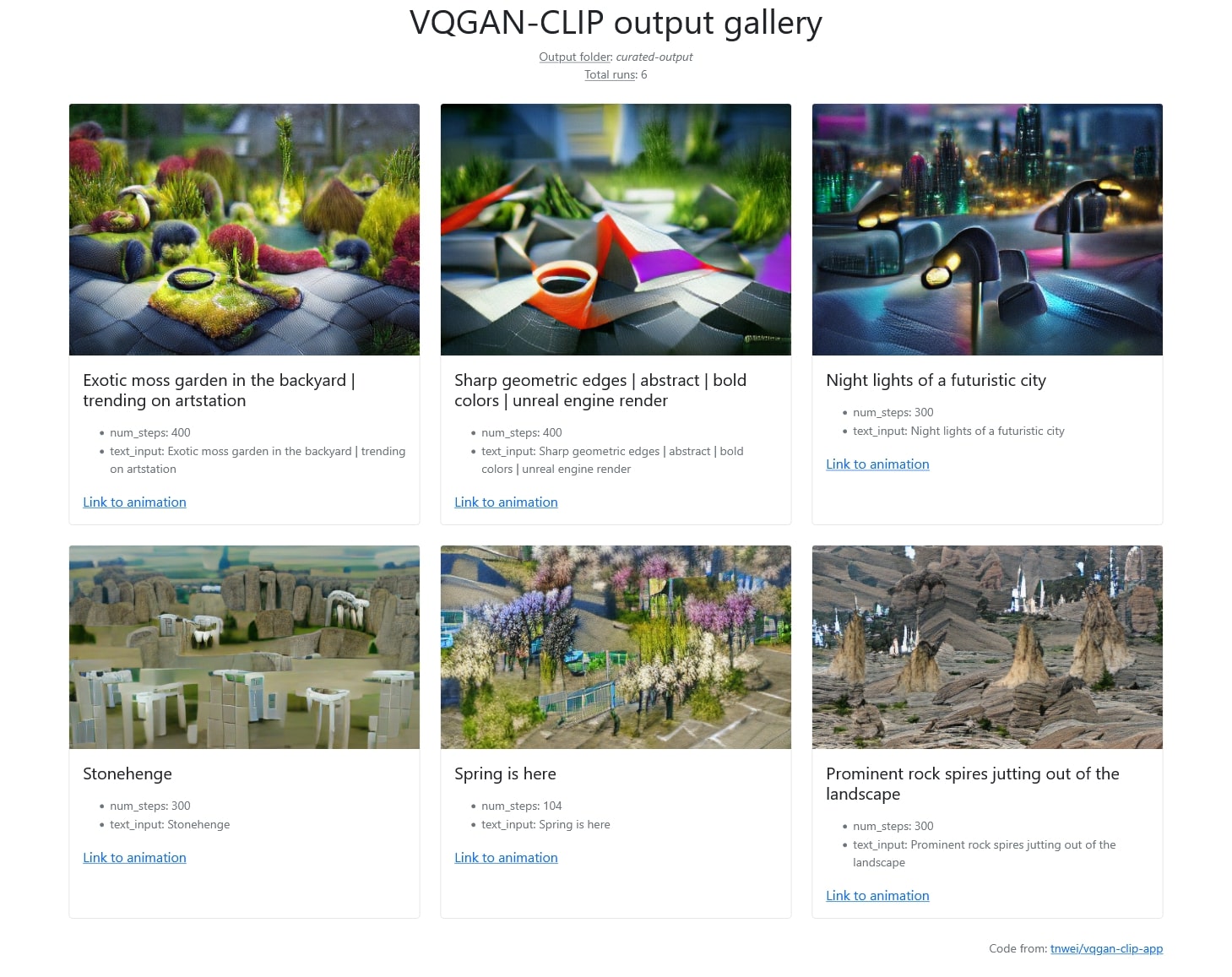

└── output.PNGThe gallery viewer reads from output/ and visualizes previous runs together with saved metadata.

If the details are too much, call `python gallery.py --kiosk` instead to only show the images and their prompts.
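Since each run folder is named with a timestamp and a run ID, previous runs can also be inspected from your own scripts. The sketch below assumes the folder naming shown in the example output directory (`<timestamp>-<run ID>`, with the timestamp formatted like `20210920T232927`) and makes no assumption about the keys inside `details.json`. The helper names `parse_run_dir_name` and `list_runs` are hypothetical and are not part of `gallery.py`.

```python
import json
from datetime import datetime
from pathlib import Path


def parse_run_dir_name(name):
    """Split '<timestamp>-<run_id>' into a datetime and the run ID string."""
    timestamp, run_id = name.split("-", 1)
    return datetime.strptime(timestamp, "%Y%m%dT%H%M%S"), run_id


def list_runs(output_dir):
    """Return one dict per run folder, newest first."""
    runs = []
    for run_dir in Path(output_dir).iterdir():
        if not run_dir.is_dir():
            continue
        try:
            started_at, run_id = parse_run_dir_name(run_dir.name)
        except ValueError:
            # Skip folders that do not follow the expected naming scheme.
            continue
        details_path = run_dir / "details.json"
        # Load the saved metadata if present; its structure is left opaque here.
        details = json.loads(details_path.read_text()) if details_path.exists() else {}
        runs.append({"run_id": run_id, "started_at": started_at, "details": details})
    return sorted(runs, key=lambda run: run["started_at"], reverse=True)
```

Calling `list_runs("output")` from the repo root would then give a list that can be filtered or sorted further, for example to find all runs from a given day.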