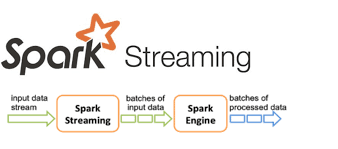

This is a Spark streaming program that reads a text file, sends its content through a socket streaming session, and aggregates the count of each word.

The text file is an extract of the definition of data engineering given by Joe Reis and Matt Housley in their book "Fundamentals of Data Engineering", a good read 👍 👍.

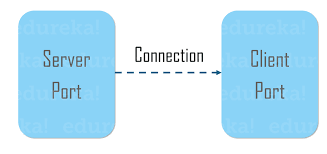

The goal is to demonstrate simple Spark socket streaming with aggregation using PySpark. The socket is a TCP session between a local server endpoint (127.0.0.1:5011) and a remote client endpoint (127.0.0.1:<spark defines the port>).
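As a rough illustration of the server side of that TCP session, here is a minimal sketch built on Python's `socket` module. The function names `open_server` and `send_lines` are hypothetical and not taken from `server_socket.py`; only the address 127.0.0.1:5011 comes from this README. Setting `SO_REUSEADDR` is an added assumption that makes restarts less likely to hit an "address already in use" error.

```python
import socket

HOST = "127.0.0.1"  # local server address from this README
PORT = 5011         # local server port from this README


def open_server(host=HOST, port=PORT):
    """Create a listening TCP socket (hypothetical helper)."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # Assumption: allow quick rebinding of the port after a restart.
    server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    server.bind((host, port))
    server.listen(1)
    return server


def send_lines(server, lines):
    """Wait for one client (e.g. Spark) and send each line, newline-terminated."""
    conn, _addr = server.accept()
    with conn:
        for line in lines:
            # Spark's socket source reads newline-delimited UTF-8 text.
            conn.sendall((line + "\n").encode("utf-8"))
```

A typical use would be `server = open_server()` followed by `send_lines(server, cleaned_lines)` and `server.close()`, with the Spark client started while the server is waiting in `accept()`.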

- Used Python to read the text file and refine its lines by removing commas and periods.

- Established a TCP socket connection with Python's built-in socket module.

- Created a Spark session, a Spark readStream object and a socket output Spark writeStream object.

- Performed a word count aggregation on the streaming data.
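The steps above can be sketched roughly as follows. This is not the code in `pyspark_socket_streaming.py`: the names `clean_line`, `build_word_counts` and `run` are hypothetical, and the console sink with `complete` output mode is an assumption about how the counts are displayed. The PySpark imports sit inside the functions so that the cleaning helper can be used without Spark installed.

```python
def clean_line(line):
    """Remove commas and periods from a line of text (hypothetical helper)."""
    return line.replace(",", "").replace(".", "").strip()


def build_word_counts(spark, host="127.0.0.1", port=5011):
    """Return a streaming DataFrame with one row per word and its running count."""
    from pyspark.sql.functions import col, explode, split

    # The socket source yields a single string column named "value".
    lines = (
        spark.readStream.format("socket")
        .option("host", host)
        .option("port", port)
        .load()
    )
    # Split each line on spaces and emit one row per word.
    words = lines.select(explode(split(col("value"), " ")).alias("word"))
    # Drop empty tokens produced by repeated spaces, then count per word.
    return words.where(col("word") != "").groupBy("word").count()


def run(host="127.0.0.1", port=5011):
    """Start the streaming query and block until it is stopped."""
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.appName("SocketWordCount").getOrCreate()
    counts = build_word_counts(spark, host, port)
    # Assumption: print the full, updated count table to the console.
    query = counts.writeStream.outputMode("complete").format("console").start()
    query.awaitTermination()
```

Calling `run()` only makes sense once the local server is already listening on 127.0.0.1:5011, since the socket source connects as soon as the query starts.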

- Inside the cloned repo, create your virtual env, and run `pip install -r dependencies.txt` in the activated virtual env to set up the required libraries and packages.

- Activate the virtual env.

- It is a good idea to split the terminal: run `python server_socket.py` (the local server) in one pane, then `python pyspark_socket_streaming.py` (the remote client) in the other.

- If you need to end `server_socket.py` while it is running, use `CTRL+C`, not `CTRL+Z`, as the latter only suspends the program and does not free the socket port in use.

- If you get a "socket already in use" error, run `lsof -i:5011` in your terminal to see the process holding the port. Use `kill -9 <PID_NUMBER>` to end that process and free the port.
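If you would rather check the port from Python before starting the server, a minimal sketch could look like this. The helper name `port_in_use` is hypothetical; it simply tries to connect to the address and treats a successful connection as "something is already listening". It does not tell you which process holds the port, so `lsof` is still needed for that.

```python
import socket


def port_in_use(host="127.0.0.1", port=5011, timeout=1.0):
    """Return True if a TCP listener already accepts connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        probe.settimeout(timeout)
        # connect_ex returns 0 on success instead of raising an exception.
        return probe.connect_ex((host, port)) == 0
```

For example, `port_in_use()` returning `True` before you launch `server_socket.py` suggests an earlier run (possibly one suspended with `CTRL+Z`) is still holding port 5011.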

- We can include scheduling and orchestration with Apache Airflow.

If you run into any issues, reach me at this email. More is definitely coming, so do come back and check it out.

Now go get your cup of coffee/tea and enjoy a good code-read and criticism 👍 👍.