Preprint: arxiv.org/abs/2006.06666

Model Zoo, Usage Instructions and API docs: kdexd.github.io/virtex

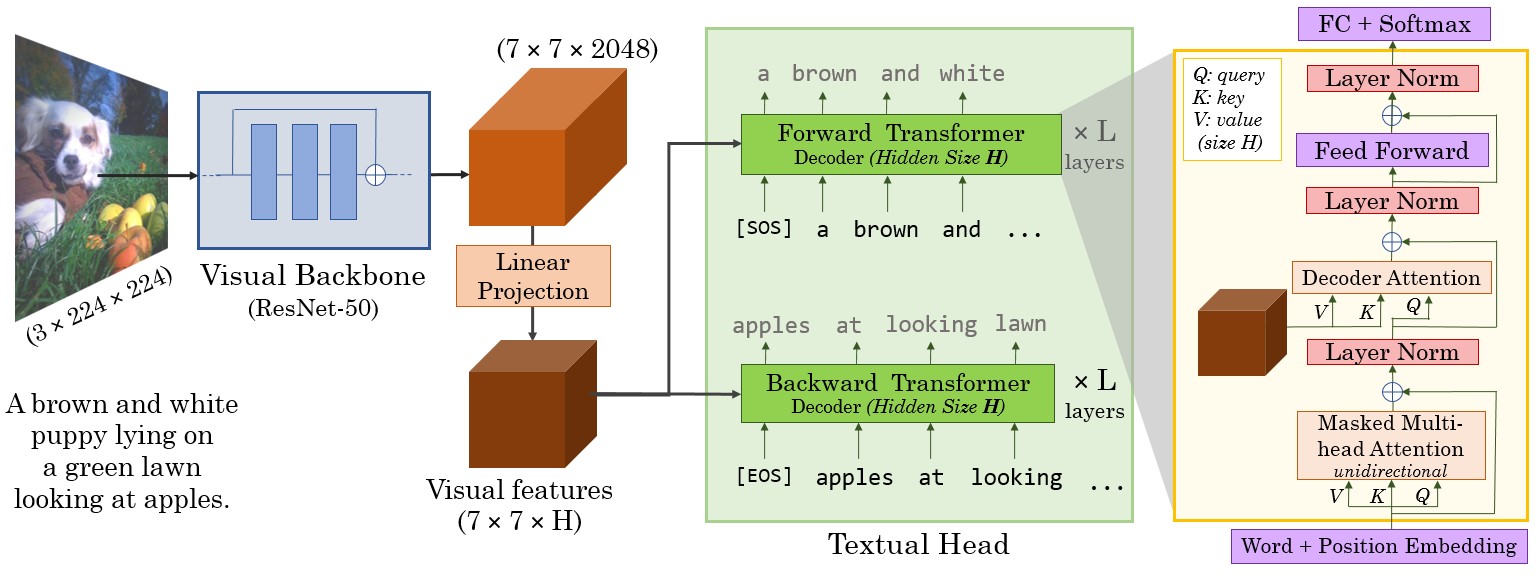

VirTex is a pretraining approach that uses semantically dense captions to learn visual representations. We train a CNN and Transformers from scratch on COCO Captions, then transfer the CNN to downstream vision tasks including image classification, object detection, and instance segmentation. VirTex matches or outperforms models that use ImageNet for pretraining, whether supervised or unsupervised, despite using up to 10x fewer images.

Get the pretrained ResNet-50 visual backbone from our best-performing VirTex model in one line, without any installation!

```python
import torch

# That's it, this one line only requires PyTorch.
model = torch.hub.load("kdexd/virtex", "resnet50", pretrained=True)
```

- How to set up this codebase?

- VirTex Model Zoo

- How to train your VirTex model?

- How to evaluate on downstream tasks?

These can also be accessed from kdexd.github.io/virtex.
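
As a rough illustration of what can be done with the backbone once it is loaded through `torch.hub`, the sketch below runs a single forward pass in inference mode. The helper names `load_virtex_resnet50` and `run_backbone` are hypothetical and not part of this codebase, and the sketch assumes the hub entry point returns a standard `torch.nn.Module` that accepts an image batch of shape `(N, 3, H, W)`; check the documentation for the exact output and expected preprocessing.

```python
import torch
from torch import nn


def load_virtex_resnet50() -> nn.Module:
    """Hypothetical wrapper around the hub call from this README.

    Needs network access the first time, since torch.hub fetches the
    repository and the pretrained weights.
    """
    return torch.hub.load("kdexd/virtex", "resnet50", pretrained=True)


def run_backbone(backbone: nn.Module, images: torch.Tensor) -> torch.Tensor:
    """Run one forward pass without tracking gradients.

    Assumes `images` is a float tensor of shape (N, 3, H, W) that has
    already been preprocessed the way the backbone expects.
    """
    backbone.eval()  # use running batch-norm statistics, disable dropout
    with torch.no_grad():  # no autograd graph is needed for inference
        return backbone(images)
```

For example, `run_backbone(load_virtex_resnet50(), batch)` would return whatever the loaded model produces for `batch`; the shape of that output depends on the model's final layers, so it is left unspecified here.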

If you find this code useful, please consider citing:

```text
@article{desai2020virtex,
    title={VirTex: Learning Visual Representations from Textual Annotations},
    author={Karan Desai and Justin Johnson},
    journal={arXiv preprint arXiv:2006.06666},
    year={2020}
}
```