cognee - memory layer for AI apps and Agents

Demo · Learn more · Join Discord

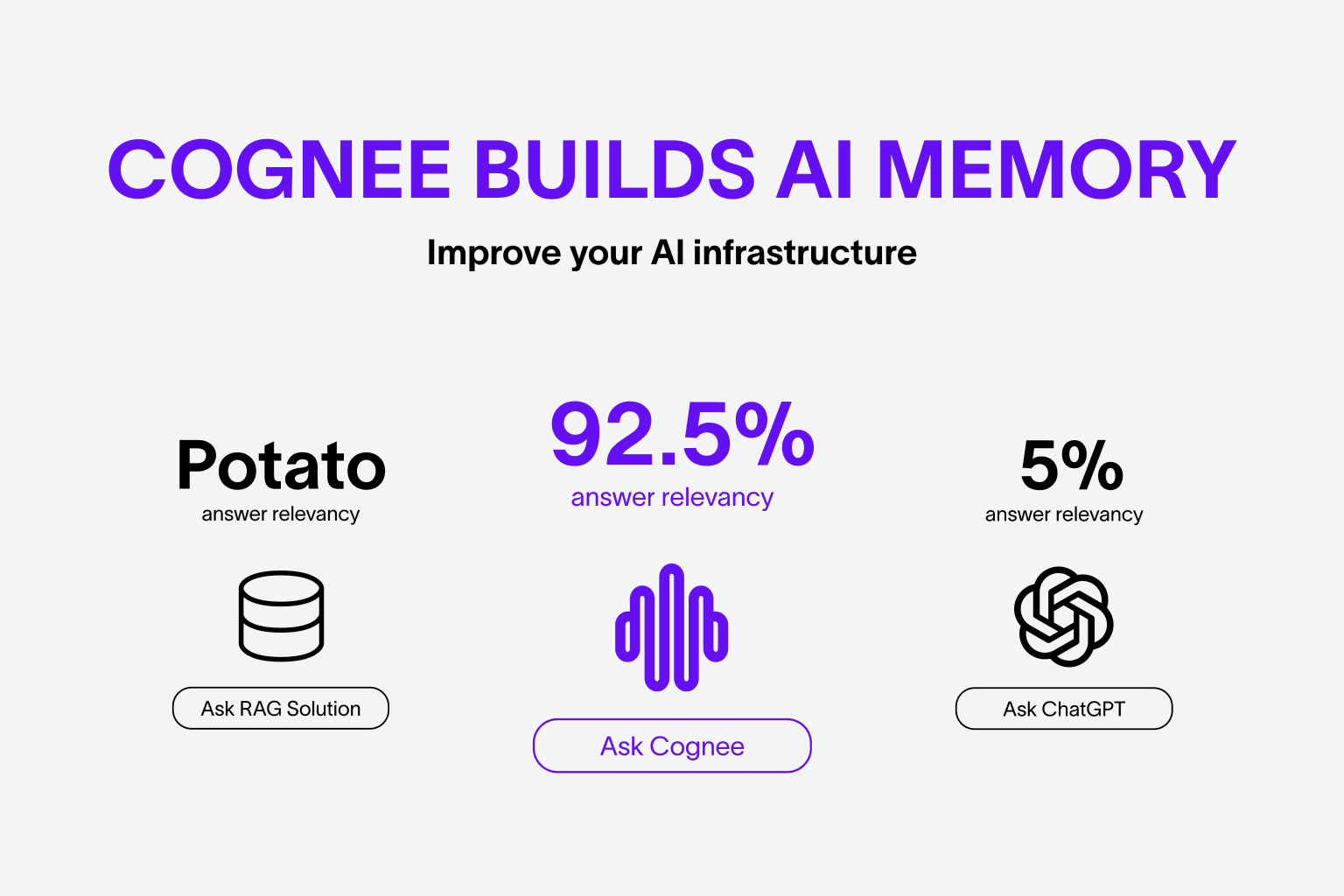

AI Agent responses you can rely on.

Build dynamic Agent memory using scalable, modular ECL (Extract, Cognify, Load) pipelines.

More on use-cases.

- Interconnect and retrieve your past conversations, documents, images and audio transcriptions

- Reduce hallucinations, developer effort, and cost

- Load data to graph and vector databases using only Pydantic

- Manipulate your data while ingesting from 30+ data sources

Get started quickly with a Google Colab notebook or the starter repo.

Your contributions are at the core of making this a true open source project. Any contributions you make are greatly appreciated. See CONTRIBUTING.md for more information.

You can install cognee using pip, poetry, uv, or any other Python package manager.

    pip install cognee

Set your LLM API key before running cognee:

    import os

    os.environ["LLM_API_KEY"] = "YOUR OPENAI_API_KEY"

You can also set the variables by creating a .env file from our template. To use a different LLM provider, check out our documentation.
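If you want to see what setting variables from a .env file amounts to, here is a minimal standard-library sketch. It assumes the file holds plain `KEY=VALUE` lines such as `LLM_API_KEY=...`. The helper names `parse_env_text` and `load_env_file` are hypothetical and are not part of cognee; the template may contain other variables that this sketch does not know about.

```python
import os


def parse_env_text(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        key = key.strip()
        value = value.strip()
        # Drop one pair of matching surrounding quotes, if present.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        if key:
            values[key] = value
    return values


def load_env_file(path: str = ".env", override: bool = False) -> dict[str, str]:
    """Read a .env file and copy its entries into os.environ.

    Existing environment variables win unless override is True.
    """
    with open(path, encoding="utf-8") as handle:
        values = parse_env_text(handle.read())
    for key, value in values.items():
        if override or key not in os.environ:
            os.environ[key] = value
    return values


if __name__ == "__main__":
    # Hypothetical usage: load .env (if it exists) before importing cognee.
    if os.path.exists(".env"):
        loaded = load_env_file()
        print("Loaded variables:", sorted(loaded))
```

Keeping already-set environment variables by default means a key exported in your shell is not silently replaced by the file.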

This script will run the default pipeline:

    import cognee
    import asyncio


    async def main():
        # Add text to cognee
        await cognee.add("Natural language processing (NLP) is an interdisciplinary subfield of computer science and information retrieval.")

        # Generate the knowledge graph
        await cognee.cognify()

        # Query the knowledge graph
        results = await cognee.search("Tell me about NLP")

        # Display the results
        for result in results:
            print(result)


    if __name__ == '__main__':
        asyncio.run(main())

Example output:

Natural Language Processing (NLP) is a cross-disciplinary and interdisciplinary field that involves computer science and information retrieval. It focuses on the interaction between computers and human language, enabling machines to understand and process natural language.

Graph visualization:

Open in browser.


For more advanced usage, have a look at our documentation.

- What is AI memory

cognee_ai_memory.mp4

- Simple GraphRAG demo

cognee_graphrag.mp4

- cognee with Ollama

cognee_with_ollama.mp4

We are committed to making open source an enjoyable and respectful experience for our community. See CODE_OF_CONDUCT for more information.