This is the open-source codebase for PCZero, from "Efficient Learning for AlphaZero via Path Consistency" (ICML 2022). For a quick overview of our work, see our poster at https://github.com/CMACH508/PCZero/blob/main/Picture/poster.pdf.

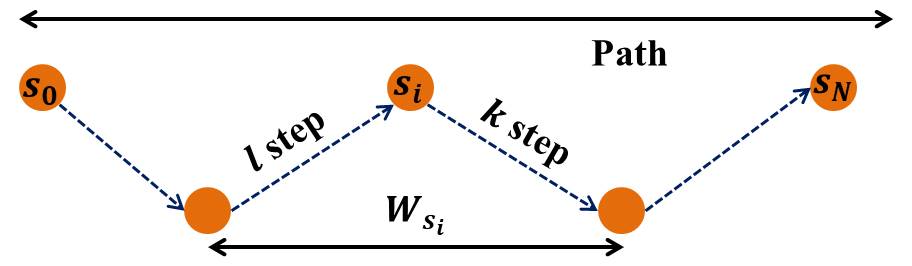

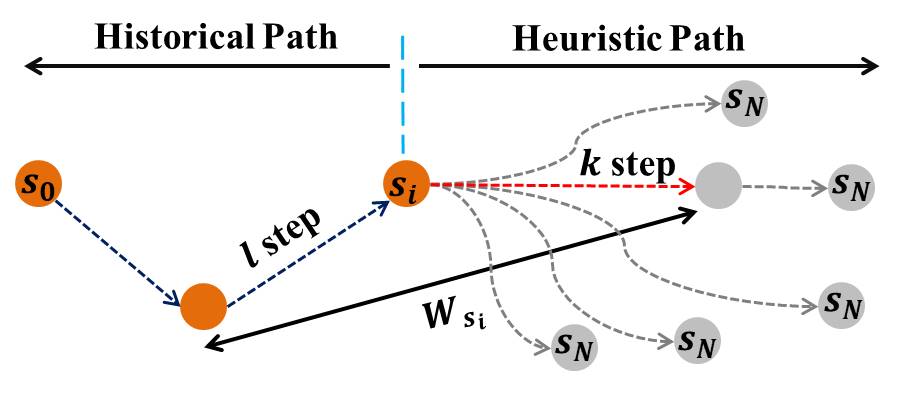

In recent years, deep reinforcement learning has made great breakthroughs in board games. However, most of this work requires huge computational resources for large-scale environmental interaction or self-play. In this paper, we propose a data-efficient learning algorithm built on AlphaZero, called PCZero. Our method is based on an optimality condition in board games called path consistency (PC): values on one optimal search path should be identical. To implement PC, we propose to include not only the historical trajectory but also the search paths scouted by Monte Carlo Tree Search (MCTS). This enables a good balance between exploration and exploitation and effectively enhances generalization ability. PCZero obtains
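The path consistency condition can be illustrated with a small penalty term. This is a minimal sketch, not the repository's implementation. It assumes the penalty is the squared deviation of one state's value from the mean value over a path window, that the window joins states from the historical trajectory with states scouted by MCTS, and that every value is expressed from the same player's perspective. The function name and all numbers are hypothetical.

```python
def path_consistency_penalty(path_values, index):
    """Squared deviation of one state's value from the mean value on its path.

    The penalty is zero when every value on the path is identical, which is
    the path consistency condition.
    """
    mean_value = sum(path_values) / len(path_values)
    return (path_values[index] - mean_value) ** 2


# Hypothetical value estimates along one path (assumed to be from one
# player's perspective): historical states first, then MCTS-scouted states.
historical_values = [0.62, 0.58, 0.66]
scouted_values = [0.60, 0.64]
path_values = historical_values + scouted_values

# Penalty for the most recent historical state (index 2).
penalty = path_consistency_penalty(path_values, index=2)
```

In a training loop, a term of this kind would typically be added to the usual AlphaZero loss with a weighting coefficient. The weighting and window size are left out here because the text above does not specify them.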

In AlphaZero,

- Python 3.6 or higher.

- PyTorch == 1.2.10

- NumPy

$\cdots\cdots$

Expert data is required for offline learning. Hex game records can be downloaded from https://drive.google.com/drive/folders/18MdnvMItU7O2sEJDlbmk_ZzUhZG7yDK9. Othello data can be found at https://www.ffothello.org/informatique/la-base-wthor/ and Gomoku data at http://www.renju.net/downloads/games.php.

The raw data is in SGF format, and preprocessing code is provided to extract the input data for the network.
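As a rough illustration of what extracting moves from an SGF record involves, here is a minimal sketch. It is not the repository's preprocessing code. The helper names are hypothetical, and it assumes Hex-style coordinates (a column letter followed by a 1-based row number, such as `c3`) and move nodes written as `;B[...]` or `;W[...]`.

```python
import re

# Matches move nodes such as ";B[a1]" or ";W[c3]": colour, then coordinate.
MOVE_PATTERN = re.compile(r";\s*([BW])\[([^\]]*)\]")


def parse_sgf_moves(sgf_text):
    """Return the (colour, coordinate) pairs of an SGF record in play order."""
    return MOVE_PATTERN.findall(sgf_text)


def coordinate_to_index(coordinate):
    """Map a coordinate such as 'c3' to a zero-based (row, column) pair.

    Assumes the column letter comes first, followed by a 1-based row number.
    """
    column = ord(coordinate[0].lower()) - ord("a")
    row = int(coordinate[1:]) - 1
    return row, column


# Hypothetical 13x13 Hex record (GM[11] is the SGF game type for Hex).
record = "(;FF[4]GM[11]SZ[13];B[a1];W[c3];B[m13])"
moves = parse_sgf_moves(record)
indices = [coordinate_to_index(coordinate) for _, coordinate in moves]
```

Special moves such as swaps or resignations do not follow the letter-plus-number pattern and would need separate handling before calling `coordinate_to_index`.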

The generated data while training our

After the dataset is ready, you can run train.py directly for offline learning.

For online learning, execute run.sh to finish the training process.

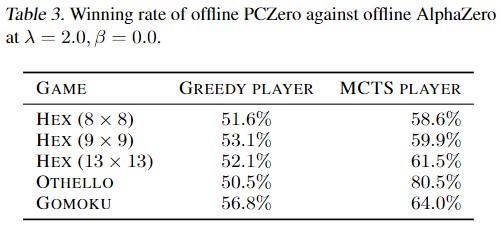

To hold competitions between models, run evaluate.py, which supports both the Greedy player and the MCTS player. To compete against the expert programs, first install MoHex 2.0 (https://github.com/cgao3/benzene-vanilla-cmake) and Edax (https://github.com/abulmo/edax-reversi).
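For readers unfamiliar with the two player types, the sketch below shows one common reading of a Greedy player. It assumes the player chooses the legal move with the highest policy-network probability and performs no search. The text above does not define the player, so treat this as an assumption; the function name and probabilities are hypothetical.

```python
def greedy_move(policy, legal_moves):
    """Return the legal move with the highest policy probability (no search)."""
    return max(legal_moves, key=lambda move: policy[move])


# Hypothetical policy output; "b2" is already occupied, so it is not legal.
policy = {"a1": 0.05, "b2": 0.55, "c3": 0.40}
legal_moves = ["a1", "c3"]
chosen_move = greedy_move(policy, legal_moves)
```

An MCTS player would instead use the policy and value outputs to guide a tree search before committing to a move.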

If you want to play Hex interactively, install HexGUI from https://github.com/ryanbhayward/hexgui.

To track the training process, an Elo rating system is needed.
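For reference, the standard Elo update can be sketched as follows. This is the textbook formula, not necessarily the rating tool used in this repository. The K-factor of 32 and the starting ratings are illustrative assumptions.

```python
def expected_score(rating_a, rating_b):
    """Expected score of player A against player B under the Elo model."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


def update_ratings(rating_a, rating_b, score_a, k_factor=32.0):
    """Return both ratings after one game.

    score_a is 1.0 for a win by A, 0.5 for a draw, and 0.0 for a loss.
    """
    delta = k_factor * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta


# Two hypothetical checkpoints start level; the first one wins one game.
new_a, new_b = update_ratings(1500.0, 1500.0, score_a=1.0)
```

Applying this update over the games played between successive checkpoints gives a rating curve that can be plotted against training steps.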

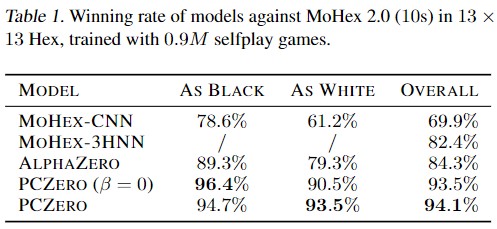

When competing against MoHex 2.0, the search time is limited to $10$ s. The tournament results therefore depend on the machine used to run the experiment. In our paper, we use a machine with a single GTX

While conducting competitions for Othello, opening positions are generated following the method in "Preference learning for move prediction and evaluation function approximation in Othello".

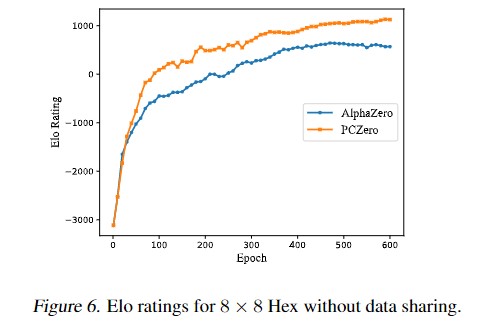

The Elo ratings during online learning for Hex are

The tournament results are listed below. Table

If you find this repo useful, please cite our paper:

@InProceedings{pmlr-v162-zhao22h,

title = {Efficient Learning for {A}lpha{Z}ero via Path Consistency},

author = {Zhao, Dengwei and Tu, Shikui and Xu, Lei},

booktitle = {Proceedings of the 39th International Conference on Machine Learning},

pages = {26971--26981},

year = {2022},

editor = {Chaudhuri, Kamalika and Jegelka, Stefanie and Song, Le and Szepesvari, Csaba and Niu, Gang and Sabato, Sivan},

volume = {162},

series = {Proceedings of Machine Learning Research},

month = {17--23 Jul},

publisher = {PMLR}

}

If you have any questions or want to use the code, please contact zdwccc@sjtu.edu.cn

We greatly appreciate the following GitHub repos for their valuable codebase implementations:

https://github.com/cgao3/benzene-vanilla-cmake

https://github.com/kenjyoung/Neurohex