Version information of this project

The paper for this project was accepted to the Intelligent Vehicles Symposium 2018!! 😄

This repository is for the Deep Reinforcement Learning Based Self Driving Car Control project from ML Jeju Camp 2017.

There are 2 main goals for this project.

- Making a vehicle simulator with Unity ML-Agents.

- Controlling a self-driving car in the simulator with some safety systems.

As a self-driving car engineer, I have used many vehicle sensors (e.g. RADAR, LIDAR, ...) to perceive the environment around the host vehicle. Also, many Advanced Driver Assistance Systems (ADAS) are already commercialized. I wanted to combine these with my deep reinforcement learning algorithms to control a self-driving car.

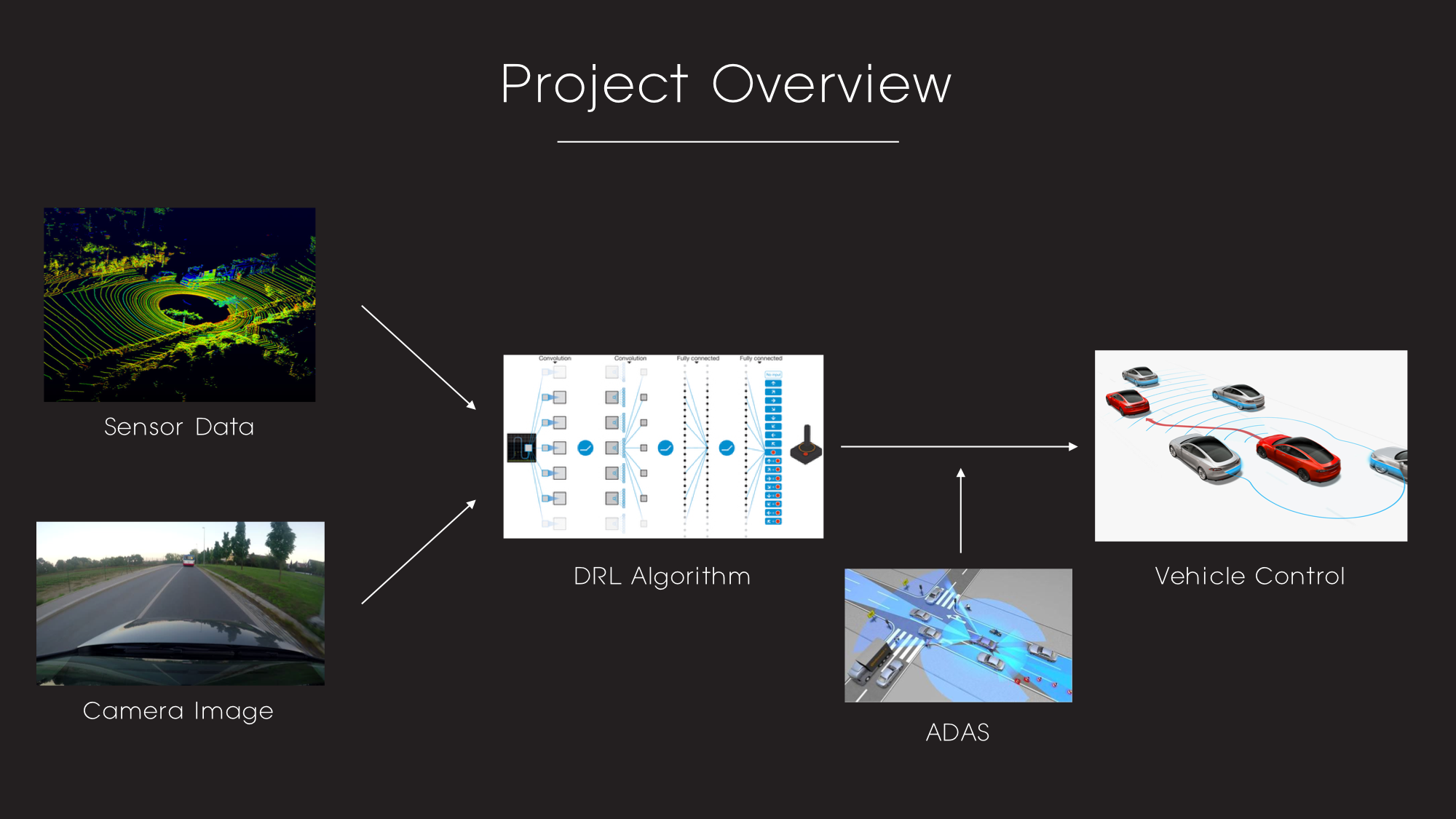

A simple overview of my project is as follows.

I use sensor data and camera images as inputs to the DRL algorithm. The DRL algorithm decides on an action according to these inputs. If the action may cause a dangerous situation, ADAS controls the vehicle to avoid a collision.

Software

- Windows 7 (64-bit)

- Python 3.5.2

- Anaconda 4.2.0

- TensorFlow 1.3.0

Hardware

- CPU: Intel(R) Core(TM) i7-4790K CPU @ 4.00GHz

- GPU: GeForce GTX 1080 Ti

- Memory: 8GB

- Download the GitHub repo.

- Download the simulator and put all the files into the environment folder.

- Open the ipynb file in the RL_algorithm folder and run it!

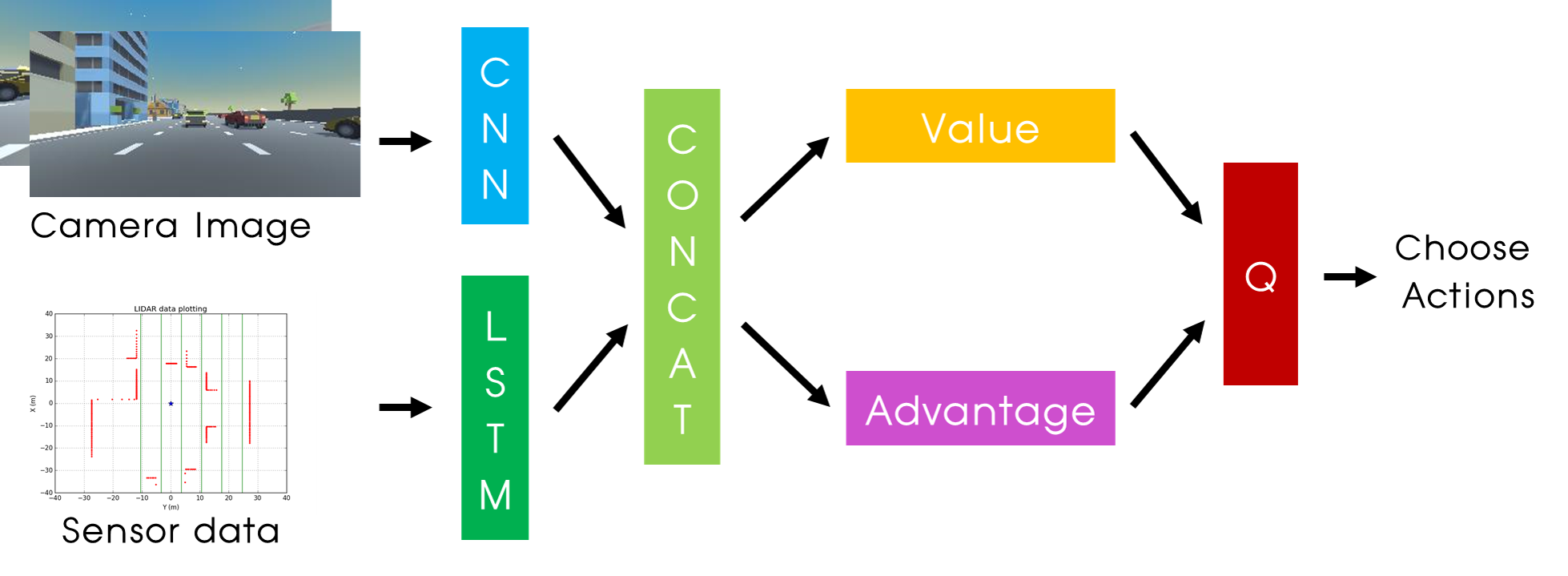

- Dueling_Image.ipynb: Dueling network using only the camera image of the vehicle.

- Dueling_sensor.ipynb: Dueling network using only the sensor data of the vehicle.

- Dueling_image_sensor.ipynb: Dueling network using both the camera image and the sensor data of the vehicle.
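All three notebooks are built around a dueling network. As a rough illustration of what the dueling head computes, here is a minimal sketch of the commonly used mean-advantage aggregation, Q(s, a) = V(s) + A(s, a) - mean(A). This is an assumption about the general technique, not a copy of the notebook code; the function name `dueling_q_values` is hypothetical.

```python
def dueling_q_values(state_value, advantages):
    """Combine a state value V(s) and per-action advantages A(s, a) into Q(s, a).

    Uses the mean-advantage form: Q = V + A - mean(A).
    Whether the notebooks use exactly this form is an assumption.
    """
    mean_advantage = sum(advantages) / len(advantages)
    return [state_value + a - mean_advantage for a in advantages]


# Example with 5 actions, matching the size of the action set in this project.
q_values = dueling_q_values(10.0, [1.0, 2.0, 3.0, 4.0, 5.0])
best_action = q_values.index(max(q_values))
print(q_values, best_action)
```

Subtracting the mean advantage keeps the value and advantage streams identifiable, which is the usual motivation for this form.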

I also uploaded the other DQN code that I tested with the games I made. Check out my DRL GitHub repo.

This is the PPT file of my final presentation (Jeju Camp).

Also, these are the links to my driving simulators. (Windows only for now)

Simulator - Windows

Simulator - Mac

Simulator - Linux

Unzip the simulator into the environment folder.

A detailed explanation of my simulator and model follows.

I made this simulator to test my DRL algorithms. To test them, I needed both sensor data and camera images as inputs, but there was no driving simulator that provided both. Therefore, I made one myself.

The simulator is built with Unity ML-Agents.

As mentioned, the simulator provides 2 inputs to the DRL algorithm: the forward camera image and the sensor data. Examples of those inputs are as follows.

| Front Camera Image | Sensor Data Plot |
|---|---|
| | |

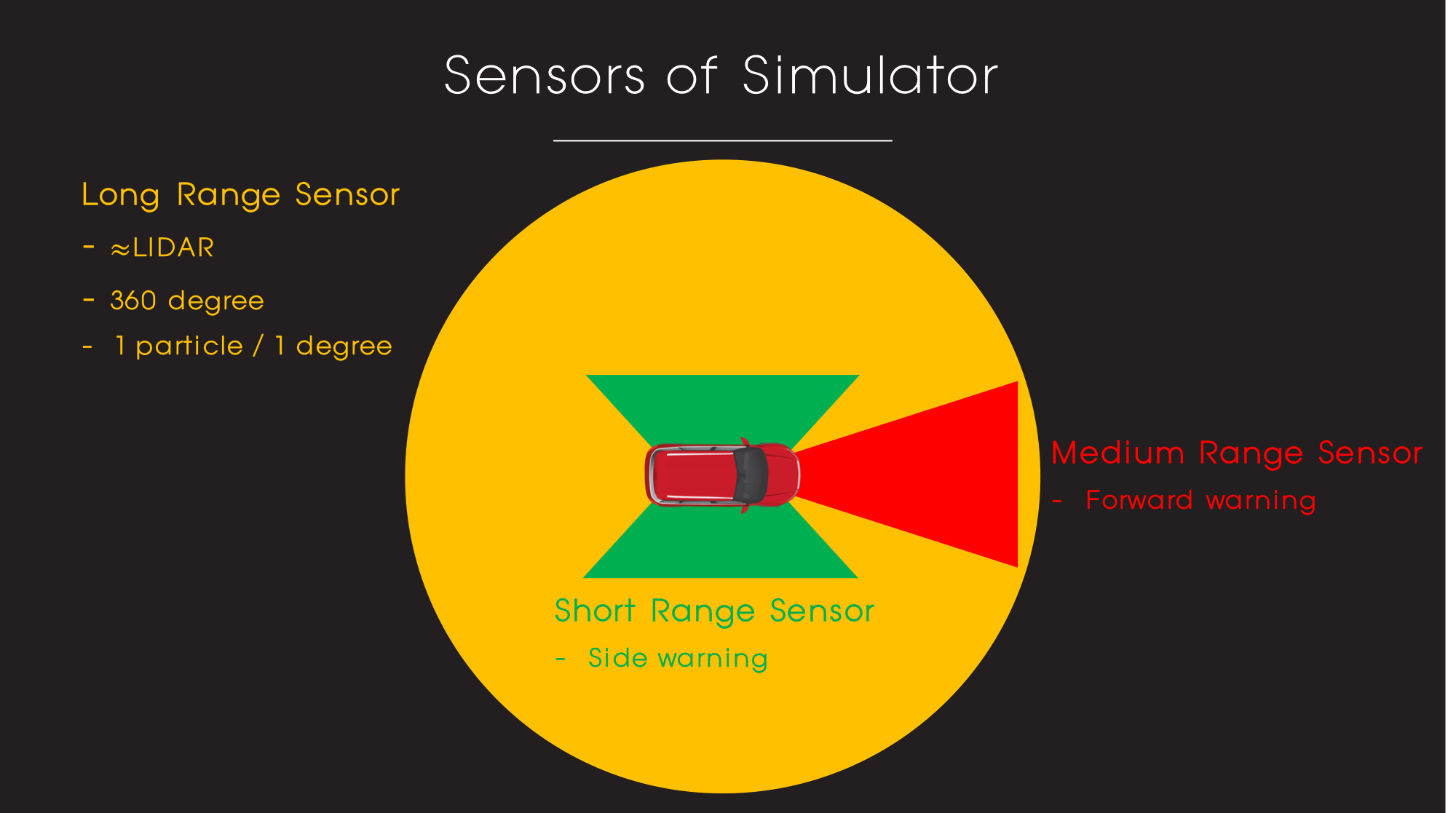

Also, the vehicles in this simulator have some safety functions. These functions are applied to the other vehicles and to the host vehicle in the ADAS version. The sensor overview is as follows.

The safety functions are as follows.

- Forward warning

  - Set the velocity of the host vehicle equal to the velocity of the vehicle in front.

  - If the distance between the two vehicles is too small, rapidly drop the velocity to the lowest velocity.

- Side warning: No lane change.

- Lane keeping: If the vehicle is not in the center of the lane, move it to the center of the lane.
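The safety functions listed above can be sketched roughly as follows. This is only an illustration of the described rules, not the simulator's actual code (which lives in Unity). The `HostState` class, the constants `MIN_SPEED` and `CLOSE_DISTANCE`, and the proportional gain in `lane_keeping_correction` are all hypothetical, since this README does not give the real values.

```python
from dataclasses import dataclass

# Hypothetical constants: this README does not state the actual values.
MIN_SPEED = 40.0       # assumed lowest allowed speed (km/h)
CLOSE_DISTANCE = 0.2   # assumed threshold on the normalized forward distance


@dataclass
class HostState:
    forward_warning: bool
    left_warning: bool
    right_warning: bool
    forward_distance: float  # normalized distance to the vehicle in front
    forward_speed: float     # speed of the vehicle in front
    lane_offset: float       # lateral offset from the lane center (assumed unit)


def target_speed(state, requested_speed):
    """Forward warning: follow the front vehicle, or drop to the lowest speed if too close."""
    if not state.forward_warning:
        return requested_speed
    if state.forward_distance < CLOSE_DISTANCE:
        return MIN_SPEED
    return state.forward_speed


def lane_change_allowed(state, direction):
    """Side warning: no lane change toward a side that has a warning."""
    if direction == "left":
        return not state.left_warning
    if direction == "right":
        return not state.right_warning
    raise ValueError("direction must be 'left' or 'right'")


def lane_keeping_correction(state, gain=0.5):
    """Lane keeping: steer back toward the lane center (simple proportional rule, assumed)."""
    return -gain * state.lane_offset
```

For example, with a forward warning active and a front vehicle driving at 60 km/h at a safe distance, `target_speed` returns 60 regardless of the requested speed.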

In this simulator, the size of the vector observation is 373.

0 ~ 359: LIDAR data (1 particle for 1 degree)

360 ~ 362: Left warning, right warning, forward warning (0: False, 1: True)

363: Normalized forward distance

364: Forward vehicle speed

365: Host vehicle speed

0 ~ 365 are used as the sensor input data.

366 ~ 372 are used for sending information.

366: Number of overtakes in an episode

367: Number of lane changes in an episode

368 ~ 372: Longitudinal reward, lateral reward, overtake reward, violation reward, collision reward

(Details of the rewards are given below.)
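As an illustration of the layout above, the following sketch splits one 373-element vector observation into the sensor input and the information fields. The function name `split_observation` and the dictionary keys are hypothetical; only the index ranges come from the list above.

```python
OBS_SIZE = 373
REWARD_NAMES = ("longitudinal", "lateral", "overtake", "violation", "collision")


def split_observation(obs):
    """Split a 373-element vector observation into sensor input and info fields."""
    obs = list(obs)
    if len(obs) != OBS_SIZE:
        raise ValueError("expected %d values, got %d" % (OBS_SIZE, len(obs)))

    sensor_input = obs[0:366]  # indices 0 ~ 365: fed to the DRL algorithm
    fields = {
        "lidar": obs[0:360],               # 0 ~ 359
        "left_warning": obs[360],
        "right_warning": obs[361],
        "forward_warning": obs[362],
        "forward_distance": obs[363],      # normalized
        "forward_vehicle_speed": obs[364],
        "host_vehicle_speed": obs[365],
        "num_overtake": obs[366],
        "num_lane_change": obs[367],
        "rewards": dict(zip(REWARD_NAMES, obs[368:373])),  # 368 ~ 372
    }
    return sensor_input, fields


# Usage with a dummy observation whose value at each index is the index itself.
sensor_input, fields = split_observation(range(OBS_SIZE))
print(len(sensor_input), fields["rewards"])
```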

The actions of the vehicle are as follows.

- Do nothing

- Acceleration

- Deceleration

- Lane change to left lane

- Lane change to right lane

In this simulator, 5 different kinds of rewards are used.

Longitudinal reward: (vehicle_speed - vehicle_speed_min) / (vehicle_speed_max - vehicle_speed_min)

- 0: Minimum speed, 1: Maximum speed

Lateral reward: -0.5

- The vehicle receives the lateral reward continuously during a lane change.

Overtake reward: 0.5 * (num_overtake - num_overtake_old)

- 0.5 per overtake

Violation reward: -0.1

- Example: If the vehicle changes to the left lane while the left warning is active, it gets the violation reward. (The same applies to the front and right warnings.)

Collision reward: -10

- If a collision happens, the vehicle gets the collision reward.

The sum of these 5 rewards is the final reward of this simulator.
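The reward definitions above can be written as a short function. This is a sketch rather than the simulator's code: the function name `total_reward`, its argument names, and the assumption that a reward term is 0 on steps where its condition does not hold are mine. The speed range in the example call is also hypothetical.

```python
def total_reward(vehicle_speed, vehicle_speed_min, vehicle_speed_max,
                 changing_lane, num_overtake, num_overtake_old,
                 violation, collision):
    """Sum the 5 reward terms for one step (terms that do not apply are assumed to be 0)."""
    longitudinal = (vehicle_speed - vehicle_speed_min) / (vehicle_speed_max - vehicle_speed_min)
    lateral = -0.5 if changing_lane else 0.0
    overtake = 0.5 * (num_overtake - num_overtake_old)
    violation_reward = -0.1 if violation else 0.0
    collision_reward = -10.0 if collision else 0.0
    return longitudinal + lateral + overtake + violation_reward + collision_reward


# Example: driving at the (hypothetical) maximum speed and overtaking one vehicle.
print(total_reward(80.0, 40.0, 80.0, False, 3, 2, False, False))
```

With these terms, driving at maximum speed without overtaking yields 1 per step, while a single collision costs as much as ten such steps.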

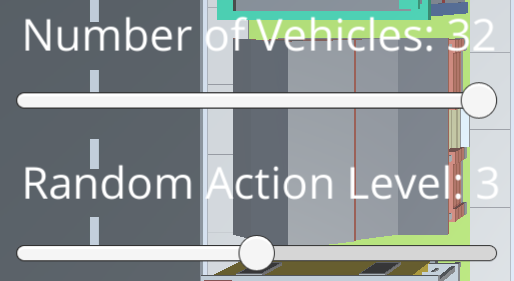

You can change some parameters with the sliders on the left side of the simulator.

- Number of Vehicles (0 ~ 32): Changes the number of other vehicles.

- Random Action (0 ~ 6): Changes the random action level of the other vehicles (a higher value means more random actions).

For this project, I read papers as follows.

You can find the code for those algorithms in my DRL GitHub repo.

I applied algorithms 1 ~ 4 to my DRL model. The network model is as follows.

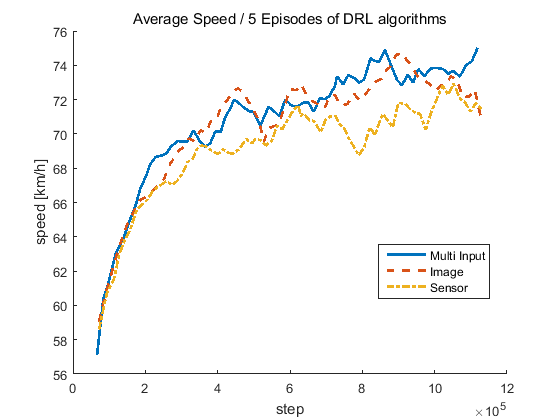

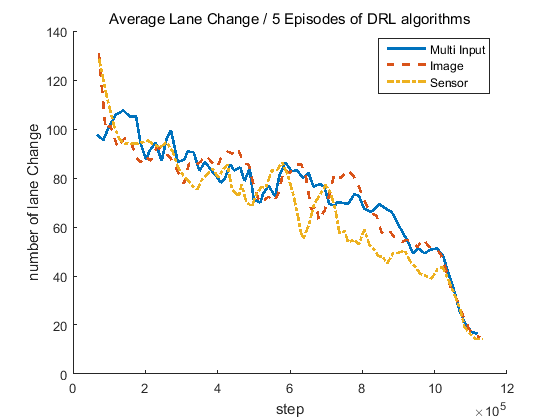

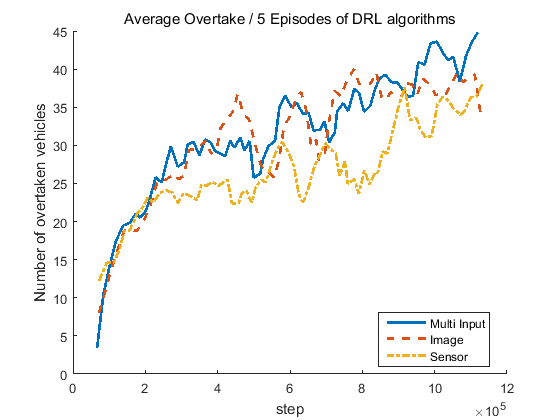

| Average Speed | Average # of Lane Changes | Average # of Overtakes |
|---|---|---|
| | | |

| Input Configuration | Speed (km/h) | Number of Lane Changes | Number of Overtakes |
|---|---|---|---|
| Camera Only | 71.0776 | 15 | 35.2667 |
| LIDAR Only | 71.3758 | 14.2667 | 38.0667 |
| Multi-Input | 75.0212 | 19.4 | 44.8 |

After training, the host vehicle drives much faster (almost at the maximum speed!!!) with few lane changes!! Yeah! :happy: