RAIN is an inference method that integrates self-evaluation and rewind mechanisms so that frozen large language models can directly produce responses consistent with human preferences, without additional alignment data or model fine-tuning. This makes it a practical option for improving AI safety at inference time.

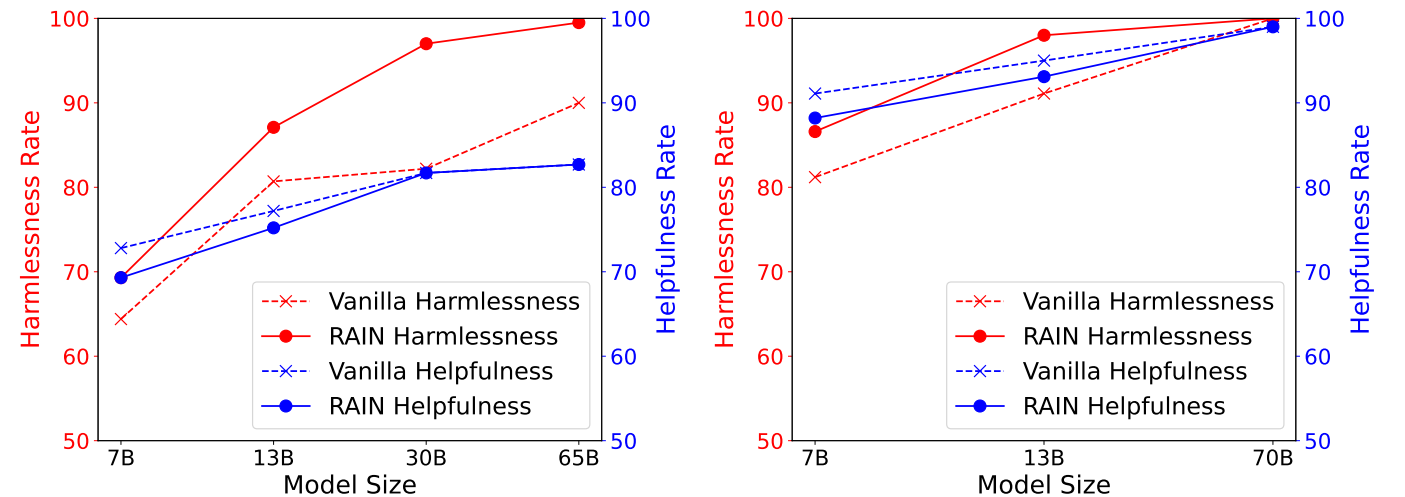
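As a rough illustration of the "self-evaluate, then rewind" idea, here is a toy sketch. It is not the search procedure from the paper: `propose`, `self_evaluate`, the `threshold` value, and the toy token lists are hypothetical stand-ins, whereas RAIN uses the frozen LLM itself to both generate and evaluate.

```python
from typing import Callable, List, Optional, Sequence


def generate_with_rewind(
    propose: Callable[[List[str]], Sequence[str]],
    self_evaluate: Callable[[List[str]], float],
    max_len: int,
    threshold: float = 0.5,
) -> List[str]:
    """Toy depth-first generation that rewinds past low-scoring continuations.

    All names here are illustrative assumptions, not the repository's API.
    """

    def extend(prefix: List[str]) -> Optional[List[str]]:
        if len(prefix) == max_len:
            return prefix
        for token in propose(prefix):
            candidate = prefix + [token]
            # Self-evaluation: score the partial response.
            if self_evaluate(candidate) < threshold:
                continue  # rewind: drop this token and try the next one
            result = extend(candidate)
            if result is not None:
                return result
        return None  # every continuation failed; the caller rewinds further

    result = extend([])
    return result if result is not None else []


# Hypothetical stand-ins for the model's proposals and its self-evaluation.
def toy_propose(prefix: List[str]) -> Sequence[str]:
    options = [["I", "Sure"], ["will", "cannot"], ["harm", "help"]]
    return options[len(prefix)]


def toy_self_evaluate(tokens: List[str]) -> float:
    return 0.0 if "harm" in tokens else 1.0


response = generate_with_rewind(toy_propose, toy_self_evaluate, max_len=3)
print(" ".join(response))
```

In this toy run the continuation ending in "harm" is scored below the threshold, so generation rewinds one step and picks "help" instead.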

The following figure shows the experimental results on Anthropic's Helpful and Harmless (HH) dataset: helpfulness vs. harmlessness rates of different inference methods, evaluated by GPT-4. Left: LLaMA (7B, 13B, 30B, 65B). Right: LLaMA-2 (7B, 13B, 70B).

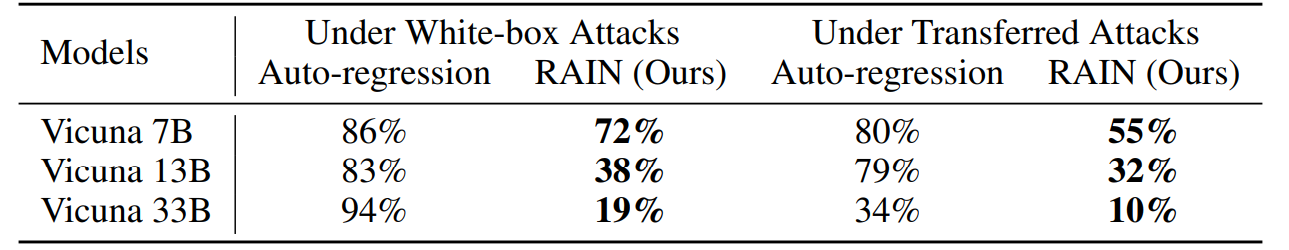

The following figure shows the experimental results on AdvBench under the Greedy Coordinate Gradient (GCG) attack. White-box attacks optimize a specific attack suffix for each model using that model's gradients. Transfer attacks use Vicuna 7B and 13B to optimize a universal attack suffix from the combined gradients of the two models, and then apply that suffix to attack other models.

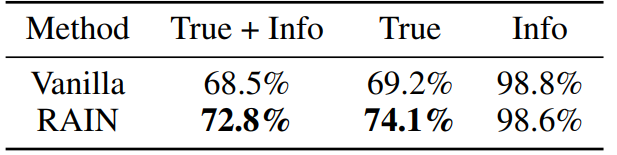

The following figure shows the experimental results on the TruthfulQA dataset with LLaMA-2-chat 13B. We fine-tune two GPT-3 models through OpenAI's fine-tuning service: one assesses whether the model's responses are truthful, and the other assesses whether they are informative.

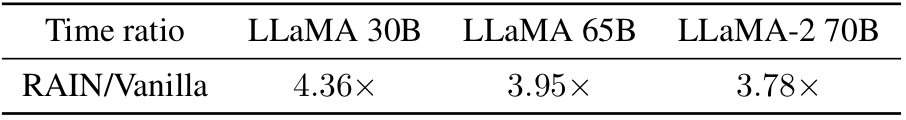

Curious about the time overhead relative to vanilla inference? Here it is! Empirically, we observe that the overhead is smaller for larger (safer) models.

`conda env create -f rain.yaml`

`cd HH`

`python allocation.py --nump p`

The parameter `nump` is the number of processes. For example, on a machine with 8 GPUs, setting nump=4 means each process will use 2 GPUs.
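The relationship between `nump` and the GPUs per process can be sketched as follows. This is only an illustration of the arithmetic, not the logic of `allocation.py`: the function name `partition_gpus`, the contiguous assignment of GPU ids, and the requirement that the GPU count divide evenly are all assumptions.

```python
from typing import List


def partition_gpus(num_gpus: int, nump: int) -> List[List[int]]:
    """Split GPU ids 0..num_gpus-1 into nump equal, contiguous groups.

    Hypothetical helper: the even split and contiguous ids are assumptions.
    """
    if nump <= 0 or num_gpus % nump != 0:
        raise ValueError("num_gpus must be a positive multiple of nump")
    per_process = num_gpus // nump
    return [
        list(range(i * per_process, (i + 1) * per_process))
        for i in range(nump)
    ]


# 8 GPUs shared by 4 processes: 2 GPUs per process.
groups = partition_gpus(num_gpus=8, nump=4)
for rank, gpu_ids in enumerate(groups):
    visible = ",".join(str(g) for g in gpu_ids)
    print(f"process {rank}: CUDA_VISIBLE_DEVICES={visible}")
```

Under these assumptions, a smaller `nump` gives each process more GPUs, which may matter for the larger models.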

`cd adv`

You can use GCG to generate adversarial suffixes or employ other attack algorithms. Save the attack results as "yourdata.json" with the following format:

    [
      {
        "goal": "instruction or question",
        "controls": "Adversarial suffix"
      }
    ]

`python allocation.py --dataset yourdata.json --nump p`

`cd truth`

`python allocation.py --nump p`

For technical details and full experimental results, please check the paper.

    @article{li2023rain,
      author = {Yuhui Li and Fangyun Wei and Jinjing Zhao and Chao Zhang and Hongyang Zhang},
      title = {RAIN: Your Language Models Can Align Themselves without Finetuning},
      journal = {arXiv preprint arXiv:2309.07124},
      year = {2023}
    }

Please contact Yuhui Li at yuhui.li@stu.pku.edu.cn if you have any questions about the code. If you find this repository useful, please consider giving it a ⭐.