The main use case is running an Ansible playbook against the virtual machine being migrated, for example to remove the virtual machine from a load-balancing pool. To do so, an Ansible Playbook Run custom resource is created (cf. Custom Resources); it contains all the information required to run the playbook.

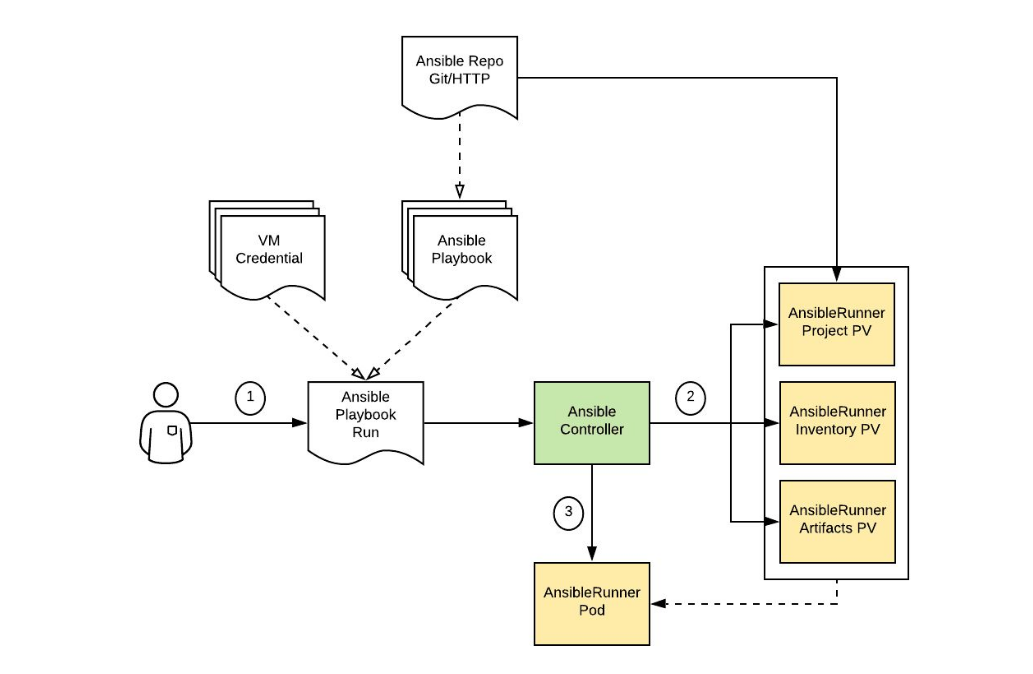

The diagram above shows the corresponding flow.

The Ansible Controller creates three Persistent Volumes:

- Project to install the playbook and its dependencies: roles, collections...

- Inventory to store the inventory and variables, including credentials.

- Artifacts to store the data produced by Ansible Runner: events, facts and output.

Then, the Ansible Controller creates the Ansible Runner job with the three PVs attached to it and monitors its progress. The Ansible Runner pod copies the Ansible playbook repository, installs the dependencies, creates the inventory and variables files, then calls the ansible-runner command.
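The volume layout described above can be sketched in Go as follows. This is a minimal illustration, not the operator's actual code: the Volume type, the claim naming scheme, the /runner base directory and the site.yml playbook name are all assumptions. It relies on the fact that ansible-runner reads a private data directory containing project, inventory and artifacts sub-directories, and that `ansible-runner run <dir> -p <playbook>` runs a playbook from that directory.

```go
package main

import (
	"fmt"
	"path"
)

// Volume pairs a PersistentVolumeClaim name with its mount path in the
// runner pod. The type and the naming scheme are hypothetical.
type Volume struct {
	Purpose   string
	ClaimName string
	MountPath string
}

// RunnerVolumes returns the three volumes for one playbook run, mounted as
// sub-directories of a single ansible-runner private data directory.
func RunnerVolumes(runName, baseDir string) []Volume {
	purposes := []string{"project", "inventory", "artifacts"}
	vols := make([]Volume, 0, len(purposes))
	for _, p := range purposes {
		vols = append(vols, Volume{
			Purpose:   p,
			ClaimName: fmt.Sprintf("%s-%s", runName, p),
			MountPath: path.Join(baseDir, p),
		})
	}
	return vols
}

// RunnerCommand builds the ansible-runner invocation for the given playbook.
func RunnerCommand(baseDir, playbook string) []string {
	return []string{"ansible-runner", "run", baseDir, "-p", playbook}
}

func main() {
	// "/runner" and "site.yml" are placeholder values for illustration.
	for _, v := range RunnerVolumes("example-ansibleplaybookrun", "/runner") {
		fmt.Printf("%-9s claim=%s mount=%s\n", v.Purpose, v.ClaimName, v.MountPath)
	}
	fmt.Println(RunnerCommand("/runner", "site.yml"))
}
```

Keeping the three volumes under one base directory means the runner container needs only that single path as its private data directory.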

It might be worth creating a separate operator and depending on it to allow pre- and post-migration hooks. The UI and admission controller could verify that the operator is deployed and enabled when a Migration Plan is created. For CAM, a hook-runner container image already exists and adds the k8s and openshift libraries to enable the respective modules. Because we will interact with VMware, RHV and maybe OpenStack, we will have to extend this image to ship the required libraries.

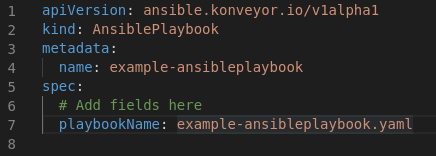

An AnsiblePlaybook represents an Ansible playbook specification to be run by the Ansible Playbook controller. It specifies:

- Repository Type

- Repository URL

- Playbook file name

An AnsiblePlaybookRun represents the execution of an AnsiblePlaybook. AnsiblePlaybookRuns are how AnsiblePlaybooks are executed; they prepare the execution and capture its operational aspects, such as events and progress, so that the UI can display them. It specifies:

- AnsiblePlaybook (*AnsiblePlaybook)

- Inventory - List of hostnames / IP addresses extracted from the provider

- Host(s) credentials ([]*Secrets)

- Extra vars - List of key / value pairs that are passed to the playbook at runtime

Ideally the Status of the CR will contain at least:

- State: pending, preparing, active, cleaning, finished

- Message: human-readable status to display in the UI (Ansible task name)
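The status fields above could be modelled as a small state machine. The sketch below assumes the states are visited strictly in the order listed, which the text does not state explicitly; the State type, the Next helper and the Status struct are hypothetical names.

```go
package main

import "fmt"

// State mirrors the status values listed above.
type State string

const (
	StatePending   State = "pending"
	StatePreparing State = "preparing"
	StateActive    State = "active"
	StateCleaning  State = "cleaning"
	StateFinished  State = "finished"
)

// order assumes a strictly linear progression through the states.
var order = []State{StatePending, StatePreparing, StateActive, StateCleaning, StateFinished}

// Next returns the state that follows s. The boolean is false when s is the
// final state or is not a known state.
func Next(s State) (State, bool) {
	for i, cur := range order {
		if cur == s && i+1 < len(order) {
			return order[i+1], true
		}
	}
	return "", false
}

// Status is a sketch of the CR status: a state plus a message for the UI.
type Status struct {
	State   State  `json:"state"`
	Message string `json:"message"`
}

func main() {
	st := Status{State: StatePending, Message: "waiting for the runner job"}
	for {
		fmt.Printf("%s: %s\n", st.State, st.Message)
		next, ok := Next(st.State)
		if !ok {
			break
		}
		// In a real controller the message would be the current Ansible task name.
		st = Status{State: next, Message: "entered " + string(next)}
	}
}
```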

- Install docker

$ sudo dnf config-manager --add-repo=https://download.docker.com/linux/centos/docker-ce.repo

$ sudo dnf install docker-ce-3:18.09.1-3.el7

- Running OpenShift cluster

- Pull the AnsibleRunner Operator image:

docker pull quay.io/tsisodia/ansiblerunner-operator

- Clone git repository

https://github.com/tsisodia10/ansible-operator

We will use Golang to build the Operator. Install Golang, and then configure the following environment settings, as well as any other settings that you prefer:

$ export GOPATH=/your/preferred/path/

$ export GO111MODULE=on

# Verify

$ go version

go version go1.13.3 linux/amd64

We will use the Kubernetes Operator SDK to build our Operator. Install the Operator SDK, then verify the installation:

# Verify

$ operator-sdk version

operator-sdk version: "v0.17.0", commit: "2fd7019f856cdb6f6618e2c3c80d15c3c79d1b6c", kubernetes version: "unknown", go version: "go1.13.10 linux/amd64"

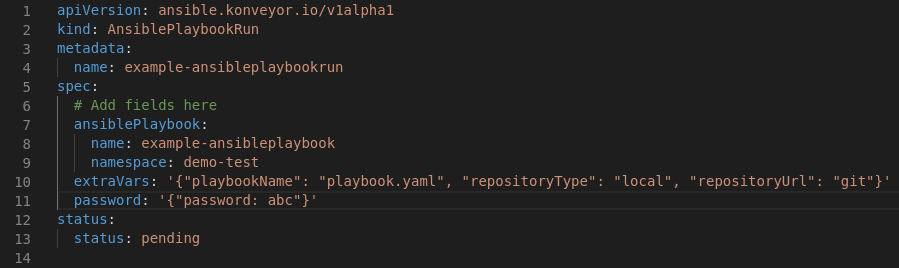

In a new terminal, inspect the Custom Resource manifest:

cd $GOPATH/src/github.com/redhat/ansible-operator

cat deploy/crds/ansible.konveyor.io_v1alpha1_ansibleplaybookrun_cr.yaml

Ensure your kind: AnsiblePlaybookRun Custom Resource (CR) sets the spec fields used by the operator, for example:

    apiVersion: ansible.konveyor.io/v1alpha1
    kind: AnsiblePlaybookRun
    metadata:
      name: example-ansibleplaybookrun
    spec:
      # Add fields here
      ansiblePlaybook:
        name: example-ansibleplaybook
        namespace: demo-test
      inventory: '{"all": {"hosts": "localhost"}}'
      hostCredential: '{"password": "b3BlcmF0b3I="}'
    status:
      status: pending
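In the sample manifest, inventory and hostCredential are JSON documents stored as strings, and the password value looks base64-encoded. The following Go sketch shows one way a controller could decode them. The function names are hypothetical, the inventory shape is taken from the sample only, and treating the password as standard base64 is an assumption.

```go
package main

import (
	"encoding/base64"
	"encoding/json"
	"fmt"
)

// ParseInventory decodes the JSON string stored in spec.inventory.
// The shape group -> {"hosts": "<name>"} follows the sample manifest only.
func ParseInventory(raw string) (map[string]map[string]string, error) {
	inv := map[string]map[string]string{}
	if err := json.Unmarshal([]byte(raw), &inv); err != nil {
		return nil, fmt.Errorf("parse inventory: %w", err)
	}
	return inv, nil
}

// DecodePassword extracts the "password" key from spec.hostCredential and
// base64-decodes it. Treating the value as base64 is an assumption.
func DecodePassword(raw string) (string, error) {
	cred := map[string]string{}
	if err := json.Unmarshal([]byte(raw), &cred); err != nil {
		return "", fmt.Errorf("parse credential: %w", err)
	}
	enc, ok := cred["password"]
	if !ok {
		return "", fmt.Errorf("credential has no password key")
	}
	dec, err := base64.StdEncoding.DecodeString(enc)
	if err != nil {
		return "", fmt.Errorf("decode password: %w", err)
	}
	return string(dec), nil
}

func main() {
	inv, err := ParseInventory(`{"all": {"hosts": "localhost"}}`)
	if err != nil {
		panic(err)
	}
	fmt.Println("hosts in group all:", inv["all"]["hosts"])

	pw, err := DecodePassword(`{"password": "b3BlcmF0b3I="}`)
	if err != nil {
		panic(err)
	}
	// Print only the length so the secret itself never reaches the logs.
	fmt.Println("decoded password length:", len(pw))
}
```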

Configuration data can be consumed in pods in a variety of ways. A ConfigMap can be used to:

- Populate the value of environment variables.

- Set command-line arguments in a container.

- Populate configuration files in a volume.

Both users and system components may store configuration data in a ConfigMap.

Ensure you create the ConfigMap and Secrets, for example password-secret.yaml and sshkey-secret.yaml:

oc create configmap <configmap_name> [options]

Example of a ConfigMap with two environment variables:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: special-config
      namespace: default
    data:
      special.how: very
      special.type: charm

Ensure you are currently scoped to the operator Namespace:

oc project operator

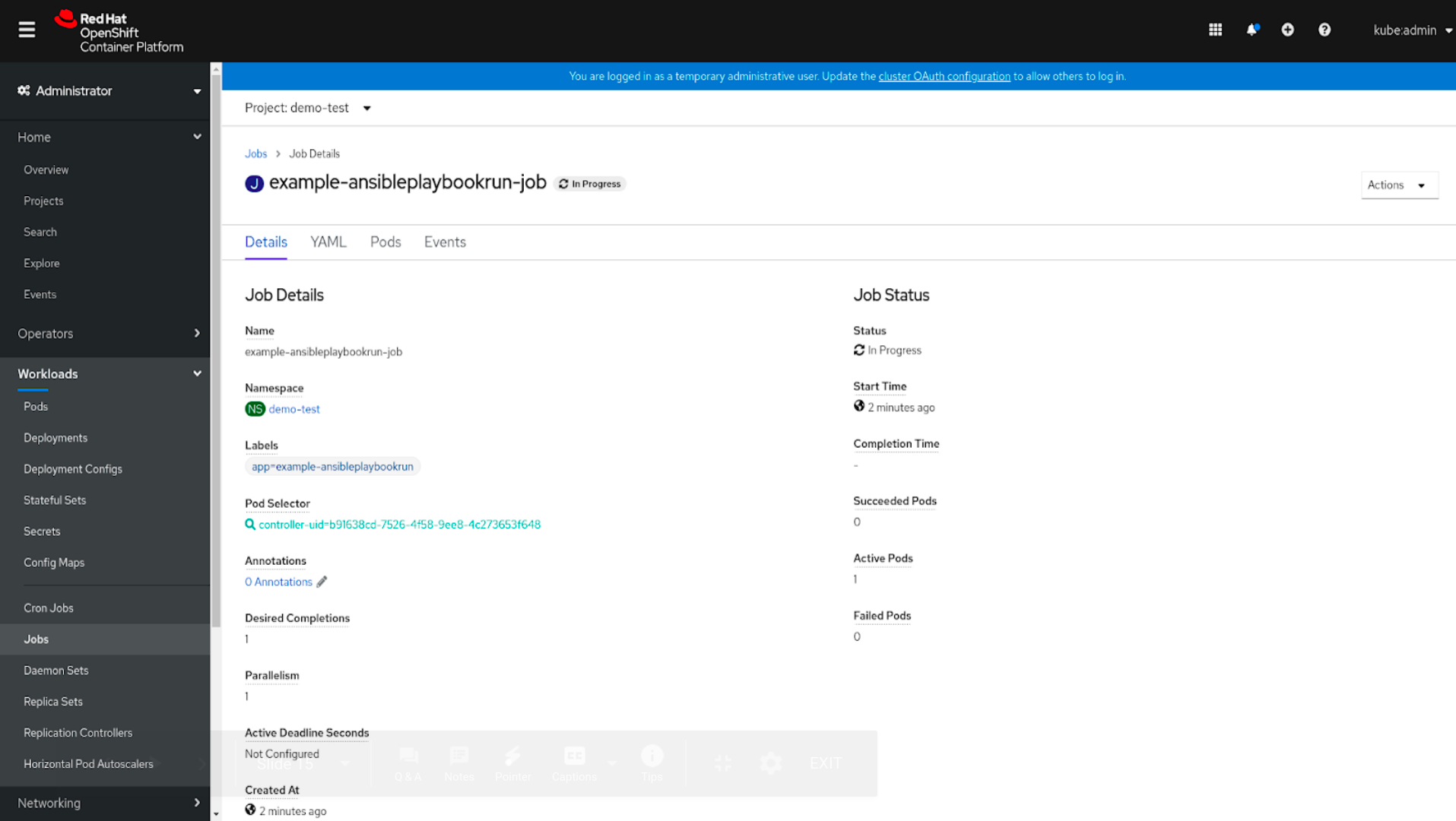

Deploy your AnsiblePlaybookRun Custom Resource to the live OpenShift Cluster:

oc create -f deploy/crds/ansible.konveyor.io_v1alpha1_ansibleplaybookrun_cr.yaml

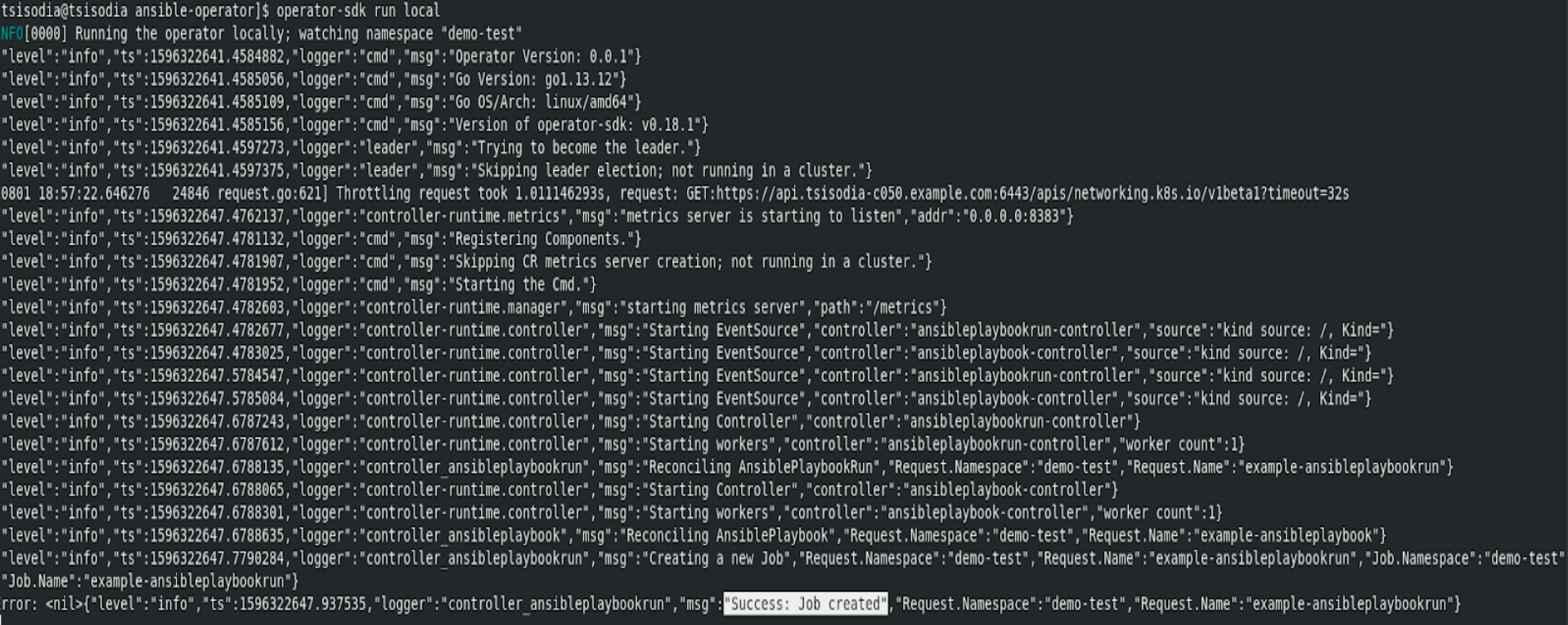

Now we can test our logic by running our Operator outside the cluster. You can continue interacting with the OpenShift cluster by opening a new terminal window.

operator-sdk run local

Verify that the Ansible operator has created one job running the Ansible playbook:

oc get job

Verify in the OpenShift dashboard that the resources have been created.

Presentation: https://docs.google.com/presentation/d/1Y-_01OudbLUmuMF2abGp2gmr60WhpecN5DiBywkwmak/edit?usp=sharing

Lightning Talk Video: https://drive.google.com/file/d/1nsRFYO7Yt6MGCZOwnP9AcJ8LlZTk2AGt/view?usp=sharing