By Tsung-Wei Ke*, Sangwoo Mo* and Stella X. Yu

Official implementation of "Learning Hierarchical Image Segmentation For Recognition and By Recognition". This work was selected as a spotlight paper at ICLR 2024.

Large vision and language models learned directly through image-text associations often lack detailed visual substantiation, whereas image segmentation tasks are treated separately from recognition and learned with supervision, without any interconnection between the two.

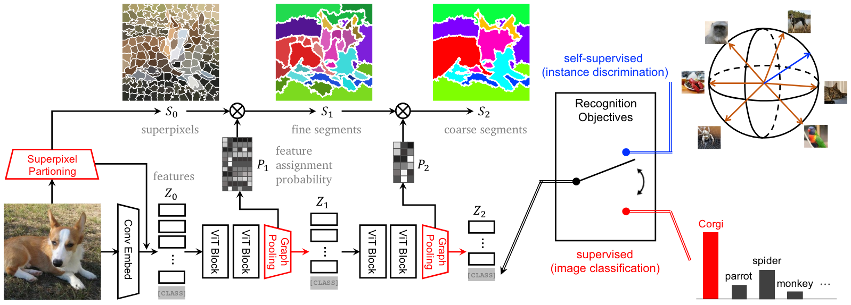

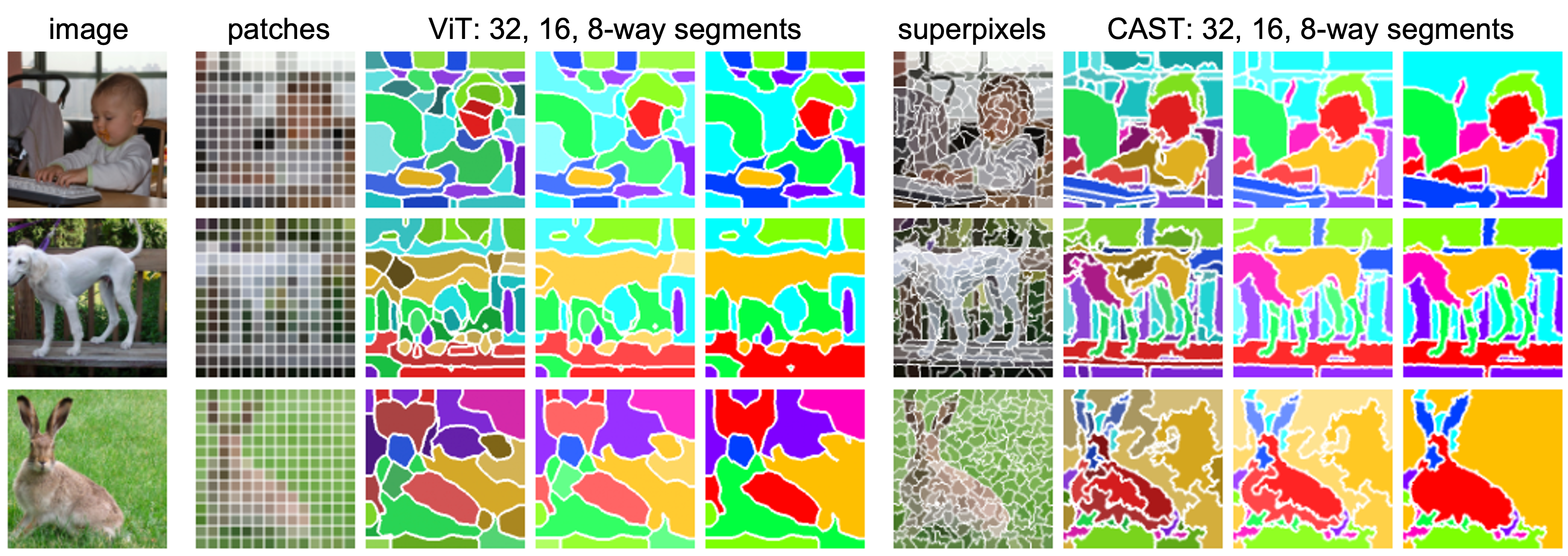

Our key observation is that, while an image can be recognized in multiple ways, each has a consistent part-and-whole visual organization. Segmentation thus should be treated not as an end task to be mastered through supervised learning, but as an internal process that evolves with and supports the ultimate goal of recognition.

We propose to integrate a hierarchical segmenter into the recognition process, and to train and adapt the entire model solely on image-level recognition objectives. We learn hierarchical segmentation for free alongside recognition, automatically uncovering part-to-whole relationships that not only underpin but also enhance recognition.

Enhancing the Vision Transformer (ViT) with adaptive segment tokens and graph pooling, our model surpasses ViT in unsupervised part-whole discovery, semantic segmentation, image classification, and efficiency. Notably, our model (trained on 1M unlabeled ImageNet images) outperforms SAM (trained on 11M images and 1 billion masks) by an absolute 8% in mIoU on PartImageNet object segmentation.

Create a conda environment with the following command:

# initiate conda env

> conda update conda

> conda env create -f environment.yaml

> conda activate cast

# install dgl (https://www.dgl.ai/pages/start.html)

> pip install dgl==1.1.3+cu116 -f https://data.dgl.ai/wheels/cu116/dgl-1.1.3%2Bcu116-cp38-cp38-manylinux1_x86_64.whl
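The wheel in the command above is tagged `cp38` and `cu116`, so it will only install on a matching interpreter. The following is a minimal sketch, not part of this repository, for checking the Python tag before running `pip`. The helper names `wheel_tags` and `matches_interpreter` are hypothetical, and the check only compares the CPython version tag; it does not verify the CUDA version or platform.

```python
import sys
from urllib.parse import unquote


def wheel_tags(wheel_url):
    """Return (python_tag, abi_tag, platform_tag) parsed from a wheel URL or filename."""
    # Take the last path component and decode escapes such as %2B -> '+'.
    filename = unquote(wheel_url.rsplit("/", 1)[-1])
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel file: {filename}")
    # Wheel names follow: name-version[-build]-python-abi-platform.whl
    parts = filename[: -len(".whl")].split("-")
    if len(parts) not in (5, 6):
        raise ValueError(f"unexpected wheel name: {filename}")
    return tuple(parts[-3:])


def matches_interpreter(wheel_url, version_info=sys.version_info):
    """True if the wheel's Python tag equals cp<major><minor> for the given version."""
    python_tag, _, _ = wheel_tags(wheel_url)
    return python_tag == f"cp{version_info[0]}{version_info[1]}"


# The wheel URL from the install command above.
DGL_WHEEL = (
    "https://data.dgl.ai/wheels/cu116/"
    "dgl-1.1.3%2Bcu116-cp38-cp38-manylinux1_x86_64.whl"
)

if not matches_interpreter(DGL_WHEEL):
    print("This wheel targets a different Python version:", wheel_tags(DGL_WHEEL)[0])
```

If the check fails, the DGL installation page linked above lists wheels for other Python and CUDA combinations.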

To facilitate fast development on top of our model, we provide here an overview of our implementation of CAST.

The model can be installed independently and used as a stand-alone package.

> pip install -e .

# import the model

> from cast_models.cast import cast_small

> model = cast_small()
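As a quick sanity check after constructing the model, one can count its parameters. The sketch below assumes that `cast_small()` returns a `torch.nn.Module` (this README does not state it explicitly); `count_parameters` is a hypothetical helper, not part of `cast_models`.

```python
def count_parameters(model):
    """Count total and trainable parameters of a torch.nn.Module-like object.

    Only relies on model.parameters(), p.numel() and p.requires_grad,
    which torch.nn.Module and torch.nn.Parameter provide.
    """
    total = 0
    trainable = 0
    for p in model.parameters():
        n = p.numel()
        total += n
        if p.requires_grad:
            trainable += n
    return {"total": total, "trainable": trainable}


# Usage (assumes the package is installed with `pip install -e .`):
#   from cast_models.cast import cast_small
#   model = cast_small()
#   print(count_parameters(model))
```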

See Preparing datasets.

See Getting started with self-supervised learning of CAST.

See Getting started with fully-supervised learning of CAST.

See Getting started with fine-tuning of CAST on Pascal Context and ADE20K.

See Getting started with unsupervised segmentation and fine-tuning of CAST on VOC.

See Getting started with part segmentation of CAST on PartImageNet.

See our Jupyter notebook.

We host the model weights on Hugging Face.

- Self-supervised models trained on ImageNet-1K. We use a smaller batch size (256) and fewer training epochs (100) than the original MoCo-v3 setup.

| | Acc. | pre-trained weights |
|---|---|---|
| ViT-S | 67.9 | link |
| CAST-S | 68.1 | link |
| CAST-SD | 69.1 | link |
| ViT-B | 72.5 | link |
| CAST-B | 72.4 | link |

- Self-supervised models trained on COCO. We evaluate semantic segmentation before and after fine-tuning on Pascal VOC.

| CAST-S | mIoU | pre-trained weights |
|---|---|---|
| before fine-tuning | 38.4 | link |
| after fine-tuning | 67.6 | link |

We pre-train CAST for 400 epochs on COCO. Segmentation before fine-tuning on VOC uses the checkpoint at the 149th epoch. Segmentation after fine-tuning on VOC uses the final checkpoint.
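For readers unfamiliar with the metric reported in these tables, the following is a minimal pure-Python sketch of mean intersection-over-union (mIoU) over flattened label maps. It is an illustration of the standard definition, not the evaluation code used in this repository; the function name `mean_iou` and the `ignore_index=255` default (a common convention for unlabeled pixels) are assumptions.

```python
def mean_iou(pred, target, num_classes, ignore_index=255):
    """Mean IoU over classes that appear in the prediction or the ground truth.

    pred, target: equal-length sequences of integer class labels (flattened pixels).
    Pixels whose ground-truth label equals ignore_index are skipped.
    """
    intersection = [0] * num_classes
    union = [0] * num_classes
    for p, t in zip(pred, target):
        if t == ignore_index:
            continue
        if p == t:
            # Correct pixel: counts once toward both intersection and union of class t.
            intersection[t] += 1
            union[t] += 1
        else:
            # Wrong pixel: enlarges the union of the true class and the predicted class.
            union[t] += 1
            if 0 <= p < num_classes:
                union[p] += 1
    # Average only over classes with a non-empty union.
    ious = [i / u for i, u in zip(intersection, union) if u > 0]
    return sum(ious) / len(ious) if ious else float("nan")


# Example: class 0 has IoU 1/2 and class 1 has IoU 2/3.
score = mean_iou([0, 0, 1, 1], [0, 1, 1, 1], num_classes=2)
```

Benchmark implementations typically accumulate the per-class intersections and unions over the whole dataset before dividing, rather than averaging per-image scores.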

- Supervised models trained on ImageNet-1K. We evaluate semantic segmentation by fine-tuning on ADE20K and Pascal Context.

| | ImageNet-1K (Acc.) | ADE20K (mIoU) | Pascal Context (mIoU) | pre-trained weights |
|---|---|---|---|---|
| CAST-S | 80.4 | 43.1 | 49.1 | link |

We also provide a compressed zip file, which includes most of the trained model weights, except those fine-tuned on ADE20K and Pascal Context.

If you find this code useful for your research, please consider citing our paper "Learning Hierarchical Image Segmentation For Recognition and By Recognition".

@inproceedings{ke2023cast,

title={Learning Hierarchical Image Segmentation For Recognition and By Recognition},

author={Ke, Tsung-Wei and Mo, Sangwoo and Yu, Stella X.},

booktitle={The Twelfth International Conference on Learning Representations},

year={2024}

}

CAST is released under the MIT License (refer to the LICENSE file for details).

This code release is based on MoCo-v3, DeiT, SegFormer, and SPML.