Exercism Go Track

Exercism exercises in Go

Issues

We welcome issues filed at https://github.com/exercism/go/issues for problems of any size. Feel free to report typographical errors or poor wording. We are most interested in improving the quality of the test suites. You can greatly help us improve the quality of the exercises by filing reports of invalid solutions that pass tests or of valid solutions that fail tests.

Development setup

Beyond filing issues, if you would like to contribute directly to the Go code in the Exercism Go track, you should follow some standard Go development practices. You should have a recent version of Go installed, ideally either the current release, the previous release, or tip.

You will need a GitHub account, and you will need to fork exercism/go to your account.

See GitHub Help if you are unfamiliar with the process.

Clone your fork with the command: git clone https://github.com/<you>/go.

Test your clone by changing to the go directory and running bin/fetch-golangci-lint and then bin/test-without-stubs. You should see tests pass for all exercises.

Note that unlike most other Go code, it is not necessary to clone this to your GOPATH. This is because this repo only imports from the standard library and isn't expected to be imported by other packages.

Your Go code should be formatted using the gofmt tool.

There is a misspelling tool. You can install it and run it occasionally to find low-hanging typos (see #570). It is not part of CI because it can give false positives.

Contributing Guide

Please be familiar with the contributing guide in the docs repository. This describes some great ways to get involved. In particular, please read the Pull Request Guidelines before opening a pull request.

Exercism Go style

Let's walk through an example, non-existent exercise, which we'll call fizzbuzz, to see what could be included in its implementation.

Exercise configuration

An exercise is configured via an entry in the exercises array in the config.json file. If fizzbuzz is an optional exercise, it would have an entry below the core exercises that might look like:

{
  "slug": "fizzbuzz",
  "uuid": "mumblety-peg-whatever",
  "core": false,
  "unlocked_by": "two-fer",
  "difficulty": 1,
  "topics": ["conditionals"]
}

See Exercise Configuration for more info.

Exercise files: Overview

For any exercise you may see a number of files present in a directory under exercises/:

~/go/exercises/fizzbuzz
$ tree -a
.
├── cases_test.go
├── example.go
├── fizzbuzz.go
├── fizzbuzz_test.go
├── .meta
│   ├── description.md
│   ├── gen.go
│   ├── hints.md
│   └── metadata.yml
└── README.md

This list of files can vary across exercises.

Not all exercises use all of these files.

Exercises take their test data and README text from the Exercism problem-specifications repository.

This repository collects common information for all exercises across all tracks.

However, should track-specific documentation need to be included with the exercise, files in an exercise's .meta/ directory can be used to override or augment the exercise's README.

Let's briefly describe each file:

- cases_test.go - Contains generated test cases, using test data sourced from the problem-specifications repository. Present only in some exercises. Automatically generated by .meta/gen.go.

- example.go - An example solution for the exercise, used to verify the test suite. Ignored by the exercism fetch command. See also ignored files.

- fizzbuzz.go - A stub file, present in some early exercises to give users a starting point.

- fizzbuzz_test.go - The main test file for the exercise.

- .meta/ - Contains files not to be included when a user fetches an exercise. See also ignored files.

- .meta/description.md - Used to generate a track-specific description of the exercise in the exercise's README.

- .meta/gen.go - Generates cases_test.go when present. See also synchronizing exercises with problem specifications.

- .meta/hints.md - Used to add track-specific information to the README, in addition to the generic description from problem-specifications.

- .meta/metadata.yml - Track-specific exercise metadata. Overrides the exercise metadata from the problem-specifications repository.

In some exercises there can be extra files, for instance the series exercise contains extra test files.

Ignored files

When a user fetches an exercise, they do not need every file in the exercise directory, for instance the example.go files that contain an example solution, or the gen.go files used to generate an exercise's test cases. Certain files and directories are therefore ignored when an exercise is fetched. These are:

- The .meta directory and anything within it.

- Any file that matches the ignore_pattern defined in the config.json file. This currently matches any filename that contains the word example, unless it is followed by the word test, with any number of characters in between.
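The filename rule described above can be approximated in a few lines of Go. This is only a sketch of the behaviour stated in the prose, not the actual ignore_pattern from config.json; the helper name isIgnored is hypothetical, and the .meta directory rule is left out.

```go
package main

import (
	"fmt"
	"strings"
)

// isIgnored approximates the rule described in the text: a file name is
// ignored when it contains "example", unless "test" appears somewhere
// after it. Hypothetical helper, not the real ignore_pattern.
func isIgnored(name string) bool {
	// Checking the last occurrence is enough: if any "example" has no
	// "test" after it, the last one has none after it either.
	i := strings.LastIndex(name, "example")
	if i < 0 {
		return false
	}
	return !strings.Contains(name[i+len("example"):], "test")
}

func main() {
	names := []string{"example.go", "example_gen.go", "example_test.go", "fizzbuzz.go"}
	for _, name := range names {
		fmt.Printf("%-16s ignored: %t\n", name, isIgnored(name))
	}
}
```

Under these assumptions example.go and example_gen.go are ignored, while example_test.go and fizzbuzz.go are fetched.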

Example solutions

example.go is a reference solution.

It is a valid solution that the CI (continuous integration) service can run tests against.

Files with "example" in the file name are skipped by the exercism fetch command.

Because of this, there is less need for this code to be a model of style, expression and readability, or to use the best algorithm.

Examples can be plain, simple, concise, even naïve, as long as they are correct.

Stub files

Stub files, such as leap.go, are a starting point for solutions.

Not all exercises need to have a stub file, only exercises early in the syllabus.

By convention, the stub file for an exercise with slug exercise-slug must be named exercise_slug.go. This is because CI needs to delete stub files to avoid conflicting definitions.
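As a small illustration of the naming convention, the mapping from slug to stub file name could be written as follows. The function stubFileName is hypothetical and is not part of the track's tooling.

```go
package main

import (
	"fmt"
	"strings"
)

// stubFileName returns the conventional stub file name for an exercise
// slug: hyphens become underscores and ".go" is appended.
// Hypothetical helper for illustration only.
func stubFileName(slug string) string {
	return strings.ReplaceAll(slug, "-", "_") + ".go"
}

func main() {
	fmt.Println(stubFileName("two-fer")) // two_fer.go
	fmt.Println(stubFileName("leap"))    // leap.go
}
```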

Tests

The test file is fetched for the solver and deserves attention for consistency and appearance.

The leap exercise makes use of data-driven tests.

Test cases are defined as data, then a test function iterates over the data.

In this exercise, as they are generated, the test cases are defined in the cases_test.go file.

The test function that iterates over this data is defined in the leap_test.go file.

The cases_test.go file is generated by the generators from information found in problem-specifications.

To add additional test cases (e.g. test cases that only make sense for Go) add the test cases to <exercise>_test.go.

An example of using additional test cases can be found in the exercise two-bucket.

Identifiers within the test function appear in actual-expected order as described at Useful Test Failures. Here the identifier observed is used instead of actual. That's fine. More common are the words got and want. They are clear and short. Note that Useful Test Failures is part of Code Review Comments. Really we like most of the advice on that page.

In Go we generally have all tests enabled and do not ask the solver to edit the test program, for example to enable progressive tests.

t.Fatalf(), as seen in the leap_test.go file, will stop tests at the first failure encountered, so the solver is not faced with too many failures at once.
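To make the data-driven shape concrete, here is a minimal, self-contained sketch. It is not the content of leap_test.go: the cases and the stand-in IsLeapYear are written for illustration, and the loop lives in an ordinary function (firstFailure, a hypothetical name) so it can run as a plain program. In a real test file the loop would sit inside a TestXxx function and report the mismatch with t.Fatalf.

```go
package main

import "fmt"

// IsLeapYear is a stand-in implementation so the sketch is self-contained.
func IsLeapYear(year int) bool {
	return year%4 == 0 && (year%100 != 0 || year%400 == 0)
}

// testCases plays the role of the generated data in cases_test.go.
var testCases = []struct {
	description string
	year        int
	want        bool
}{
	{"year not divisible by 4", 2015, false},
	{"year divisible by 4, not by 100", 1996, true},
	{"year divisible by 100, not by 400", 2100, false},
	{"year divisible by 400", 2000, true},
}

// firstFailure iterates over the data and returns the first mismatch,
// mirroring how t.Fatalf stops a test at the first failure.
// The message is in got-then-want order.
func firstFailure() error {
	for _, tc := range testCases {
		if got := IsLeapYear(tc.year); got != tc.want {
			return fmt.Errorf("IsLeapYear(%d) = %t, want %t (%s)",
				tc.year, got, tc.want, tc.description)
		}
	}
	return nil
}

func main() {
	if err := firstFailure(); err != nil {
		fmt.Println("FAIL:", err)
		return
	}
	fmt.Println("PASS")
}
```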

Testable examples

Some exercises can contain Example tests that document the exercise API. These examples are run alongside the standard exercise tests and will verify that the exercise API is working as expected. They are not required by all exercises and are not intended to replace the data-driven tests. They are most useful for providing examples of how an exercise's API is used. Have a look at the example tests in the clock exercise to see them in action.
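The sketch below shows the general shape of an Example function. The Clock type and New constructor here are simplified, hypothetical stand-ins and not the clock exercise's actual API. In an exercise, the ExampleNew function would live in a _test.go file, where go test runs it and compares its standard output with the Output comment; here main simply calls it so the sketch runs on its own.

```go
package main

import "fmt"

// Clock is a hypothetical, simplified type standing in for an exercise API.
type Clock struct {
	hour, minute int
}

// New returns a Clock. Normalising out-of-range values is omitted here.
func New(hour, minute int) Clock {
	return Clock{hour: hour, minute: minute}
}

// String formats the clock as HH:MM.
func (c Clock) String() string {
	return fmt.Sprintf("%02d:%02d", c.hour, c.minute)
}

// ExampleNew documents how New and String are used. Placed in a _test.go
// file, go test would check the printed text against the Output comment.
func ExampleNew() {
	c := New(10, 30)
	fmt.Println(c.String())
	// Output: 10:30
}

func main() {
	ExampleNew()
}
```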

Errors

We like errors in Go. It's not idiomatic Go to ignore invalid data or have undefined behavior. Sometimes our Go tests require an error return where other language tracks don't.
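As an illustration of that preference, a solution function can return an error alongside its result instead of silently accepting invalid input. CollatzSteps below is a hypothetical function chosen for this sketch, not a signature taken from any exercise.

```go
package main

import (
	"errors"
	"fmt"
)

// CollatzSteps counts the steps needed to reach 1 under the Collatz rules.
// It returns an error for non-positive input rather than ignoring it.
// Hypothetical function for illustration.
func CollatzSteps(n int) (int, error) {
	if n <= 0 {
		return 0, errors.New("input must be a positive integer")
	}
	steps := 0
	for n != 1 {
		if n%2 == 0 {
			n /= 2
		} else {
			n = 3*n + 1
		}
		steps++
	}
	return steps, nil
}

func main() {
	for _, n := range []int{12, 0} {
		steps, err := CollatzSteps(n)
		if err != nil {
			fmt.Printf("CollatzSteps(%d): error: %v\n", n, err)
			continue
		}
		fmt.Printf("CollatzSteps(%d) = %d\n", n, steps)
	}
}
```

A test for such a function would then check both the value for valid input and that an error is returned for invalid input.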

Benchmarks

In most test files there will also be benchmark tests, as can be seen at the end of the leap_test.go file. In Go, benchmarking is a first-class citizen of the testing package. We throw in benchmarks because they're interesting, and because it is idiomatic in Go to think about performance. There is no critical use for these though. Usually they will just bench the combined time to run over all the test data rather than attempt precise timings on single function calls. They are useful if they let the solver try a change and see a performance effect.
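A benchmark of this kind might look like the sketch below, which times one pass over all the data per iteration. The data and the stand-in IsLeapYear are assumptions made for illustration, not the contents of leap_test.go. Normally the benchmark function would sit in a _test.go file and be run with go test -bench; testing.Benchmark is used here only so the sketch runs as a standalone program (via the hypothetical helper runBenchmark).

```go
package main

import (
	"fmt"
	"testing"
)

// IsLeapYear is a stand-in implementation so the sketch is self-contained.
func IsLeapYear(year int) bool {
	return year%4 == 0 && (year%100 != 0 || year%400 == 0)
}

// years stands in for the exercise's test data.
var years = []int{2015, 1996, 2100, 2000}

// sink keeps the result live so the call is not optimised away.
var sink bool

// BenchmarkIsLeapYear measures the combined time to run over all the
// test data, rather than timing single calls precisely.
func BenchmarkIsLeapYear(b *testing.B) {
	for i := 0; i < b.N; i++ {
		for _, y := range years {
			sink = IsLeapYear(y)
		}
	}
}

// runBenchmark runs the benchmark outside of "go test".
func runBenchmark() testing.BenchmarkResult {
	return testing.Benchmark(BenchmarkIsLeapYear)
}

func main() {
	// Prints the iteration count and time per iteration; the numbers
	// depend on the machine.
	fmt.Println(runBenchmark())
}
```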

Synchronizing exercises with problem specifications

Some problems that are implemented in multiple tracks use the same inputs and outputs to define the test suites. Where the problem-specifications repository contains a canonical-data.json file with these inputs and outputs, we can generate the test cases programmatically. The problem-specifications repo also defines the instructions for the exercises, which are also shared across tracks and must also be synchronized.

Test structure

See the gen.go file in the leap exercise for an example of how this can be done.

Test case generators are named gen.go and are kept in a special .meta directory within each exercise that makes use of a test cases generator. This .meta directory will be ignored when a user fetches an exercise.

Whenever the shared JSON data changes, the test cases will need to be regenerated. The generator will first look for a local copy of the problem-specifications repository. If there isn't one, it will attempt to get the relevant JSON data for the exercise from the problem-specifications repository on GitHub. The generator uses the GitHub API to find some information about exercises when it cannot find a local copy of problem-specifications. This can cause throttling issues when working with a large number of exercises. We therefore recommend using a local copy of the repository when possible (remember to keep it current 😄).

To use a local copy of the problem-specifications repository, make sure that it has been cloned into the same parent-directory as the go repository.

$ tree -L 1 .
.
├── problem-specifications
└── go

Adding a test generator to an exercise

For some exercises, a test generator is used to generate the cases_test.go file with the test cases based on information from problem-specifications.

To add a new exercise generator to an exercise the following steps are needed:

- Create the file gen.go in the directory .meta of the exercise

- Add the following template to gen.go:

package main

import (
	"log"
	"text/template"

	"../../../../gen"
)

func main() {
	t, err := template.New("").Parse(tmpl)
	if err != nil {
		log.Fatal(err)
	}
	var j = map[string]interface{}{
		"property_1": &[]Property1Case{},
		"property_2": &[]Property2Case{},
	}
	if err := gen.Gen("<exercise-name>", j, t); err != nil {
		log.Fatal(err)
	}
}

- Insert the name of the exercise to the call of gen.Gen

- Add all values for the field property in canonical-data.json to the map j. canonical-data.json can be found at problem-specifications/exercises/<exercise-name>

- Create the needed structs for storing the test cases from canonical-data.json (you can for example use JSON-to-Go to convert the JSON to a struct)

NOTE: In some cases, the struct of the data in the input/expected fields is not the same for all test cases of one property. In those situations, an interface{} has to be used to represent the values for these fields. These interface{} values then need to be handled by the test generator. A common way to handle these cases is to create methods on the test case structs that perform type assertions on the interface{} values and return something more meaningful. These methods can then be referenced/called in the tmpl template variable. Examples of this can be found in the exercises forth or bowling.

- Add the variable tmpl to gen.go. This template will be used to create the cases_test.go file.

Example:

var tmpl = `package <package of exercise>

{{.Header}}

var testCases = []struct {
	description string
	input       int
	expected    int
}{ {{range .J.<property>}}
	{
		description: {{printf "%q" .Description}},
		input:       {{printf "%d" .Score}},
		expected:    {{printf "%d" .Score}},
	},{{end}}
}
`

- Synchronize the test case using the exercise generator (as described in Synchronizing tests and instructions)

- Check the validity of cases_test.go

- Use the generated test cases in the <exercise>_test.go file

- Check if .meta/example.go passes all tests
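The NOTE in the steps above mentions handling interface{} values with methods that perform type assertions. A minimal sketch of that idea follows. The struct, its methods (HasError, Value), the decode helper, and the sample JSON are all hypothetical and are not taken from the forth or bowling generators. It assumes an expected field that is either a number or an object with an error key.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// testCase is a hypothetical case struct whose Expected field varies in
// shape between cases, so it is decoded into an interface{}.
type testCase struct {
	Description string      `json:"description"`
	Expected    interface{} `json:"expected"`
}

// HasError reports whether Expected is an object with an "error" key.
func (c testCase) HasError() bool {
	m, ok := c.Expected.(map[string]interface{})
	if !ok {
		return false
	}
	_, ok = m["error"]
	return ok
}

// Value returns Expected as an int when it is a JSON number.
// encoding/json decodes numbers into float64 for interface{} targets.
func (c testCase) Value() int {
	f, ok := c.Expected.(float64)
	if !ok {
		return 0
	}
	return int(f)
}

// sample is made-up data in the spirit of canonical-data.json.
const sample = `[
  {"description": "valid input", "expected": 9},
  {"description": "invalid input", "expected": {"error": "only positive integers are allowed"}}
]`

// decode unmarshals the JSON cases.
func decode(data string) ([]testCase, error) {
	var cases []testCase
	err := json.Unmarshal([]byte(data), &cases)
	return cases, err
}

func main() {
	cases, err := decode(sample)
	if err != nil {
		fmt.Println("decode failed:", err)
		return
	}
	for _, c := range cases {
		fmt.Printf("%-14s hasError=%-5t value=%d\n", c.Description, c.HasError(), c.Value())
	}
}
```

Because the methods have value receivers and take no arguments, a text/template template can call them directly on each case, for example as {{.HasError}} or {{.Value}}.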

Synchronizing tests and instructions

To keep track of which tests are implemented by the exercise the file .meta/tests.toml is used by configlet.

To synchronize the exercise with problem-specifications and to regenerate the tests, navigate into the go directory and perform the following steps:

- Synchronize your exercise with exercism/problem-specifications using configlet:

$ configlet sync --update -e <exercise>

configlet synchronizes the following parts, if an update is needed:

- docs: .docs/instructions.md, .docs/introduction.md

- metadata: .meta/config.json

- tests: .meta/tests.toml

- filepaths: .meta/config.json

For further instructions check out configlet.

For further instructions check out configlet.

- Run the test case generator to update <exercise>/cases_test.go:

$ GO111MODULE=off go run exercises/practice/<exercise>/.meta/gen.go

NOTE: If you see the error json: cannot unmarshal object into Go value of type []gen.Commit when running the generator you probably have been rate limited by GitHub.

Try providing a GitHub access token with the flag -github_token="<Token>".

Using the token will result in a higher rate limit.

The token does not need any specific scopes as it is only used to fetch information about commits.

You should see that some/all of the above files have changed. Commit the changes.

Synchronizing all exercises with generators

$ ./bin/run-generators <GitHub Access Token>

NOTE: If you see the error json: cannot unmarshal object into Go value of type []gen.Commit when running the generator you probably have been rate limited by GitHub.

Make sure you provided the GitHub access token as first argument to the script as shown above.

Using the token will result in a higher rate limit.

The token does not need any specific scopes as it is only used to fetch information about commits.

Managing the Go version

For easy management of the Go version in the go.mod files of all exercises, we can use gomod-sync.

This is a tool made in Go that can be seen in the gomod-sync/ folder.

To update all go.mod files according to the config file (gomod-sync/config.json) run:

$ cd gomod-sync && go run main.go update

To check all exercise go.mod files specify the correct Go version, run:

$ cd gomod-sync && go run main.go check

Pull requests

Pull requests are welcome. You forked, cloned, coded and tested and you have something good? Awesome! Use git to add, commit, and push to your repository. Check out your repository on the web now. You should see your commit and the invitation to submit a pull request!

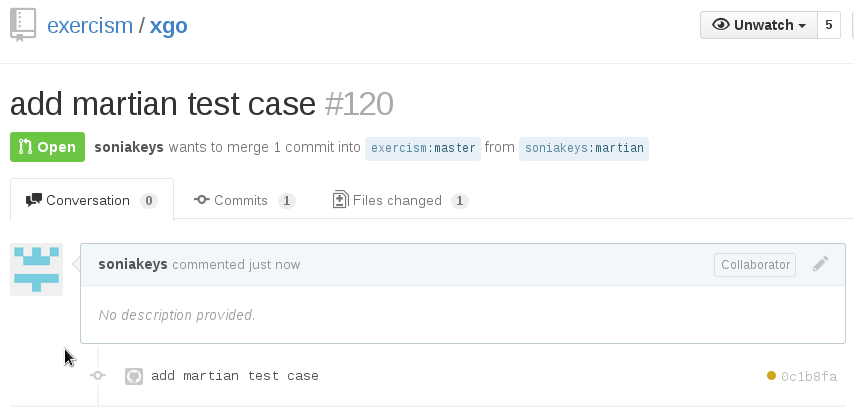

Click on that big green button. You have a chance to add more explanation to your pull request here, then send it. Looking at the exercism/go repository now instead of your own, you see this.

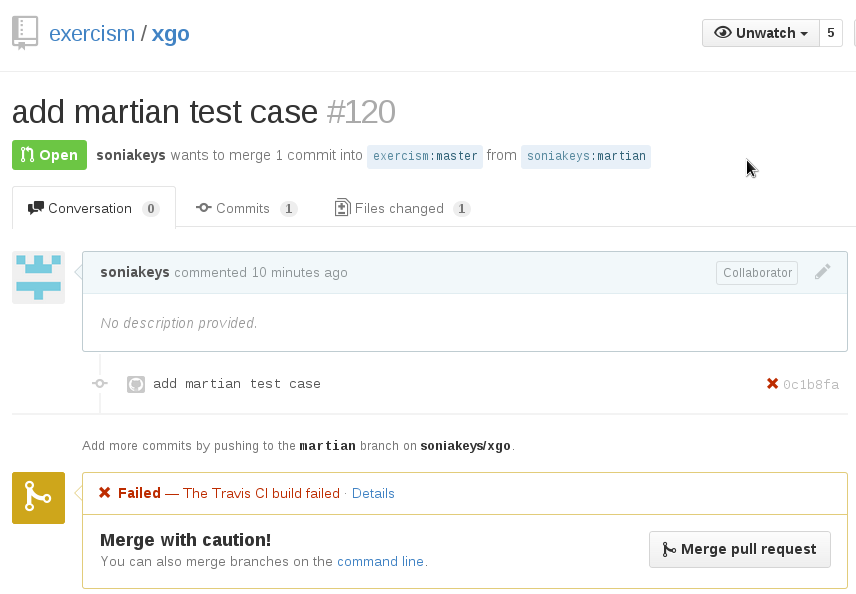

That inconspicuous orange dot is important! Hover over it (no, not on this image, on a real page) and you can see it's indicating that a CI build is in progress. After a few minutes (usually) that dot will turn green indicating that tests passed. If there's a problem, it comes up red:

This means you've still got work to do. Click on "details" to go to the CI build details. Look over the build log for clues. Usually error messages will be helpful and you can correct the problem.

Direction

Directions are unlimited. This code is fresh and evolving. Explore the existing code and you will see some new directions being tried. Your fresh ideas and contributions are welcome. ✨

Go icon

The Go logo was designed by Renée French, and has been released under the Creative Commons Attribution 3.0 license.