
Machine Learning Model CI is a one-stop MLOps platform for the cloud that aims to solve the "last mile" problem between model training and model serving. We implement a highly automated pipeline between trained models and online machine learning applications.

We offer the following features. Users can either 1) register models with our system and use the automated pipeline, or 2) use each feature individually.

- Housekeeper provides fine-grained management of model (service) registration, deletion, update, and selection.

- Converter converts models to serialized and optimized formats so that they can be deployed to the cloud. It supports TensorFlow SavedModel, ONNX, TorchScript, and TensorRT.

- Profiler simulates real service behavior by invoking a gRPC client and a model service, and provides a detailed report on model runtime performance (e.g., P99 latency and throughput) in a production environment.

- Dispatcher launches a serving system to load a model in a containerized manner and dispatches the MLaaS to a device. It supports TensorFlow Serving, Triton Inference Server, ONNX Runtime, and web frameworks (e.g., FastAPI).

- Controller receives data from the monitor and node exporter, and controls the whole workflow of our system.
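To make the Profiler metrics concrete, here is a minimal sketch of how P99 latency and throughput can be derived from a list of per-request latencies. It is not MLModelCI's profiler code: the function name `summarize_latencies`, the nearest-rank percentile method, and the assumption that requests are issued one after another are all choices made for this illustration.

```python
from typing import Dict, Sequence


def summarize_latencies(latencies_s: Sequence[float], batch_size: int = 1) -> Dict[str, float]:
    """Summarize per-request latencies (in seconds) from sequential requests."""
    if not latencies_s:
        raise ValueError("at least one latency sample is required")
    ordered = sorted(latencies_s)
    # Nearest-rank P99: ceil(0.99 * n), computed with integers to avoid float rounding.
    rank = -(-99 * len(ordered) // 100)
    total_time = sum(ordered)
    return {
        "p99_latency_s": ordered[rank - 1],
        "mean_latency_s": total_time / len(ordered),
        # Assumption: requests run back to back, so wall time is the sum of latencies.
        "throughput_per_s": len(ordered) * batch_size / total_time,
    }


# Synthetic samples: 98 fast requests and two slow ones.
samples = [0.010] * 98 + [0.020, 0.050]
report = summarize_latencies(samples)
print(report)
```

With concurrent clients, throughput would instead be measured against elapsed wall-clock time rather than the sum of latencies.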

Several features are in beta testing and will be available in the next release soon. You are welcome to discuss them with us in the issues.

- Automatic model quantization and pruning.

- Model visualization and fine-tuning.

The system is currently under rapid iterative development, so some APIs or CLI commands may be broken. Please see the Wiki for more details.

If you want to join our development team, please contact huaizhen001 @ e.ntu.edu.sg

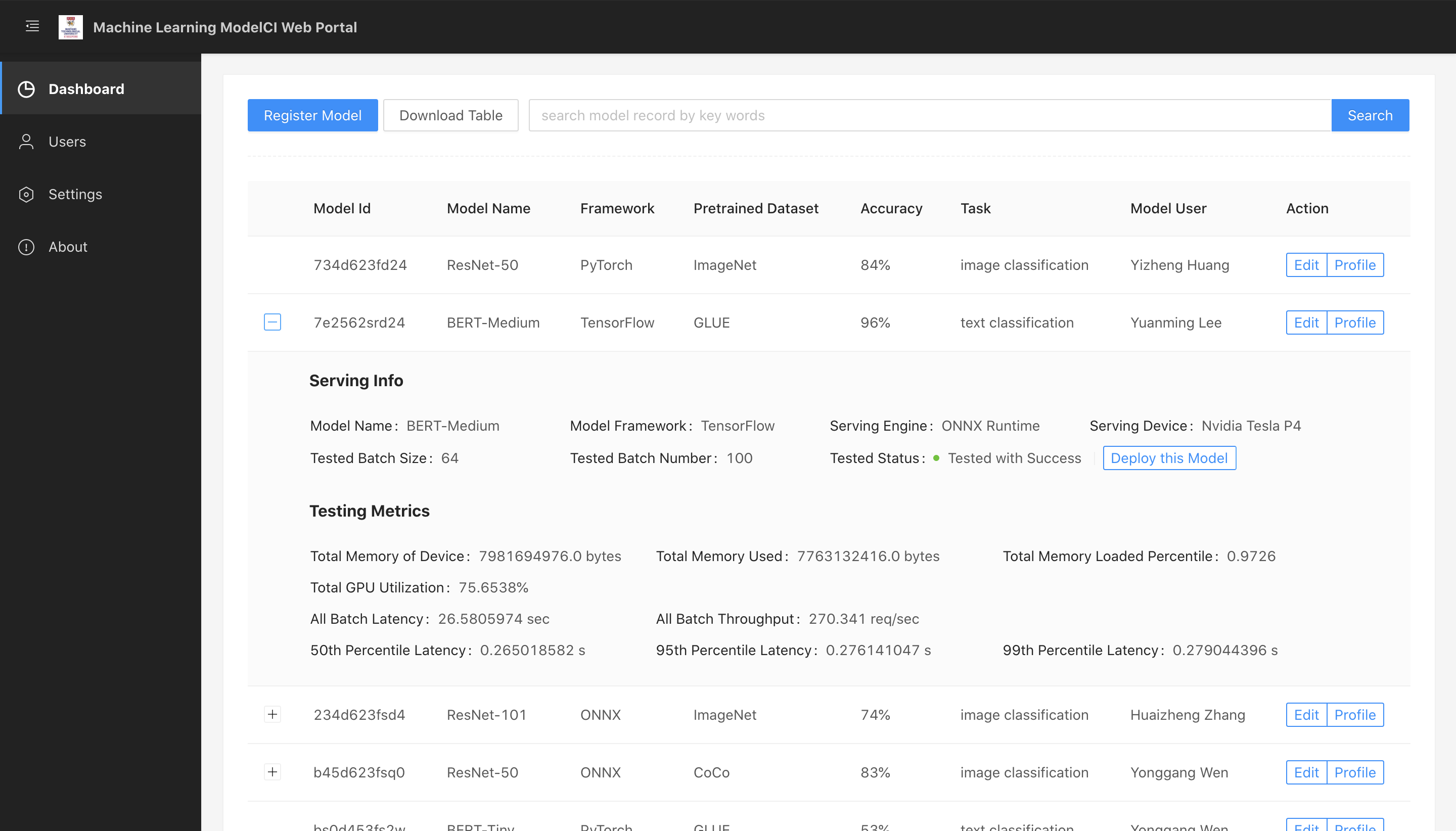

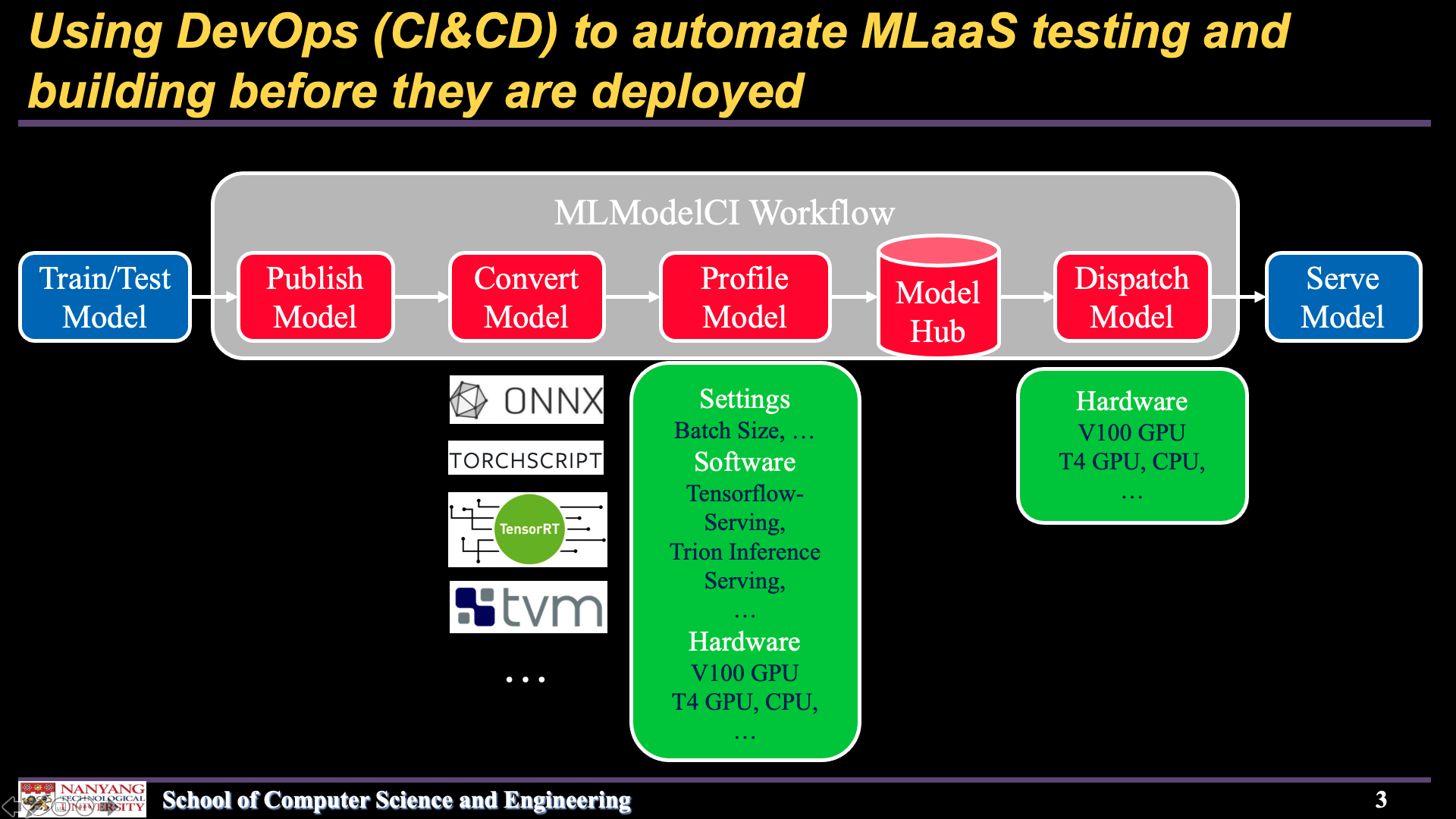

The below figures illusrates the web interface of our system and overall workflow.

| Web frontend | Workflow |

|---|---|

|

|

- A GNU/Linux environment (Ubuntu preferred)

- Docker

- Docker Compose (Optional, for installation via Docker)

- TVM and `tvm` Python module (Optional)

- TensorRT and Python API (Optional)

- Python >= 3.7
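Before installing, the required items in this list can be checked with a short script. The sketch below is an illustration only and is not shipped with MLModelCI; the function name `check_prerequisites` is hypothetical, and it assumes the Docker tools are exposed as `docker` and `docker-compose` on `PATH`. TVM and TensorRT are optional and are not checked.

```python
import shutil
import sys
from typing import Dict


def check_prerequisites() -> Dict[str, bool]:
    """Report which prerequisites appear to be present on this machine."""
    return {
        "linux": sys.platform.startswith("linux"),
        "python>=3.7": sys.version_info >= (3, 7),
        # shutil.which only confirms the executable is on PATH;
        # it does not verify that the Docker daemon is running.
        "docker": shutil.which("docker") is not None,
        "docker-compose (optional)": shutil.which("docker-compose") is not None,
    }


for name, ok in check_prerequisites().items():
    print(f"{name}: {'found' if ok else 'missing'}")
```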

Install modelci from GitHub:

    pip install git+https://github.com/cap-ntu/ML-Model-CI.git@master

Once it is installed, make sure the Docker daemon is running. You can then start the MLModelCI service on a leader server by:

    modelci service init

Or stop the service by:

    modelci service stop

Alternatively, to install via Docker on a CPU-only machine, pull the CPU image:

    docker pull mlmodelci/mlmodelci:cpu

Start basic services by Docker Compose:

    docker-compose -f ML-Model-CI/docker/docker-compose-cpu-modelhub.yml up -d

Stop the services by:

    docker-compose -f ML-Model-CI/docker/docker-compose-cpu-modelhub.yml down

For a machine with CUDA 10.2, pull the GPU image:

    docker pull mlmodelci/mlmodelci:cuda10.2-cudnn8

Start basic services by Docker Compose:

    docker-compose -f ML-Model-CI/docker/docker-compose-gpu-modelhub.yml up -d

Stop the services by:

    docker-compose -f ML-Model-CI/docker/docker-compose-gpu-modelhub.yml down

We provide three options for using MLModelCI: the CLI, running programmatically, and the web interface.

    # publish a model to the system
    modelci@modelci-PC:~$ modelci modelhub publish -f example/resnet50.yml
    {'data': {'id': ['60746e4bc3d5598e0e7a786d']}, 'status': True}

Please refer to the Wiki for more CLI options.
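When scripting around the CLI, the printed response can be parsed to recover the ID of the published model. The following is a minimal sketch, assuming the command prints a Python dict literal exactly as in the sample output above; the helper name `extract_model_ids` is hypothetical and not part of MLModelCI.

```python
import ast
from typing import List


def extract_model_ids(cli_output: str) -> List[str]:
    """Parse the printed response and return the published model IDs."""
    # literal_eval accepts only Python literals, so it does not execute code.
    response = ast.literal_eval(cli_output.strip())
    if not response.get("status"):
        raise RuntimeError(f"publish failed: {response!r}")
    return list(response["data"]["id"])


# Sample response copied from the CLI example.
output = "{'data': {'id': ['60746e4bc3d5598e0e7a786d']}, 'status': True}"
print(extract_model_ids(output))
```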

    # utilize the convert function
    from modelci.hub.converter import convert
    from modelci.types.bo import IOShape

    # the system can trigger the function automatically
    # users can also call the function individually
    convert(
        '<torch model>',
        src_framework='pytorch',
        dst_framework='onnx',
        save_path='<path to export onnx model>',
        inputs=[IOShape([-1, 3, 224, 224], dtype=float)],
        outputs=[IOShape([-1, 1000], dtype=float)],
        opset=11)

If you have installed MLModelCI via pip, you should start the frontend service manually.

    # Navigate to the frontend folder
    cd frontend
    # Install dependencies
    yarn install
    # Start the frontend
    yarn start

The frontend will start on http://localhost:3333
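Returning to the `convert` call shown earlier: its input and output shapes use `-1` in the first position. Assuming `-1` marks a variable-size dimension (typically the batch axis), an ONNX export needs to know which axes are dynamic. The sketch below is not MLModelCI's implementation; the helper name `dynamic_axes_from_shapes` and the tensor names `input` and `output` are hypothetical. It builds a mapping in the form accepted by the `dynamic_axes` argument of `torch.onnx.export`.

```python
from typing import Dict, Sequence


def dynamic_axes_from_shapes(named_shapes: Dict[str, Sequence[int]]) -> Dict[str, Dict[int, str]]:
    """Map each tensor name to its variable-size axes (those marked -1)."""
    axes: Dict[str, Dict[int, str]] = {}
    for name, shape in named_shapes.items():
        # Give every dynamic axis a readable symbolic name.
        dynamic = {i: f"{name}_dim{i}" for i, dim in enumerate(shape) if dim == -1}
        if dynamic:
            axes[name] = dynamic
    return axes


# Hypothetical tensor names; shapes copied from the convert example.
shapes = {"input": [-1, 3, 224, 224], "output": [-1, 1000]}
print(dynamic_axes_from_shapes(shapes))
```

Tensors with fully fixed shapes are omitted from the result, so they are exported with static dimensions.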

- Publish an image classification model

- Publish an object detection model

- Model performance analysis

- Model dispatch

- Model visualization & edit

After the Quick Start, we provide detailed tutorials for users to understand our system.

- Register a Model in ModelHub

- Manage Models with Housekeeper within a Team

- Convert a Model to Optimized Formats

- Profile a Model for Cost-Effective MLaaS

- Dispatch a Model as a Cloud Service

- Model Visualization and Editing within a Team

MLModelCI welcomes your contributions! Please refer to the contributing guide to get started.

If you use MLModelCI in your work or use any functions published in MLModelCI, we would appreciate it if you could cite:

    @inproceedings{10.1145/3394171.3414535,
      author = {Zhang, Huaizheng and Li, Yuanming and Huang, Yizheng and Wen, Yonggang and Yin, Jianxiong and Guan, Kyle},
      title = {MLModelCI: An Automatic Cloud Platform for Efficient MLaaS},
      year = {2020},
      url = {https://doi.org/10.1145/3394171.3414535},
      doi = {10.1145/3394171.3414535},
      booktitle = {Proceedings of the 28th ACM International Conference on Multimedia},
      pages = {4453–4456},
      numpages = {4},
      location = {Seattle, WA, USA},
      series = {MM '20}
    }

Please feel free to contact our team if you encounter any problems when using this source code. We are glad to update the code to meet your requirements where reasonable.

We are also open to collaboration based on this elementary system and research idea.

huaizhen001 AT e.ntu.edu.sg

Copyright 2021 Nanyang Technological University, Singapore

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.