Leaderboard • Data and Query • QuickStart • Customized Implementation • Result Uploading • Contact

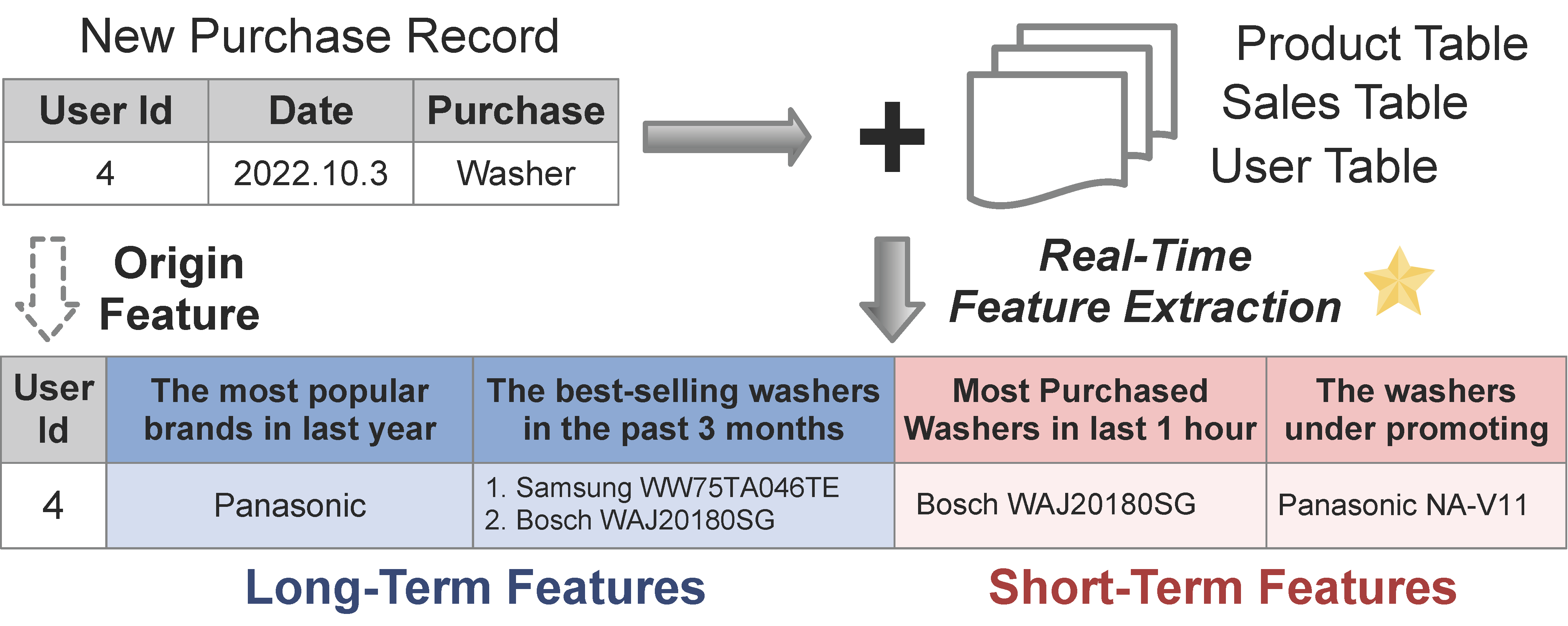

🧗 FEBench is a novel benchmark specifically designed for real-time feature extraction (RTFE) within the domain of online AI inference services. These services are rapidly being deployed in diverse applications, including finance, retail, manufacturing, energy, media, and more.

Despite the emergence of various RTFE systems capable of processing incoming data tuples using SQL-like languages, there remains a noticeable lack of studies on workload characteristics and benchmarks for RTFE.

In close collaboration with our industry partners, FEBench addresses this gap by providing selected datasets, query templates, and a comprehensive testing framework, which differ significantly from existing database workloads and benchmarks such as TPC-C.

👐 With FEBench, we preliminarily investigate the effectiveness of feature extraction systems together with advanced hardware, focusing on aspects such as overall latency, tail latency, and concurrency performance.

For further insights, please check out our detailed Technical Report and Standard Specification!

We deeply appreciate the invaluable effort contributed by our dedicated team of developers, supportive users, and esteemed industry partners.

This leaderboard showcases the performance of executing FEBench on various hardware configurations. Two performance metrics are adopted: (i) latency, defined by the "top percentiles" (TP50/90/99) commonly used in industry; (ii) throughput, measured in QPS, i.e., the number of requests processed per second.
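To make the two metrics concrete, the following is a minimal sketch of how TP50/90/99 and QPS could be derived from a file of per-request latencies. The nearest-rank percentile definition, the one-latency-per-line input format, and the helper names `percentile` and `qps` are assumptions for illustration only; the FEBench harness may compute these values differently.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for illustration; they are not part of FEBench.

# percentile P FILE
# Prints the nearest-rank P-th percentile (P is an integer, 1..100)
# of the numbers stored one per line in FILE.
percentile() {
  local p="$1" file="$2"
  sort -n "$file" | awk -v p="$p" '
    { v[NR] = $1 }
    END {
      if (NR == 0) exit 1
      rank = int((p * NR + 99) / 100)   # ceil(p * NR / 100) for integer p
      if (rank < 1) rank = 1
      print v[rank]
    }'
}

# qps REQUESTS SECONDS
# Prints the number of requests completed per second of wall-clock time.
qps() {
  awk -v n="$1" -v s="$2" 'BEGIN { printf "%.1f\n", n / s }'
}
```

With a hypothetical file `latencies.txt` holding one latency in milliseconds per line, `percentile 99 latencies.txt` would give the TP99 value, and `qps <requests> <seconds>` the throughput for a run.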

Leaderboard - Latency

| Contributor | Hardware | Average TP50/90/99 Performance (ms) | Submit Date |
|---|---|---|---|
| Tsinghua | (Dual Xeon, 512GB DDR4, CentOS 7) | 2.379/3.224/5.603 | 2023/2 |
| 4Paradigm | (Dual Xeon, 438GB DDR5, Rocky 9) | 10.697/12.676/15.039 | 2023/8 |

Leaderboard - Throughput

| Contributor | Hardware | Average Performance (ops/s) | Submit Date |
|---|---|---|---|
| Tsinghua | (Dual Xeon, 512GB DDR4, CentOS 7) | 4301 | 2023/2 |
| 4Paradigm | (Dual Xeon, 438GB DDR5, Rocky 9) | 1332 | 2023/8 |

Note that we use the performance results of OpenMLDB as the basis for ranking. To participate, please implement FEBench following our Standard Specification and upload your results following the Result Uploading guidelines.

We have conducted an analysis of both the schema of our datasets and the characteristics of the queries. Please refer to our detailed data schema analysis and query analysis for further information.

Download the datasets, replacing <folder_path> with the path you are using:

wget -r -np -R "index.html*" -nH --cut-dirs=3 http://43.138.115.238/download/febench/data/ -P <folder_path>

Note that the data files are in Parquet format.

The above server is located in China. If you experience a slow connection, you may try downloading from OneDrive HERE (this copy is compressed; please decompress it after downloading).
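After downloading, a quick sanity check can catch truncated or mis-saved files. The sketch below relies on the fact that a Parquet file begins and ends with the 4-byte magic string `PAR1`; the helper name `is_parquet` is hypothetical, and the check says nothing about whether the contents are otherwise valid.

```shell
#!/usr/bin/env bash
# Hypothetical helper for illustration; it is not part of FEBench.

# is_parquet FILE
# Succeeds if FILE starts and ends with the Parquet magic bytes "PAR1".
is_parquet() {
  [ "$(head -c 4 "$1" 2>/dev/null)" = "PAR1" ] &&
    [ "$(tail -c 4 "$1" 2>/dev/null)" = "PAR1" ]
}

# Example use (commented out): report suspicious files under a download folder.
# find "<folder_path>" -type f | while read -r f; do
#   is_parquet "$f" || echo "not a complete Parquet file: $f"
# done
```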

We have included a comprehensive testing procedure in a Docker image for you to try.

- Download the Docker image.

docker pull vegatablechicken/febench:0.5.0-lmem

- Run the image.

# Note that you need to download the data in advance and mount it into the container.

docker run -it -v <data path>:/work/febench/dataset <image id>

- Start the clusters. The address is localhost:7181 and the path is /work/openmldb.

/work/init.sh

- Update the repository.

cd /work/febench

git pull

- Enter the febench directory and initialize the FEBench tests.

cd /work/febench

export FEBENCH_ROOT=`pwd`

sed s#\<path\>#$FEBENCH_ROOT# ./OpenMLDB/conf/conf.properties.template > ./OpenMLDB/conf/conf.properties

sed s#\<path\>#$FEBENCH_ROOT# ./flink/conf/conf.properties.template > ./flink/conf/conf.properties

- Run the benchmark according to Step 5 in Customized Implementation.

- OpenMLDB

cd /work/febench/OpenMLDB

./compile_test.sh #compile test

./test.sh <dataset_ID> #run task <dataset_ID>

- Flink

cd /work/febench/flink

./compile_test.sh <dataset_ID> #compile and run test of task <dataset_ID>

./test.sh #rerun test of task <dataset_ID>
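The two `sed` commands in the initialization step replace the `<path>` placeholder in each template with the repository root. As a minimal sketch of that substitution, the hypothetical helper `render_template` below does the same thing with a quoted expression; the helper name and the `g` flag are assumptions, not part of FEBench.

```shell
#!/usr/bin/env bash
# Hypothetical helper for illustration; it is not part of FEBench.

# render_template ROOT TEMPLATE OUTPUT
# Writes TEMPLATE to OUTPUT with every literal "<path>" replaced by ROOT.
# The expression is quoted, so "<" and ">" are written without backslashes:
# inside quotes, GNU sed would read "\<" and "\>" as word boundaries, whereas
# in the unquoted commands above the shell strips the backslashes first.
# ROOT must not contain "#" or "&", which are special in this sed expression.
render_template() {
  sed "s#<path>#$1#g" "$2" > "$3"
}
```

For example, `render_template "$FEBENCH_ROOT" ./OpenMLDB/conf/conf.properties.template ./OpenMLDB/conf/conf.properties` would mirror the first command, assuming `FEBENCH_ROOT` is set as shown earlier.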

Here we show the approximate memory usage and execution time for each task in FEBench for your reference.

| Task | Q0 | Q1 | Q2 | Q3 | Q4 | Q5 |
|---|---|---|---|---|---|---|
| Memory (GB) | 20 | 5 | 5 | 120 | 50 | 500 |
| Exe. Time | 15min | 15min | 15min | 1hr | 4hrs | 4hrs |

Note that for larger datasets such as Q3, Q4, and Q5, please make sure enough memory is allocated. You can reduce memory usage by setting the number of table replicas to 1 with OPTIONS(replicanum=1), for example here.
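Before starting a long run, it may help to compare the machine's available memory against the approximate figures above. The sketch below hard-codes those figures; the helper names are hypothetical, and the default reading of `MemAvailable` from `/proc/meminfo` assumes a Linux host.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for illustration; they are not part of FEBench.

# required_mem_gb TASK
# Prints the approximate memory (GB) for task Q<TASK>, copied from the table.
required_mem_gb() {
  case "$1" in
    0)   echo 20 ;;
    1|2) echo 5 ;;
    3)   echo 120 ;;
    4)   echo 50 ;;
    5)   echo 500 ;;
    *)   echo "unknown task: $1" >&2; return 1 ;;
  esac
}

# has_enough_memory TASK [AVAILABLE_GB]
# Succeeds if AVAILABLE_GB covers the task's approximate requirement.
# AVAILABLE_GB defaults to MemAvailable from /proc/meminfo (Linux only).
has_enough_memory() {
  local need avail
  need=$(required_mem_gb "$1") || return 2
  avail="${2:-$(awk '/^MemAvailable:/ { print int($2 / 1024 / 1024) }' /proc/meminfo)}"
  [ "$avail" -ge "$need" ]
}
```

A call such as `has_enough_memory 3 || echo "consider OPTIONS(replicanum=1)"` would flag a machine that is likely too small for Q3.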

You can build and customize your cluster from scratch according to your needs. In this section you'll find: (1) System prerequisites, (2) AI features, (3) OpenMLDB evaluation, (4) Flink evaluation.

Before executing the benchmarking scripts, ensure that your environment meets the following version requirements, assuming you've already correctly configured the target FE system.

- Java JDK: Version 1.8.0 or higher

- Maven: 3.8.0 (recommended)
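These version requirements can be checked with a short script. The sketch below is one possible approach, assuming GNU `sort -V` is available and that `java -version` and `mvn -v` print their usual version banners; the helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for illustration; they are not part of FEBench.

# version_ge A B
# Succeeds if dotted version A is greater than or equal to B (uses GNU sort -V).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# java_version: prints the quoted version string from `java -version`,
# which writes its banner to stderr.
java_version() {
  java -version 2>&1 | awk -F '"' '/version/ { print $2; exit }'
}

# maven_version: prints the version from the "Apache Maven x.y.z" banner line.
maven_version() {
  mvn -v 2>/dev/null | awk '/^Apache Maven/ { print $3; exit }'
}

# Example use (commented out):
# version_ge "$(java_version)" 1.8.0  || echo "JDK 1.8.0 or higher is required"
# version_ge "$(maven_version)" 3.8.0 || echo "Maven 3.8.0 is recommended"
```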

In the features folder: Check out the features utilized in each of the 6 AI tasks, which are generated by the commercial automated ML tool HCML (the simplified version is available at https://github.com/4paradigm/AutoX ).

Step 1: Clone the repository

Step 2: Download and move the data files to the dataset directory of the repository

Step 3: Start the OpenMLDB cluster. For a quick start, you can use the Docker image, but note that the performance may not be optimal since all the components are deployed on a single physical machine.

Please be aware that the default values for `spark.driver.memory` and `spark.executor.memory` may not be enough for your needs. If you encounter a `java.lang.OutOfMemoryError: Java heap space` error, you may need to increase them by setting `spark.default.conf` in `conf/taskmanager.properties` and restarting the taskmanager, or by setting the Spark parameters through the CLI. You can refer to Spark Client Configuration.

spark.default.conf=spark.driver.memory=32g;spark.executor.memory=32g
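If you prefer to script this change, the sketch below shows one way to set or replace a `key=value` line in a properties file. The helper `set_property` is hypothetical and not part of OpenMLDB; it only edits the file, so the taskmanager still needs to be restarted afterwards.

```shell
#!/usr/bin/env bash
# Hypothetical helper for illustration; it is not part of OpenMLDB or FEBench.

# set_property FILE KEY VALUE
# Replaces the line starting with "KEY=" in FILE, or appends one if absent.
# All other lines are kept unchanged and in order.
set_property() {
  local file="$1" key="$2" value="$3" tmp
  tmp=$(mktemp) || return 1
  awk -v k="$key" -v v="$value" '
    index($0, k "=") == 1 { print k "=" v; found = 1; next }
    { print }
    END { if (!found) print k "=" v }
  ' "$file" > "$tmp" && cat "$tmp" > "$file"   # cat keeps the file permissions
  rm -f "$tmp"
}

# Example use (commented out):
# set_property conf/taskmanager.properties spark.default.conf \
#   'spark.driver.memory=32g;spark.executor.memory=32g'
```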

Step 4: Modify the conf.properties.template file to create your own conf.properties file in the ./OpenMLDB/conf directory, and update the configuration settings in the file accordingly, including the OpenMLDB cluster and the locations of data and queries.

4.1 Modify the locations of the data and queries:

export FEBENCH_ROOT=`pwd`

# better to add file://

sed s#\<path\>#file://$FEBENCH_ROOT# ./OpenMLDB/conf/conf.properties.template > ./OpenMLDB/conf/conf.properties

sed s#\<path\>#$FEBENCH_ROOT# ./flink/conf/conf.properties.template > ./flink/conf/conf.properties

4.2 Modify the OpenMLDB cluster in conf.properties to your own:

# ./OpenMLDB/conf/conf.properties

ZK_CLUSTER=127.0.0.1:7181

ZK_PATH=/openmldb

Step 5: Compile and run the test

cd OpenMLDB

./compile_test.sh

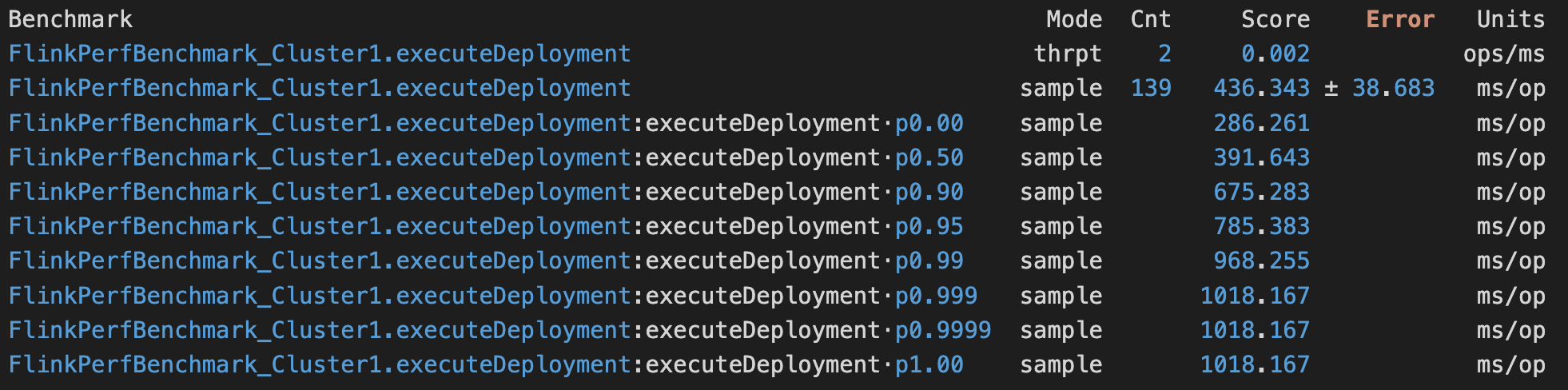

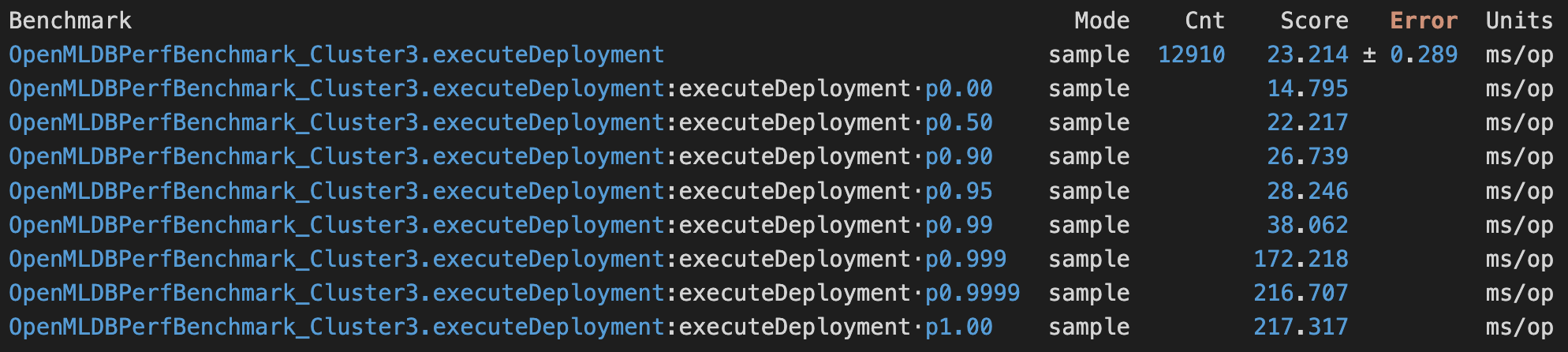

./test.sh <dataset_ID>Example test result looks as follows

Repeat Steps 1-5 of the OpenMLDB Evaluation, with the following additional steps:

- In Step 3, additionally start a disk-based storage engine (e.g., RocksDB in MySQL) to persist the Flink table data. Note that (1) the listening port is set to 3306 by default and (2) you need to preload all the secondary tables into the storage engine.

- In Step 5, supply <dataset_ID> when running the compile_test.sh script, and no parameter when running test.sh, e.g.,

./compile_test.sh 3 # compile and run the test of task3

./test.sh # rerun the test of task3

- You will need to rerun compile_test.sh if you modify the file conf.properties. This is not required for OpenMLDB Evaluation.

The benchmark results are stored at OpenMLDB/logs or flink/logs. If you'd like to share your results, please feel free to send us an email. Please tell us your institution (optional), system configurations, and attach the result file to the email. We appreciate your contribution.

Example of system configurations:

| Field | Setting |
|---|---|
| No. of Servers | 1 |
| Memory | 512 GB DDR4 2667 MHz |
| CPU | 2x Intel(R) Xeon(R) CPU E5-2630 v4 @ 2.20GHz |
| Network | 1 Gbps |
| OS | CentOS 7 |
| Tablet Server | 3 |
| Name Server | 1 |
| OpenMLDB Version | v0.6.4 |
| Docker Image Version | N.A. |
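To prepare the result file for the email, the log directories can be bundled into a single archive. The sketch below is one possible way to do this; the helper name `package_results` and the archive name are assumptions, and only the two log locations mentioned above are included.

```shell
#!/usr/bin/env bash
# Hypothetical helper for illustration; it is not part of FEBench.

# package_results ROOT OUTPUT
# Creates a gzip-compressed tar archive OUTPUT containing whichever of
# OpenMLDB/logs and flink/logs exist under ROOT (the repository root).
package_results() {
  local root="$1" out="$2" d
  set --                                   # collect existing log dirs in "$@"
  for d in OpenMLDB/logs flink/logs; do
    if [ -d "$root/$d" ]; then
      set -- "$@" "$d"
    fi
  done
  if [ "$#" -eq 0 ]; then
    echo "no log directories found under $root" >&2
    return 1
  fi
  tar -czf "$out" -C "$root" "$@"
}

# Example use (commented out):
# package_results "$FEBENCH_ROOT" febench-results.tar.gz
```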

If you use FEBench in your research, please cite:

@article{zhou2023febench,
  author  = {Xuanhe Zhou and
             Cheng Chen and
             Kunyi Li and
             Bingsheng He and
             Mian Lu and
             Qiaosheng Liu and
             Wei Huang and
             Guoliang Li and
             Zhao Zheng and
             Yuqiang Chen},
  title   = {FEBench: A Benchmark for Real-Time Relational Data Feature Extraction},
  journal = {Proc. {VLDB} Endow.},
  year    = {2023}
}

- You may use GitHub Issues to leave feedback or raise anything you want to discuss

- Email: febench2023@gmail.com