- Fixing the Dataloader to actually load multiple clips for a given text query (CBM).

- Modifying the Validation call to enable batch processing. This is done (as of now) by loading all the video features at once, and then computing the similarity score for the entire gallery against the current text feature.

- The `MovieClips_dataset.py` file contains two arguments, `p_context_window` and `f_context_window`, which set the number of clips considered before and after the current clip (the context window size). If both are 1, the total number of clips becomes 3. `n_clips` in `model.py` needs to be updated accordingly.

- If the batch processing approach is to be avoided and the original processing used, change

      if self.do_validation:
          #val_log = self._valid_epoch(epoch)
          val_log = self._memory_save_valid(epoch)
          log.update(val_log)

  to

      if self.do_validation:
          #val_log = self._memory_save_valid(epoch)
          val_log = self._valid_epoch(epoch)
          log.update(val_log)

- Currently, the behaviour of `data_loaders.py` is slightly changed. It no longer automatically sets the batch size to `len(dataset)`; the batch size is instead set manually in the `train.py` call, to enable stochastic batching for the row-wise similarity calculation approach.
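Two of the notes above can be sketched in a few lines of plain Python: how the two context window arguments determine the number of clips per query, and how one text feature is scored against a whole gallery of video features. This is only an illustration, not the repository's code. The function names, the clamping at movie boundaries and the use of cosine similarity are all assumptions made for the example.

```python
import math


def context_clip_indices(center, p_context_window, f_context_window, n_total):
    """Return the clip indices loaded for one query (hypothetical helper).

    The window holds p_context_window clips before the current clip, the clip
    itself, and f_context_window clips after it, so its length is always
    p_context_window + f_context_window + 1 (the value n_clips must match).
    """
    start = center - p_context_window
    stop = center + f_context_window
    # Assumption: indices outside the movie are clamped to the nearest valid clip.
    return [min(max(i, 0), n_total - 1) for i in range(start, stop + 1)]


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def rank_gallery(text_feature, gallery):
    """Score one text feature against every video feature and rank the gallery.

    Returns gallery indices ordered from most to least similar.
    """
    scores = [cosine(text_feature, video_feature) for video_feature in gallery]
    return sorted(range(len(gallery)), key=lambda i: scores[i], reverse=True)
```

With `p_context_window=1` and `f_context_window=1`, `context_clip_indices(5, 1, 1, 10)` yields three indices, which is why `n_clips` has to be 3 in that configuration.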

**N.B.: Please use the Condensed Movies Challenge (https://github.com/m-bain/CondensedMovies-chall) with updated splits, since some videos from the original paper are unavailable and their features are missing.**

Contact me directly with any additional dataset queries. Details on feature download are in the challenge repo.

###############################################

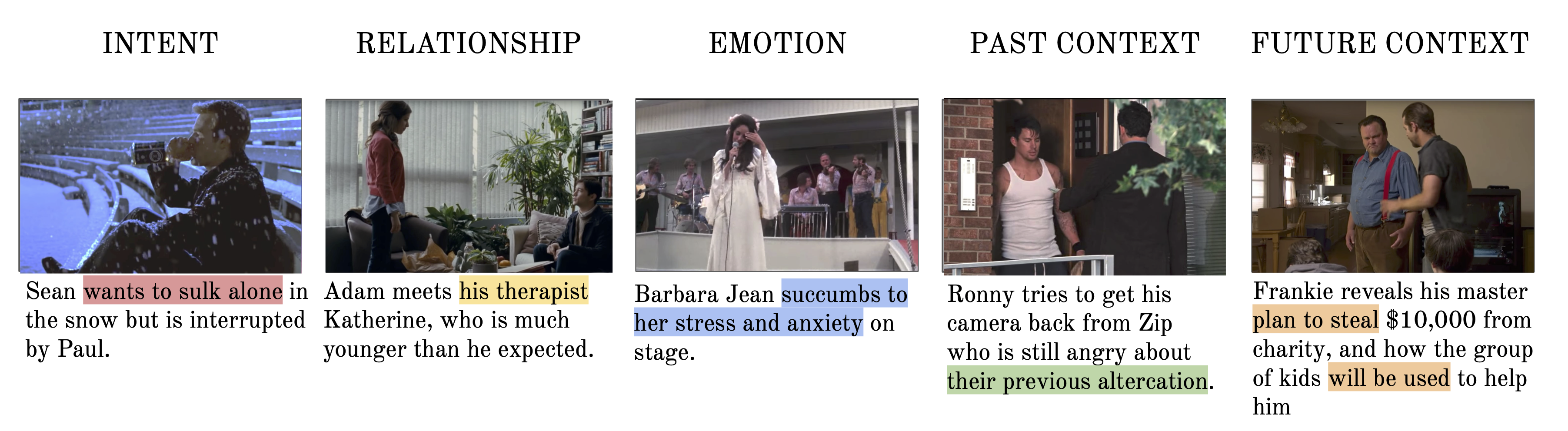

This repository contains the video dataset, implementation and baselines from Condensed Movies: Story Based Retrieval with Contextual Embeddings.

Project page | arXiv preprint | Read the paper | Preview the data

The dataset consists of 3K+ movies, 30K+ professionally captioned clips, 1K+ video hours, 400K+ facetracks & precomputed features from 6 different modalities.

Requirements:

- Storage
  - 20GB for features (required for baseline experiments)
  - 10GB for facetracks
  - 250GB for videos
- Libraries
  - ffmpeg (for video download)
  - youtube-dl (for video download)
  - pandas, numpy
  - python 3.6+
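Before downloading anything, it can help to check these prerequisites up front. The following is a minimal sketch using only the Python standard library. The function name `check_requirements` and its defaults are made up for this example and are not part of the repository; the 20GB default corresponds to the features-only case listed above.

```python
import importlib.util
import shutil
import sys


def check_requirements(path=".", needed_gb=20):
    """Report which of the listed prerequisites look satisfied (hypothetical helper).

    path      -- directory where the data will be stored
    needed_gb -- required free space in decimal gigabytes (20 = features only)
    """
    free_gb = shutil.disk_usage(path).free / 1e9
    return {
        "python>=3.6": sys.version_info >= (3, 6),
        # Command-line tools must be discoverable on PATH.
        "ffmpeg": shutil.which("ffmpeg") is not None,
        "youtube-dl": shutil.which("youtube-dl") is not None,
        # Python packages are looked up without importing them.
        "pandas": importlib.util.find_spec("pandas") is not None,
        "numpy": importlib.util.find_spec("numpy") is not None,
        "disk space": free_gb >= needed_gb,
    }


if __name__ == "__main__":
    for name, ok in check_requirements().items():
        print(f"{name}: {'ok' if ok else 'MISSING'}")
```

Pass a larger `needed_gb` (for example 280 for features, facetracks and videos together) if you plan to download everything.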

- Navigate to the directory: `cd CondensedMovies/data_prep/`

- Edit the configuration file `config.json` to select the desired subsets of the dataset and their destination.

- If downloading the source videos (`src: true`), you can edit `youtube-dl.conf` for the desired resolution, subtitles, etc. Please see youtube-dl for more info.

- Run `python download.py`

If you have trouble downloading the source videos or features (due to geographical restrictions or otherwise), please contact me.

Edit data_dir and save_dir in configs/moe.json for the experiments.

    python train.py configs/moe.json
    python test.py --resume $SAVED_EXP_DIR/model_best.pth

Run python visualise_face_tracks.py with the appropriate arguments to visualise face tracks for a given videoID (requires facetracks and source videos downloaded).

- youtube download script

- missing videos check

- precomputed features download script

- facetrack visualisation

- dataloader

- video-text retrieval baselines

- intra-movie baselines + char module

- release fixed_seg features

Why did some of the source videos fail to download?

This is most likely due to geographical restrictions on the videos, email me at maxbain@robots.ox.ac.uk and I can help.

The precomputed features are averaged over the temporal dimension, will you release the original features?

The averaging is done to save space: the original features total ~1TB. Contact me to arrange a download.
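To make "averaged over the temporal dimension" concrete, here is a small sketch of what such pooling does to one clip's features. It is written in plain Python for illustration only; the actual extraction pipeline is not shown in this README, and the function name is hypothetical.

```python
def temporal_mean(features):
    """Average a sequence of per-timestep feature vectors into a single vector.

    features -- list of T vectors, each of length D (a T x D array)
    Returns one vector of length D, so storage no longer grows with T.
    """
    if not features:
        raise ValueError("need at least one timestep")
    n_steps = len(features)
    # zip(*features) iterates over feature dimensions, i.e. columns of the T x D array.
    return [sum(column) / n_steps for column in zip(*features)]
```

For example, two timesteps `[[1.0, 2.0], [3.0, 4.0]]` collapse to the single vector `[2.0, 3.0]`.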

I think clip X is incorrectly identified as being from movie Y, what do I do?

Please let me know of any movie identification mistakes and I'll correct them ASAP.

We would like to thank Samuel Albanie for his help with feature extraction.