This repository hosts the official PyTorch implementation of the paper: "HairCLIP: Design Your Hair by Text and Reference Image".

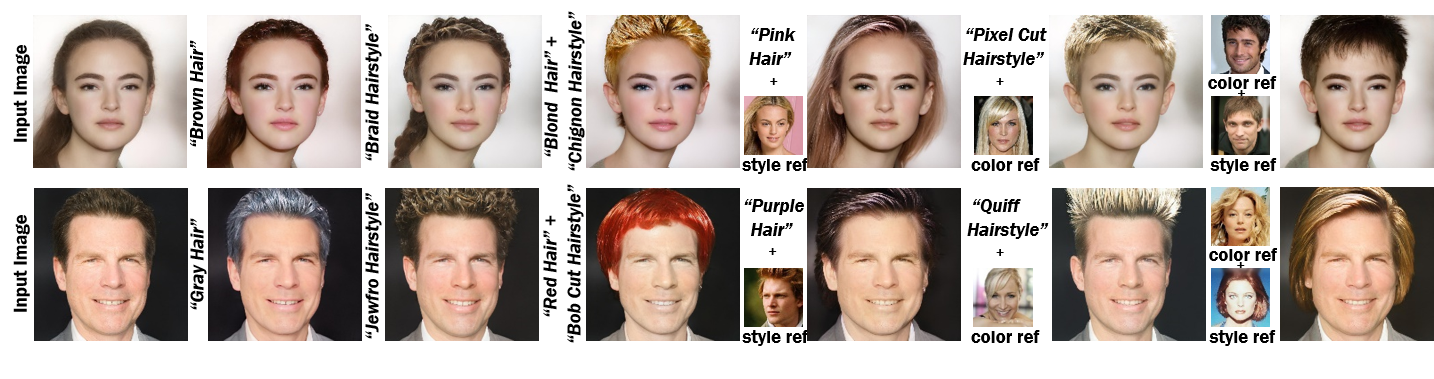

Our single framework supports hairstyle and hair color editing, individually or jointly, and the conditional inputs can come from either the image or the text domain.

Tianyi Wei¹, Dongdong Chen², Wenbo Zhou¹, Jing Liao³, Zhentao Tan¹, Lu Yuan², Weiming Zhang¹, Nenghai Yu¹

¹University of Science and Technology of China, ²Microsoft Cloud AI, ³City University of Hong Kong

- 2022.03.02: Our paper has been accepted to CVPR 2022, and the code will be released soon.
- 2022.03.09: Our training code is released.
- 2022.03.19: Our testing code and pretrained model are released.

Upload your own image and try HairCLIP with Replicate.

$ conda install --yes -c pytorch pytorch=1.7.1 torchvision cudatoolkit=11.0

$ pip install ftfy regex tqdm

$ pip install git+https://github.com/openai/CLIP.git

$ pip install tensorflow-io

Please download the pre-trained model listed below. The HairCLIP model contains the entire architecture, including the mapper and decoder weights.

| Path | Description |
|---|---|
| HairCLIP | Our pre-trained HairCLIP model. |

To use the pretrained model for training or inference, pass its path with the --checkpoint_path flag.

In addition, we provide the auxiliary models and e4e-inverted latent codes needed to train your own HairCLIP model from scratch.

| Path | Description |
|---|---|
| FFHQ StyleGAN | StyleGAN model pretrained on FFHQ, taken from rosinality, with 1024x1024 output resolution. |
| IR-SE50 Model | Pretrained IR-SE50 model taken from TreB1eN, used in our ID loss during HairCLIP training. |
| Train Set | CelebA-HQ train set latent codes inverted by e4e. |
| Test Set | CelebA-HQ test set latent codes inverted by e4e. |

By default, we assume that all auxiliary models are downloaded and saved to the directory pretrained_models.
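Before training, it can help to confirm that everything is actually in pretrained_models. The Python sketch below lists which expected files are missing. Only hairclip.pt is taken from this README (the inference example loads ../pretrained_models/hairclip.pt from inside mapper); the other file names are assumptions: train_faces.pt and test_faces.pt mirror the placeholder paths in the commands, and the StyleGAN and IR-SE50 names are guesses. Adjust EXPECTED_FILES to match what you downloaded.

```python
from pathlib import Path

# Assumed file names. "hairclip.pt" matches the inference example in this README;
# the remaining entries are guesses and should be edited to match your downloads.
EXPECTED_FILES = [
    "hairclip.pt",
    "stylegan2-ffhq-config-f.pt",  # assumed name of the FFHQ StyleGAN checkpoint
    "model_ir_se50.pth",           # assumed name of the IR-SE50 checkpoint
    "train_faces.pt",              # assumed name of the e4e train set latents
    "test_faces.pt",               # assumed name of the e4e test set latents
]


def missing_files(directory, names=EXPECTED_FILES):
    """Return the names from `names` that are not regular files inside `directory`."""
    root = Path(directory)
    return [name for name in names if not (root / name).is_file()]


if __name__ == "__main__":
    # Run from the repository root, where pretrained_models/ is expected to live.
    missing = missing_files("pretrained_models")
    if missing:
        print("Missing from pretrained_models/:", ", ".join(missing))
    else:
        print("All expected files found.")
```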

The main training script can be found in scripts/train.py.

Intermediate training results are saved to opts.exp_dir. This includes checkpoints, train outputs, and test outputs.

Additionally, if you have tensorboard installed, you can visualize tensorboard logs in opts.exp_dir/logs.

cd mapper
python scripts/train.py \
--exp_dir=/path/to/experiment \
--hairstyle_description="hairstyle_list.txt" \
--color_description="purple, red, orange, yellow, green, blue, gray, brown, black, white, blond, pink" \
--latents_train_path=/path/to/train_faces.pt \
--latents_test_path=/path/to/test_faces.pt \
--hairstyle_ref_img_train_path=/path/to/celeba_hq_train \
--hairstyle_ref_img_test_path=/path/to/celeba_hq_val \
--color_ref_img_train_path=/path/to/celeba_hq_train \
--color_ref_img_test_path=/path/to/celeba_hq_val \
--color_ref_img_in_domain_path=/path/to/generated_hair_of_various_colors \
--hairstyle_manipulation_prob=0.5 \
--color_manipulation_prob=0.2 \
--both_manipulation_prob=0.27 \
--hairstyle_text_manipulation_prob=0.5 \
--color_text_manipulation_prob=0.5 \
--color_in_domain_ref_manipulation_prob=0.25

- This version only supports a batch size and test batch size of 1.
- See options/train_options.py for all training-specific flags.
- See options/test_options.py for all test-specific flags.
- You can customize your own HairCLIP by adjusting the category probabilities. For example, to train a HairCLIP that only edits hair color, with text as the interaction mode, set the probabilities as follows:

--hairstyle_manipulation_prob=0 \
--color_manipulation_prob=1 \
--both_manipulation_prob=0 \
--color_text_manipulation_prob=1 \

- The --color_ref_img_in_domain_path flag points to a dataset of images with diverse hair colors, generated by a HairCLIP trained with the probability configuration above. It increases the diversity of reference images for hair color editing and is optional. If you choose to use this augmentation, you first need to pre-train a text-based hair color HairCLIP with that configuration.
- The weights of the different training losses are defined in options/train_options.py, and you can adjust them to suit your needs. Empirically, a larger loss weight strengthens the corresponding effect but can weaken the effects of the other losses to some extent.
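One way to read the category probabilities is as a two-level choice: first which attribute(s) to edit, then text versus reference image for each edited attribute. The Python sketch below samples a training edit under that reading. It is hypothetical and not taken from scripts/train.py: splitting a single uniform draw into consecutive intervals, treating the leftover mass (1 - 0.5 - 0.2 - 0.27 = 0.03 with the defaults) as "no edit", and applying color_in_domain_ref_manipulation_prob only to image-based color edits are all assumptions.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class EditProbs:
    # Hypothetical container; field names mirror the training flags in this README.
    hairstyle_manipulation_prob: float = 0.5
    color_manipulation_prob: float = 0.2
    both_manipulation_prob: float = 0.27
    hairstyle_text_manipulation_prob: float = 0.5
    color_text_manipulation_prob: float = 0.5
    color_in_domain_ref_manipulation_prob: float = 0.25


@dataclass
class EditSample:
    editing_type: Optional[str]     # "hairstyle", "color", "both", or None ("no edit", assumed)
    hairstyle_input: Optional[str]  # "text", "image", or None
    color_input: Optional[str]      # "text", "image", "in_domain_image", or None


def sample_edit(p: EditProbs, rng: random.Random) -> EditSample:
    """Sample one training edit under a hypothetical reading of the flags."""
    cut_hairstyle = p.hairstyle_manipulation_prob
    cut_color = cut_hairstyle + p.color_manipulation_prob
    cut_both = cut_color + p.both_manipulation_prob
    if cut_both > 1 + 1e-9:
        raise ValueError("category probabilities must sum to at most 1")

    # Step 1: split a single uniform draw in [0, 1) into consecutive intervals.
    u = rng.random()
    if u < cut_hairstyle:
        editing_type = "hairstyle"
    elif u < cut_color:
        editing_type = "color"
    elif u < cut_both:
        editing_type = "both"
    else:
        # Leftover probability mass is treated as "no edit" (assumption).
        return EditSample(None, None, None)

    hairstyle_input: Optional[str] = None
    color_input: Optional[str] = None

    # Step 2: choose text vs. reference image for each edited attribute.
    if editing_type in ("hairstyle", "both"):
        if rng.random() < p.hairstyle_text_manipulation_prob:
            hairstyle_input = "text"
        else:
            hairstyle_input = "image"
    if editing_type in ("color", "both"):
        if rng.random() < p.color_text_manipulation_prob:
            color_input = "text"
        elif rng.random() < p.color_in_domain_ref_manipulation_prob:
            # Step 3: some color references come from the optional in-domain set.
            color_input = "in_domain_image"
        else:
            color_input = "image"

    return EditSample(editing_type, hairstyle_input, color_input)


if __name__ == "__main__":
    from collections import Counter

    rng = random.Random(0)
    counts = Counter(sample_edit(EditProbs(), rng).editing_type for _ in range(10_000))
    print(counts)  # roughly proportional to 0.5 / 0.2 / 0.27 / 0.03
```

Under this reading, the color-only text configuration from the list above (0, 1, 0, and a color text probability of 1) makes every sample a text-driven hair color edit, since rng.random() always falls below 1.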

The main inference script can be found in scripts/inference.py. Inference results are saved to test_opts.exp_dir.

Example: edit the hairstyle using text.

cd mapper
python scripts/inference.py \
--exp_dir=/path/to/experiment \
--checkpoint_path=../pretrained_models/hairclip.pt \
--latents_test_path=/path/to/test_faces.pt \
--editing_type=hairstyle \
--input_type=text \
--hairstyle_description="hairstyle_list.txt"

Example: edit both the hairstyle (text) and the hair color (reference image).

cd mapper
python scripts/inference.py \
--exp_dir=/path/to/experiment \
--checkpoint_path=../pretrained_models/hairclip.pt \
--latents_test_path=/path/to/test_faces.pt \
--editing_type=both \
--input_type=text_image \
--hairstyle_description="hairstyle_list.txt" \
--color_ref_img_test_path=/path/to/celeba_hq_test

- See options/test_options.py for all test-specific flags.
- The --editing_type flag should be hairstyle, color, or both, indicating whether to edit only the hairstyle, only the hair color, or both the hairstyle and the hair color.
- The --input_type flag sets the interaction mode: text for text and image for a reference image. When editing both hairstyle and hair color, the two modes are joined with _ (for example, text_image in the second command pairs a hairstyle text description with a hair color reference image).
- The --start_index and --end_index flags set the range of test latent codes to edit, where --start_index needs to be greater than 0 and --end_index cannot exceed the size of the whole test latent code dataset.
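How --editing_type and --input_type fit together can be sketched as a small validator. This is a hypothetical helper, not part of the repository's scripts: for editing_type=both it assumes the first mode in --input_type applies to the hairstyle and the second to the hair color, which is consistent with the text_image example but not otherwise confirmed.

```python
VALID_EDITING_TYPES = ("hairstyle", "color", "both")
VALID_INPUT_MODES = ("text", "image")


def parse_edit_args(editing_type: str, input_type: str) -> dict:
    """Map --editing_type / --input_type to an interaction mode per attribute.

    Hypothetical helper: for "both", the hairstyle mode is assumed to come
    first and the hair color mode second (e.g. "text_image").
    """
    if editing_type not in VALID_EDITING_TYPES:
        raise ValueError(f"editing_type must be one of {VALID_EDITING_TYPES}, got {editing_type!r}")

    # "both" expects two modes joined by "_"; single-attribute edits expect one.
    attributes = ["hairstyle", "color"] if editing_type == "both" else [editing_type]
    modes = input_type.split("_")
    if len(modes) != len(attributes):
        raise ValueError(
            f"input_type {input_type!r} needs {len(attributes)} mode(s) for editing_type {editing_type!r}"
        )
    for mode in modes:
        if mode not in VALID_INPUT_MODES:
            raise ValueError(f"unknown interaction mode {mode!r}; expected one of {VALID_INPUT_MODES}")
    return dict(zip(attributes, modes))


if __name__ == "__main__":
    print(parse_edit_args("both", "text_image"))  # {'hairstyle': 'text', 'color': 'image'}
```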

This code is based on StyleCLIP.

If you find our work useful for your research, please consider citing the following paper :)

@inproceedings{wei2022hairclip,
  title={{HairCLIP}: Design Your Hair by Text and Reference Image},
  author={Wei, Tianyi and Chen, Dongdong and Zhou, Wenbo and Liao, Jing and Tan, Zhentao and Yuan, Lu and Zhang, Weiming and Yu, Nenghai},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year={2022}
}