Wenhao Wu<sup>1,2</sup>, Haipeng Luo<sup>3</sup>, Bo Fang<sup>3</sup>, Jingdong Wang<sup>2</sup>, Wanli Ouyang<sup>4,1</sup>

Welcome to the official implementation of Cap4Video - an innovative framework that maximizes the utility of auxiliary captions generated by powerful LLMs (e.g., GPT) to enhance video-text matching.

📣 I also have other cross-modal video projects that may interest you ✨.

Revisiting Classifier: Transferring Vision-Language Models for Video Recognition

Accepted by AAAI 2023 | [Text4Vis Code]

Wenhao Wu, Zhun Sun, Wanli Ouyang

Bidirectional Cross-Modal Knowledge Exploration for Video Recognition with Pre-trained Vision-Language Models

Accepted by CVPR 2023 | [BIKE Code]

Wenhao Wu, Xiaohan Wang, Haipeng Luo, Jingdong Wang, Yi Yang, Wanli Ouyang

- [Apr 27, 2023] Our code has been released. Thanks for your star 😝.

- [Mar 21, 2023] 😍 Our Cap4Video has been selected as a 🌟Highlight🌟 paper at CVPR 2023! (Top 2.5% of 9155 submissions).

- [Feb 28, 2023] 🎉 Our Cap4Video has been accepted by CVPR-2023.

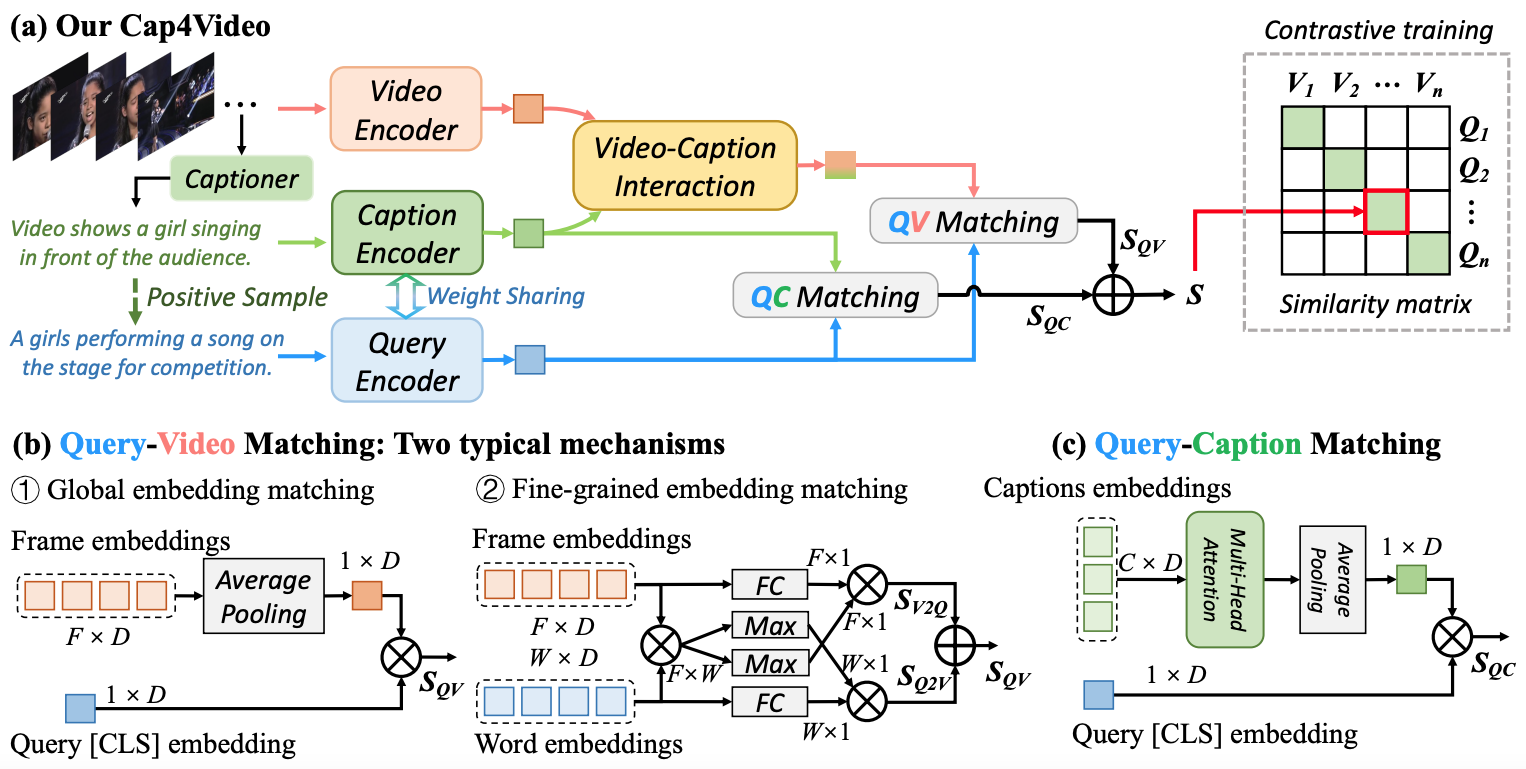

Cap4Video leverages captions generated by large language models to improve text-video matching in three ways: (1) input data augmentation during training, (2) intermediate video-caption feature interaction for creating compact video representations, and (3) output score fusion for enhancing text-video matching. Cap4Video is compatible with both global and fine-grained matching.
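To make the third point (output score fusion) concrete, here is a minimal sketch, not the repository's actual implementation. It assumes one caption embedding per video and a simple weighted sum of the two similarity matrices; the function names and the `caption_weight` parameter are hypothetical and chosen only for illustration.

```python
import numpy as np


def l2_normalize(x, axis=-1, eps=1e-12):
    """Scale vectors to unit length so dot products become cosine similarities."""
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)


def fuse_scores(query_emb, video_emb, caption_emb, caption_weight=0.5):
    """Blend query-video and query-caption similarity matrices.

    caption_weight is a hypothetical hyperparameter for this sketch;
    the weighting used in the paper may differ.
    """
    q = l2_normalize(np.asarray(query_emb, dtype=float))
    v = l2_normalize(np.asarray(video_emb, dtype=float))
    c = l2_normalize(np.asarray(caption_emb, dtype=float))
    query_video = q @ v.T    # (num_queries, num_videos)
    query_caption = q @ c.T  # (num_queries, num_videos), one caption per video
    return (1.0 - caption_weight) * query_video + caption_weight * query_caption
```

With `caption_weight=0.0` the result reduces to plain query-video matching, which makes it easy to compare against a caption-free baseline.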

    # From CLIP
    conda install --yes -c pytorch pytorch=1.8.1 torchvision cudatoolkit=11.1
    pip install ftfy regex tqdm
    pip install opencv-python boto3 requests pandas

All video datasets can be downloaded from their respective official links. To improve training efficiency, we have preprocessed these videos into frames, which we have packaged and uploaded for convenient reproduction of our results.

| Dataset | Official Link | Ours |
|---|---|---|
| MSRVTT | Video | Frames |
| DiDeMo | Video | Video |
| MSVD | Video | Frames |
| VATEX | Video | Frames |
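If you prefer to extract frames from the official videos yourself, the sketch below shows one possible approach using `opencv-python`. It is an assumption-laden illustration: the sampling strategy (the middle frame of equal-length segments), the number of frames, and the output file naming are all hypothetical and are not taken from our preprocessing pipeline.

```python
import os


def sample_frame_indices(total_frames, num_frames):
    """Pick the middle frame of each of num_frames equal-length segments."""
    if total_frames <= 0 or num_frames <= 0:
        return []
    num_frames = min(num_frames, total_frames)
    step = total_frames / num_frames
    return [int(step * i + step / 2) for i in range(num_frames)]


def extract_frames(video_path, out_dir, num_frames=12):
    """Save uniformly sampled frames as JPEG files; returns the saved paths."""
    # Imported here so the index helper above works without OpenCV installed.
    import cv2

    os.makedirs(out_dir, exist_ok=True)
    cap = cv2.VideoCapture(video_path)
    total = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))
    saved = []
    for n, idx in enumerate(sample_frame_indices(total, num_frames)):
        cap.set(cv2.CAP_PROP_POS_FRAMES, idx)  # seek to the chosen frame
        ok, frame = cap.read()
        if not ok:
            continue  # skip frames that fail to decode
        path = os.path.join(out_dir, f"frame_{n:04d}.jpg")  # hypothetical naming
        cv2.imwrite(path, frame)
        saved.append(path)
    cap.release()
    return saved
```

Check the data loaders in this repository for the directory layout and file names they expect before using frames produced this way.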

- To begin, you will need to prepare a video dataset that has been processed into frames.

- Next, download the CLIP B/32 and CLIP B/16 models and place them in the `modules` folder.

- Then, download the caption files that we provide and place them in the `data` folder.

- Finally, execute the following command to train on the MSRVTT dataset.

    # For more details, please refer to the co_train_msrvtt.sh
    # DATA_PATH=[Your MSRVTT data path]
    sh co_train_msrvtt.sh

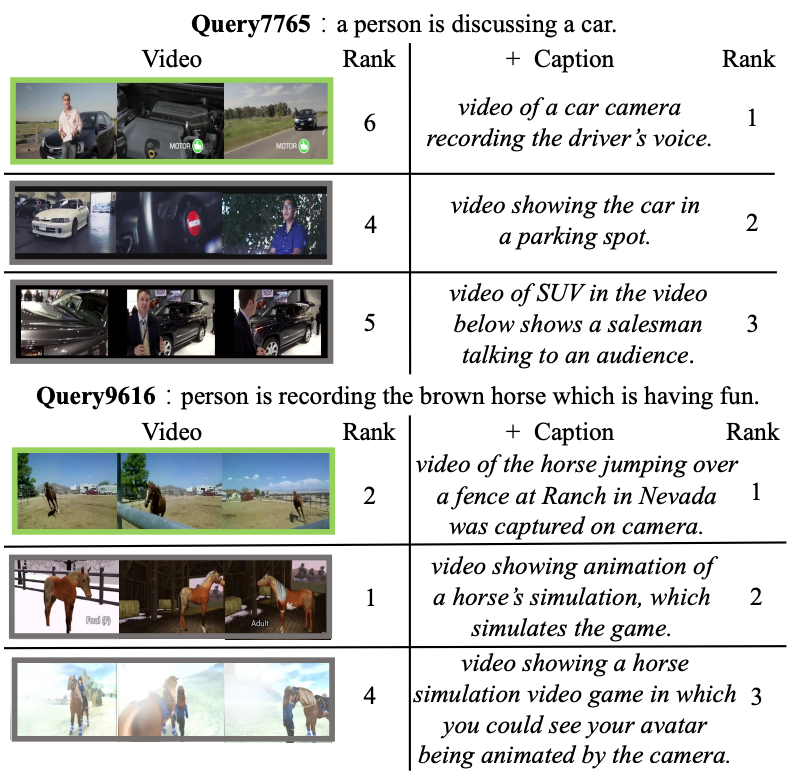

The text-video results on the MSR-VTT 1K-A test set. Left: The ranking results of the query-video matching model. Right: The ranking results of Cap4Video, which incorporates generated captions to enhance retrieval.
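Ranking results like these are usually summarized with Recall@K. The following is a minimal sketch of that metric, not the evaluation code shipped in this repository. It assumes a square similarity matrix in which query `i` is paired with video `i`; the function name `recall_at_k` is hypothetical.

```python
import numpy as np


def recall_at_k(sim, ks=(1, 5, 10)):
    """Compute text-to-video Recall@K.

    sim[i, j] is the score between query i and video j; the ground-truth
    video for query i is assumed to be video i.
    """
    sim = np.asarray(sim, dtype=float)
    gt = np.diag(sim)[:, None]  # score of the correct video for each query
    # Rank of the correct video = number of videos scoring strictly higher.
    ranks = (sim > gt).sum(axis=1)
    return {k: float((ranks < k).mean()) for k in ks}
```

The same function can be applied to a fused score matrix and to a query-video-only matrix to compare the two rankings.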

If you use our code in your research or wish to refer to the results, please star 🌟 this repo and use the following BibTeX 📑 entry.

    @inproceedings{cap4video,
      title={Cap4Video: What Can Auxiliary Captions Do for Text-Video Retrieval?},
      author={Wu, Wenhao and Luo, Haipeng and Fang, Bo and Wang, Jingdong and Ouyang, Wanli},
      booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
      year={2023}
    }

This repository is built in part on the excellent works of CLIP4Clip and DRL. We use Video ZeroCap to pre-extract captions from the videos. We extend our sincere gratitude to these contributors for their invaluable contributions.

For any questions, please feel free to file an issue or reach out to Wenhao Wu or Haipeng Luo.
