Nexa SDK is a comprehensive toolkit for working with ONNX and GGML models. It supports text generation, image generation, vision-language models (VLM), and text-to-speech (TTS). It also offers an OpenAI-compatible API server with JSON schema mode for function calling and streaming support, as well as a user-friendly Streamlit UI. Nexa SDK runs on any device with a Python environment, and GPU acceleration is supported.


Model Support:

- ONNX & GGML models
- Conversion Engine
- Inference Engine:
  - Text Generation
  - Image Generation
  - Vision-Language Models (VLM)
  - Text-to-Speech (TTS)

  Detailed API documentation is available in the project documentation.
- Server:
  - OpenAI-compatible API
  - JSON schema mode for function calling
  - Streaming support
- Streamlit UI for interactive model deployment and testing
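OpenAI-compatible servers conventionally stream chat completions as server-sent events: each `data:` line carries a JSON chunk whose `choices[i].delta.content` holds the next piece of text, and the stream ends with `data: [DONE]`. This README does not spell out the wire format of Nexa's server, so the sketch below simply assumes it follows that OpenAI convention. `collect_stream_text` is a hypothetical helper, not part of Nexa SDK.

```python
import json
from typing import Iterable


def collect_stream_text(lines: Iterable[str]) -> str:
    """Join the content deltas of an OpenAI-style chat completion stream.

    Assumption: events arrive as lines of the form ``data: {...}`` and the
    stream ends with ``data: [DONE]`` (the OpenAI streaming convention).
    """
    parts = []
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators, comments, and other SSE fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            # The first chunk often carries only a role, so content may be missing.
            parts.append(choice.get("delta", {}).get("content") or "")
    return "".join(parts)


if __name__ == "__main__":
    sample = [
        'data: {"choices": [{"delta": {"role": "assistant"}}]}',
        "",
        'data: {"choices": [{"delta": {"content": "Hello"}}]}',
        'data: {"choices": [{"delta": {"content": ", world"}}]}',
        "data: [DONE]",
    ]
    print(collect_stream_text(sample))  # Hello, world
```

In a real client the `lines` would come from iterating over the HTTP response body line by line; keeping the parser separate from the transport makes it easy to test offline.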

The table below compares Nexa SDK with similar tools:

| Feature | Nexa SDK | ollama | Optimum | LM Studio |
|---|---|---|---|---|
| GGML Support | ✅ | ✅ | ❌ | ✅ |
| ONNX Support | ✅ | ❌ | ✅ | ❌ |
| Text Generation | ✅ | ✅ | ✅ | ✅ |
| Image Generation | ✅ | ❌ | ❌ | ❌ |
| Vision-Language Models | ✅ | ✅ | ✅ | ✅ |
| Text-to-Speech | ✅ | ❌ | ✅ | ❌ |
| Server Capability | ✅ | ✅ | ✅ | ✅ |
| User Interface | ✅ | ❌ | ❌ | ✅ |

Pre-built wheels for various Python versions, platforms, and backends are available on our index page for convenient installation.

Note

- If you want to use ONNX models, replace `pip install nexaai` with `pip install "nexaai[onnx]"` in the provided commands.
- For Chinese developers, we recommend using the Tsinghua Open Source Mirror as the extra index URL: replace `--extra-index-url https://pypi.org/simple` with `--extra-index-url https://pypi.tuna.tsinghua.edu.cn/simple` in the provided commands.
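The two substitutions in the note are purely textual. As an illustration, the hypothetical helper below (not part of Nexa SDK) applies them to any install command in this section. It only rewrites the command string; it does not run pip and does not check whether an ONNX wheel exists for a given backend index.

```python
# Hypothetical helper, not part of Nexa SDK: it only applies the two
# substitutions described in the note to an install command string.
PYPI_EXTRA = "--extra-index-url https://pypi.org/simple"
TSINGHUA_EXTRA = "--extra-index-url https://pypi.tuna.tsinghua.edu.cn/simple"


def adapt_install_command(
    cmd: str, onnx: bool = False, tsinghua_mirror: bool = False
) -> str:
    """Return `cmd` with the ONNX extra and/or the Tsinghua mirror swapped in."""
    if onnx:
        # `pip install nexaai` -> `pip install "nexaai[onnx]"` (quoted for the shell).
        cmd = cmd.replace("pip install nexaai", 'pip install "nexaai[onnx]"', 1)
    if tsinghua_mirror:
        # Use the Tsinghua mirror instead of PyPI as the extra index.
        cmd = cmd.replace(PYPI_EXTRA, TSINGHUA_EXTRA)
    return cmd


if __name__ == "__main__":
    cpu = (
        "pip install nexaai --prefer-binary "
        "--index-url https://nexaai.github.io/nexa-sdk/whl/cpu "
        "--extra-index-url https://pypi.org/simple --no-cache-dir"
    )
    print(adapt_install_command(cpu, onnx=True, tsinghua_mirror=True))
```

For the CPU command this prints `pip install "nexaai[onnx]" --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/cpu --extra-index-url https://pypi.tuna.tsinghua.edu.cn/simple --no-cache-dir`. Commands prefixed with `CMAKE_ARGS=...` are handled the same way, since only the matching substrings are replaced.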

For the CPU version:

```bash
pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/cpu --extra-index-url https://pypi.org/simple --no-cache-dir
```

For the GPU version supporting Metal (macOS):

```bash
CMAKE_ARGS="-DGGML_METAL=ON -DSD_METAL=ON" pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/metal --extra-index-url https://pypi.org/simple --no-cache-dir
```

FAQ: Cannot use Metal/GPU on M1

Try the following commands:

```bash
wget https://github.com/conda-forge/miniforge/releases/latest/download/Miniforge3-MacOSX-arm64.sh
bash Miniforge3-MacOSX-arm64.sh
conda create -n nexasdk python=3.10
conda activate nexasdk
CMAKE_ARGS="-DGGML_METAL=ON -DSD_METAL=ON" pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/metal --extra-index-url https://pypi.org/simple --no-cache-dir
```

For the GPU version supporting CUDA:

For Linux:

```bash
CMAKE_ARGS="-DGGML_CUDA=ON -DSD_CUBLAS=ON" pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/cu124 --extra-index-url https://pypi.org/simple --no-cache-dir
```

For Windows PowerShell:

```powershell
$env:CMAKE_ARGS="-DGGML_CUDA=ON -DSD_CUBLAS=ON"; pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/cu124 --extra-index-url https://pypi.org/simple --no-cache-dir
```

For Windows Command Prompt (quoting the whole `NAME=value` pair keeps the quotes and the trailing space out of the variable's value):

```bat
set "CMAKE_ARGS=-DGGML_CUDA=ON -DSD_CUBLAS=ON" & pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/cu124 --extra-index-url https://pypi.org/simple --no-cache-dir
```

For Windows Git Bash:

```bash
CMAKE_ARGS="-DGGML_CUDA=ON -DSD_CUBLAS=ON" pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/cu124 --extra-index-url https://pypi.org/simple --no-cache-dir
```

FAQ: Building Issues for llava

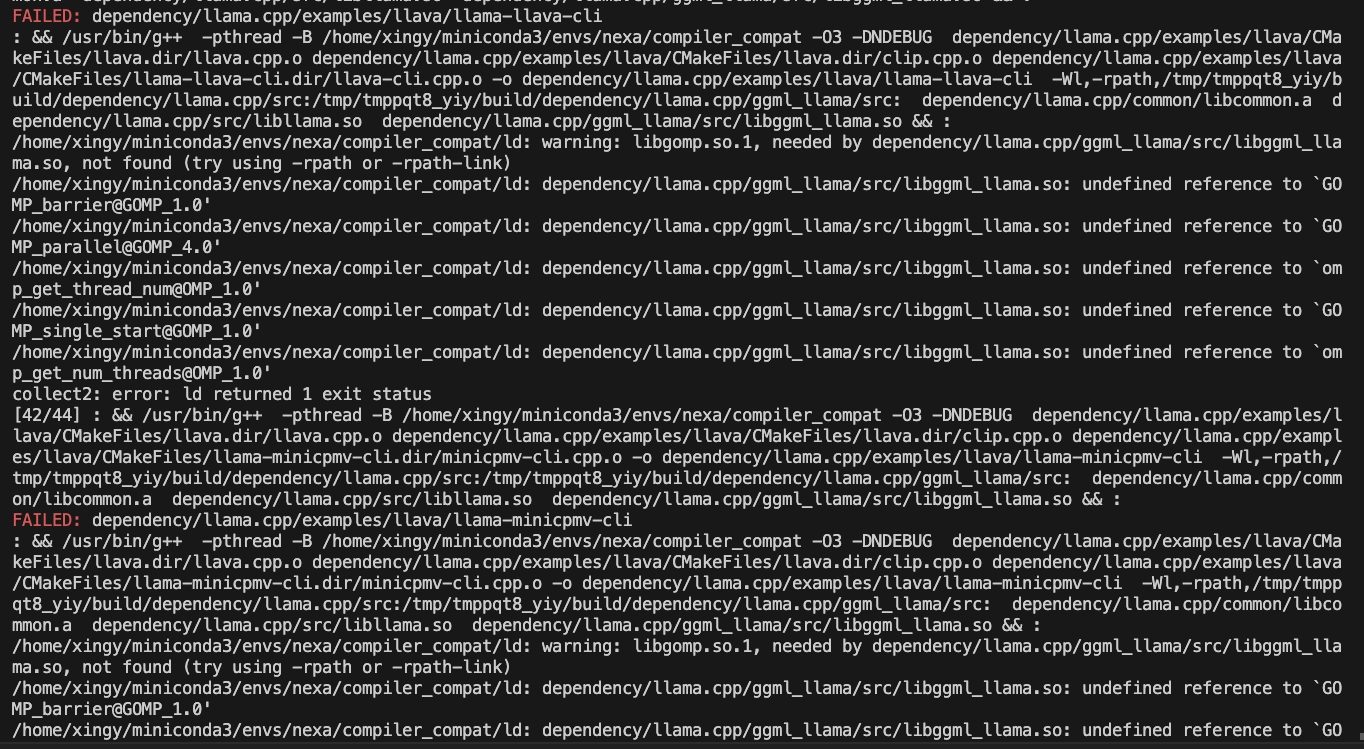

If you encounter the following issue while building:

try the following command:

CMAKE_ARGS="-DCMAKE_CXX_FLAGS=-fopenmp" pip install nexaaiFor Linux:

```bash
CMAKE_ARGS="-DGGML_HIPBLAS=on" pip install nexaai --prefer-binary --index-url https://nexaai.github.io/nexa-sdk/whl/rocm621 --extra-index-url https://pypi.org/simple --no-cache-dir
```

How to clone this repo

```bash
git clone --recursive https://github.com/NexaAI/nexa-sdk
```

If you forgot to use `--recursive`, run the following command inside the cloned repository to add the submodules:

```bash
git submodule update --init --recursive
```

Then build and install the package from the repository root:

```bash
pip install -e .
```

Supported models:

| Model | Type | Format | Command |
|---|---|---|---|
| octopus-v2 | NLP | GGUF | nexa run octopus-v2 |
| octopus-v4 | NLP | GGUF | nexa run octopus-v4 |
| tinyllama | NLP | GGUF | nexa run tinyllama |
| llama2 | NLP | GGUF/ONNX | nexa run llama2 |
| llama3 | NLP | GGUF/ONNX | nexa run llama3 |
| llama3.1 | NLP | GGUF/ONNX | nexa run llama3.1 |
| gemma | NLP | GGUF/ONNX | nexa run gemma |
| gemma2 | NLP | GGUF | nexa run gemma2 |
| qwen1.5 | NLP | GGUF | nexa run qwen1.5 |
| qwen2 | NLP | GGUF/ONNX | nexa run qwen2 |
| qwen2.5 | NLP | GGUF | nexa run qwen2.5 |
| mathqwen | NLP | GGUF | nexa run mathqwen |
| mistral | NLP | GGUF/ONNX | nexa run mistral |
| codegemma | NLP | GGUF | nexa run codegemma |
| codellama | NLP | GGUF | nexa run codellama |
| codeqwen | NLP | GGUF | nexa run codeqwen |
| deepseek-coder | NLP | GGUF | nexa run deepseek-coder |
| dolphin-mistral | NLP | GGUF | nexa run dolphin-mistral |
| phi2 | NLP | GGUF | nexa run phi2 |
| phi3 | NLP | GGUF/ONNX | nexa run phi3 |
| llama2-uncensored | NLP | GGUF | nexa run llama2-uncensored |
| llama3-uncensored | NLP | GGUF | nexa run llama3-uncensored |
| llama2-function-calling | NLP | GGUF | nexa run llama2-function-calling |
| nanollava | Multimodal | GGUF | nexa run nanollava |
| llava-phi3 | Multimodal | GGUF | nexa run llava-phi3 |
| llava-llama3 | Multimodal | GGUF | nexa run llava-llama3 |
| llava1.6-mistral | Multimodal | GGUF | nexa run llava1.6-mistral |
| llava1.6-vicuna | Multimodal | GGUF | nexa run llava1.6-vicuna |
| stable-diffusion-v1-4 | Computer Vision | GGUF | nexa run sd1-4 |
| stable-diffusion-v1-5 | Computer Vision | GGUF/ONNX | nexa run sd1-5 |
| lcm-dreamshaper | Computer Vision | GGUF/ONNX | nexa run lcm-dreamshaper |
| hassaku-lcm | Computer Vision | GGUF | nexa run hassaku-lcm |
| anything-lcm | Computer Vision | GGUF | nexa run anything-lcm |
| faster-whisper-tiny | Audio | BIN | nexa run faster-whisper-tiny |
| faster-whisper-small | Audio | BIN | nexa run faster-whisper-small |
| faster-whisper-medium | Audio | BIN | nexa run faster-whisper-medium |
| faster-whisper-base | Audio | BIN | nexa run faster-whisper-base |
| faster-whisper-large | Audio | BIN | nexa run faster-whisper-large |

Here's a brief overview of the main CLI commands:

- `nexa run`: Run inference for various tasks using GGUF models.
- `nexa onnx`: Run inference for various tasks using ONNX models.
- `nexa server`: Run the Nexa AI Text Generation Service.
- `nexa pull`: Pull a model from the official repository or the hub.
- `nexa remove`: Remove a model from the local machine.
- `nexa clean`: Clean up all model files.
- `nexa list`: List all models on the local machine.
- `nexa login`: Log in to the Nexa API.
- `nexa whoami`: Show current user information.
- `nexa logout`: Log out of the Nexa API.
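The split between `nexa run` (GGUF) and `nexa onnx` (ONNX) can be scripted from Python. The sketch below is a hypothetical wrapper, not part of Nexa SDK, and it rests on one loudly flagged assumption: that `nexa onnx` takes a model name as its first positional argument the same way `nexa run llama3` does in the model table. This README only shows the `nexa run <model>` form, so check the CLI Reference before relying on the ONNX branch. BIN models (faster-whisper) are mapped to `nexa run`, as in the table.

```python
import shutil
import subprocess
from typing import List

# Format -> subcommand. The "onnx" entry is an assumption (see lead-in);
# "bin" follows the table, where faster-whisper models use `nexa run`.
_SUBCOMMAND_BY_FORMAT = {"gguf": "run", "bin": "run", "onnx": "onnx"}


def nexa_command(model: str, model_format: str = "gguf") -> List[str]:
    """Build the argv for running `model` with the Nexa CLI (hypothetical helper)."""
    try:
        sub = _SUBCOMMAND_BY_FORMAT[model_format.lower()]
    except KeyError:
        raise ValueError(f"unsupported model format: {model_format!r}") from None
    return ["nexa", sub, model]


def run_nexa(model: str, model_format: str = "gguf") -> int:
    """Launch the CLI in the foreground and return its exit code."""
    if shutil.which("nexa") is None:
        raise FileNotFoundError("`nexa` is not on PATH; install nexaai first")
    return subprocess.run(nexa_command(model, model_format)).returncode


if __name__ == "__main__":
    # Only prints the command; call run_nexa(...) to actually start a session.
    print(" ".join(nexa_command("qwen2", "onnx")))  # nexa onnx qwen2
```

Building the argument list separately from launching the process keeps the format logic testable without the CLI installed, and passing a list to `subprocess.run` avoids shell quoting issues with model names.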

For detailed information on CLI commands and usage, please refer to the CLI Reference document.

To start a local server with models on your computer, use the `nexa server` command.

For detailed information on server setup, API endpoints, and usage examples, please refer to the Server Reference document.
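Because the server is described as OpenAI-compatible with JSON schema mode for function calling, a client request presumably looks like an OpenAI Chat Completions call in which each callable function is described by a JSON Schema. The sketch below builds such a request with the standard library but does not send it. The base URL `http://localhost:8000`, the `/v1/chat/completions` path, and the `tools` field are assumptions borrowed from OpenAI's API, not from this README, so check the Server Reference for what Nexa actually exposes. `build_chat_request` and the `get_weather` function are hypothetical.

```python
import json
import urllib.request
from typing import Any, Dict, List, Optional

# Assumptions (not confirmed by this README): host, port, and endpoint path.
BASE_URL = "http://localhost:8000"
CHAT_PATH = "/v1/chat/completions"


def build_chat_request(
    messages: List[Dict[str, Any]],
    tools: Optional[List[Dict[str, Any]]] = None,
    stream: bool = False,
    model: Optional[str] = None,
    base_url: str = BASE_URL,
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body: Dict[str, Any] = {"messages": messages, "stream": stream}
    if model is not None:
        body["model"] = model
    if tools:
        body["tools"] = tools  # OpenAI-style function definitions
    return urllib.request.Request(
        base_url.rstrip("/") + CHAT_PATH,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# A hypothetical function whose arguments are described with JSON Schema.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}

if __name__ == "__main__":
    req = build_chat_request(
        [{"role": "user", "content": "What's the weather in Paris?"}],
        tools=[WEATHER_TOOL],
    )
    print(req.get_method(), req.full_url)
    # With `nexa server` running, the request could be sent with:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp))
```

Separating request construction from sending lets you inspect the exact JSON body before pointing it at a running server.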

We would like to thank the following projects: