Fast Style Transfer in TensorFlow

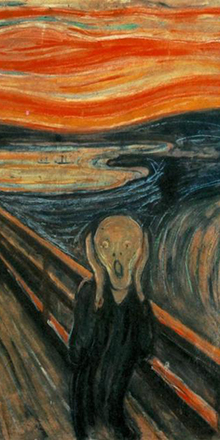

Add famous painting styles to any photo in a fraction of a second! You can even style videos!

It takes 100ms on a 2015 Titan X to style the MIT Stata Center (1024×680) like Udnie, by Francis Picabia.

Our implementation is based on a combination of Gatys' A Neural Algorithm of Artistic Style, Johnson's Perceptual Losses for Real-Time Style Transfer and Super-Resolution, and Ulyanov's Instance Normalization.

Here we transformed every frame in a video, then combined the results. Click to go to the full demo on YouTube! The style here is Udnie, as above.

See how to generate these videos here!

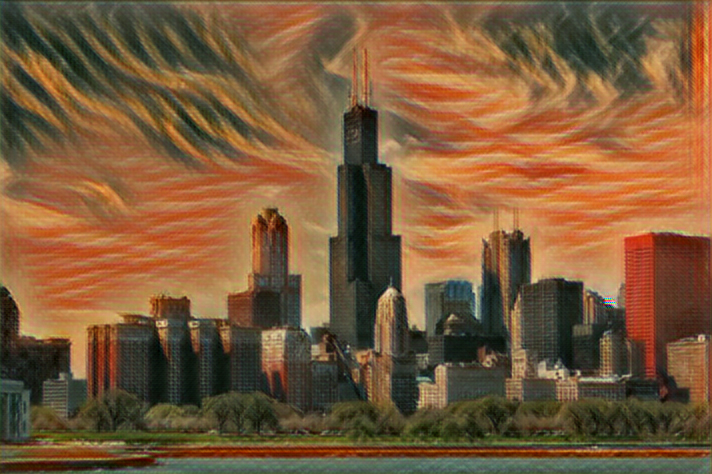

We added styles from various paintings to a photo of Chicago. Click on thumbnails to see full applied style images.

Our implementation uses TensorFlow to train a fast style transfer network. We use roughly the same transformation network as described in Johnson, except that batch normalization is replaced with Ulyanov's instance normalization, and the scaling/offset of the output tanh layer is slightly different. We use a loss function close to the one described in Gatys, using VGG19 instead of VGG16 and typically using "shallower" layers than in Johnson's implementation (e.g. we use relu1_1 rather than relu1_2). Empirically, this results in larger-scale style features in transformations.
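To make the two ideas in the paragraph above concrete, here is a minimal NumPy sketch of instance normalization and of a Gram-matrix style loss in the spirit of Gatys. It is an illustration under stated assumptions, not the TensorFlow code in this repository: the function names, the `eps` value, the `(batch, height, width, channels)` layout, and the Gram normalization constant are all our own choices, and the learned scale and shift that usually follow the normalization are omitted.

```python
import numpy as np


def instance_norm(x, eps=1e-5):
    """Normalize every (image, channel) pair over its own spatial positions.

    x is assumed to have shape (batch, height, width, channels). Unlike batch
    normalization, no statistics are shared across the batch axis.
    """
    mean = x.mean(axis=(1, 2), keepdims=True)
    var = x.var(axis=(1, 2), keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def gram_matrix(features):
    """Channel-by-channel correlations of one feature map of shape (H, W, C)."""
    h, w, c = features.shape
    flat = features.reshape(h * w, c)
    # Dividing by the number of elements is an assumed normalization.
    return flat.T.dot(flat) / (h * w * c)


def style_loss(generated, style):
    """Sum of squared differences between two Gram matrices (a single layer)."""
    diff = gram_matrix(generated) - gram_matrix(style)
    return float(np.sum(diff ** 2))
```

In a full loss, `generated` and `style` would be VGG19 activations (for example at relu1_1) of the stylized image and the style painting, and the per-layer losses would be summed over several layers.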

Use style.py to train a new style transfer network. Run python style.py to view all the possible parameters. Training takes 4-6 hours on a Maxwell Titan X. More detailed documentation here. Before you run this, you should run setup.sh. Example usage:

    python style.py --style examples/style/wave.jpg \
      --checkpoint-dir saver \
      --test examples/content/chicago.jpg \
      --test-dir test \
      --content-weight 1.5e1 \
      --checkpoint-iterations 1000 \
      --batch-size 20

Use evaluate.py to evaluate a style transfer network. Run python evaluate.py to view all the possible parameters. Evaluation takes 100 ms per frame (when batch size is 1) on a Maxwell Titan X and several seconds per frame on a CPU. More detailed documentation here. Models for evaluation are located here. Example usage:

    python evaluate.py --style examples/style/wave.jpg \
      --checkpoint-dir saver \
      --in-path all_frames/ \
      --out-path transformed_frames/ \
      --device /gpu:2 \
      --batch-size 20

Use transform_video.py to apply a style to a video. Run python transform_video.py to view all the possible parameters. Requires ffmpeg. More detailed documentation here. Example usage:

    python transform_video.py --in-path shibe.mp4 \
      --checkpoint-dir saver \
      --out-path shibe_la_muse.mp4 \
      --device /gpu:0 \
      --batch-size 20
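Styling a video comes down to three steps: split the video into frames, style the frames in batches, and encode the styled frames back into a video. The sketch below shows the two ffmpeg steps and the batching, assuming PNG frames named `frame_%05d.png` and a fixed frame rate. The helper names, the file naming scheme, and the codec flags are our own illustrative choices and are not necessarily what transform_video.py does internally; audio is not carried over.

```python
def extract_frames_cmd(video_path, frames_dir):
    """ffmpeg arguments that dump every frame of a video as numbered PNGs."""
    return ["ffmpeg", "-i", video_path, frames_dir + "/frame_%05d.png"]


def assemble_video_cmd(frames_dir, out_path, fps=30):
    """ffmpeg arguments that encode numbered PNG frames back into a video.

    fps is an assumption; it should match the frame rate of the source video.
    """
    return [
        "ffmpeg",
        "-framerate", str(fps),
        "-i", frames_dir + "/frame_%05d.png",
        "-c:v", "libx264",
        "-pix_fmt", "yuv420p",
        out_path,
    ]


def batches(items, batch_size):
    """Split a list into consecutive chunks of at most batch_size items."""
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]
```

Each command list can be passed to `subprocess.check_call`. Between the two ffmpeg calls, the frames directory would be styled with evaluate.py using `--in-path` and `--out-path`, as in the evaluation example above. With `--batch-size 20`, a 45-frame clip is processed as batches of 20, 20, and 5 frames.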

You will need the following to run the above:

- TensorFlow 0.11

- Python 2, Pillow, scipy, numpy

- If you want to train (and don't want to wait for 4 months):

  - A decent GPU

  - All the required NVIDIA software to run TF on a GPU (CUDA, etc.)

- ffmpeg if you want to stylize video

- This project could not have happened without the advice (and GPU access) given by Anish Athalye.

- The project also borrowed some code from Anish's Neural Style.

- Some README/docs formatting was borrowed from Justin Johnson's Fast Neural Style.

- The image of the Stata Center at the very beginning of the README was taken by Juan Paulo.

Copyright (c) 2016 Logan Engstrom. Contact me for commercial use (email: engstrom at my university's domain dot edu). Free for research/noncommercial use, as long as proper attribution is given and this copyright notice is retained.