This repository is the official PyTorch implementation of the papers:

- White-Box Transformers via Sparse Rate Reduction [paper link]. By Yaodong Yu (UC Berkeley), Sam Buchanan (TTIC), Druv Pai (UC Berkeley), Tianzhe Chu (UC Berkeley), Ziyang Wu (UC Berkeley), Shengbang Tong (UC Berkeley), Benjamin D Haeffele (Johns Hopkins University), and Yi Ma (UC Berkeley). 2023.

- Emergence of Segmentation with Minimalistic White-Box Transformers [paper link]. By Yaodong Yu* (UC Berkeley), Tianzhe Chu* (UC Berkeley & ShanghaiTech U), Shengbang Tong (UC Berkeley & NYU), Ziyang Wu (UC Berkeley), Druv Pai (UC Berkeley), Sam Buchanan (TTIC), and Yi Ma (UC Berkeley & HKU). 2023. (* equal contribution)

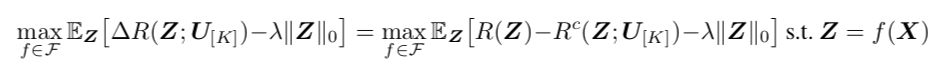

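CRATE (Coding RAte reduction TransformEr) is a white-box (mathematically interpretable) transformer architecture in which each layer performs a single step of an alternating minimization algorithm to optimize the sparse rate reduction objective

$$
\max_{f\in\mathcal{F}} \mathbb{E}_{\boldsymbol{Z}}\big[\Delta R(\boldsymbol{Z}; \boldsymbol{U}_{[K]}) - \lambda\|\boldsymbol{Z}\|_0\big]
= \max_{f\in\mathcal{F}} \mathbb{E}_{\boldsymbol{Z}}\big[R(\boldsymbol{Z}) - R^{c}(\boldsymbol{Z}; \boldsymbol{U}_{[K]}) - \lambda\|\boldsymbol{Z}\|_0\big],
$$

where the $\ell^0$ norm $\|\boldsymbol{Z}\|_0$ promotes the sparsity of the final token representations $\boldsymbol{Z} = f(\boldsymbol{X})$, $R$ is the lossy coding rate of the tokens, and $R^{c}$ is their coding rate with respect to the $K$ subspaces $\boldsymbol{U}_{[K]}$.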
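To make the sparse rate reduction objective concrete, here is a minimal NumPy sketch that evaluates the quantity inside the expectation, $R(\boldsymbol{Z}) - R^{c}(\boldsymbol{Z};\boldsymbol{U}_{[K]}) - \lambda\|\boldsymbol{Z}\|_0$, for a single token matrix $\boldsymbol{Z}\in\mathbb{R}^{d\times N}$. It assumes the coding-rate definitions used in the CRATE paper, $R(\boldsymbol{Z}) = \frac{1}{2}\log\det\big(\boldsymbol{I} + \frac{d}{N\epsilon^2}\boldsymbol{Z}\boldsymbol{Z}^*\big)$ and $R^{c}(\boldsymbol{Z};\boldsymbol{U}_{[K]}) = \sum_k R(\boldsymbol{U}_k^*\boldsymbol{Z})$. The function names and the values of `eps` and `lam` are illustrative choices, not taken from this repository.

```python
import numpy as np


def coding_rate(Z: np.ndarray, eps: float = 0.5) -> float:
    """R(Z) = 1/2 * logdet(I + d / (N * eps^2) * Z Z^T) for tokens Z of shape (d, N)."""
    d, N = Z.shape
    alpha = d / (N * eps ** 2)
    # The matrix is symmetric positive definite, so slogdet's sign is +1.
    _, logdet = np.linalg.slogdet(np.eye(d) + alpha * Z @ Z.T)
    return 0.5 * logdet


def subspace_coding_rate(Z: np.ndarray, U_list, eps: float = 0.5) -> float:
    """R^c(Z; U_[K]) = sum_k R(U_k^T Z).

    Each U_k has shape (d, p), so coding_rate sees a (p, N) matrix and
    uses alpha = p / (N * eps^2) for that subspace.
    """
    return sum(coding_rate(U_k.T @ Z, eps) for U_k in U_list)


def sparse_rate_reduction(Z: np.ndarray, U_list, lam: float = 0.1, eps: float = 0.5) -> float:
    """R(Z) - R^c(Z; U_[K]) - lam * ||Z||_0, where ||Z||_0 counts nonzero entries."""
    delta_R = coding_rate(Z, eps) - subspace_coding_rate(Z, U_list, eps)
    return delta_R - lam * np.count_nonzero(Z)


# Toy example: K orthonormal subspaces of dimension p = d // K.
rng = np.random.default_rng(0)
d, N, K = 16, 64, 4
p = d // K
Q, _ = np.linalg.qr(rng.standard_normal((d, d)))
U_list = [Q[:, k * p:(k + 1) * p] for k in range(K)]
Z = rng.standard_normal((d, N))
print(sparse_rate_reduction(Z, U_list))
```

Using `slogdet` rather than `log(det(...))` avoids overflow when $d$ is large; the $\ell^0$ term is computed literally here, whereas in CRATE sparsity is promoted by the ISTA step rather than by differentiating this count.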

Figure 1 presents an overview of the pipeline for our proposed CRATE architecture:

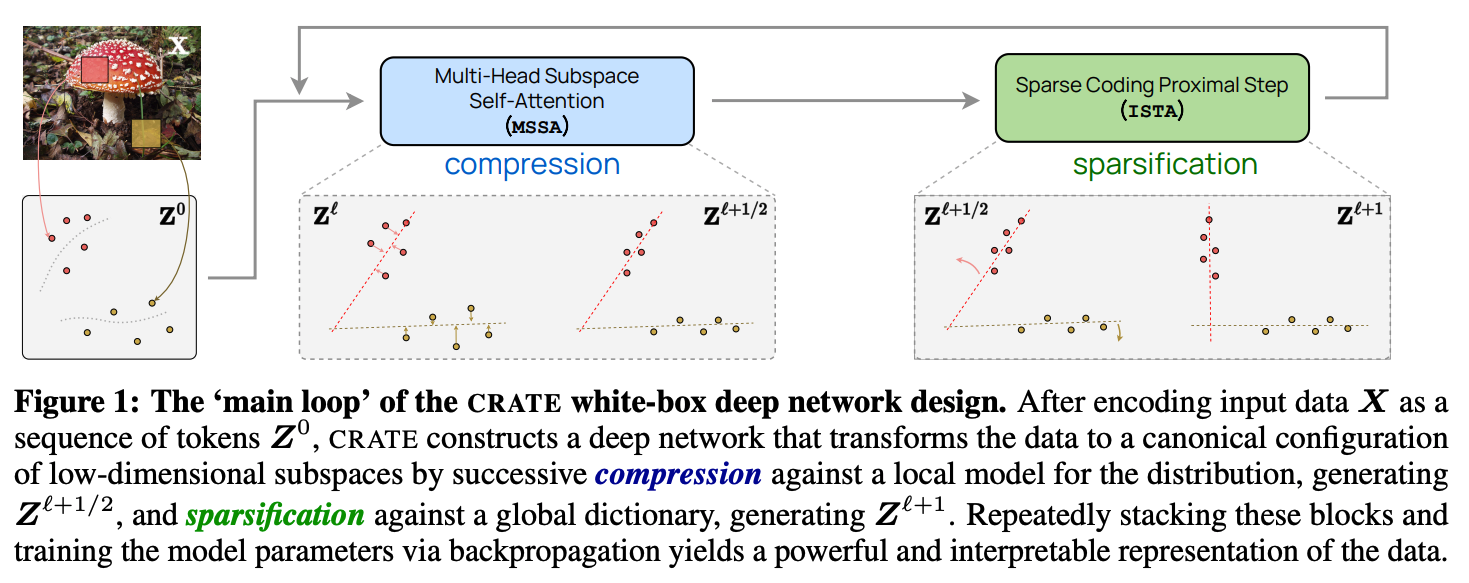

Figure 2 shows the overall architecture of one block of CRATE:

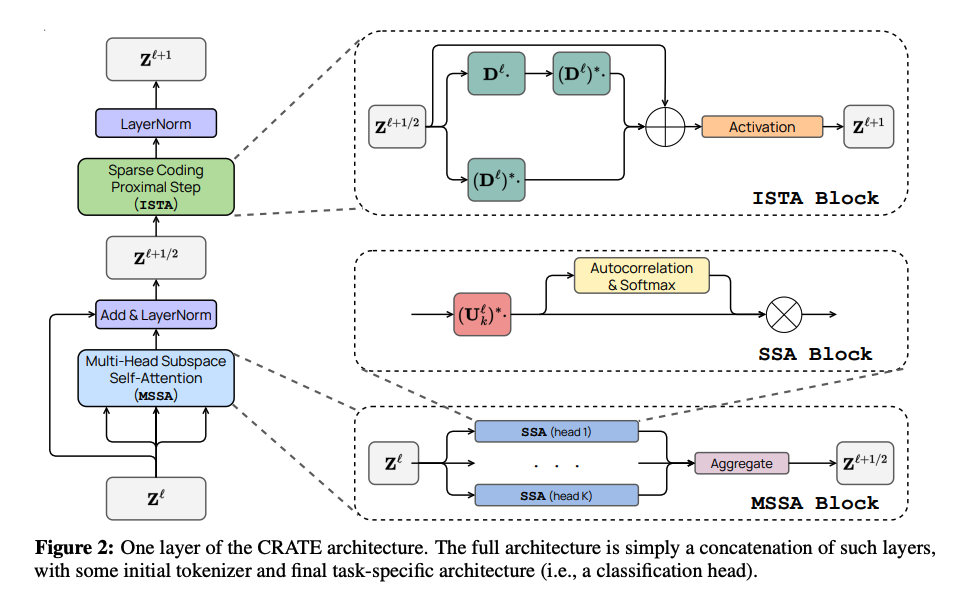
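Each block has two parts: a multi-head subspace self-attention (MSSA) step that compresses the tokens against the subspaces $\boldsymbol{U}_{[K]}$, followed by an ISTA step that sparsifies them against a dictionary $\boldsymbol{D}$. The NumPy sketch below illustrates these two updates as described in the CRATE paper, with tokens stored as rows. It is not the repository's `model/crate.py`: LayerNorm is omitted, any scaling constants are folded into the step size `kappa`, and `kappa`, `eta`, and `lam` are illustrative values.

```python
import numpy as np


def softmax_rows(S: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    S = S - S.max(axis=-1, keepdims=True)
    E = np.exp(S)
    return E / E.sum(axis=-1, keepdims=True)


def mssa(X: np.ndarray, U_list) -> np.ndarray:
    """Multi-head subspace self-attention for tokens X of shape (N, d).

    Each head projects the tokens onto its subspace U_k (d, p); the projected
    tokens serve as query, key and value, and the result is mapped back to R^d.
    """
    out = np.zeros_like(X)
    for U_k in U_list:
        W = X @ U_k                      # (N, p) projected tokens
        A = softmax_rows(W @ W.T)        # (N, N) attention weights
        out += (A @ W) @ U_k.T           # back to (N, d)
    return out


def ista(X: np.ndarray, D: np.ndarray, eta: float = 0.1, lam: float = 0.1) -> np.ndarray:
    """One non-negative ISTA step toward a sparse code of X against dictionary D (d, d).

    Column form: ReLU(Z + eta * D^T (Z - D Z) - eta * lam); here tokens are rows.
    """
    residual = X - X @ D.T               # rows of (Z - D Z)^T
    return np.maximum(X + eta * residual @ D - eta * lam, 0.0)


def crate_block(X, U_list, D, kappa=1.0, eta=0.1, lam=0.1):
    """Compression (MSSA with a residual connection) followed by sparsification (ISTA)."""
    X_half = X + kappa * mssa(X, U_list)
    return ista(X_half, D, eta, lam)


rng = np.random.default_rng(0)
N, d, K = 10, 8, 2
p = d // K
U_list = [np.linalg.qr(rng.standard_normal((d, p)))[0] for _ in range(K)]
D = rng.standard_normal((d, d)) / np.sqrt(d)
X = rng.standard_normal((N, d))
Z_next = crate_block(X, U_list, D)
print(Z_next.shape, (Z_next == 0).mean())
```

Because the projected tokens act as query, key, and value at once, each head needs only one projection matrix $\boldsymbol{U}_k$ instead of the three used in a standard attention head.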

In Figure 3, we measure the compression term and the sparsity term of the sparse rate reduction objective across the layers of CRATE:

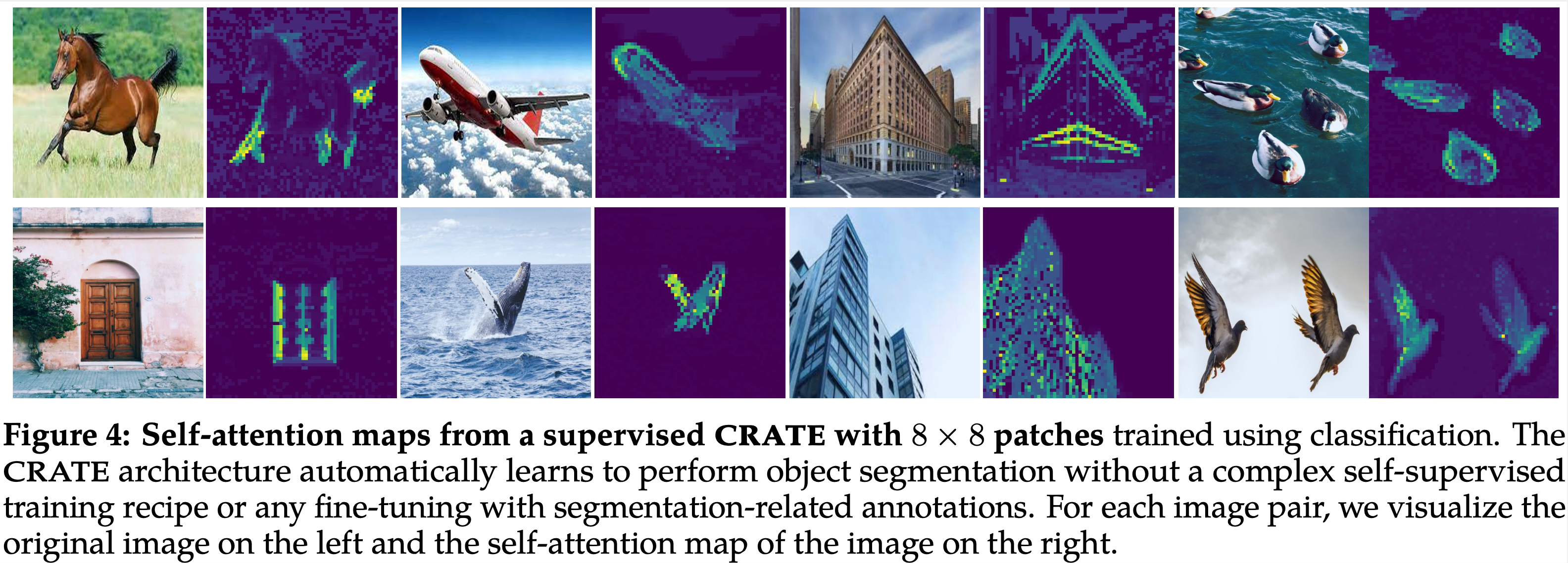

In Figure 4, we visualize self-attention maps from a supervised CRATE with 8x8 patches (similar to the ones shown in DINO 🦖):

A CRATE model can be defined with the following code (the parameters below are for CRATE-Tiny):

```python
from model.crate import CRATE

dim = 384
n_heads = 6
depth = 12

model = CRATE(image_size=224,
              patch_size=16,
              num_classes=1000,
              dim=dim,
              depth=depth,
              heads=n_heads,
              dim_head=dim // n_heads)
```

| model | dim | n_heads | depth | pre-trained checkpoint |
|---|---|---|---|---|
| CRATE-T(iny) | 384 | 6 | 12 | TODO |
| CRATE-S(mall) | 576 | 12 | 12 | download link |
| CRATE-B(ase) | 768 | 12 | 12 | TODO |
| CRATE-L(arge) | 1024 | 16 | 24 | TODO |
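The variants in the table are distinguished by `dim`, `n_heads`, and `depth`. A small, hypothetical helper that turns the table into keyword arguments for the `CRATE` constructor might look like this; `CRATE_VARIANTS` and `crate_kwargs` are illustrative names, not part of the repository, and `image_size`, `patch_size`, and `num_classes` are assumed to stay as in the CRATE-Tiny example.

```python
# Hypothetical helper: variant table -> keyword arguments for model.crate.CRATE.
CRATE_VARIANTS = {
    "tiny": {"dim": 384, "heads": 6, "depth": 12},
    "small": {"dim": 576, "heads": 12, "depth": 12},
    "base": {"dim": 768, "heads": 12, "depth": 12},
    "large": {"dim": 1024, "heads": 16, "depth": 24},
}


def crate_kwargs(variant: str, image_size: int = 224, patch_size: int = 16,
                 num_classes: int = 1000) -> dict:
    cfg = CRATE_VARIANTS[variant]
    # Each head works in a subspace of dimension dim // heads, so dim must split evenly.
    if cfg["dim"] % cfg["heads"] != 0:
        raise ValueError("dim must be divisible by the number of heads")
    return dict(image_size=image_size, patch_size=patch_size, num_classes=num_classes,
                dim=cfg["dim"], depth=cfg["depth"], heads=cfg["heads"],
                dim_head=cfg["dim"] // cfg["heads"])


for name in CRATE_VARIANTS:
    kw = crate_kwargs(name)
    print(name, kw["dim"], kw["heads"], kw["dim_head"])
```

With the repository on the Python path, `CRATE(**crate_kwargs("small"))` should then build a model with the CRATE-Small dimensions, assuming the remaining arguments are unchanged.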

To train a CRATE model on ImageNet-1K, run `main.py`. For example, we use the following command to train CRATE-Tiny on ImageNet-1K:

```bash
python main.py \
  --arch CRATE_tiny \
  --batch-size 512 \
  --epochs 200 \
  --optimizer Lion \
  --lr 0.0002 \
  --weight-decay 0.05 \
  --print-freq 25 \
  --data DATA_DIR
```

Replace `DATA_DIR` with the path to the ImageNet folder containing the `train` and `val` folders.
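Before launching a long run, it can help to check that `DATA_DIR` has the expected `train` and `val` folders. The sketch below additionally assumes an ImageFolder-style layout with one subfolder per class inside each split; that inner structure is an assumption, and `check_imagenet_dir` is a hypothetical helper, not part of the repository.

```python
from pathlib import Path


def check_imagenet_dir(data_dir: str) -> dict:
    """Count class subfolders in DATA_DIR/train and DATA_DIR/val.

    Raises FileNotFoundError if either split folder is missing.
    Assumes an ImageFolder-style layout: <split>/<class_name>/<image files>.
    """
    root = Path(data_dir)
    counts = {}
    for split in ("train", "val"):
        split_dir = root / split
        if not split_dir.is_dir():
            raise FileNotFoundError(f"expected folder {split_dir}")
        # Only directories count as classes; stray files are ignored.
        counts[split] = sum(1 for entry in split_dir.iterdir() if entry.is_dir())
    return counts
```

Under that layout, `check_imagenet_dir("/path/to/imagenet")` should report 1000 classes for both splits of ImageNet-1K.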

To fine-tune a pre-trained CRATE on CIFAR-10, or to train one from random initialization, use `finetune.py`, for example:

```bash
python finetune.py \
  --bs 256 \
  --net CRATE_tiny \
  --opt adamW \
  --lr 5e-5 \
  --n_epochs 200 \
  --randomaug 1 \
  --data cifar10 \
  --ckpt_dir CKPT_DIR \
  --data_dir DATA_DIR
```

Replace `CKPT_DIR` with the path to the pre-trained CRATE weights and `DATA_DIR` with the path to the CIFAR-10 dataset. If `CKPT_DIR` is `None`, the script trains CRATE from random initialization on CIFAR-10.
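When ImageNet-1K weights are transferred to CIFAR-10, the classification head changes size (1000 vs. 10 outputs), so not every checkpoint tensor fits the new model. If you load the pre-trained weights into a model yourself, one common approach is to keep only the tensors whose names and shapes match and load them with `strict=False`. The helper below is a hypothetical sketch of that filtering step; it only relies on each value having a `.shape`, so it works on PyTorch state dicts and, as in the example, on NumPy arrays. The parameter names `embed.weight` and `head.weight` are made up for illustration.

```python
import numpy as np


def filter_matching_weights(pretrained: dict, target: dict):
    """Split a checkpoint into tensors that fit the target model and those that don't.

    Both arguments map parameter names to array-like values with a `.shape`
    (e.g. torch state dicts). Returns (matched, skipped_names).
    """
    matched, skipped = {}, []
    for name, value in pretrained.items():
        if name in target and tuple(target[name].shape) == tuple(value.shape):
            matched[name] = value
        else:
            skipped.append(name)
    return matched, skipped


# Hypothetical parameter names; in PyTorch this would typically be followed by
#   missing, unexpected = model.load_state_dict(matched, strict=False)
ckpt = {"embed.weight": np.zeros((384, 768)), "head.weight": np.zeros((1000, 384))}
model_sd = {"embed.weight": np.ones((384, 768)), "head.weight": np.ones((10, 384))}
matched, skipped = filter_matching_weights(ckpt, model_sd)
print(sorted(matched), skipped)
```

Returning the skipped names explicitly makes it easy to confirm that only the head was dropped rather than silently losing backbone weights to a naming mismatch.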

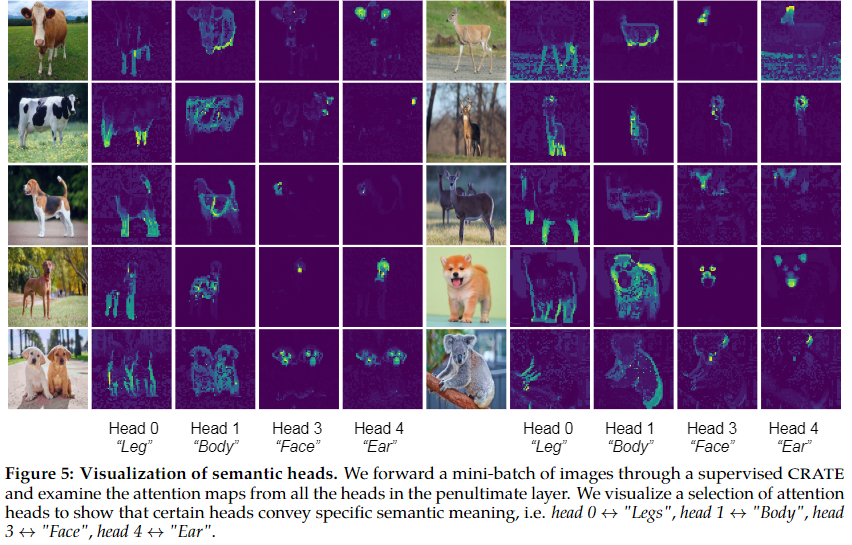

We provide a Colab Jupyter notebook to visualize the segmentations that emerge in a supervised CRATE. The demo reproduces the visualizations in Figure 4 and Figure 5.

Link: crate-emergence.ipynb (in colab)
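The notebook covers the full pipeline. As a rough illustration of DINO-style attention maps like those in Figure 4, the usual recipe takes the last layer's attention from the [CLS] token to every patch token and reshapes each head's row into the patch grid. The sketch below assumes you have already extracted an attention array of shape `(heads, 1 + num_patches, 1 + num_patches)` with the [CLS] token at index 0; how to obtain it from a CRATE model (for example with a forward hook) depends on the repository code and is not shown. Both function names are hypothetical.

```python
import numpy as np


def cls_attention_maps(attn: np.ndarray, image_size: int, patch_size: int = 8) -> np.ndarray:
    """Per-head [CLS]-to-patch attention reshaped to the patch grid.

    attn: (heads, 1 + P, 1 + P) attention weights with [CLS] at index 0,
          where P = (image_size // patch_size) ** 2.
    Returns an array of shape (heads, grid, grid).
    """
    grid = image_size // patch_size
    heads, n_tokens, _ = attn.shape
    if n_tokens != 1 + grid * grid:
        raise ValueError(f"expected {1 + grid * grid} tokens, got {n_tokens}")
    cls_to_patches = attn[:, 0, 1:]                    # (heads, P)
    return cls_to_patches.reshape(heads, grid, grid)   # row-major patch order


def upsample_nearest(maps: np.ndarray, patch_size: int = 8) -> np.ndarray:
    """Nearest-neighbour upsampling of (heads, grid, grid) maps to pixel resolution."""
    return maps.repeat(patch_size, axis=1).repeat(patch_size, axis=2)


# Example with random attention for a 224x224 image and 8x8 patches (28x28 grid).
rng = np.random.default_rng(0)
heads, grid = 6, 224 // 8
e = np.exp(rng.standard_normal((heads, 1 + grid * grid, 1 + grid * grid)))
attn = e / e.sum(axis=-1, keepdims=True)
maps = cls_attention_maps(attn, image_size=224)
print(maps.shape, upsample_nearest(maps).shape)  # (6, 28, 28) (6, 224, 224)
```

Nearest-neighbour upsampling keeps each patch's value as a visible block; smoother overlays typically use bilinear interpolation instead.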

For technical details and full experimental results, please see the CRATE paper and the CRATE segmentation paper. Please consider citing our work if you find it helpful:

```bibtex
@article{yu2023white,
  title={White-Box Transformers via Sparse Rate Reduction},
  author={Yu, Yaodong and Buchanan, Sam and Pai, Druv and Chu, Tianzhe and Wu, Ziyang and Tong, Shengbang and Haeffele, Benjamin D and Ma, Yi},
  journal={arXiv preprint arXiv:2306.01129},
  year={2023}
}

@article{yu2023emergence,
  title={Emergence of Segmentation with Minimalistic White-Box Transformers},
  author={Yu, Yaodong and Chu, Tianzhe and Tong, Shengbang and Wu, Ziyang and Pai, Druv and Buchanan, Sam and Ma, Yi},
  journal={arXiv preprint arXiv:2308.16271},
  year={2023}
}
```