The objective is to serve a local llama-2 model by mimicking an OpenAI API service.

The llama-2 model runs on the GPU using the ggml-sys crate with specific compilation flags.

The easiest way to get started is to use the official Docker container. Make sure you have docker and docker-compose installed on your machine (example install for ubuntu20.04).

cria provides two docker images: one for CPU-only deployments and a second, GPU-accelerated image. To use the GPU image, you need to install the NVIDIA Container Toolkit. We also recommend using NVIDIA drivers with CUDA version 11.7 or higher.

To deploy the cria gpu version using docker-compose:

- Clone the repo:

git clone git@github.com:AmineDiro/cria.git

cd cria/docker

- The API loads the model located at /app/model.bin by default. Edit the docker-compose file so that docker bind mounts your ggml model path. You can also change the environment variables to match your configuration. Alternatively, the easiest way is to set CRIA_MODEL_PATH in a docker/.env file:

# .env

CRIA_MODEL_PATH=/path/to/ggml/model

# Other environment variables to set

CRIA_SERVICE_NAME=cria

CRIA_HOST=0.0.0.0

CRIA_PORT=3000

CRIA_MODEL_ARCHITECTURE=llama

CRIA_USE_GPU=true

CRIA_GPU_LAYERS=32

CRIA_ZIPKIN_ENDPOINT=http://zipkin-server:9411/api/v2/spans

- Run docker-compose to start the cria API server and the zipkin server:

docker compose -f docker-compose-gpu.yaml up -d

- Enjoy using your local LLM API server 🤟 !
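Before starting the containers, it can help to confirm that the .env file points at a real model file on the host. The following is a minimal sketch rather than part of cria: the helper names parse_env and check_model_path are hypothetical, and it assumes the .env file uses plain KEY=VALUE lines like the sample above.

```python
from pathlib import Path


def parse_env(text: str) -> dict[str, str]:
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip()
    return values


def check_model_path(env: dict[str, str]) -> bool:
    """Return True if CRIA_MODEL_PATH is set and is an existing file."""
    path = env.get("CRIA_MODEL_PATH", "")
    return bool(path) and Path(path).is_file()


if __name__ == "__main__":
    # Assumes the script is run from the cria/docker directory.
    env_file = Path(".env")
    env = parse_env(env_file.read_text()) if env_file.exists() else {}
    if check_model_path(env):
        print("model path OK")
    else:
        print("CRIA_MODEL_PATH is missing or does not point to a file")
```

The check only looks at the host path; whether the container sees the file still depends on the bind mount in the docker-compose file.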

- Git clone project

git clone git@github.com:AmineDiro/cria.git

cd cria/

- Build project ( I ❤️ cargo !).

cargo b --release

  - For cuBLAS (NVIDIA GPU) acceleration use cargo b --release --features cublas

  - For metal acceleration use cargo b --release --features metal

❗ NOTE: If you have issues building for GPU, check out the building issues section

- Download GGML .bin Llama-2 quantized model (for example llama-2-7b)

- Run API, use the use-gpu flag to offload model layers to your GPU

./target/cria -a llama --model {MODEL_BIN_PATH} --use-gpu --gpu-layers 32

All the parameters can be passed as environment variables or command line arguments. Here is the reference for the command line arguments:

./target/cria --help

Usage: cria [OPTIONS]

Options:

-a, --model-architecture <MODEL_ARCHITECTURE> [default: llama]

--model <MODEL_PATH>

-v, --tokenizer-path <TOKENIZER_PATH>

-r, --tokenizer-repository <TOKENIZER_REPOSITORY>

-H, --host <HOST> [default: 0.0.0.0]

-p, --port <PORT> [default: 3000]

-m, --prefer-mmap

-c, --context-size <CONTEXT_SIZE> [default: 2048]

-l, --lora-adapters <LORA_ADAPTERS>

-u, --use-gpu

-g, --gpu-layers <GPU_LAYERS>

--n-gqa <N_GQA>

Grouped Query attention : Specify -gqa 8 for 70B models to work

-z, --zipkin-endpoint <ZIPKIN_ENDPOINT>

-h, --help Print help

For environment variables, just prefix the argument with CRIA_ and use uppercase letters. For example, to set the model path, you can use the CRIA_MODEL environment variable.

There is an example docker/.env.sample file in the project root directory.
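The naming rule can be illustrated with a short Python sketch. The function env_name is hypothetical, and it assumes that hyphens in a flag become underscores, which matches names such as CRIA_USE_GPU and CRIA_GPU_LAYERS in the .env example. The names in docker/.env.sample remain the authoritative reference.

```python
def env_name(flag: str, prefix: str = "CRIA_") -> str:
    """Map a long CLI flag such as '--gpu-layers' to 'CRIA_GPU_LAYERS'."""
    # Assumption: hyphens are replaced by underscores before uppercasing.
    return prefix + flag.lstrip("-").replace("-", "_").upper()


for flag in ("--use-gpu", "--gpu-layers", "--zipkin-endpoint"):
    print(f"{flag:20} -> {env_name(flag)}")
```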

We are exporting Prometheus metrics via the /metrics endpoint.

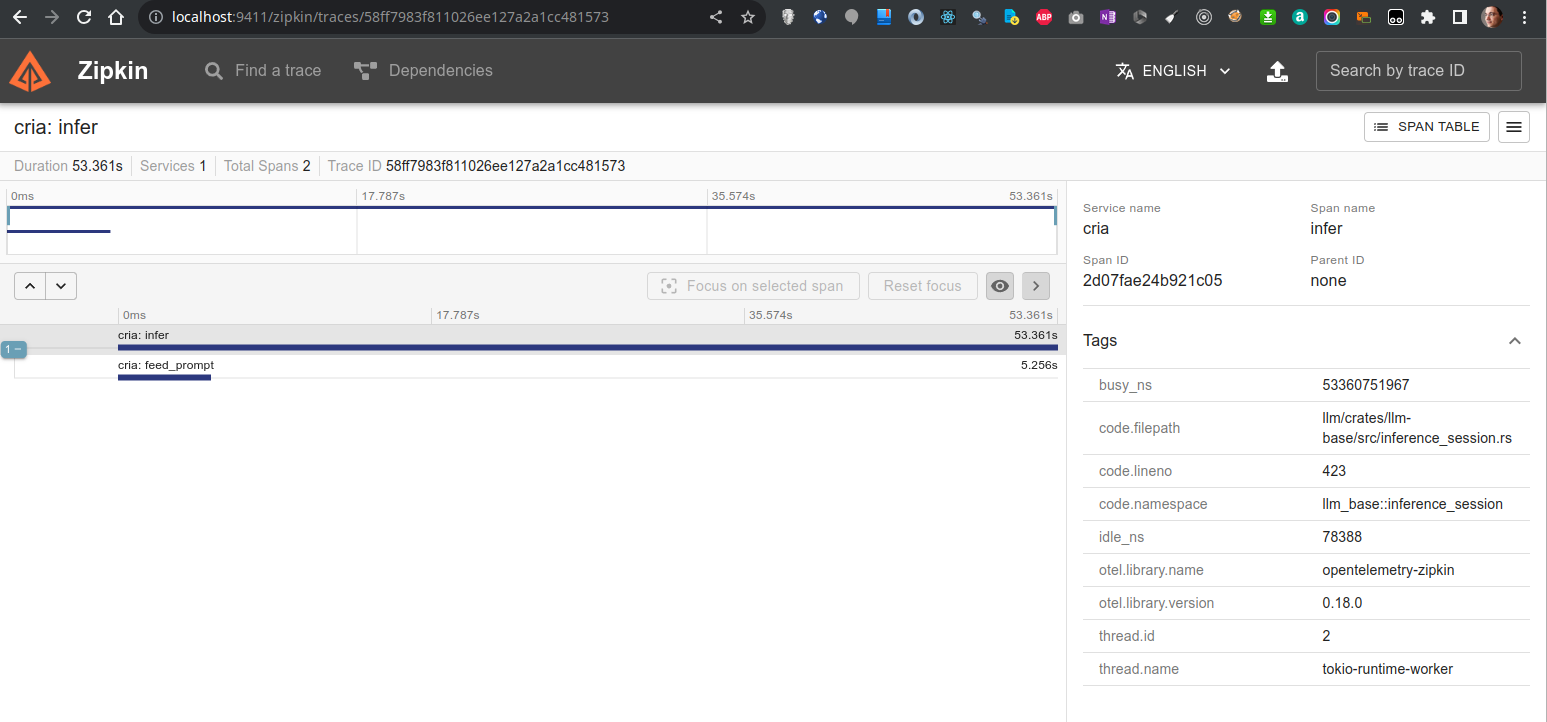
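To inspect the metrics without a Prometheus server, you can fetch the endpoint and parse the text exposition format yourself. This is a minimal sketch under a few assumptions: the server listens on localhost:3000 as in the defaults above, the helper names are hypothetical, and the parser ignores optional trailing timestamps. It does not assume any particular metric names.

```python
import urllib.request


def parse_metrics(text: str) -> dict[str, float]:
    """Parse Prometheus text exposition lines into {series: value}.

    Simplification: assumes each sample line is '<series> <value>'
    with no trailing timestamp.
    """
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip HELP/TYPE comments
            continue
        series, _, value = line.rpartition(" ")
        try:
            samples[series] = float(value)
        except ValueError:
            continue  # ignore lines this simple parser cannot handle
    return samples


def fetch_metrics(url: str = "http://localhost:3000/metrics") -> dict[str, float]:
    """Download and parse the metrics page (needs a running server)."""
    with urllib.request.urlopen(url, timeout=5) as resp:
        return parse_metrics(resp.read().decode("utf-8"))
```

Calling fetch_metrics() against a running server returns a dictionary that you can print or filter by series name.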

We are tracing performance metrics using tracing and tracing-opentelemetry crates.

You can use the --zipkin-endpoint flag to export traces to a zipkin endpoint.

There is a docker-compose file in the project root directory to run a local zipkin server on port 9411.
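Once traces are being exported, the Zipkin v2 HTTP API can be queried to see where time is spent. The sketch below is illustrative only: it assumes the local zipkin server on port 9411 mentioned above and a service name of cria (the CRIA_SERVICE_NAME value from the .env example), and the helper names are hypothetical.

```python
import json
import urllib.parse
import urllib.request


def traces_url(base: str = "http://localhost:9411",
               service: str = "cria", limit: int = 5) -> str:
    """Build the Zipkin v2 query URL for recent traces of one service."""
    query = urllib.parse.urlencode({"serviceName": service, "limit": limit})
    return f"{base}/api/v2/traces?{query}"


def slowest_spans(traces: list, top: int = 3) -> list:
    """Flatten a list of traces and return the (name, duration) of the slowest spans."""
    spans = [span for trace in traces for span in trace]
    spans.sort(key=lambda s: s.get("duration", 0), reverse=True)
    return [(s.get("name"), s.get("duration", 0)) for s in spans[:top]]


def fetch_slowest(top: int = 3) -> list:
    """Query the local zipkin server (needs it to be running)."""
    with urllib.request.urlopen(traces_url(), timeout=5) as resp:
        return slowest_spans(json.load(resp), top)
```

Zipkin reports span durations in microseconds, so divide by 1000 to get milliseconds.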

You can use the openai Python client, or use the sseclient Python library directly and stream messages.

Here is an example using a Python client:

import json
import sys
import time

import sseclient
import urllib3

url = "http://localhost:3000/v1/completions"

http = urllib3.PoolManager()
# preload_content=False leaves the body unread so it can be streamed
response = http.request(
    "POST",
    url,
    preload_content=False,
    headers={
        "Content-Type": "application/json",
    },
    body=json.dumps(
        {
            "prompt": "Morocco is a beautiful country situated in north africa.",
            "temperature": 0.1,
        }
    ),
)

client = sseclient.SSEClient(response)

s = time.perf_counter()
# Print each generated chunk as soon as its server-sent event arrives
for event in client.events():
    chunk = json.loads(event.data)
    sys.stdout.write(chunk["choices"][0]["text"])
    sys.stdout.flush()
e = time.perf_counter()

print(f"\nGeneration from completion took {e - s:.2f} !")

You can clearly see generation using my M1 GPU:

- Run Llama.cpp on CPU using llm-chain

- Run Llama.cpp on GPU using llm-chain

- Implement /models route

- Implement basic /completions route

- Implement streaming completions SSE

- Cleanup cargo features with llm

- Support MacOS Metal

- Merge completions / completion_streaming routes in same endpoint

- Implement /embeddings route

- Implement route /chat/completions

- Setup good tracing

- Docker deployment on CPUs / GPU

- Metrics : Prometheus

- Implement a global request queue

  - For each response put an entry in a queue

  - Spawn a model in separate task reading from ringbuffer, get entry and put each token in response

  - Construct stream from flume resp_rx chan and stream responses to user.

- BETTER ERRORS and http responses (deal with all the unwrapping)

- Implement streaming chat completions SSE

- Implement request batching

- Implement request continuous batching

- Setup CI/CD

- Maybe support huggingface candle lib for a full rust integration
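The global request queue item in the list above describes a design in which a single model task consumes requests and pushes tokens back through a per-request channel. The sketch below is one possible reading of that design, written in Python with threads and queue.Queue standing in for the Rust tasks and flume channels. It is not cria's implementation, and fake_model is a placeholder that simply echoes the prompt word by word.

```python
import queue
import threading

request_queue = queue.Queue()  # global queue shared by all requests
_DONE = object()               # sentinel marking the end of one response


def fake_model(prompt):
    """Stand-in for the model: yields the prompt back word by word."""
    yield from prompt.split()


def model_worker():
    """Single consumer: take one request at a time and emit its tokens."""
    while True:
        item = request_queue.get()
        if item is None:  # shutdown signal
            break
        prompt, resp_q = item
        for token in fake_model(prompt):
            resp_q.put(token)
        resp_q.put(_DONE)


def submit(prompt):
    """Enqueue a request and return its private response queue."""
    resp_q = queue.Queue()
    request_queue.put((prompt, resp_q))
    return resp_q


def stream(resp_q):
    """Yield tokens from a response queue until the sentinel arrives."""
    while (token := resp_q.get()) is not _DONE:
        yield token


def demo():
    worker = threading.Thread(target=model_worker, daemon=True)
    worker.start()
    first = submit("hello from request one")
    second = submit("second request")
    results = (list(stream(first)), list(stream(second)))
    request_queue.put(None)  # tell the worker to exit
    worker.join()
    return results


print(demo())
```

Because only the worker touches the model, requests are served strictly in arrival order. The batching items later in the list would change that by letting the worker handle several queued requests at once.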

Details on OpenAI API docs: https://platform.openai.com/docs/api-reference/