Diffusion Model-Based Video Editing: A Survey

Wenhao Sun, Rong-Cheng Tu, Jingyi Liao, Dacheng Tao

Nanyang Technological University


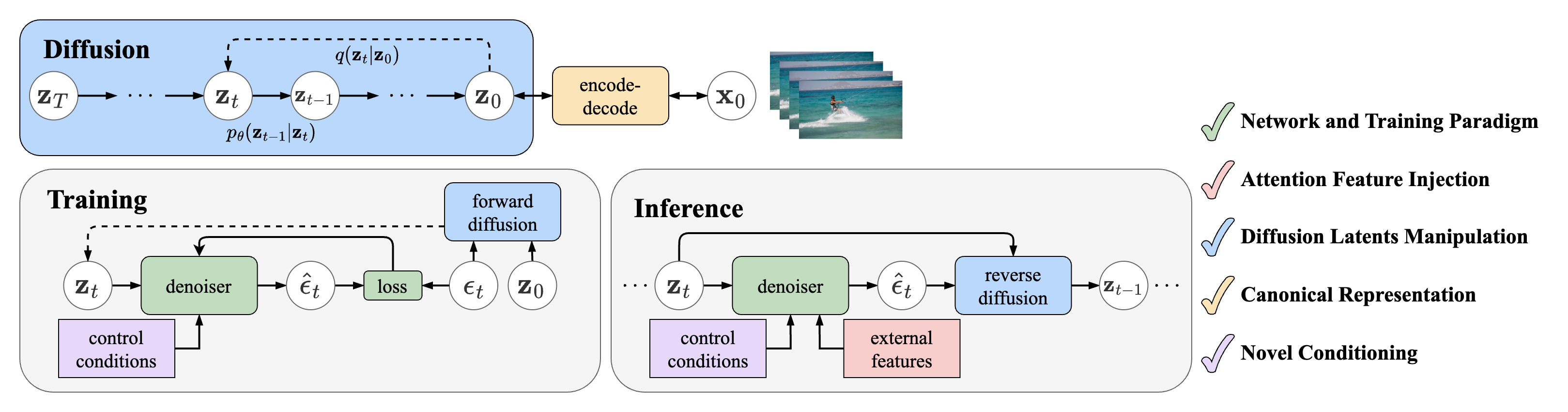

Overview of diffusion-based video editing model components.

The diffusion process defines a Markov chain that progressively adds random noise to data and learns to reverse this process to generate desired data samples from noise. Deep neural networks facilitate the transitions between latent states.
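As a rough illustration of the noising chain described above, the following minimal sketch samples a noisy state in closed form and then inverts it. It is not taken from any surveyed method. The linear schedule, the 1000-step horizon, and every function name are assumptions made for this example, and the true noise stands in for the prediction a trained denoising network would supply.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, linearly spaced (an assumed, commonly used schedule)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """abar[t] = product of (1 - beta) up to step t: the signal fraction left at step t."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def forward_diffuse(x0, t, abar, rng):
    """Sample x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * noise in one shot."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    signal_scale = math.sqrt(abar[t])
    noise_scale = math.sqrt(1.0 - abar[t])
    xt = [signal_scale * x + noise_scale * e for x, e in zip(x0, noise)]
    return xt, noise


def estimate_x0(xt, t, eps_hat, abar):
    """Recover x0 from x_t given a noise estimate eps_hat (here an oracle, not a network)."""
    signal_scale = math.sqrt(abar[t])
    noise_scale = math.sqrt(1.0 - abar[t])
    return [(x - noise_scale * e) / signal_scale for x, e in zip(xt, eps_hat)]


betas = linear_beta_schedule(1000)
abar = cumulative_alphas(betas)

rng = random.Random(0)
x0 = [rng.uniform(-1.0, 1.0) for _ in range(16)]  # toy stand-in for flattened pixels

xt, noise = forward_diffuse(x0, 500, abar, rng)
x0_hat = estimate_x0(xt, 500, noise, abar)  # exact only because eps_hat is the true noise
```

With a learned network, `eps_hat` would only approximate the true noise, so the reverse process is run as many small denoising steps rather than a single inversion.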

Network and Training Paradigm

| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Tune-A-Video: One-Shot Tuning of Image Diffusion Models for Text-to-Video Generation | arXiv | Website, GitHub | ICCV | Dec 2022 |
| Towards Consistent Video Editing with Text-to-Image Diffusion Models | arXiv | | NeurIPS | May 2023 |
| SimDA: Simple Diffusion Adapter for Efficient Video Generation | arXiv | Website, GitHub | Preprint | Aug 2023 |
| VidToMe: Video Token Merging for Zero-Shot Video Editing | arXiv | Website, GitHub | Preprint | Dec 2023 |
| Fairy: Fast Parallelized Instruction-Guided Video-to-Video Synthesis | arXiv | Website | Preprint | Dec 2023 |
| MaskINT: Video Editing via Interpolative Non-autoregressive Masked Transformers | arXiv | Website | CVPR | Dec 2023 |
| Video Editing via Factorized Diffusion Distillation | arXiv | Website | ECCV | Mar 2024 |


| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Structure and Content-Guided Video Synthesis with Diffusion Models | arXiv | Website | Preprint | Feb 2023 |
| VideoComposer: Compositional Video Synthesis with Motion Controllability | arXiv | Website, GitHub | NeurIPS | Jun 2023 |
| VideoControlNet: A Motion-Guided Video-to-Video Translation Framework by Using Diffusion Model with ControlNet | arXiv | GitHub | Preprint | Jul 2023 |
| MagicEdit: High-Fidelity and Temporally Coherent Video Editing | arXiv | Website, GitHub | Preprint | Aug 2023 |
| CCEdit: Creative and Controllable Video Editing via Diffusion Models | arXiv | Website, GitHub | Preprint | Sep 2023 |
| Ground-A-Video: Zero-shot Grounded Video Editing using Text-to-image Diffusion Models | arXiv | Website, GitHub | ICLR | Oct 2023 |
| LAMP: Learn A Motion Pattern for Few-Shot-Based Video Generation | arXiv | Website, GitHub | Preprint | Oct 2023 |
| Motion-Conditioned Image Animation for Video Editing | arXiv | Website, GitHub | Preprint | Nov 2023 |
| FlowVid: Taming Imperfect Optical Flows for Consistent Video-to-Video Synthesis | arXiv | Website, GitHub | CVPR | Dec 2023 |
| EVA: Zero-shot Accurate Attributes and Multi-Object Video Editing | arXiv | Website, GitHub | Preprint | Mar 2024 |


| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Dreamix: Video Diffusion Models are General Video Editors | arXiv | Website | Preprint | Feb 2023 |
| InstructVid2Vid: Controllable Video Editing with Natural Language Instructions | arXiv | | Preprint | May 2023 |
| MotionDirector: Motion Customization of Text-to-Video Diffusion Models | arXiv | Website, GitHub | Preprint | Oct 2023 |
| VIDiff: Translating Videos via Multi-Modal Instructions with Diffusion Models | arXiv | Website, GitHub | Preprint | Nov 2023 |
| Consistent Video-to-Video Transfer Using Synthetic Dataset | arXiv | GitHub | ICLR | Nov 2023 |
| VMC: Video Motion Customization using Temporal Attention Adaption for Text-to-Video Diffusion Models | arXiv | Website, GitHub | CVPR | Dec 2023 |
| SAVE: Protagonist Diversification with Structure Agnostic Video Editing | arXiv | Website, GitHub | Preprint | Dec 2023 |
| VASE: Object-Centric Appearance and Shape Manipulation of Real Videos | arXiv | Website, GitHub | Preprint | Jan 2024 |
| Still-Moving: Customized Video Generation without Customized Video Data | arXiv | Website, Community Implementation | Preprint | Jul 2024 |


Attention Feature Injection

Inversion-Based Feature Injection

| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Video-P2P: Video Editing with Cross-attention Control | arXiv | Website, GitHub | CVPR | Mar 2023 |
| Edit-A-Video: Single Video Editing with Object-Aware Consistency | arXiv | Website | Preprint | Mar 2023 |
| FateZero: Fusing Attentions for Zero-shot Text-based Video Editing | arXiv | Website, GitHub | ICCV | Mar 2023 |
| Zero-Shot Video Editing Using Off-The-Shelf Image Diffusion Models | arXiv | GitHub | Preprint | Mar 2023 |
| Make-A-Protagonist: Generic Video Editing with An Ensemble of Experts | arXiv | Website, GitHub | Preprint | May 2023 |
| UniEdit: A Unified Tuning-Free Framework for Video Motion and Appearance Editing | arXiv | Website, GitHub | Preprint | Feb 2024 |
| AnyV2V: A Tuning-Free Framework For Any Video-to-Video Editing Tasks | arXiv | Website, GitHub | Preprint | Mar 2024 |


Motion-Based Feature Injection

| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| TokenFlow: Consistent Diffusion Features for Consistent Video Editing | arXiv | Website, GitHub | ICLR | Jul 2023 |
| FLATTEN: optical FLow-guided ATTENtion for consistent text-to-video editing | arXiv | Website, GitHub | ICLR | Oct 2023 |
| FRESCO: Spatial-Temporal Correspondence for Zero-Shot Video Translation | arXiv | Website, GitHub | CVPR | Mar 2024 |


Diffusion Latents Manipulation

| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Text2Video-Zero: Text-to-Image Diffusion Models are Zero-Shot Video Generators | arXiv | Website, GitHub | ICCV | Mar 2023 |
| Control-A-Video: Controllable Text-to-Video Generation with Diffusion Models | arXiv | Website, GitHub | Preprint | May 2023 |
| Video ControlNet: Towards Temporally Consistent Synthetic-to-Real Video Translation Using Conditional Image Diffusion Models | arXiv | | Preprint | May 2023 |
| A Video is Worth 256 Bases: Spatial-Temporal Expectation-Maximization Inversion for Zero-Shot Video Editing | arXiv | Website, GitHub | CVPR | Dec 2023 |


| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Pix2Video: Video Editing using Image Diffusion | arXiv | Website, GitHub | ICCV | Mar 2023 |
| ControlVideo: Training-free Controllable Text-to-Video Generation | arXiv | Website, GitHub | ICLR | May 2023 |
| Rerender A Video: Zero-Shot Text-Guided Video-to-Video Translation | arXiv | Website, GitHub | SIGGRAPH | Jun 2023 |
| DiffSynth: Latent In-Iteration Deflickering for Realistic Video Synthesis | arXiv | Website, GitHub | Preprint | Aug 2023 |
| Space-Time Diffusion Features for Zero-Shot Text-Driven Motion Transfer | arXiv | Website, GitHub | CVPR | Nov 2023 |
| RAVE: Randomized Noise Shuffling for Fast and Consistent Video Editing with Diffusion Models | arXiv | Website, GitHub | CVPR | Dec 2023 |
| MotionClone: Training-Free Motion Cloning for Controllable Video Generation | arXiv | Website, GitHub | Preprint | Jun 2024 |
| GenVideo: One-shot target-image and shape aware video editing using T2I diffusion models | arXiv | | CVPR | Apr 2024 |


| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Shape-aware Text-driven Layered Video Editing | Open Access | Website, GitHub | CVPR | Jan 2023 |
| VidEdit: Zero-Shot and Spatially Aware Text-Driven Video Editing | arXiv | Website | TMLR | Jun 2023 |
| CoDeF: Content Deformation Fields for Temporally Consistent Video Processing | arXiv | Website, GitHub | CVPR | Aug 2023 |
| StableVideo: Text-driven Consistency-aware Diffusion Video Editing | arXiv | GitHub | ICCV | Aug 2023 |
| DiffusionAtlas: High-Fidelity Consistent Diffusion Video Editing | arXiv | Website | Preprint | Dec 2023 |
| Neural Video Fields Editing | arXiv | Website, GitHub | Preprint | Dec 2023 |


| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| VideoSwap: Customized Video Subject Swapping with Interactive Semantic Point Correspondence | arXiv | Website, GitHub | CVPR | Dec 2023 |
| DragVideo: Interactive Drag-style Video Editing | arXiv | GitHub | Preprint | Dec 2023 |
| Drag-A-Video: Non-rigid Video Editing with Point-based Interaction | arXiv | | Preprint | Dec 2023 |
| MotionCtrl: A Unified and Flexible Motion Controller for Video Generation | arXiv | GitHub, Website | Preprint | Dec 2023 |


Pose-Guided Human Action Editing

| Method | Paper | Project | Publication | Year |
|---|---|---|---|---|
| Follow Your Pose: Pose-Guided Text-to-Video Generation using Pose-Free Videos | arXiv | Website, GitHub | AAAI | Apr 2023 |
| DreamPose: Fashion Image-to-Video Synthesis via Stable Diffusion | arXiv | Website, GitHub | ICCV | Apr 2023 |
| DisCo: Disentangled Control for Realistic Human Dance Generation | arXiv | Website, GitHub | CVPR | Jun 2023 |
| MagicPose: Realistic Human Poses and Facial Expressions Retargeting with Identity-aware Diffusion | arXiv | Website, GitHub | ICML | Nov 2023 |
| MagicAnimate: Temporally Consistent Human Image Animation using Diffusion Model | arXiv | Website, GitHub | Preprint | Nov 2023 |
| Animate Anyone: Consistent and Controllable Image-to-Video Synthesis for Character Animation | arXiv | Website, Official GitHub, Community Implementation | Preprint | Nov 2023 |
| Zero-shot High-fidelity and Pose-controllable Character Animation | arXiv | | Preprint | Apr 2024 |


Leaderboard

V2VBench is a comprehensive benchmark designed to evaluate video editing methods. It consists of:

- 50 standardized videos across 5 categories;
- 3 editing prompts per video, covering 4 editing tasks (see Huggingface Datasets);
- 8 evaluation metrics for assessing the quality of edited videos (see Evaluation Metrics).

For detailed information, please refer to the accompanying paper.

If you find this repository helpful, please consider citing our paper:

@article{sun2024v2vsurvey,
  author  = {Wenhao Sun and Rong-Cheng Tu and Jingyi Liao and Dacheng Tao},
  title   = {Diffusion Model-Based Video Editing: A Survey},
  journal = {CoRR},
  volume  = {abs/2407.07111},
  year    = {2024}
}