arXiv, 2023

Li Liu · Lufei Gao · Wentao Lei · Fengji Ma · Xiaotian Lin · Jinting Wang

This repository records and tracks Multi-modal Body Language research, as a supplement to our survey.

If you find any work missing or have any suggestions (papers, implementations, or other resources), please open an issue or pull request, or contact us by e-mail.

We will check the problems and add the missing papers to this repo ASAP.

[2023.8.17] The first draft is on arXiv.

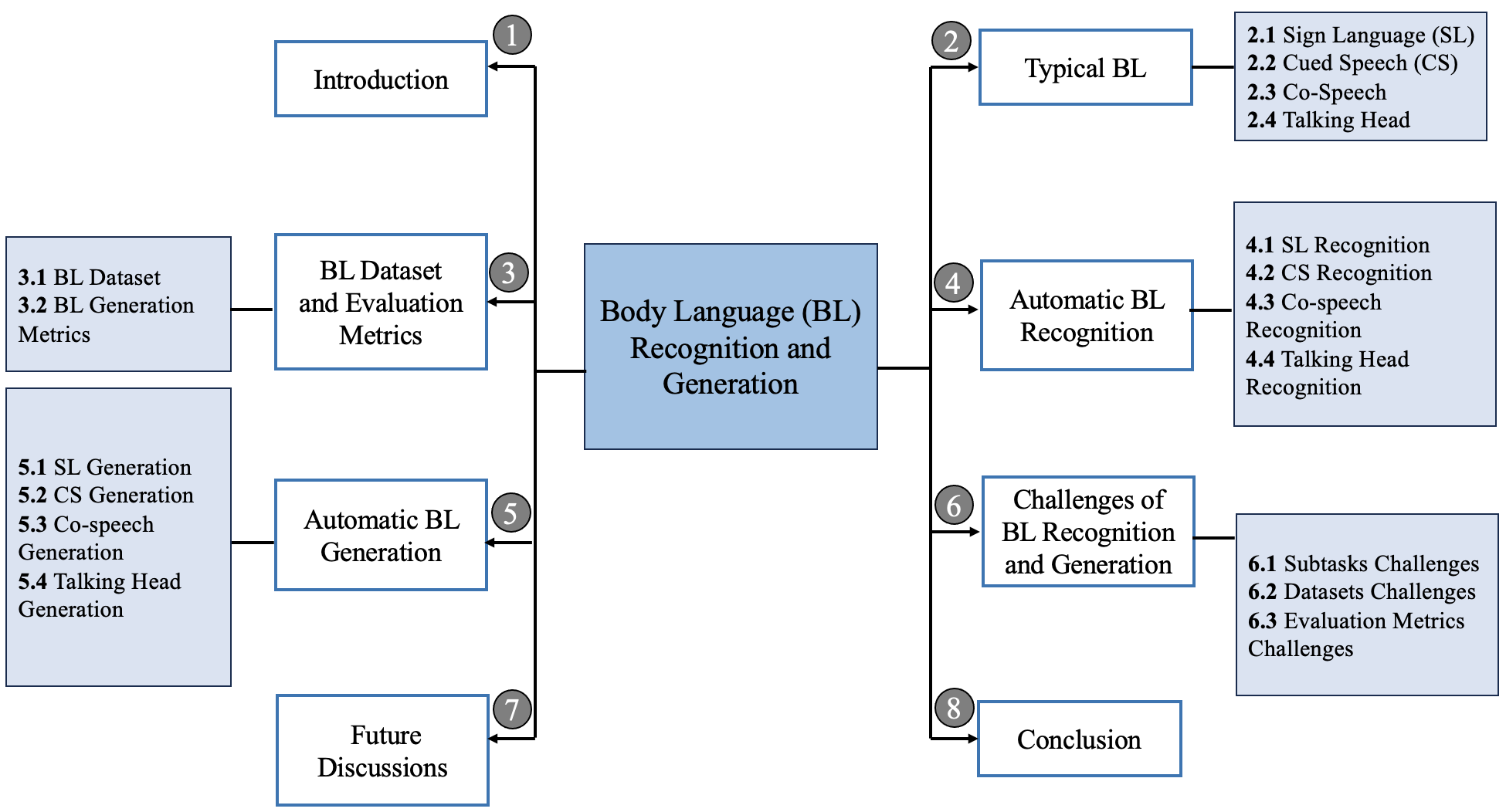

[1] We revisit and group the existing Body Language research from the Multi-modal perspective.

[2] We survey the research in 4 parts: Cued Speech, Co-speech, Sign Language, Talking Head.

[3] We survey the research in 2 directions: Recognition and Generation.

[4] Some new insights for these directions are discussed.

We present the first detailed survey on Multi-modal Body Language research.

| Year | Venue | Acronym | Paper Title | Code/Project |
|---|---|---|---|---|
| 2014 | MA3HMI | Böck et al. | Disposition Recognition from Spontaneous Speech Towards a Combination with Co-speech Gestures | N/A |
| 2021 | ACM MM | Bhattacharya et al. | Speech2AffectiveGestures: Synthesizing Co-Speech Gestures with Generative Adversarial Affective Expression Learning | N/A |

| Year | Venue | Acronym | Paper Title | Code/Project |
|---|---|---|---|---|
| 1998 | ISCA | Paul et al. | Automatic Generation of Cued Speech for The Deaf: Status and Outlook | N/A |
| 2008 | AVSP | Gérard et al. | Retargeting cued speech hand gestures for different talking heads and speakers | N/A |

| Year | Venue | Acronym | Paper Title | Code/Project |
|---|---|---|---|---|
| 2015 | IVA | DCNF | Predicting co-verbal gestures: A deep and temporal modeling approach | N/A |
| 2019 | CVPR | S2G | Learning individual styles of conversational gesture | Code |
| 2020 | EUROGRAPHICS | StyleGestures | Style-Controllable Speech-Driven Gesture Synthesis Using Normalising Flows | Code |
| 2021 | ICCV | A2G | Audio2Gestures: Generating Diverse Gestures from Speech Audio with Conditional Variational Autoencoders | Code |
| 2021 | IEEE VR | Text2Gestures | Text2Gestures: A Transformer-Based Network for Generating Emotive Body Gestures for Virtual Agents | Code |
| 2022 | Computer Graphics Forum | ZeroEGGS | ZeroEGGS: Zero-shot Example-based Gesture Generation from Speech | Code |
| 2022 | CVPR | DiffGAN | Low-Resource Adaptation for Personalized Co-Speech Gesture Generation | N/A |
| 2022 | SIGGRAPH Asia | RG | Rhythmic Gesticulator: Rhythm-Aware Co-Speech Gesture Synthesis with Hierarchical Neural Embeddings | Code |

| Year | Venue | Acronym | Paper Title | Code/Project |
|---|---|---|---|---|
| 2016 | Universal Access in the Information Society | Sign3D | Interactive editing in French Sign Language dedicated to virtual signers: requirements and challenges | N/A |
| 2018 | AAAI | DETR | Hierarchical LSTM for Sign Language Translation | N/A |
| 2020 | IJCV | text2gesture | Text2Sign: Towards Sign Language Production Using Neural Machine Translation and Generative Adversarial Networks | N/A |
| 2020 | CVPR | ESN | Everybody Sign Now: Translating Spoken Language to Photo Realistic Sign Language Video | N/A |
| 2020 | BMVC | Saunders et al. | Adversarial Training for Multi-Channel Sign Language Production | N/A |
| 2022 | ACL | DSM | Modeling Intensification for Sign Language Generation: A Computational Approach | Code |
| 2022 | CVPR | SignGAN | Signing at Scale: Learning to Co-Articulate Signs for Large-Scale Photo-Realistic Sign Language Production | N/A |
| 2023 | CVPR | PoseVQ-Diffusion | Vector Quantized Diffusion Model with CodeUnet for Text-to-Sign Pose Sequences Generation | Code |

| Year | Task | Language | Name | Link |
|---|---|---|---|---|
| 2021 | Sign Language Recognition | English | ChaLearn Looking at People | link |
| 2022 | Sign Language Recognition, Translation & Production | English | SLRTP | link |
| 2023 | Sign Language Recognition | English | Google - Isolated Sign Language Recognition | link |
| 2023 | Sign Language Recognition | Multiple | WMT-SLT 23 | link |
| 2018 | Lip Reading Recognition | Japanese | SSSD | link |
| 2022 | Talking Head Generation | English | ViCo2022 | link |
| 2023 | Talking Head Generation | English | ViCo2023 | link |
| 2020 | Co-speech Generation | English | GENEA Challenge 2020 | link |
| 2022 | Co-speech Generation | English | GENEA Challenge 2022 | link |
| 2023 | Co-speech Generation | English | GENEA Challenge 2023 | link |

If you find our survey and repository useful for your research project, please consider citing our paper:

@article{liu2023blsurvey,
  title={A Survey on Deep Multi-modal Learning for Body Language Recognition and Generation},
  author={Liu, Li and Gao, Lufei and Lei, Wentao and Ma, Fengji and Lin, Xiaotian and Wang, Jinting},
  journal={arXiv:2308.08849},
  year={2023}
}

avrillliu@hkust-gz.edu.cn

wlei117@connect.hkust-gz.edu.cn