NNI (Neural Network Intelligence) is a toolkit that helps users run automated machine learning (AutoML) experiments. It dispatches and runs trial jobs generated by tuning algorithms to search for the best neural architecture and/or hyper-parameters in different environments, such as a local machine, remote servers, and the cloud.
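The loop described above (a tuning algorithm proposes configurations, trial jobs evaluate them, and the best result is kept) can be pictured with a small plain-Python sketch. This is a conceptual illustration only and does not use NNI's API: the search space, `generate_parameters`, `run_trial`, and `run_experiment` are all hypothetical names, and the "trial" returns a made-up score instead of training a model.

```python
import random

# Hypothetical search space: each hyper-parameter maps to its candidate values.
SEARCH_SPACE = {
    "learning_rate": [0.0001, 0.001, 0.01, 0.1],
    "batch_size": [16, 32, 64],
}


def generate_parameters(rng):
    """Stand-in for a tuning algorithm: sample one configuration at random."""
    return {name: rng.choice(values) for name, values in SEARCH_SPACE.items()}


def run_trial(params):
    """Stand-in for a trial job: return a made-up score instead of training."""
    # Toy objective that is highest at learning_rate=0.01 and batch_size=32.
    return -abs(params["learning_rate"] - 0.01) - abs(params["batch_size"] - 32) / 1000


def run_experiment(max_trials=20, seed=0):
    """Generate configurations, run one trial for each, and keep the best."""
    rng = random.Random(seed)
    best_params, best_score = None, float("-inf")
    for _ in range(max_trials):
        params = generate_parameters(rng)
        score = run_trial(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


if __name__ == "__main__":
    print(run_experiment())
```

In a real experiment the tuner, the trial code, and the place where trials run are separate components; the sketch collapses them into one process only to show the control flow.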

NNI v0.7 has been released!

| Supported Frameworks | Tuning Algorithms | Training Services |
|---|---|---|
| | Tuner | |

- Those who want to try different AutoML algorithms in their training code (model) on their local machine.

- Those who want to run AutoML trial jobs in different environments to speed up the search (e.g. remote servers and cloud).

- Researchers and data scientists who want to implement their own AutoML algorithms and compare them with other algorithms.

- ML Platform owners who want to support AutoML in their platform.

With a focus on openness and advancing state-of-the-art technology, Microsoft Research (MSR) has also released a few other open source projects.

- OpenPAI : an open source platform that provides complete AI model training and resource management capabilities. It is easy to extend and supports on-premise, cloud and hybrid environments at various scales.

- FrameworkController : an open source general-purpose Kubernetes Pod Controller that orchestrates all kinds of applications on Kubernetes with a single controller.

- MMdnn : a comprehensive, cross-framework solution to convert, visualize and diagnose deep neural network models. The "MM" in MMdnn stands for model management and "dnn" is an acronym for deep neural network.

We encourage researchers and students to leverage these projects to accelerate AI development and research.

If you choose NNI Windows local mode and are using PowerShell to run scripts for the first time, you need to run PowerShell as administrator and execute this command first:

Set-ExecutionPolicy -ExecutionPolicy Unrestricted

Install through pip

- NNI currently supports Linux, MacOS and Windows (local mode). Ubuntu 16.04 or higher, MacOS 10.14.1 and Windows 10.1809 are tested and supported. Simply run the following pip install in an environment that has python >= 3.5.

Linux and MacOS

python3 -m pip install --upgrade nni

Windows

python -m pip install --upgrade nni

Note:

- --user can be added if you want to install NNI in your home directory, which does not require any special privileges.

- Currently, NNI on Windows only supports local mode. Anaconda or Miniconda is highly recommended for installing NNI on Windows.

- If there is any error like Segmentation fault, please refer to the FAQ.
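As a quick sanity check after the pip install, the following minimal sketch reports whether the current interpreter meets the python >= 3.5 requirement, whether the `nni` package is visible to it, and whether the `nnictl` command is on PATH. It uses only the Python standard library; the function name `check_nni_install` and the result keys are hypothetical, chosen for illustration.

```python
import importlib.util
import shutil
import sys


def check_nni_install():
    """Report whether this Python environment looks ready to run NNI."""
    return {
        # The install instructions ask for python >= 3.5.
        "python_ok": sys.version_info >= (3, 5),
        # True when the pip-installed `nni` package is visible to this interpreter.
        "nni_package_found": importlib.util.find_spec("nni") is not None,
        # True when the `nnictl` command can be found on PATH.
        "nnictl_on_path": shutil.which("nnictl") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_nni_install().items():
        print("{}: {}".format(name, "yes" if ok else "no"))
```

If the package is found but `nnictl` is not, one possible cause is an install with --user, where pip may place scripts in a per-user directory that is not on PATH.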

Install through source code

- NNI currently supports Linux (Ubuntu 16.04 or higher), MacOS (10.14.1) and Windows local mode (10.1809).

Linux and MacOS

- Run the following commands in an environment that has python >= 3.5, git and wget.

git clone -b v0.7 https://github.com/Microsoft/nni.git

cd nni

source install.sh

Windows

- Run the following commands in an environment that has python >= 3.5, git and PowerShell.

git clone -b v0.7 https://github.com/Microsoft/nni.git

cd nni

powershell ./install.ps1

For the system requirements of NNI, please refer to Install NNI.

For NNI Windows local mode, please refer to NNI Windows local mode.
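Before building from source, it can help to confirm that the command-line tools named above are available. The sketch below is one way to do that with the Python standard library; `missing_build_tools` is a hypothetical helper, and it assumes the tools are invoked as `git`, `wget` and `powershell`.

```python
import platform
import shutil


def missing_build_tools(system=None):
    """Return the required command-line tools that are not found on PATH."""
    system = system or platform.system()
    if system == "Windows":
        required = ["git", "powershell"]
    else:
        # Linux and MacOS use git and wget for the source install.
        required = ["git", "wget"]
    return [tool for tool in required if shutil.which(tool) is None]


if __name__ == "__main__":
    missing = missing_build_tools()
    if missing:
        print("Missing tools: " + ", ".join(missing))
    else:
        print("All required tools were found.")
```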

Verify install

The following example is an experiment built on TensorFlow. Make sure you have TensorFlow installed before running it.

- Download the examples by cloning the source code.

git clone -b v0.7 https://github.com/Microsoft/nni.git

Linux and MacOS

- Run the MNIST example.

nnictl create --config nni/examples/trials/mnist/config.yml

Windows

- Run the MNIST example.

nnictl create --config nni/examples/trials/mnist/config_windows.yml

- Wait for the message INFO: Successfully started experiment! in the command line. This message indicates that your experiment has been successfully started. You can explore the experiment using the Web UI url.

INFO: Starting restful server...

INFO: Successfully started Restful server!

INFO: Setting local config...

INFO: Successfully set local config!

INFO: Starting experiment...

INFO: Successfully started experiment!

-----------------------------------------------------------------------

The experiment id is egchD4qy

The Web UI urls are: http://223.255.255.1:8080 http://127.0.0.1:8080

-----------------------------------------------------------------------

You can use these commands to get more information about the experiment

-----------------------------------------------------------------------

commands description

1. nnictl experiment show show the information of experiments

2. nnictl trial ls list all of trial jobs

3. nnictl top monitor the status of running experiments

4. nnictl log stderr show stderr log content

5. nnictl log stdout show stdout log content

6. nnictl stop stop an experiment

7. nnictl trial kill kill a trial job by id

8. nnictl --help get help information about nnictl

-----------------------------------------------------------------------
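When the experiment is started from a script, the experiment id and Web UI urls can be pulled out of this startup message. The following is a minimal sketch that assumes the message format shown in the sample output above, which may differ in other NNI versions; `parse_experiment_info` and `SAMPLE_OUTPUT` are hypothetical names used for illustration.

```python
import re

# Excerpt of the sample startup message shown above.
SAMPLE_OUTPUT = """\
INFO: Successfully started experiment!
-----------------------------------------------------------------------
The experiment id is egchD4qy
The Web UI urls are: http://223.255.255.1:8080 http://127.0.0.1:8080
-----------------------------------------------------------------------
"""


def parse_experiment_info(output):
    """Extract the experiment id and Web UI urls from the startup message."""
    id_match = re.search(r"The experiment id is (\S+)", output)
    urls_match = re.search(r"The Web UI urls are:(.*)", output)
    return {
        "started": "Successfully started experiment!" in output,
        "experiment_id": id_match.group(1) if id_match else None,
        # The urls are separated by whitespace on a single line.
        "web_ui_urls": urls_match.group(1).split() if urls_match else [],
    }


if __name__ == "__main__":
    print(parse_experiment_info(SAMPLE_OUTPUT))
```

The returned id could then be used where a command takes a trial or experiment identifier, and any of the urls can be opened in a browser.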

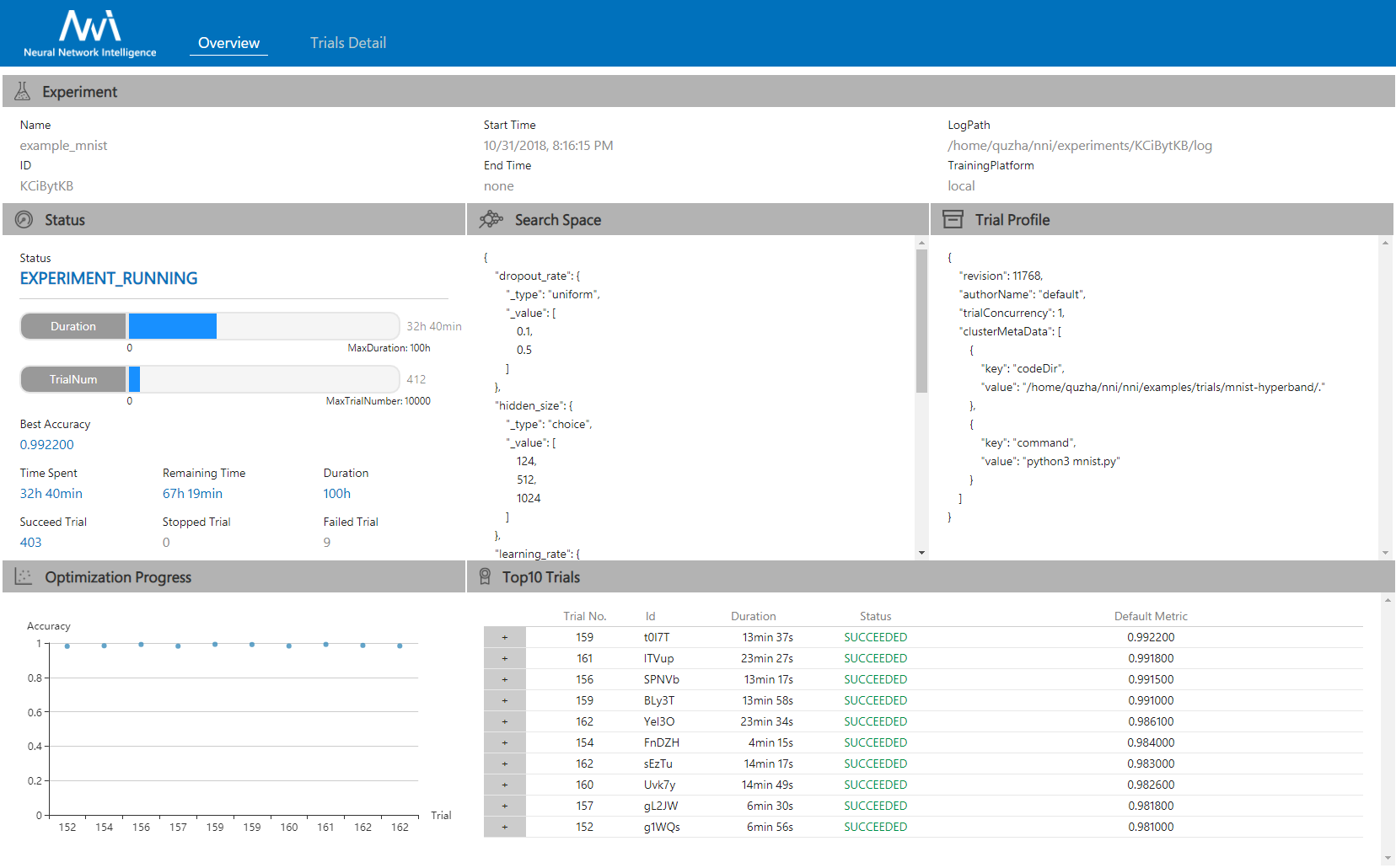

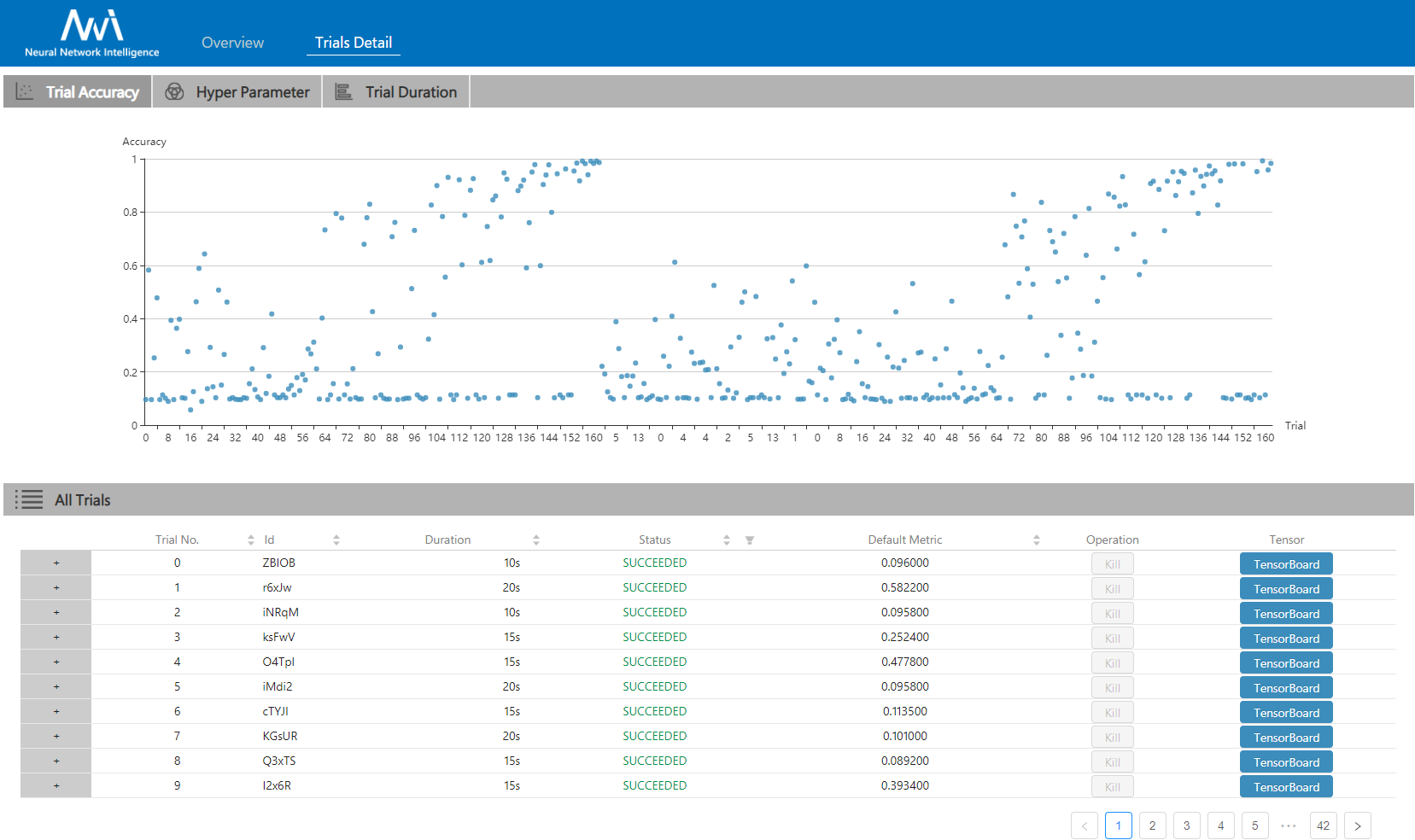

- Open the

Web UI urlin your browser, you can view detail information of the experiment and all the submitted trial jobs as shown below. Here are more Web UI pages.

|

|

|---|

- Install NNI

- Use command line tool nnictl

- Use NNIBoard

- How to define search space

- How to define a trial

- How to choose tuner/search-algorithm

- Config an experiment

- How to use annotation

- Run an experiment on local (with multiple GPUs)?

- Run an experiment on multiple machines?

- Run an experiment on OpenPAI?

- Run an experiment on Kubeflow?

- Try different tuners

- Try different assessors

- Implement a customized tuner

- Implement a customized assessor

- Use Genetic Algorithm to find good model architectures for Reading Comprehension task

This project welcomes contributions and suggestions. We use GitHub issues for tracking requests and bugs.

Issues with the good first issue label are simple, easy-to-start ones that we recommend for new contributors.

To set up the environment for NNI development, refer to the instructions: Set up NNI developer environment

Before you start coding, review and get familiar with the NNI Code Contribution Guideline: Contributing

We are still writing the instructions for How to Debug. You are welcome to contribute questions or suggestions in this area.

The entire codebase is under the MIT license.