Code and Data for the paper "LLMs for Knowledge Graph Construction and Reasoning: Recent Capabilities and Future Opportunities"

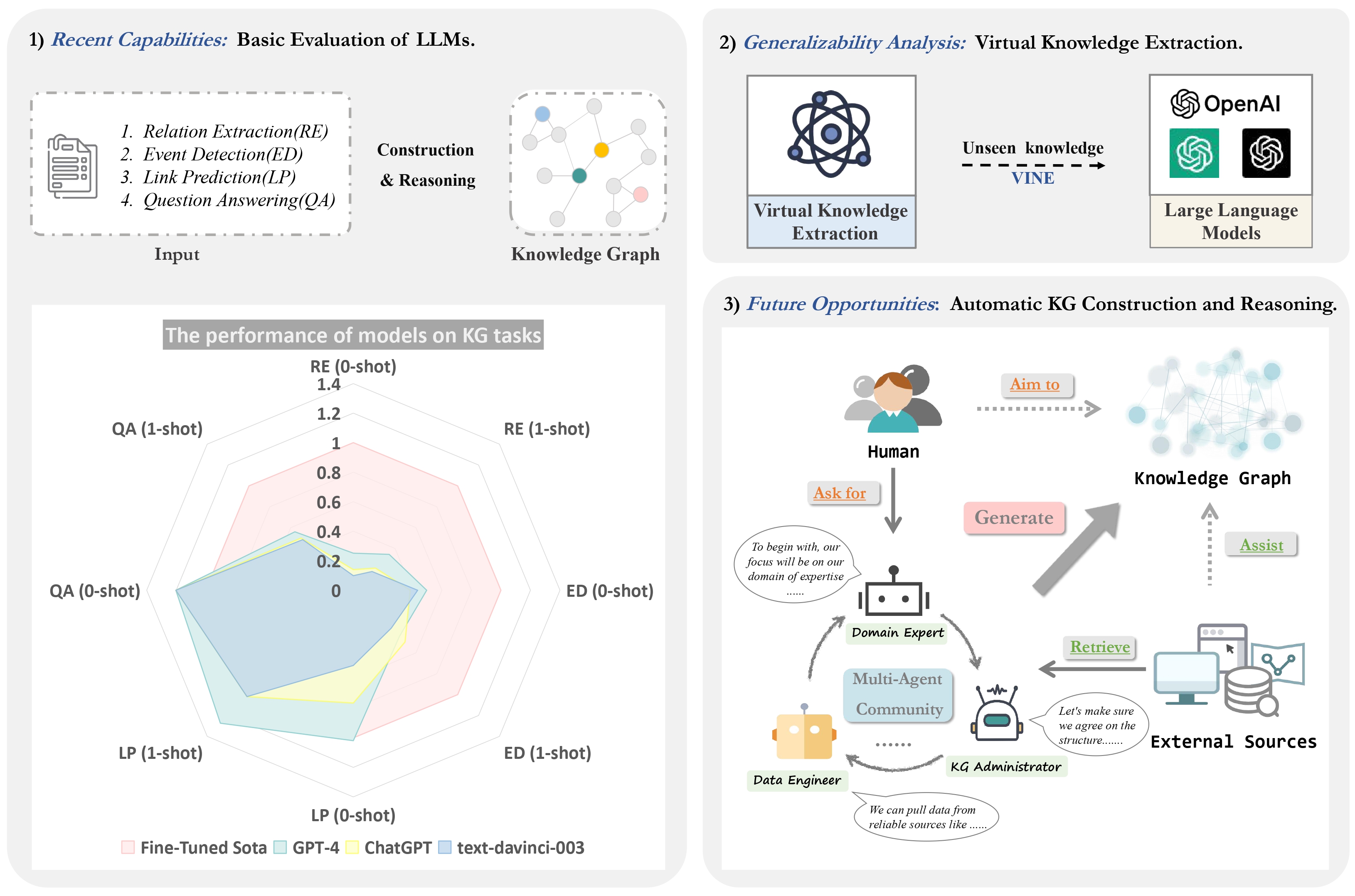

The overview of our work. There are three main components: 1) Basic Evaluation: detailing our assessment of large models (text-davinci-003, ChatGPT, and GPT-4), in both zero-shot and one-shot settings, using performance data from fully supervised state-of-the-art models as benchmarks; 2) Virtual Knowledge Extraction: an examination of large models' virtual knowledge capabilities on the constructed VINE dataset; and 3) Automatic KG: the proposal of utilizing multiple agents to facilitate the construction and reasoning of KGs.

The datasets that we used in our experiments are as follows:

- KG Construction

  You can download the datasets from the addresses above, or find the data used in our experiments directly in the corresponding "datas" folder (for example, under DuIE2.0).

- KG Reasoning

- Question Answering

  - FreebaseQA

  - MetaQA

The expected structure of files is:

    AutoKG
    |-- KG Construction
    |   |-- DuIE2.0
    |   |   |-- datas                  # dataset
    |   |   |-- prompts                # 0-shot/1-shot prompts
    |   |   |-- duie_processor.py      # preprocess data
    |   |   |-- duie_prompts.py        # generate prompts
    |   |-- MAVEN
    |   |   |-- datas                  # dataset
    |   |   |-- prompts                # 0-shot/1-shot prompts
    |   |   |-- maven_processor.py     # preprocess data
    |   |   |-- maven_prompts.py       # generate prompts
    |   |-- RE-TACRED
    |   |   |-- datas                  # dataset
    |   |   |-- prompts                # 0-shot/1-shot prompts
    |   |   |-- retacred_processor.py  # preprocess data
    |   |   |-- retacred_prompts.py    # generate prompts
    |   |-- SciERC
    |   |   |-- datas                  # dataset
    |   |   |-- prompts                # 0-shot/1-shot prompts
    |   |   |-- scierc_processor.py    # preprocess data
    |   |   |-- scierc_prompts.py      # generate prompts
    |-- KG Reasoning (Link Prediction)
    |   |-- FB15k-237
    |   |   |-- data                   # sample data
    |   |   |-- prompts                # 0-shot/1-shot prompts
    |   |-- ATOMIC2020
    |   |   |-- data                   # sample data
    |   |   |-- prompts                # 0-shot/1-shot prompts
    |   |   |-- system_eval            # eval for ATOMIC2020
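
Before running the scripts, it can help to confirm that a local checkout matches this layout. The following is a minimal sketch, not part of the repository: the function name `missing_paths` and the `EXPECTED` table are illustrative, and only the "KG Construction" entries from the tree above are listed.

```python
from pathlib import Path

# Folder -> entries expected inside it, copied from the tree above.
# Only the "KG Construction" part is covered; extend as needed.
EXPECTED = {
    "KG Construction/DuIE2.0": ["datas", "prompts", "duie_processor.py", "duie_prompts.py"],
    "KG Construction/MAVEN": ["datas", "prompts", "maven_processor.py", "maven_prompts.py"],
    "KG Construction/RE-TACRED": ["datas", "prompts", "retacred_processor.py", "retacred_prompts.py"],
    "KG Construction/SciERC": ["datas", "prompts", "scierc_processor.py", "scierc_prompts.py"],
}


def missing_paths(root, expected=EXPECTED):
    """Return the relative paths from `expected` that do not exist under `root`."""
    root = Path(root)
    missing = []
    for folder, entries in expected.items():
        for entry in entries:
            rel = Path(folder) / entry
            if not (root / rel).exists():
                missing.append(rel.as_posix())
    return missing


if __name__ == "__main__":
    # Run from the repository root; prints nothing when everything is present.
    for path in missing_paths("."):
        print("missing:", path)
```

An empty result means every listed file and folder was found under the given root.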

- KG Construction (using DuIE2.0 as an example)

      cd "KG Construction/DuIE2.0"
      python duie_processor.py
      python duie_prompts.py

  The 0-shot/1-shot prompts are then written to the folder “prompts”.
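
To illustrate the difference between the 0-shot and 1-shot settings, here is a rough sketch of how such prompts could be assembled. It is not the template used by `duie_prompts.py`: the function `build_prompt`, its parameters, and the instruction wording are all hypothetical, and the actual prompts are the ones stored in the “prompts” folder.

```python
def build_prompt(sentence, relation_types, demonstration=None):
    """Build a 0-shot prompt, or a 1-shot prompt when a demonstration is given.

    `demonstration` is an optional (sentence, triples) pair.
    The wording is hypothetical, not the repository's real template.
    """
    lines = [
        "Extract (subject, relation, object) triples from the sentence.",
        "Allowed relations: " + ", ".join(relation_types),
    ]
    if demonstration is not None:
        demo_sentence, demo_triples = demonstration
        # The single worked example is what turns 0-shot into 1-shot.
        lines.append("Example sentence: " + demo_sentence)
        lines.append("Example triples: " + str(demo_triples))
    lines.append("Sentence: " + sentence)
    lines.append("Triples:")
    return "\n".join(lines)
```

Calling `build_prompt(text, relations)` gives the 0-shot variant; passing a `(sentence, triples)` pair as `demonstration` adds one worked example ahead of the query.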

- KG Reasoning

- Question Answering

The VINE dataset we built can be retrieved from the folder “Virtual Knowledge Extraction/datas”.

Run the following commands to generate prompts:

    cd "Virtual Knowledge Extraction"
    python VINE_processor.py
    python VINE_prompts.py

Our AutoKG code is based on CAMEL: Communicative Agents for “Mind” Exploration of Large Scale Language Model Society and on a LangChain implementation of that paper; you can get more details through this link.

- Change the OPENAI_API_KEY in Autokg.py.

- Change the SERPAPI_API_KEY in RE_CAMEL.py. (You can find more information at serpapi.)
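
If you prefer not to hard-code keys in source files, a common alternative is to export them as environment variables and check for them before launching. The sketch below only performs that check. It assumes, without confirmation from this repository, that the scripts are adapted to read `OPENAI_API_KEY` and `SERPAPI_API_KEY` from the environment; the helper `missing_keys` is hypothetical.

```python
import os

# Key names taken from the steps above.
REQUIRED_KEYS = ("OPENAI_API_KEY", "SERPAPI_API_KEY")


def missing_keys(env=None, required=REQUIRED_KEYS):
    """Return the names in `required` that are unset or empty in `env`.

    `env` defaults to the current process environment.
    """
    if env is None:
        env = os.environ
    return [name for name in required if not env.get(name)]
```

For example, `missing_keys()` returns an empty list once both variables are exported in the current shell.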

Run the Autokg.py script.

    cd AutoKG
    python Autokg.py

If you use the code or data, please cite the following paper:

    @article{zhu2023llms,
      title={LLMs for Knowledge Graph Construction and Reasoning: Recent Capabilities and Future Opportunities},
      author={Zhu, Yuqi and Wang, Xiaohan and Chen, Jing and Qiao, Shuofei and Ou, Yixin and Yao, Yunzhi and Deng, Shumin and Chen, Huajun and Zhang, Ningyu},
      journal={arXiv preprint arXiv:2305.13168},
      year={2023}
    }