The code in this repository, forked from the official implementation, evaluates the robustness of Adversarial Logit Pairing, a proposed defense against adversarial examples.

On the ImageNet 64x64 dataset, with an L-infinity perturbation bound of 16/255 (the threat model considered in the original paper), we can reduce the classifier's accuracy to 0.1% and generate targeted adversarial examples (with randomly chosen target labels) with a 98.6% success rate using the provided code and models.
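To make the threat model concrete, here is a minimal sketch of a targeted, L-infinity-bounded projected gradient descent (PGD) attack. It is not the attack code from this repository: the toy linear softmax classifier (`W`, `b`), the function name `targeted_pgd`, the step size, and the iteration count are all assumptions chosen for illustration. Only the 16/255 bound comes from the text above.

```python
import numpy as np

EPS = 16 / 255  # L-infinity budget from the threat model described above


def softmax(z):
    # Numerically stable softmax over a 1-D logit vector.
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()


def targeted_pgd(x, W, b, target, eps=EPS, step=1 / 255, iters=100):
    """Targeted PGD on a hypothetical linear classifier with logits W @ x + b.

    step and iters are illustrative values, not the repository's settings.
    """
    x_adv = x.copy()
    onehot = np.zeros(W.shape[0])
    onehot[target] = 1.0
    for _ in range(iters):
        p = softmax(W @ x_adv + b)
        # Gradient of the cross-entropy loss for the target class
        # with respect to the input (closed form for a linear model).
        grad = W.T @ (p - onehot)
        # Step against the gradient sign to lower the target-class loss.
        x_adv = x_adv - step * np.sign(grad)
        # Project back into the eps-ball around x, then into [0, 1].
        x_adv = np.clip(x_adv, x - eps, x + eps)
        x_adv = np.clip(x_adv, 0.0, 1.0)
    return x_adv


# Toy stand-ins for an image and a classifier (random, for illustration only).
rng = np.random.default_rng(0)
x = rng.uniform(0.0, 1.0, size=64 * 64 * 3)
W = rng.normal(size=(10, x.size))
b = np.zeros(10)
target = 3

x_adv = targeted_pgd(x, W, b, target)
linf = np.abs(x_adv - x).max()          # size of the perturbation
pred = int(np.argmax(W @ x_adv + b))    # class predicted for the perturbed input
```

The two `np.clip` calls enforce the constraint: every pixel of `x_adv` stays within 16/255 of the original and inside the valid range `[0, 1]`. An attack counts as a targeted success when `pred` equals `target`.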

See our writeup here for our analysis, including visualizations of the loss landscape induced by Adversarial Logit Pairing.

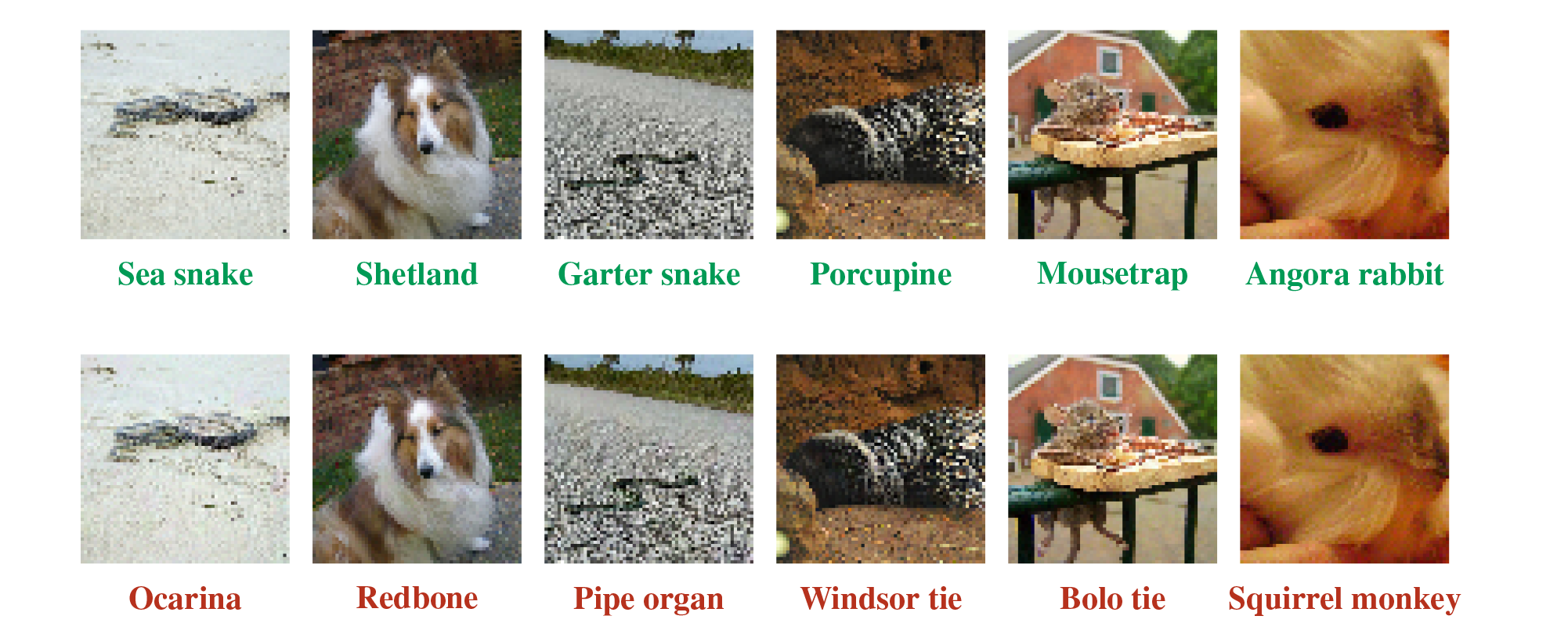

Obligatory pictures of adversarial examples (with randomly chosen target classes).

Download and untar the ALP-trained ResNet-v2-50 model into the root of the repository.

RobustML evaluation

Run with:

`python robustml_eval.py --imagenet-path <path>`