ROCm (-aware) MPI tests on AMD GPUs

Upon cloning the ROCm-MPI repo:

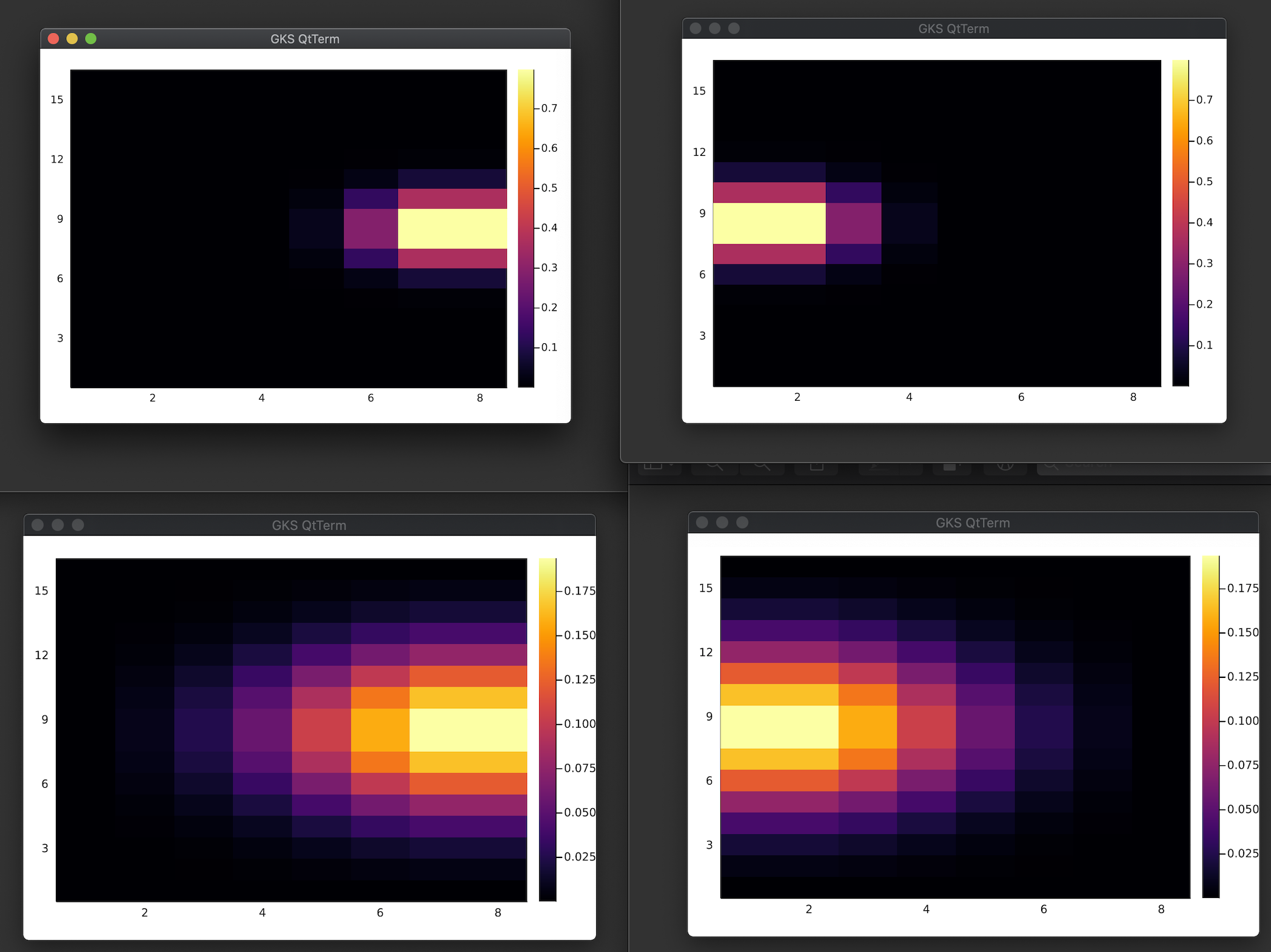

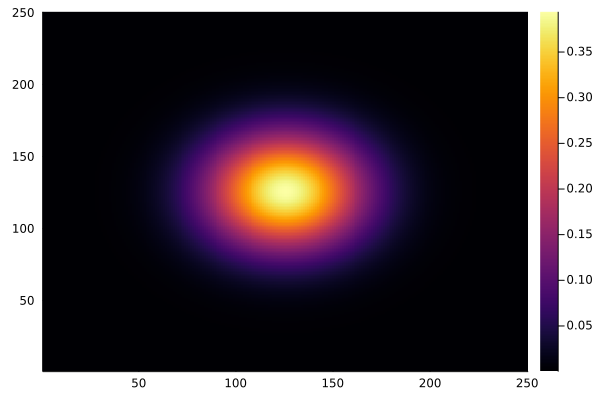

- `cd ROCm-MPI`
- `srun -n 1 --mpi=pmix ./startup.sh`
- `cd scripts`
- `srun -n 4 --mpi=pmix ./runme.sh`
- Check the image saved in `/output`
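The launch steps above can also be wrapped in a small shell function, so you can preview the commands before running them. This is a minimal sketch and is not part of the repo. It assumes you call it from the directory that contains the `ROCm-MPI` clone, inside a SLURM allocation where `srun` supports `--mpi=pmix`. The `run` and `run_rocm_mpi_tests` functions and the `DRY_RUN` switch are hypothetical names introduced here.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the README launch steps (not part of ROCm-MPI).
# Assumes: called from the directory containing the ROCm-MPI clone, inside a
# SLURM allocation where srun supports --mpi=pmix.

# run: execute a command, or only print it when DRY_RUN=1 (hypothetical switch).
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# run_rocm_mpi_tests: the four steps from the README, in order.
# The subshell keeps the `cd`s from leaking into the caller's shell, and
# `set -e` stops at the first failing step when the function is called as a
# plain command (bash ignores `set -e` inside `if`, `&&` or `||` contexts).
run_rocm_mpi_tests() {
  (
    set -e
    run cd ROCm-MPI
    run srun -n 1 --mpi=pmix ./startup.sh
    run cd scripts
    run srun -n 4 --mpi=pmix ./runme.sh
  )
}
```

After sourcing this, `DRY_RUN=1 run_rocm_mpi_tests` prints the four commands without running them, and `run_rocm_mpi_tests` executes them.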

Uncomment the execution lines in `runme.sh` to switch from array programming (ap) to kernel programming (kp) or performance-oriented (perf) examples.

Adapt the `scripts/setenv.sh` script to the MPI and ROCm environment modules available on the machine you plan to run on.
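One way to make this adaptation reusable is to choose the MPI flavour through a variable instead of editing the file for every run. The following is a minimal sketch, assuming an Environment Modules or Lmod setup and a `setenv.sh` that is sourced by the calling shell or job script. `select_mpi` and `USE_ROCM_AWARE_MPI` are hypothetical names introduced here. The module names `roc-ompi` and `openmpi` and the `IGG_ROCMAWARE_MPI` variable are taken from this README and may differ on your machine.

```shell
#!/usr/bin/env bash
# Sketch of a switchable MPI section for scripts/setenv.sh (to be sourced).
# select_mpi and USE_ROCM_AWARE_MPI are hypothetical names introduced here;
# the module names and IGG_ROCMAWARE_MPI are the ones shown in this README.

# select_mpi 1|0: load the ROCm-aware (1) or the standard (0) MPI module and
# set IGG_ROCMAWARE_MPI to match.
select_mpi() {
  local mod flag
  if [ "${1:-1}" = "1" ]; then
    mod=roc-ompi; flag=1   # ROCm-aware MPI
  else
    mod=openmpi; flag=0    # standard MPI
  fi
  # Environment Modules and Lmod usually define `module` as a shell function.
  if command -v module >/dev/null 2>&1; then
    module load "$mod" || return 1
  else
    echo "warning: 'module' command not found, skipping 'module load $mod'" >&2
  fi
  export IGG_ROCMAWARE_MPI="$flag"
}

# Default to ROCm-aware MPI unless USE_ROCM_AWARE_MPI=0 is set beforehand.
select_mpi "${USE_ROCM_AWARE_MPI:-1}"
```

With this in place, running `export USE_ROCM_AWARE_MPI=0` before sourcing the script would select standard MPI without touching the file.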

💡 You can switch to non-ROCm-aware MPI in lines 11-17 of `scripts/setenv.sh` by commenting out the ROCm-aware MPI lines and uncommenting the standard MPI lines:

```sh
# ROCm-aware MPI
module load roc-ompi
export IGG_ROCMAWARE_MPI=1

# Standard MPI
# module load openmpi
# export IGG_ROCMAWARE_MPI=0
```

The following package versions are currently needed to run the ROCm (-aware) MPI tests successfully (see also `startup.sh`):

- AMDGPU.jl v0.3.5 and above: https://github.com/JuliaGPU/AMDGPU.jl

- MPI.jl #master: https://github.com/JuliaParallel/MPI.jl#master

- ImplicitGlobalGrid.jl dev: https://github.com/luraess/ImplicitGlobalGrid.jl#mpi-dev
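To check the AMDGPU.jl requirement above on a given machine, a small shell helper can compare the installed version against 0.3.5. This is a sketch with several assumptions: GNU `sort -V` is available, `julia` is on the `PATH`, the Julia project is the current directory, and the Julia version provides `Pkg.dependencies()`. `version_ge` and `amdgpu_version` are hypothetical helper names introduced here.

```shell
#!/usr/bin/env bash
# Sketch: check that the AMDGPU.jl version in a Julia project is >= 0.3.5.
# version_ge and amdgpu_version are hypothetical helpers; assumes GNU sort -V.

# version_ge A B: succeeds if version A >= version B.
# sort -V orders version strings field by field numerically, so the smaller
# one comes first; A >= B exactly when B is the first line.
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# amdgpu_version: print the AMDGPU.jl version resolved in the Julia project
# in the current directory (prints nothing if AMDGPU is not installed).
amdgpu_version() {
  julia --project=. -e '
    using Pkg
    for (_, info) in Pkg.dependencies()
        info.name == "AMDGPU" && println(info.version)
    end'
}
```

For example, `version_ge "$(amdgpu_version)" 0.3.5 && echo ok` prints `ok` only if the project resolves AMDGPU.jl to v0.3.5 or newer. An empty version string (package missing) compares as too old.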