Segment Anything in 3D with NeRFs

Jiazhong Cen1, Zanwei Zhou1, Jiemin Fang2, Wei Shen1✉, Lingxi Xie3, Dongsheng Jiang3, Xiaopeng Zhang3, Qi Tian3

1AI Institute, SJTU 2School of EIC, HUST 3Huawei Inc.

Given a NeRF, just input prompts from a single view and get your 3D model.

We propose a novel framework to Segment Anything in 3D, named SA3D. Given a neural radiance field (NeRF) model, SA3D allows users to obtain the 3D segmentation of any target object with only one-shot manual prompting in a single rendered view. The entire process of obtaining the target 3D model takes approximately 2 minutes, even without any engineering optimization. Our experiments demonstrate the effectiveness of SA3D in different scenes, highlighting the potential of the Segment Anything Model (SAM) for 3D scene perception.

The code will be released.

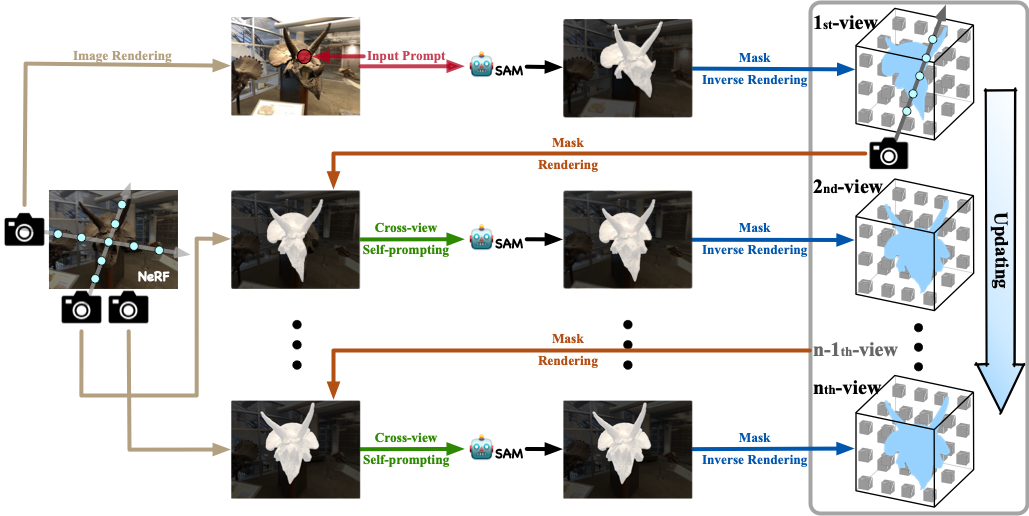

With input prompts, SAM cuts out the target object from the corresponding view. The resulting 2D segmentation mask is projected onto 3D mask grids via density-guided inverse rendering. 2D masks are then rendered from other views; these are mostly incomplete but serve as cross-view self-prompts that are fed into SAM again. The resulting complete masks are in turn projected onto the mask grids. This procedure is repeated iteratively until accurate 3D masks are learned. SA3D adapts to various radiance fields without any additional redesign.
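To make the rendering and projection steps above more concrete, here is a minimal NumPy sketch under simplifying assumptions. The mask grid is a flat array of voxel scores. Each ray is given as precomputed voxel indices, densities, and step sizes. Projection is a single gradient step on an assumed linear loss with an illustrative weight `lam`. The function names (`render_weights`, `render_mask`, `project_mask`, `self_prompt`) are hypothetical and are not SA3D's released code. SAM itself is not called here.

```python
import numpy as np


def render_weights(sigmas, deltas):
    """Volume-rendering weights w_i = T_i * (1 - exp(-sigma_i * delta_i)) along one ray."""
    alphas = 1.0 - np.exp(-sigmas * deltas)
    # T_i = prod_{j<i} (1 - alpha_j): transmittance reaching sample i
    trans = np.cumprod(np.concatenate([[1.0], 1.0 - alphas[:-1]]))
    return trans * alphas


def render_mask(grid, rays):
    """Render one mask value per ray as the density-weighted sum of the voxel scores it passes."""
    return np.array([np.dot(render_weights(s, d), grid[idx]) for idx, s, d in rays])


def project_mask(grid, rays, sam_mask, lam=0.15, lr=1.0):
    """One gradient step on an assumed (hypothetical) projection loss
    L = -sum_r m(r) * M(r) + lam * sum_r (1 - m(r)) * M(r),
    where m(r) is the 2D SAM mask at ray r and M(r) is the rendered mask."""
    grad = np.zeros_like(grid)
    for (idx, s, d), m in zip(rays, sam_mask):
        w = render_weights(s, d)
        # dL/dgrid[idx_i] = w_i * (-m + lam * (1 - m)); np.add.at sums repeated voxels
        np.add.at(grad, idx, w * (-m + lam * (1.0 - m)))
    return grid - lr * grad


def self_prompt(rendered_2d, thresh=0.5):
    """Pick the most confident pixel of a rendered (possibly incomplete) mask as a
    positive point prompt in (x, y) pixel order; return None if nothing is confident."""
    if rendered_2d.max() < thresh:
        return None
    y, x = np.unravel_index(np.argmax(rendered_2d), rendered_2d.shape)
    return (int(x), int(y))


# Toy usage: 4 voxels, one foreground ray and one background ray (made-up values).
grid = np.zeros(4)
rays = [
    (np.array([0, 1]), np.array([2.0, 0.5]), np.array([0.5, 0.5])),  # inside the SAM mask
    (np.array([2, 3]), np.array([2.0, 0.5]), np.array([0.5, 0.5])),  # outside the SAM mask
]
sam_mask = np.array([1.0, 0.0])
grid = project_mask(grid, rays, sam_mask)
print(grid)                     # the first, denser voxel on the foreground ray gains the most
print(render_mask(grid, rays))  # foreground ray now renders positive, background ray negative
```

The density weighting is what makes the projection "density-guided": voxels that contribute more to a pixel's rendered color also receive more of that pixel's mask signal. A full loop would alternate between rendering masks in a new view, deriving prompts with something like `self_prompt`, running SAM on that view, and calling `project_mask` with SAM's output. SA3D's actual loss, prompt selection, and schedule may differ from this sketch.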

SA3D can handle various scenes for 3D segmentation. Find more demos on our project page.

| Forward facing | 360° | Multi-objects |
|---|---|---|

Thanks to the following projects for their valuable contributions:

If you find this project helpful for your research, please consider citing the report and giving a ⭐.

@article{cen2023segment,
  title={Segment Anything in 3D with NeRFs},
  author={Jiazhong Cen and Zanwei Zhou and Jiemin Fang and Wei Shen and Lingxi Xie and Xiaopeng Zhang and Qi Tian},
  journal={arXiv:2304.12308},
  year={2023}
}