Language Prompt for Autonomous Driving

Dongming Wu*, Wencheng Han*, Tiancai Wang, Yingfei Liu, Xiangyu Zhang, Jianbing Shen

This is the official implementation of Language Prompt for Autonomous Driving.

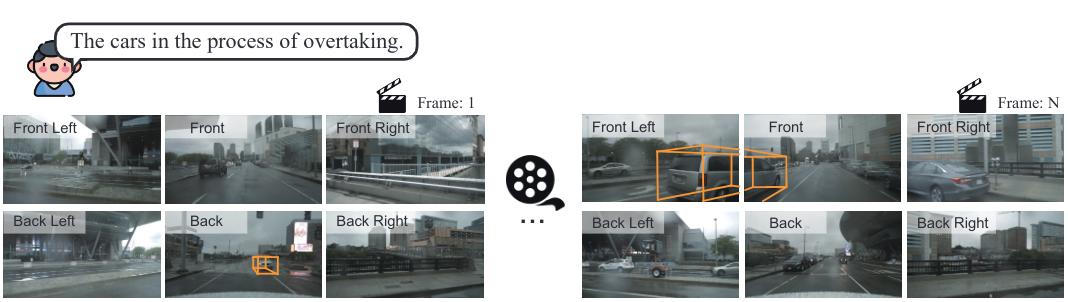

- We propose a new large-scale language prompt set for driving scenes, named NuPrompt. To the best of our knowledge, it is the first dataset specializing in multiple 3D objects of interest from the video domain.

- We construct a new prompt-based driving perception task, which requires using a language prompt as a semantic cue to predict object trajectories.

- We develop a simple end-to-end baseline model, called PromptTrack, which effectively fuses cross-modal features in a newly built prompt reasoning branch to predict referent objects, showing impressive performance.

- [2024.06.27] Data and code are released. Feel free to try them!

- [2023.09.11] Our paper is released on arXiv.

We extend the nuScenes dataset with language prompt annotations and name the result NuPrompt. It is a large-scale dataset of language prompts for driving scenes, containing 40,147 language prompts for 3D objects. Because it builds on nuScenes, the descriptions stay close to the nature and complexity of real driving and cover a 3D, multi-view, multi-frame space.

The data can be downloaded from NuPrompt.
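To make the idea of a prompt referring to several 3D objects across frames concrete, here is a minimal Python sketch. It is an illustration only: the `PromptAnnotation` record, its field names, the `referred_trajectories` helper, and the sample IDs and prompt text are all hypothetical and do not reflect the actual NuPrompt file format, which is described in data.md.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Center = Tuple[float, float, float]  # (x, y, z) center of a 3D box


@dataclass
class PromptAnnotation:
    """Hypothetical record: one language prompt and the objects it refers to."""

    prompt: str
    # Frame id -> ids of the objects the prompt refers to in that frame.
    referred_ids_by_frame: Dict[str, List[str]] = field(default_factory=dict)


def referred_trajectories(
    annotation: PromptAnnotation,
    boxes_by_frame: Dict[str, Dict[str, Center]],
) -> Dict[str, List[Center]]:
    """Collect the box centers of each referred object.

    Frames are visited in the insertion order of `referred_ids_by_frame`,
    so the caller is assumed to have stored them chronologically.
    """
    trajectories: Dict[str, List[Center]] = {}
    for frame_id, object_ids in annotation.referred_ids_by_frame.items():
        frame_boxes = boxes_by_frame.get(frame_id, {})
        for object_id in object_ids:
            # Skip referred objects that have no box in this frame.
            if object_id in frame_boxes:
                trajectories.setdefault(object_id, []).append(frame_boxes[object_id])
    return trajectories


# Made-up example: two frames, three objects, one prompt (all values hypothetical).
annotation = PromptAnnotation(
    prompt="the cars that are turning left",
    referred_ids_by_frame={"frame_0": ["car_1", "car_2"], "frame_1": ["car_1"]},
)
boxes_by_frame = {
    "frame_0": {"car_1": (1.0, 2.0, 0.5), "car_2": (4.0, 2.0, 0.5), "ped_7": (0.0, 0.0, 0.0)},
    "frame_1": {"car_1": (1.5, 2.5, 0.5), "ped_7": (0.1, 0.0, 0.0)},
}
tracks = referred_trajectories(annotation, boxes_by_frame)
```

In this toy setup a single prompt selects a varying number of objects per frame, and the pedestrian is never included because the prompt does not refer to it.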

Our model is built upon PF-Track.

Please refer to data.md for preparing data and pre-trained models.

Please refer to environment.md for environment installation.

Please refer to training_inference.md for training and evaluation.

| Method | AMOTA | AMOTP | RECALL | Model | Config |
|---|---|---|---|---|---|
| PromptTrack | 0.200 | 1.572 | 32.5% | model | config |

If you find our work useful in your research, please consider citing the following papers.

@article{wu2023language,
  title={Language Prompt for Autonomous Driving},
  author={Wu, Dongming and Han, Wencheng and Wang, Tiancai and Liu, Yingfei and Zhang, Xiangyu and Shen, Jianbing},
  journal={arXiv preprint},
  year={2023}
}

@inproceedings{wu2023referring,
  title={Referring multi-object tracking},
  author={Wu, Dongming and Han, Wencheng and Wang, Tiancai and Dong, Xingping and Zhang, Xiangyu and Shen, Jianbing},
  booktitle={CVPR},
  year={2023}
}

We thank the authors of the following open-source projects.