CLIP-DINOiser: Teaching CLIP a few DINO tricks for Open-Vocabulary Semantic Segmentation

Monika Wysoczańska Oriane Siméoni Michaël Ramamonjisoa Andrei Bursuc Tomasz Trzciński Patrick Pérez


Official PyTorch implementation of CLIP-DINOiser: Teaching CLIP a few DINO tricks.

@article{wysoczanska2023clipdino,

title={CLIP-DINOiser: Teaching CLIP a few DINO tricks for open-vocabulary semantic segmentation},

author={Wysocza{\'n}ska, Monika and Sim{\'e}oni, Oriane and Ramamonjisoa, Micha{\"e}l and Bursuc, Andrei and Trzci{\'n}ski, Tomasz and P{\'e}rez, Patrick},

journal={arxiv},

year={2023}

}

Updates

- [27/03/2023] Training code released. Weights updated to the ImageNet-trained version. MaskCLIP code modified to load weights directly from the OpenCLIP model.

- [20/12/2023] Code release

Try our model!

Set up the environment:

# Create conda environment

conda create -n clipdino python=3.9

conda activate clipdino

conda install pytorch==1.12.1 torchvision==0.13.1 cudatoolkit=[your CUDA version] -c pytorch

pip install -r requirements.txt

You will also need to install MMCV and MMSegmentation by running:

pip install -U openmim

mim install mmengine

mim install "mmcv-full==1.6.0"

mim install "mmsegmentation==0.27.0"
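After installation, it can help to confirm that the pinned versions are the ones actually installed. The following is a minimal sketch using only the Python standard library; `check_versions` and `PINNED` are hypothetical helpers, not part of this repository, and the distribution names are assumed to match what pip and mim register.

```python
from importlib import metadata

# Versions pinned in the installation commands above.
# Assumption: the packages are registered under these distribution names
# (a conda install of PyTorch normally registers itself as "torch").
PINNED = {
    "torch": "1.12.1",
    "torchvision": "0.13.1",
    "mmcv-full": "1.6.0",
    "mmsegmentation": "0.27.0",
}


def check_versions(pinned=PINNED):
    """Return {package: (expected, installed or None, matches)}."""
    report = {}
    for name, expected in pinned.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        # Local build tags such as "+cu113" are ignored in the comparison.
        matches = installed is not None and installed.split("+")[0] == expected
        report[name] = (expected, installed, matches)
    return report


if __name__ == "__main__":
    for name, (expected, installed, ok) in check_versions().items():
        status = "OK" if ok else "MISMATCH"
        print(f"{name:15s} expected {expected:8s} found {installed} [{status}]")
```

A `MISMATCH` line does not necessarily mean the code will fail, only that the environment differs from the one described here.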

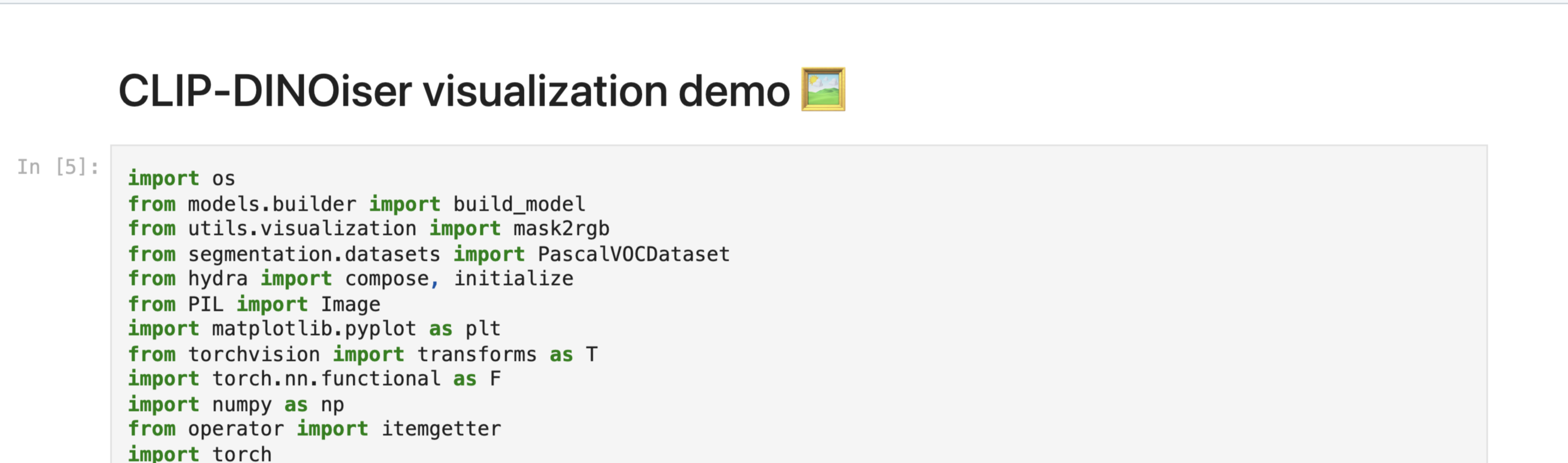

You can try our model in the Jupyter notebook demo.ipynb.

You can also try our demo from the command line:

python demo.py --file_path [path to the image file] --prompts [list of the text prompts separated by ',']

Example:

python demo.py --file_path assets/rusted_van.png --prompts "rusted van,foggy clouds,mountains,green trees"
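Because individual prompts may contain spaces, the whole list should be passed as one quoted argument, as in the example above. How demo.py splits that argument is not shown here; the sketch below assumes a plain comma split with whitespace trimming, and `parse_prompts` is a hypothetical helper.

```python
def parse_prompts(raw):
    """Split a comma-separated prompt string into a list of clean prompts."""
    # Empty entries (e.g. from a trailing comma) are dropped.
    return [p.strip() for p in raw.split(",") if p.strip()]


prompts = parse_prompts("rusted van,foggy clouds,mountains,green trees")
print(prompts)
# ['rusted van', 'foggy clouds', 'mountains', 'green trees']
```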

In the paper, following previous works, we use 8 benchmarks: (i) with background: PASCAL VOC, PASCAL Context, and COCO-Object, and (ii) without background: PASCAL VOC20, PASCAL Context59, COCO-Stuff, Cityscapes, and ADE20k.

To run the evaluation, download and set up the PASCAL VOC, PASCAL Context, COCO-Stuff164k, Cityscapes, and ADE20k datasets following the MMSegmentation data preparation document.

The COCO-Object dataset uses only the object classes of the COCO-Stuff164k dataset, collected from its instance segmentation annotations. Run the following command to convert the instance segmentation annotations to semantic segmentation annotations:

python tools/convert_coco.py data/coco_stuff164k/ -o data/coco_stuff164k/
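To illustrate what converting instance annotations to semantic annotations means, here is a toy sketch in pure Python. It is not the logic of tools/convert_coco.py: the function name, the mask representation, the background label of 0, and the rule that a later instance overwrites an earlier one are all assumptions made for the example.

```python
def instances_to_semantic(height, width, instances, background=0):
    """Paint instance masks into one semantic label map.

    instances: list of (class_id, mask), where mask is a set of (row, col)
    pixel coordinates belonging to that instance.
    """
    semantic = [[background] * width for _ in range(height)]
    for class_id, mask in instances:
        for row, col in mask:
            # Instance identity is discarded; only the class label is kept.
            semantic[row][col] = class_id
    return semantic


# Two instances of class 1 and one instance of class 2 on a 3x4 image.
instances = [
    (1, {(0, 0), (0, 1)}),
    (1, {(2, 3)}),
    (2, {(1, 1), (1, 2)}),
]
for row in instances_to_semantic(3, 4, instances):
    print(row)
# [1, 1, 0, 0]
# [0, 2, 2, 0]
# [0, 0, 0, 1]
```

Both instances of class 1 end up with the same label, which is the difference between an instance map and a semantic map.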

To reproduce our results, run:

torchrun main_eval.py clip_dinoiser.yaml

or using multiple GPUs:

CUDA_VISIBLE_DEVICES=[0,1..] torchrun --nproc_per_node=auto main_eval.py clip_dinoiser.yaml
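In the multi-GPU command, `[0,1..]` is a placeholder: `CUDA_VISIBLE_DEVICES` expects a plain comma-separated list of device indices, without brackets. The sketch below assembles the launch command for a given set of GPUs. `build_eval_command` is a hypothetical helper, and it passes an explicit process count instead of `auto`, which is a choice made for this example.

```python
import os
import shlex


def build_eval_command(gpu_ids, script="main_eval.py", config="clip_dinoiser.yaml"):
    """Return (env, argv) for launching the evaluation on the given GPUs."""
    env = dict(os.environ)
    # Comma-separated device indices, e.g. "0,1".
    env["CUDA_VISIBLE_DEVICES"] = ",".join(str(i) for i in gpu_ids)
    # One process per visible GPU.
    argv = ["torchrun", f"--nproc_per_node={len(gpu_ids)}", script, config]
    return env, argv


env, argv = build_eval_command([0, 1])
print(f"CUDA_VISIBLE_DEVICES={env['CUDA_VISIBLE_DEVICES']} {shlex.join(argv)}")
# CUDA_VISIBLE_DEVICES=0,1 torchrun --nproc_per_node=2 main_eval.py clip_dinoiser.yaml
```

The returned pair can be handed to `subprocess.run(argv, env=env)` to launch the run from a script.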

Hardware requirements: you will need one GPU (~14GB of memory) to run the training. On an NVIDIA A5000 GPU, training takes approximately 3 hours.

Download ImageNet and update the ImageNet folder path in the configs/clip_dinoiser.yaml file.

Install FOUND by running:

cd models;

git clone git@github.com:valeoai/FOUND.git

cd FOUND;

git clone https://github.com/facebookresearch/dino.git

cd dino;

touch __init__.py

echo -e "import sys\nfrom os.path import dirname, join\nsys.path.insert(0, join(dirname(__file__), '.'))" >> __init__.py; cd ../;
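The final `echo` command writes three lines of Python into `dino/__init__.py`; they put the `dino` directory at the front of `sys.path` when the package is imported, presumably so that modules inside the DINO checkout can be found by top-level imports. The sketch below wraps the same logic in a function so it can be run outside the package; `prepend_package_dir` and the example path are illustrative only.

```python
import sys
from os.path import dirname, join


def prepend_package_dir(init_file):
    """Put the directory containing `init_file` at the front of sys.path."""
    package_dir = join(dirname(init_file), ".")
    sys.path.insert(0, package_dir)
    return package_dir


# In dino/__init__.py the argument is the module's own __file__.
# The path below is a stand-in used only for illustration.
added = prepend_package_dir("models/FOUND/dino/__init__.py")
print(added == sys.path[0])
# True
```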

To start training, run:

CUDA_VISIBLE_DEVICES=0 torchrun --nproc_per_node=auto train.py clip_dinoiser.yaml

Currently, we only support single-GPU training.

This repo relies heavily on the following projects:

- TCL: Cha et al. "Text-grounded Contrastive Learning"

- MaskCLIP: Zhou et al. "Extract Free Dense Labels from CLIP"

- GroupViT: Xu et al. "GroupViT: Semantic Segmentation Emerges from Text Supervision"

- FOUND: Siméoni et al. "Unsupervised Object Localization: Observing the Background to Discover Objects"

Thanks to the authors!