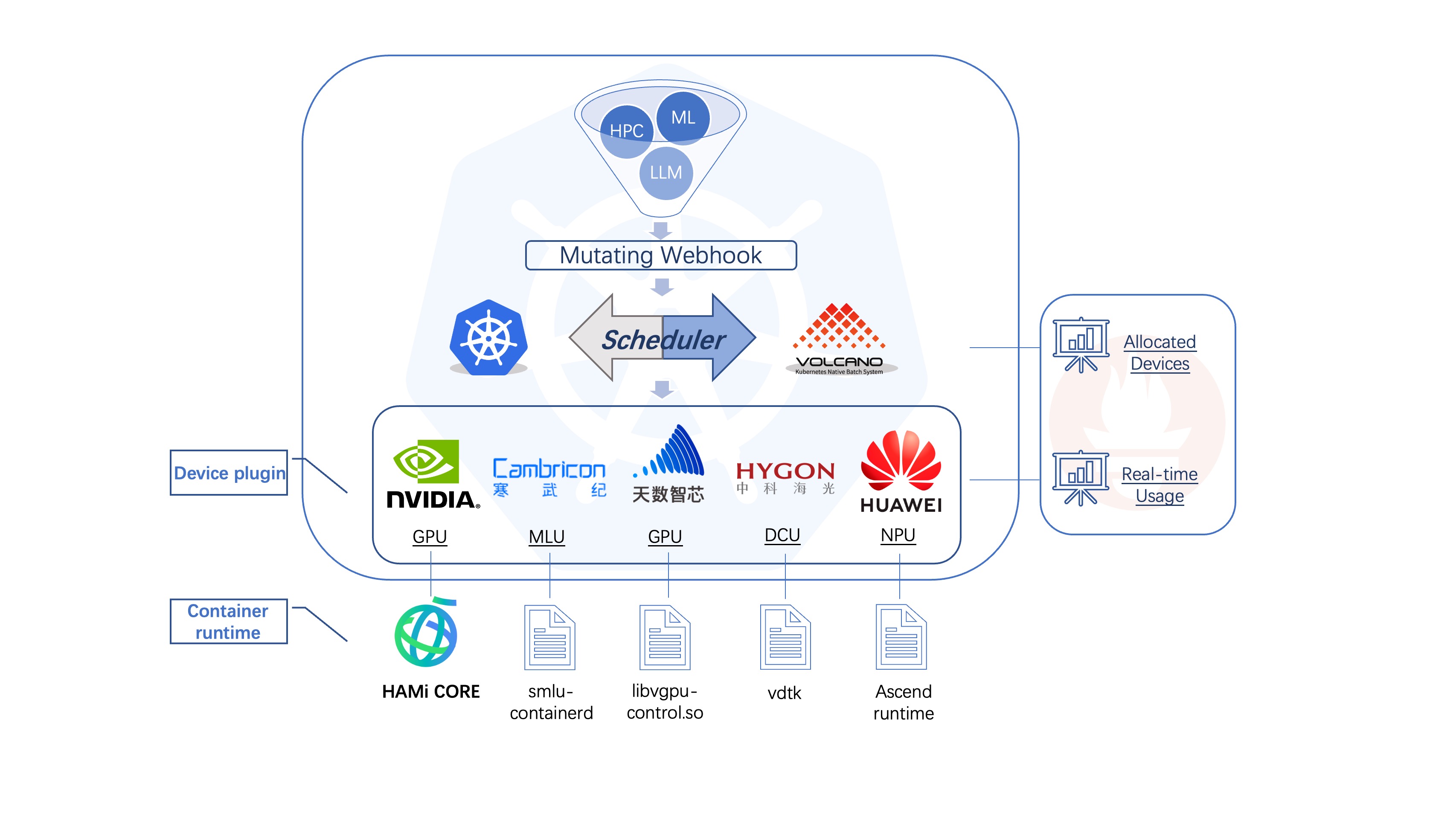

HAMi-core is the in-container GPU resource controller. It has been adopted by HAMi and volcano.

HAMi-core has the following features:

- Virtualize device memory
- Limit device utilization by a self-implemented time-slicing mechanism
- Monitor device utilization in real time

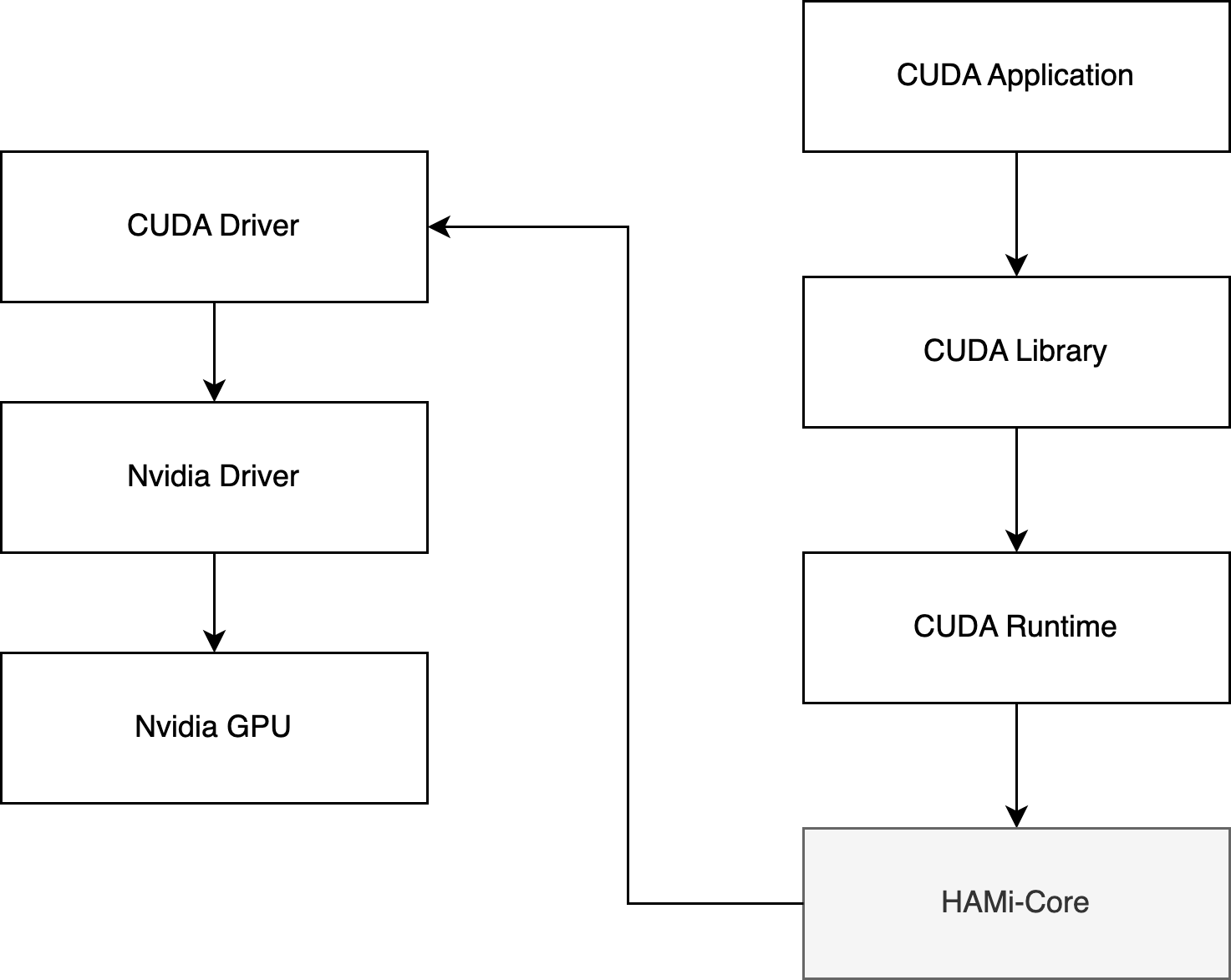

HAMi-core works by hijacking the API calls between the CUDA Runtime (`libcudart.so`) and the CUDA Driver (`libcuda.so`), as shown in the figure below:

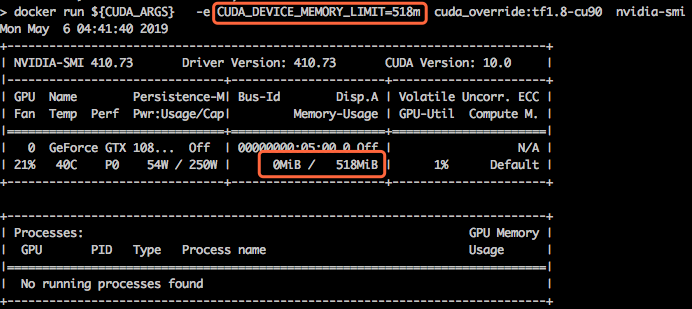
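
Since the usage instructions load the hook with `LD_PRELOAD=./libvgpu.so`, the library is mapped into every process it controls. One way to check that a running workload actually picked it up is to look for it in the process's memory map. This is a minimal sketch, assuming a Linux host with `/proc` mounted and permission to read the target process (same user or root); the helper name `is_lib_mapped` is hypothetical and not part of HAMi-core. It only shows that the library is loaded, not that limits are being enforced.

```shell
# POSIX sh. Hypothetical helper for illustration; not shipped with HAMi-core.
# is_lib_mapped PID NAME: succeed if a file whose path contains NAME
# (matched as a fixed string) is mapped into process PID. Libraries loaded
# via LD_PRELOAD appear in /proc/PID/maps like any other shared object.
is_lib_mapped() {
    pid=$1
    name=$2
    # Fails (non-zero) if NAME is absent or /proc/PID/maps is unreadable.
    grep -qF -- "$name" "/proc/$pid/maps" 2>/dev/null
}
```

For example, `is_lib_mapped "$(pgrep -n python)" libvgpu.so && echo "HAMi-core hook loaded"` checks the most recently started `python` process.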

To build from source:

```shell
sh build.sh
```

To build in Docker:

```shell
docker build . -f dockerfiles/Dockerfile
```

`CUDA_DEVICE_MEMORY_LIMIT` indicates the upper limit of device memory (e.g. `1g`, `1024m`, `1048576k`, `1073741824`).

`CUDA_DEVICE_SM_LIMIT` indicates the SM utilization percentage of each device.
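
The memory limit examples `1g`, `1024m`, `1048576k` and `1073741824` all equal 1 GiB, which suggests that the `k`/`m`/`g` suffixes are powers of 1024 and that a bare number is a byte count. The sketch below normalizes such a value to bytes and sanity-checks the SM limit before a workload is launched. The helper names `to_bytes` and `check_sm_limit`, the lowercase-only suffixes, and the 0 to 100 range check are assumptions for illustration, not HAMi-core's actual parser.

```shell
# POSIX sh. Hypothetical helpers; they mirror the documented format only.
# to_bytes VALUE: print VALUE in bytes, treating k, m, g as powers of 1024.
to_bytes() {
    v=$1
    case $v in
        *g) mult=1073741824; n=${v%g} ;;
        *m) mult=1048576;    n=${v%m} ;;
        *k) mult=1024;       n=${v%k} ;;
        *)  mult=1;          n=$v ;;
    esac
    # The numeric part must be a non-empty run of digits.
    case $n in
        '' | *[!0-9]*) return 1 ;;
    esac
    # Strip leading zeros so shell arithmetic does not read the value as octal.
    while [ "${#n}" -gt 1 ] && [ "${n#0}" != "$n" ]; do n=${n#0}; done
    echo $((n * mult))
}

# check_sm_limit VALUE: succeed if VALUE is an integer from 0 to 100 (assumed range).
check_sm_limit() {
    case $1 in
        '' | *[!0-9]*) return 1 ;;
    esac
    [ "$1" -le 100 ]
}
```

A pre-launch check could then be `to_bytes "$CUDA_DEVICE_MEMORY_LIMIT" >/dev/null && check_sm_limit "$CUDA_DEVICE_SM_LIMIT" || echo "invalid HAMi-core limits" >&2`.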

```shell
# Limit device memory to 1g and cap SM utilization at 50% for all devices
export LD_PRELOAD=./libvgpu.so
export CUDA_DEVICE_MEMORY_LIMIT=1g
export CUDA_DEVICE_SM_LIMIT=50
```

To try it in a Docker image:

```shell
# Make docker image (tagged so that the run command below can find it)
docker build . -f dockerfiles/Dockerfile-tf1.8-cu90 -t cuda_vmem:tf1.8-cu90

# Launch the docker image
export DEVICE_MOUNTS="--device /dev/nvidia0:/dev/nvidia0 --device /dev/nvidia-uvm:/dev/nvidia-uvm --device /dev/nvidiactl:/dev/nvidiactl"
export LIBRARY_MOUNTS="-v /usr/cuda_files:/usr/cuda_files -v $(which nvidia-smi):/bin/nvidia-smi"

docker run ${LIBRARY_MOUNTS} ${DEVICE_MOUNTS} -it \
    -e CUDA_DEVICE_MEMORY_LIMIT=2g \
    cuda_vmem:tf1.8-cu90 \
    python -c "import tensorflow; tensorflow.Session()"
```

Use the environment variable `LIBCUDA_LOG_LEVEL` to set the visibility of logs:

| LIBCUDA_LOG_LEVEL | Description |
|---|---|
| 0 | errors only |
| 1 (default), 2 | errors, warnings, messages |
| 3 | infos, errors, warnings, messages |
| 4 | debugs, errors, warnings, messages |
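
The table reads as a set of cumulative thresholds: each category becomes visible once `LIBCUDA_LOG_LEVEL` reaches a certain value, and level 2 shows the same categories as level 1. The sketch below encodes the table that way, which can help when deciding what level a deployment needs. The helper name `log_enabled` and its category names are hypothetical; it restates the table and is not HAMi-core's logging code.

```shell
# POSIX sh. Hypothetical helper that restates the LIBCUDA_LOG_LEVEL table.
# log_enabled CATEGORY: succeed if CATEGORY (error|warning|message|info|debug)
# is visible at the current LIBCUDA_LOG_LEVEL.
log_enabled() {
    level=${LIBCUDA_LOG_LEVEL:-1}   # the table lists 1 as the default
    case $1 in
        error)           need=0 ;;
        warning|message) need=1 ;;
        info)            need=3 ;;
        debug)           need=4 ;;
        *)               return 2 ;;   # unknown category
    esac
    [ "$level" -ge "$need" ]
}
```

In practice you would simply set the variable for the workload, for example `LIBCUDA_LOG_LEVEL=4` alongside `LD_PRELOAD=./libvgpu.so`, to see debug output.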

To run the allocation test:

```shell
./test/test_alloc
```