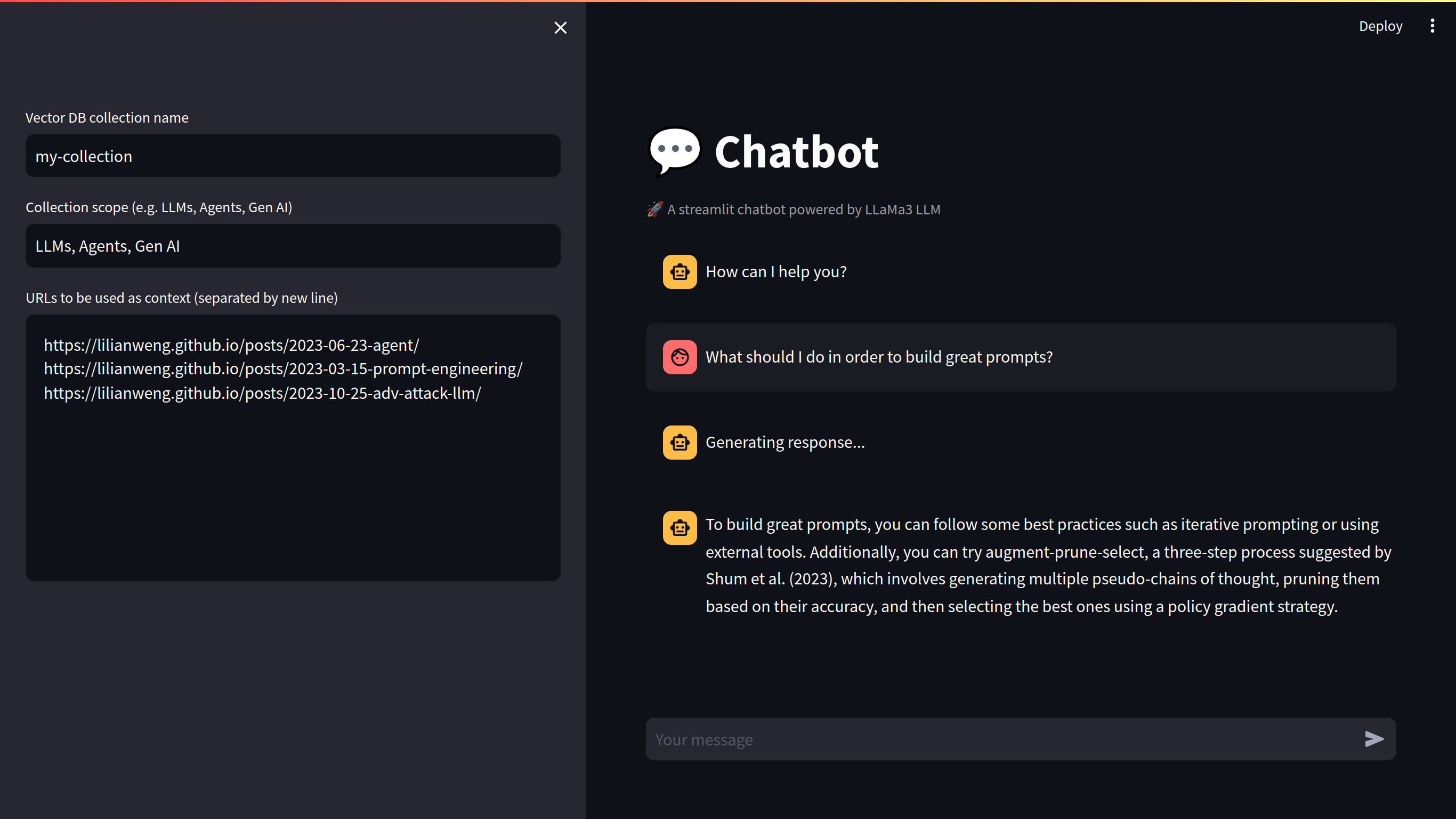

Fully local RAG agent powered by LLaMa3 and LangChain

Create a virtual environment and install the requirements:

python -m venv .venv

source .venv/bin/activate  # on Windows: .venv\Scripts\activate

pip install -r requirements.txt

If you don't have Ollama installed, follow this doc.
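If you are unsure whether the requirements were installed into the virtual environment, a quick check is possible from Python itself. The snippet below is a minimal sketch, not part of this repository: the helper name `in_virtualenv` is hypothetical, and it relies only on the standard-library fact that `sys.prefix` differs from `sys.base_prefix` inside an environment created with `python -m venv`.

```python
import sys


def in_virtualenv(prefix: str = sys.prefix, base_prefix: str = sys.base_prefix) -> bool:
    """Return True when the interpreter appears to run inside a venv.

    Hypothetical helper for illustration: inside a venv, sys.prefix points
    at the environment directory while sys.base_prefix points at the base
    Python installation.
    """
    return prefix != base_prefix


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
```

Running it with the `.venv` interpreter should report that the environment is active; if it does not, activate the environment before running `pip install`.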

And then run:

ollama pull llama3

If you want to use LangSmith, copy .env.example to .env and set LANGSMITH_API_KEY to your API key.
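To confirm that the `.env` file actually contains a LangSmith key, something like the following can help. This is a rough sketch using only the standard library; the function names (`parse_env`, `langsmith_key_is_set`, `check_env_file`) are made up for illustration, the parser handles only simple `KEY=VALUE` lines, and the app itself may load the file differently.

```python
from pathlib import Path


def parse_env(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def langsmith_key_is_set(env: dict[str, str]) -> bool:
    """True when LANGSMITH_API_KEY is present and non-empty."""
    return bool(env.get("LANGSMITH_API_KEY"))


def check_env_file(path: str = ".env") -> bool:
    """Return True if the file exists and defines a non-empty key."""
    env_path = Path(path)
    if not env_path.exists():
        return False
    return langsmith_key_is_set(parse_env(env_path.read_text()))
```

Calling `check_env_file()` from the project root returns `False` when `.env` is missing or the key was left blank after copying `.env.example`.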

Go to Run and Debug in VSCode and select Debug App.

If Ollama hangs or keeps running indefinitely, try restarting the service:

sudo systemctl restart ollama

Or:

pgrep ollama # returns the pid

kill -9 <pid>

sudo systemctl start ollama
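After restarting, it can be useful to check that the Ollama server responds and that `llama3` is available before launching the app. The sketch below assumes Ollama is listening on its default address `http://localhost:11434` and queries its `/api/tags` endpoint, which lists locally pulled models. The helper names `has_model` and `ollama_status` are hypothetical, and the timeout is there so a hung server produces an answer instead of blocking forever.

```python
import json
import urllib.error
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default address


def has_model(tags: dict, name: str) -> bool:
    """True if a listed model is named `name` or `name:<tag>`."""
    for model in tags.get("models", []):
        model_name = model.get("name", "")
        if model_name == name or model_name.startswith(name + ":"):
            return True
    return False


def ollama_status(base_url: str = OLLAMA_URL,
                  model: str = "llama3",
                  timeout: float = 5.0) -> str:
    """Return 'ready', 'model missing', or 'unreachable: <reason>'."""
    try:
        # /api/tags returns JSON of the form {"models": [{"name": ...}, ...]}.
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            tags = json.load(resp)
    except (urllib.error.URLError, OSError, ValueError) as exc:
        # Covers refused connections, timeouts, and malformed responses.
        return f"unreachable: {exc}"
    return "ready" if has_model(tags, model) else "model missing"
```

A result of `model missing` suggests running `ollama pull llama3` again, while `unreachable` points to the service not having come back up.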