Main modified files:

- deformable_transformer.py

- ms_deform_attn_func.py

- ms_deform_attn.py

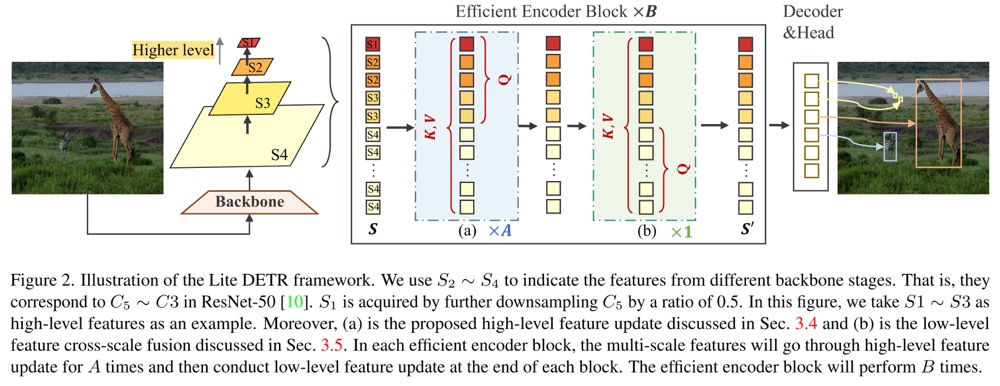

This is the official implementation of the paper "Lite DETR: An Interleaved Multi-Scale Encoder for Efficient DETR", accepted to CVPR 2023.

Code is available now.

Efficient encoder design to reduce computational cost

- Simple. Only a few dozen lines of code change (not counting the pluggable key-aware attention).

- Effective. Reduces encoder cost by 50% while preserving most of the original performance.

- General. Validated on a series of DETR models (Deformable DETR, H-DETR, DINO).

| | name | backbone | box AP | Checkpoint |
|---|---|---|---|---|
| 1 | Lite-DINO-H2L2-(2+1)x3 | R50 | 49.9 | Link |
| 2 | Lite-DINO-H3L1-(6+1)x1 | R50 | 50.2 | Link |
| 3 | Lite-DINO-H3L1-(2+1)x3 | R50 | 50.4 | Link |

We use the same environment as DINO to run Lite-DINO. If you have already set up DINO, you can skip this step.

We tested our models with python=3.7.3, pytorch=1.9.0, cuda=11.1. Other versions may work as well.
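To compare a local setup against the tested versions, a small check along these lines may help. This is only a sketch: `report_environment` is a hypothetical helper, not part of this repo, and it only reports versions without enforcing them.

```python
import importlib
import platform


def report_environment():
    """Collect the Python, PyTorch, and CUDA versions of the current setup."""
    info = {"python": platform.python_version(), "pytorch": None, "cuda": None}
    try:
        torch = importlib.import_module("torch")
    except ImportError:
        # PyTorch is not installed yet; only the Python version is known.
        return info
    info["pytorch"] = torch.__version__
    # torch.version.cuda is None for CPU-only builds of PyTorch.
    info["cuda"] = torch.version.cuda
    return info


if __name__ == "__main__":
    for name, version in report_environment().items():
        print(f"{name}: {version}")
```

The output can then be compared by eye with python=3.7.3, pytorch=1.9.0, cuda=11.1.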

- Clone this repo

      git clone https://github.com/IDEA-Research/Lite-DETR
      cd Lite-DETR

- Install PyTorch and torchvision

  Follow the instructions at https://pytorch.org/get-started/locally/.

      # an example:
      conda install -c pytorch pytorch torchvision

- Install other needed packages

      pip install -r requirements.txt

- Compile CUDA operators

      cd models/dino/ops
      python setup.py build install
      # unit test (should see all checking is True)
      python test.py
      cd ../../..

Please download the COCO 2017 dataset and organize it as follows:

    COCODIR/
    ├── train2017/
    ├── val2017/
    └── annotations/
        ├── instances_train2017.json
        └── instances_val2017.json
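Before launching evaluation or training, it can be useful to confirm that the dataset directory matches the layout above. The following is a minimal sketch; `missing_coco_paths` and `REQUIRED_COCO_PATHS` are hypothetical names, not part of this repo. It assumes the two JSON files sit inside `annotations/`, as in the standard COCO 2017 layout.

```python
from pathlib import Path

# Relative paths expected under COCODIR (assumption: the annotation
# files live inside annotations/, as in the standard COCO layout).
REQUIRED_COCO_PATHS = (
    "train2017",
    "val2017",
    "annotations/instances_train2017.json",
    "annotations/instances_val2017.json",
)


def missing_coco_paths(coco_dir):
    """Return the required entries that do not exist under coco_dir."""
    root = Path(coco_dir)
    return [rel for rel in REQUIRED_COCO_PATHS if not (root / rel).exists()]


if __name__ == "__main__":
    missing = missing_coco_paths("/path/to/your/COCODIR")
    if missing:
        print("Missing entries:", ", ".join(missing))
    else:
        print("COCO directory layout looks complete.")
```

An empty list means every expected directory and annotation file was found. The check does not validate the contents of the files.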

Download one of our model checkpoints from the links in the table above and run the command below.

    python -m torch.distributed.launch main.py \
      --eval -c config/DINO/DINO_4scale.py --coco_path /path/to/your/COCODIR \
      --options num_expansion=a enc_scale=b --resume /path/to/ckpt

Note: for Lite-DINO-H2L2-(2+1)x3, a=3, b=1.

for Lite-DINO-H3L1-(6+1)x1, a=1, b=3.

for Lite-DINO-H3L1-(2+1)x3, a=3, b=3.

Add --benchmark --benchmark_only at the end of the above command to measure the GFLOPs.
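Since the evaluation command differs between models only in the values of a and b and in the optional benchmark flags, it can be assembled programmatically. The sketch below uses a hypothetical helper, `build_eval_command`, which is not part of this repo. It only builds the argument list from the flags shown above and does not run anything.

```python
import shlex


def build_eval_command(num_expansion, enc_scale, coco_path, checkpoint,
                       benchmark=False):
    """Assemble the evaluation command as a list of arguments."""
    cmd = [
        "python", "-m", "torch.distributed.launch", "main.py",
        "--eval",
        "-c", "config/DINO/DINO_4scale.py",
        "--coco_path", str(coco_path),
        "--options",
        f"num_expansion={num_expansion}",
        f"enc_scale={enc_scale}",
        "--resume", str(checkpoint),
    ]
    if benchmark:
        # Appended at the end of the command to measure GFLOPs.
        cmd += ["--benchmark", "--benchmark_only"]
    return cmd


if __name__ == "__main__":
    # Lite-DINO-H2L2-(2+1)x3 uses a=3, b=1 according to the note above.
    cmd = build_eval_command(3, 1, "/path/to/your/COCODIR", "/path/to/ckpt")
    print(" ".join(shlex.quote(arg) for arg in cmd))
```

The returned list can be passed to `subprocess.run` from the repo root, or printed and pasted into a shell.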

We do not provide a CUDA implementation of the key-aware deformable attention (KDA), so training and inference are slow. As KDA mainly affects performance on small objects, you can use the original deformable attention instead by setting key_aware=False in the config. Overall performance will not be significantly affected.

The concurrent work RT-DETR adopts a similar idea for handling high-resolution feature maps, along with other speed improvements. It is well optimized for running speed, so we encourage you to use RT-DETR in practical scenarios.

You can also train our model on a single process:

    python -m torch.distributed.launch main.py \
      -c config/DINO/DINO_4scale.py --coco_path /path/to/your/COCODIR \
      --options num_expansion=a enc_scale=b

However, as training is time-consuming, we suggest training the model on multiple GPUs:

    python -m torch.distributed.launch --nproc_per_node=8 main.py \
      -c config/DINO/DINO_4scale.py --coco_path /path/to/your/COCODIR \
      --options num_expansion=a enc_scale=b

If you find our work helpful for your research, please consider citing the following BibTeX entry.

    @article{li2023lite,
      title={Lite DETR: An Interleaved Multi-Scale Encoder for Efficient DETR},
      author={Li, Feng and Zeng, Ailing and Liu, Shilong and Zhang, Hao and Li, Hongyang and Zhang, Lei and Ni, Lionel M},
      journal={arXiv preprint arXiv:2303.07335},
      year={2023}
    }