Shilin Xu · Haobo Yuan · Qingyu Shi · Lu Qi · Jingbo Wang · Yibo Yang · Yining Li · Kai Chen · Yunhai Tong · Bernard Ghanem · Xiangtai Li · Ming-Hsuan Yang

PKU, NTU, UC-Merced, Shanghai AI, KAUST, Google Research

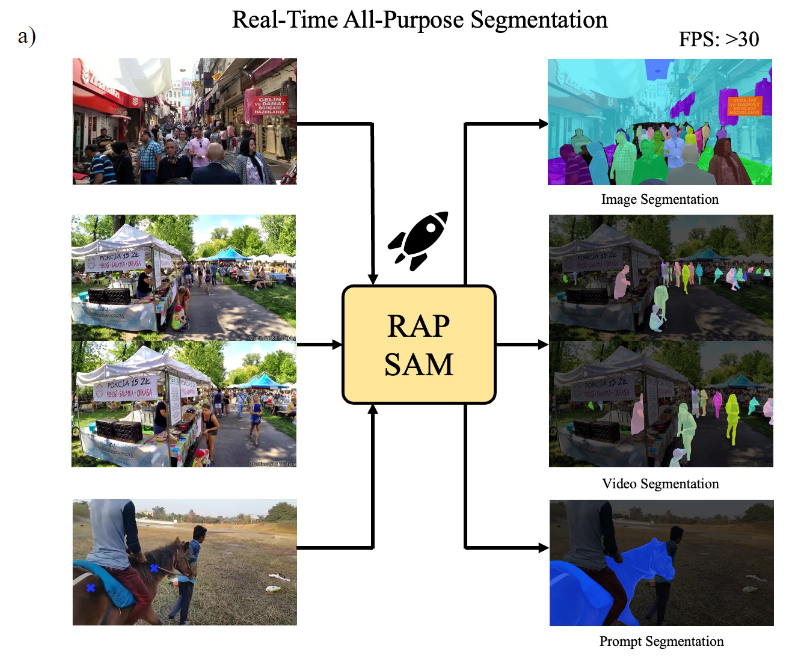

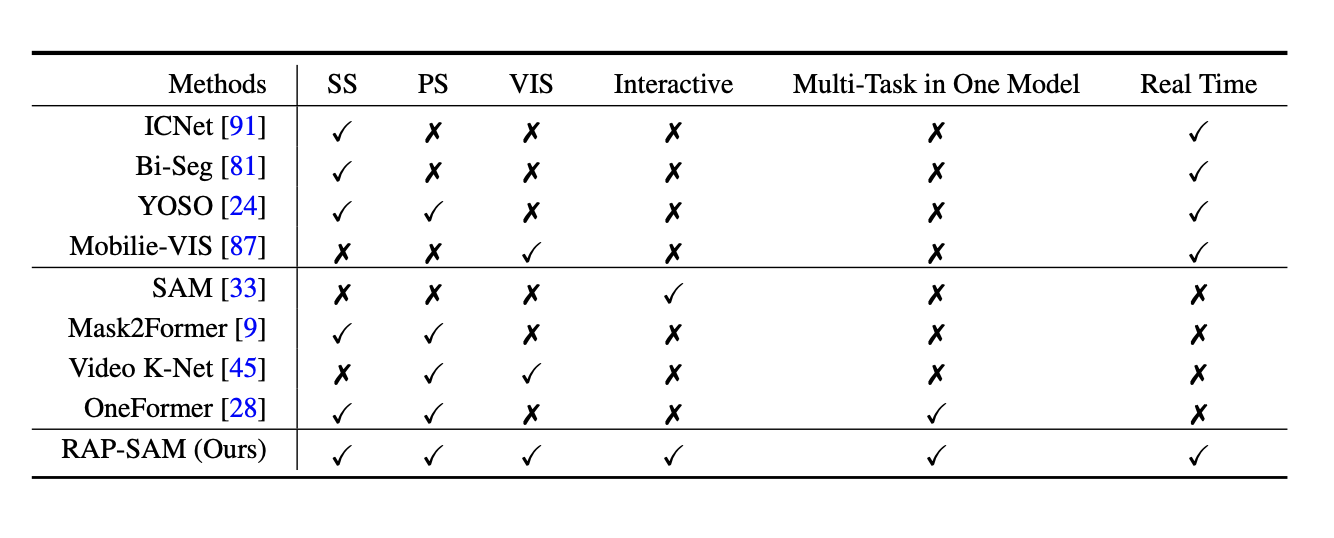

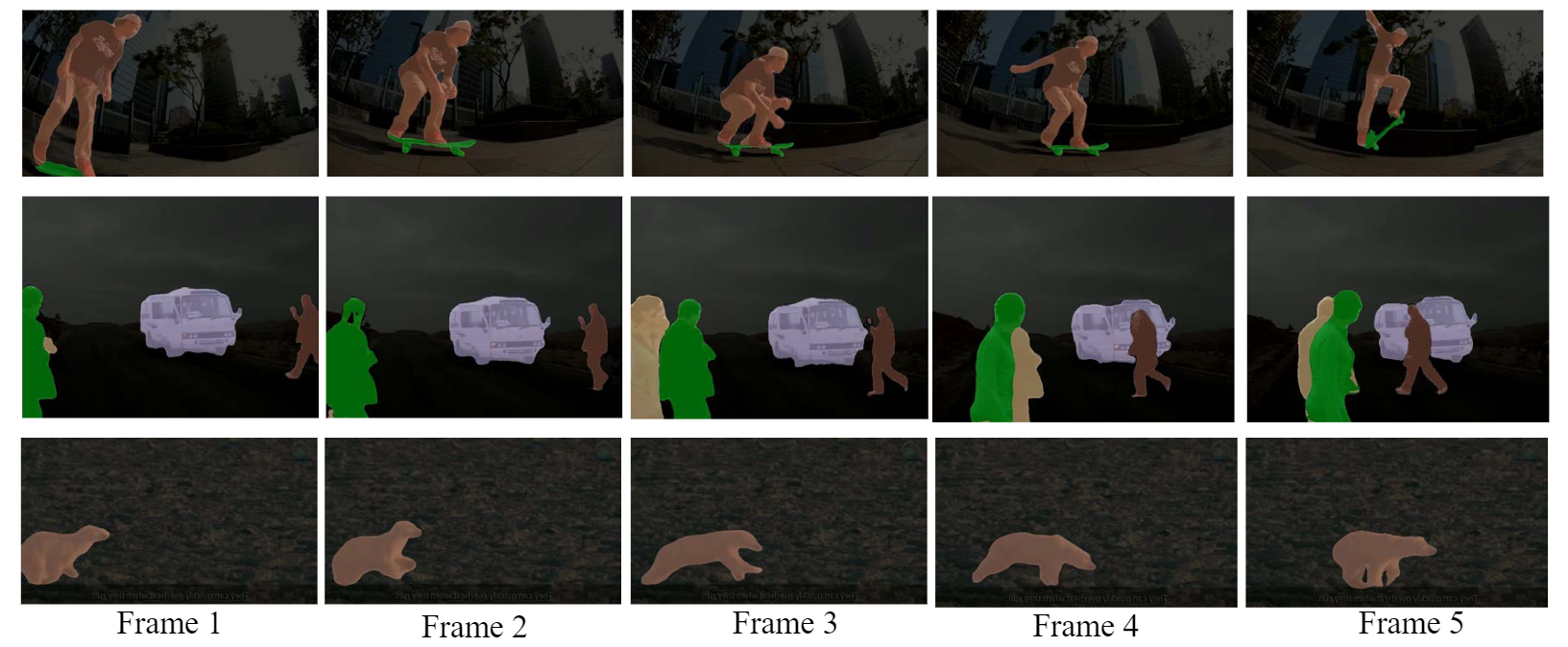

We present real-time all-purpose segmentation, which segments and recognizes objects for image, video, and interactive inputs. In addition to benchmarking, we propose a simple yet effective baseline, RAP-SAM, which achieves the best accuracy-speed trade-off across the three tasks.

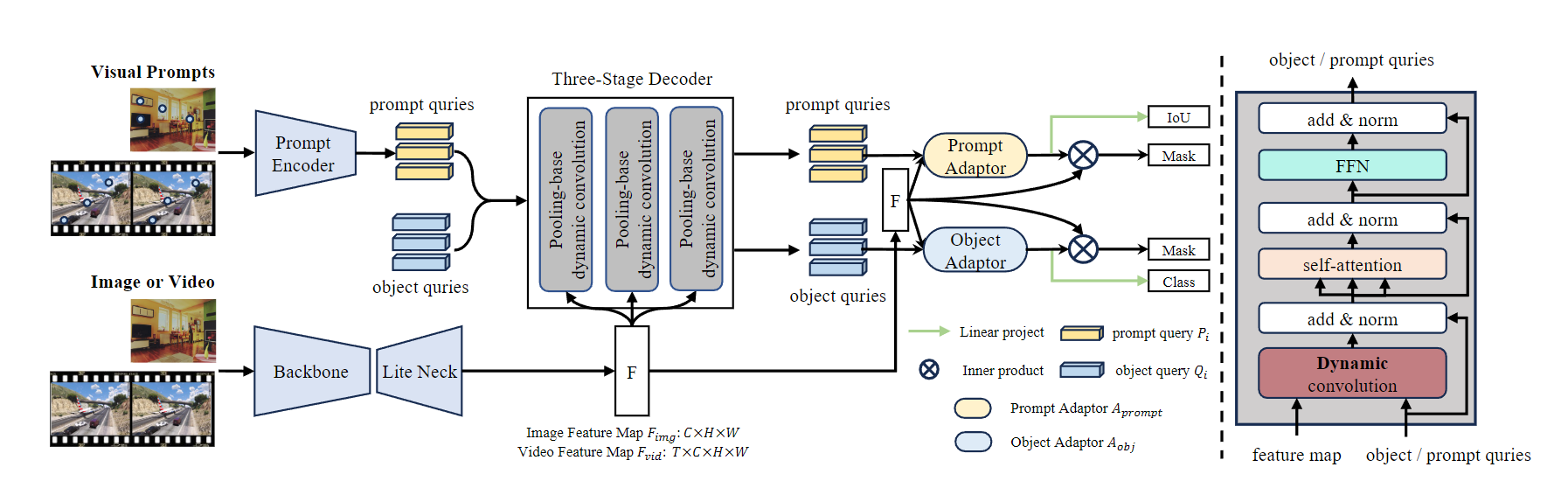

RAP-SAM is a simple encoder-decoder architecture. It contains a backbone, a lightweight neck, and a shared multitask decoder. Following SAM, we adopt a prompt encoder to encode visual prompts into queries. The same decoder processes both the visual prompt queries and the initial object queries, so that computation and parameters are shared. To better balance the results for interactive segmentation and image/video segmentation, we add a prompt adapter and an object adapter at the end of the decoder.
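The data flow described above can be illustrated with a toy, dependency-free sketch. This is not the RAP-SAM implementation: the single-head attention, the layer count, the concatenation of the two query sets, and the scaling "adapters" are all assumptions chosen only to show how one shared decoder can feed two task-specific adapters.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]
Adapter = Callable[[Vector], Vector]


def cross_attend(query: Vector, features: List[Vector]) -> Vector:
    """Single-head dot-product attention of one query over image features."""
    scale = math.sqrt(len(query))
    scores = [sum(q * f for q, f in zip(query, feat)) / scale for feat in features]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [
        sum(w * feat[i] for w, feat in zip(weights, features)) / total
        for i in range(len(query))
    ]


def shared_decoder(queries: List[Vector], features: List[Vector],
                   num_layers: int = 3) -> List[Vector]:
    """Refine every query with the same layers (num_layers is an assumption)."""
    for _ in range(num_layers):
        queries = [
            [q + a for q, a in zip(query, cross_attend(query, features))]
            for query in queries
        ]
    return queries


def make_scaling_adapter(scale: float) -> Adapter:
    """Hypothetical stand-in for a learned adapter: scales the query."""
    return lambda query: [scale * x for x in query]


def decode(object_queries: List[Vector], prompt_queries: List[Vector],
           features: List[Vector], object_adapter: Adapter,
           prompt_adapter: Adapter) -> Tuple[List[Vector], List[Vector]]:
    """Run both query types through one decoder, then split to the adapters."""
    refined = shared_decoder(object_queries + prompt_queries, features)
    split = len(object_queries)
    objects = [object_adapter(q) for q in refined[:split]]
    prompts = [prompt_adapter(q) for q in refined[split:]]
    return objects, prompts
```

Only the two adapters differ between the object branch and the prompt branch; every decoder layer is reused by both, which is the sharing the paragraph refers to.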

The detection framework is built upon MMDet3.0.

Install the packages:

pip install mmengine==0.8.4

pip install mmdet==3.3.0

Generate the classifier using the following command, or download it from CocoPanopticOVDataset_YouTubeVISDataset_2019.pth and CocoPanopticOVDataset.pth.

PYTHONPATH='.' python tools/gen_cls.py configs/rap_sam/rap_sam_convl_12e_adaptor.py
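Because the installation step pins exact package versions, a quick check of the environment can catch a mismatch early. The following is a minimal sketch using only the Python standard library; the function name `check_versions` is a hypothetical helper and is not part of this repository.

```python
from importlib import metadata

# Versions pinned in the installation step of this README.
REQUIRED = {"mmengine": "0.8.4", "mmdet": "3.3.0"}


def check_versions(required):
    """Map each package name to (installed version or None, matches pin)."""
    report = {}
    for name, wanted in required.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        report[name] = (installed, installed == wanted)
    return report


if __name__ == "__main__":
    for name, (installed, ok) in check_versions(REQUIRED).items():
        status = "ok" if ok else f"expected {REQUIRED[name]}"
        print(f"{name}: {installed or 'not installed'} ({status})")
```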

The main experiments are conducted on COCO and YouTube-VIS-2019 datasets. Please prepare datasets and organize them like the following:

├── data
│   ├── coco
│   │   ├── annotations
│   │   │   ├── instances_val2017.json
│   │   ├── train2017
│   │   ├── val2017
│   ├── youtube_vis_2019
│   │   ├── annotations
│   │   │   ├── youtube_vis_2019_train.json
│   │   │   ├── youtube_vis_2019_valid.json
│   │   ├── train
│   │   ├── valid
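Before launching training or evaluation, it may help to confirm that the layout above is in place. This is a small sketch, assuming `coco` and `youtube_vis_2019` both sit directly under `data/` with the nesting that the file names suggest; `missing_entries` is a hypothetical helper, not a script shipped with this repository.

```python
from pathlib import Path

# Paths relative to the data root, taken from the layout listed above.
EXPECTED = [
    "coco/annotations/instances_val2017.json",
    "coco/train2017",
    "coco/val2017",
    "youtube_vis_2019/annotations/youtube_vis_2019_train.json",
    "youtube_vis_2019/annotations/youtube_vis_2019_valid.json",
    "youtube_vis_2019/train",
    "youtube_vis_2019/valid",
]


def missing_entries(data_root="data"):
    """Return the expected files or folders that do not exist under data_root."""
    root = Path(data_root)
    return [rel for rel in EXPECTED if not (root / rel).exists()]


if __name__ == "__main__":
    missing = missing_entries()
    if missing:
        print("Missing dataset entries:")
        for rel in missing:
            print(f"  data/{rel}")
    else:
        print("Dataset layout looks complete.")
```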

python demo/demo.py demo/demo.jpg configs/rap_sam/eval_rap_sam_coco.py --weights rapsam_r50_12e.pth

We provide the checkpoints here. Download them, then run the commands below for inference.

./tools/dist_test.sh configs/rap_sam/eval_rap_sam_coco.py $CKPT $NUM_GPUS

./tools/dist_test.sh configs/rap_sam/eval_rap_sam_yt19.py $CKPT $NUM_GPUS

./tools/dist_test.sh configs/rap_sam/eval_rap_sam_prompt.py $CKPT $NUM_GPUS
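To run the three evaluations above in sequence with one checkpoint, the commands can be wrapped in a short script. This is only a sketch: the config paths and `./tools/dist_test.sh` come from the commands above, while the task labels (`coco`, `yt19`, `prompt`) and the helper names are hypothetical.

```python
import subprocess

# Config files from the evaluation commands in this README.
EVAL_CONFIGS = {
    "coco": "configs/rap_sam/eval_rap_sam_coco.py",
    "yt19": "configs/rap_sam/eval_rap_sam_yt19.py",
    "prompt": "configs/rap_sam/eval_rap_sam_prompt.py",
}


def build_eval_commands(ckpt, num_gpus):
    """Return one argument list per evaluation config, keyed by task label."""
    return {
        task: ["./tools/dist_test.sh", config, ckpt, str(num_gpus)]
        for task, config in EVAL_CONFIGS.items()
    }


def run_all(ckpt, num_gpus):
    """Run each evaluation in turn; stop at the first failing command."""
    for task, cmd in build_eval_commands(ckpt, num_gpus).items():
        print(f"[{task}] {' '.join(cmd)}")
        subprocess.run(cmd, check=True)
```

For example, `run_all("rapsam_r50_12e.pth", 8)` would launch the three evaluations on 8 GPUs from the repository root.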

The code will be released soon. Please stay tuned.

@article{xu2024rapsam,

title={RAP-SAM: Towards Real-Time All-Purpose Segment Anything},

author={Shilin Xu and Haobo Yuan and Qingyu Shi and Lu Qi and Jingbo Wang and Yibo Yang and Yining Li and Kai Chen and Yunhai Tong and Bernard Ghanem and Xiangtai Li and Ming-Hsuan Yang},

journal={arXiv preprint},

year={2024}

}

MIT license