Shilin Xu* · Xiangtai Li* · Size Wu · Wenwei Zhang · Yunhai Tong · Chen Change Loy

PKU, S-Lab (MMLab@NTU)

Xiangtai Li is the project leader and corresponding author.

For the detailed comparison results, see [Project Page].

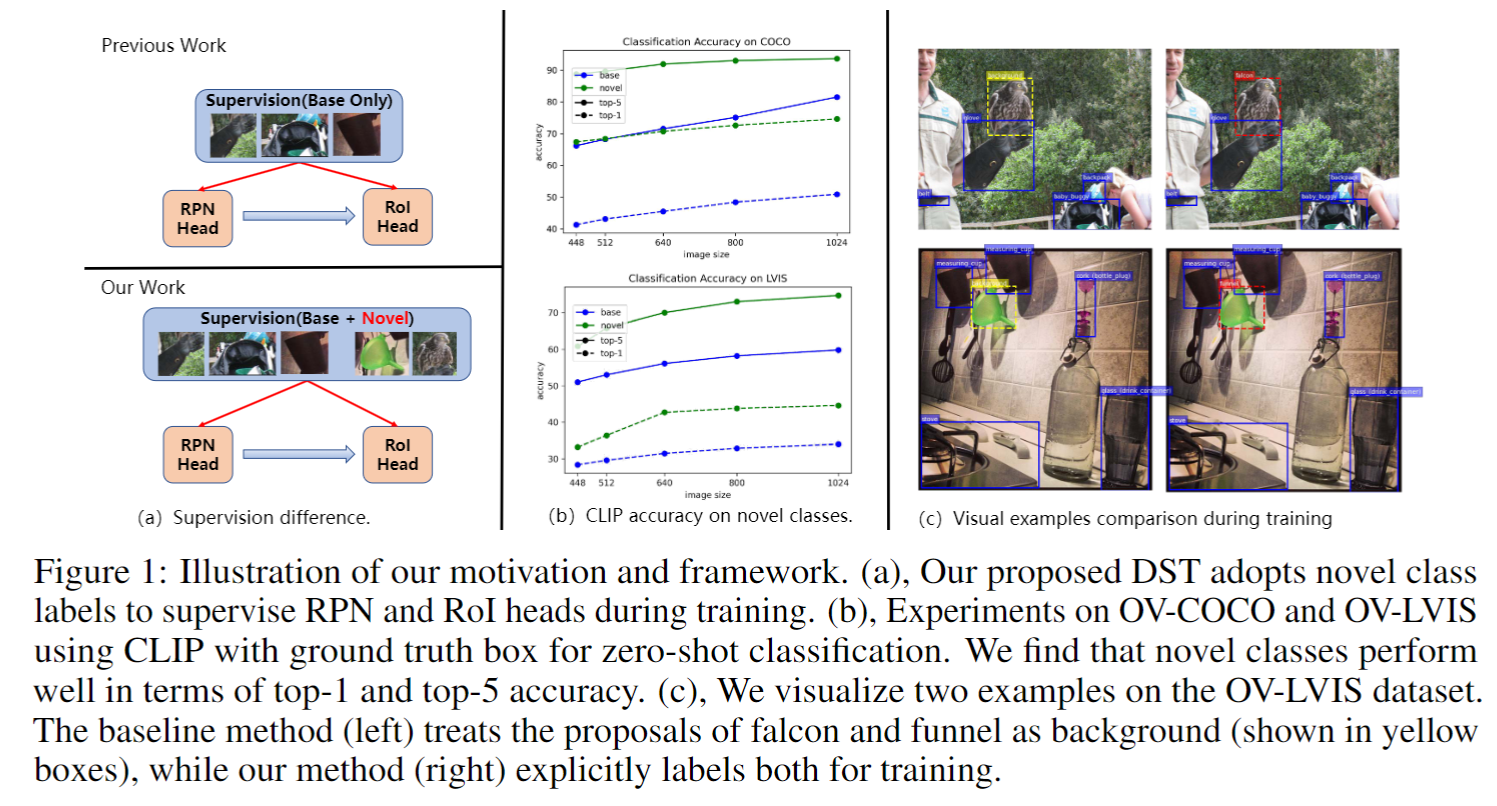

This paper presents a novel method for open-vocabulary object detection (OVOD) that aims to detect objects *beyond* the set of categories observed during training.

We propose a dynamic self-training strategy that leverages the zero-shot classification capabilities of pre-trained vision-language models, such as CLIP, to classify proposals directly as novel classes. Unlike previous works, which ignore novel classes during training and rely solely on the region proposal network (RPN) to detect novel objects, our method selectively filters proposals according to specific design criteria. The selected proposals serve as pseudo labels for novel classes during training, allowing the self-training strategy to improve the recall and accuracy of novel classes without additional annotations or datasets. Empirical evaluations on the LVIS and COCO datasets show significant improvements over the baseline with no additional parameters or computational cost at inference. Notably, our method achieves a 1.7% improvement over the previous F-VLM method on the LVIS validation set. Moreover, combined with offline pseudo-label generation, our method improves strong baselines by over 10% mAP on COCO.
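
The pseudo-labeling idea above can be illustrated with a minimal sketch. It assumes each proposal already carries CLIP zero-shot scores over all base and novel classes. Because the exact filtering criteria are not spelled out here, the confidence threshold `score_thr`, the IoU threshold `iou_thr`, and the use of class-wise greedy NMS are hypothetical stand-ins, and `select_novel_pseudo_labels` is an illustrative name, not a function from the DST-Det code.

```python
from typing import Dict, List, Sequence, Set, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def select_novel_pseudo_labels(
    boxes: Sequence[Box],
    clip_scores: Sequence[Sequence[float]],  # per-proposal scores over all classes
    novel_ids: Set[int],
    score_thr: float = 0.8,  # hypothetical confidence threshold
    iou_thr: float = 0.5,    # hypothetical NMS threshold
) -> List[Dict]:
    """Keep proposals whose top CLIP class is a confident novel class, then apply class-wise NMS."""
    candidates = []
    for box, scores in zip(boxes, clip_scores):
        label = max(range(len(scores)), key=lambda c: scores[c])
        if label in novel_ids and scores[label] >= score_thr:
            candidates.append({"box": box, "label": label, "score": scores[label]})
    # Greedy NMS within each novel class, highest score first.
    candidates.sort(key=lambda d: d["score"], reverse=True)
    kept: List[Dict] = []
    for cand in candidates:
        if all(k["label"] != cand["label"] or iou(k["box"], cand["box"]) < iou_thr for k in kept):
            kept.append(cand)
    return kept


# Toy example: class 0 is a base class, classes 1 and 2 are novel.
boxes = [(0, 0, 10, 10), (1, 1, 10, 10), (20, 20, 30, 30), (40, 40, 50, 50)]
scores = [
    [0.05, 0.90, 0.05],  # confident novel class 1: kept
    [0.10, 0.85, 0.05],  # overlaps the first box (IoU 0.81): suppressed
    [0.70, 0.20, 0.10],  # top class is a base class: ignored
    [0.10, 0.30, 0.60],  # novel class 2 but below the threshold: dropped
]
labels = select_novel_pseudo_labels(boxes, scores, novel_ids={1, 2})
print(labels)
```

In this toy run only the first proposal survives as a pseudo label for class 1.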

The detection framework is built on MMDetection 2.x. To install MMCV and MMDetection 2.x, run:

git clone https://github.com/open-mmlab/mmcv.git

cd mmcv

git checkout v1.7.0

MMCV_WITH_OPS=1 pip install -e . -v

cd ..

git clone https://github.com/open-mmlab/mmdetection.git

cd mmdetection

git checkout v2.28.1

pip install -e . -v

This project uses EVA-CLIP, so run the following commands to install the required packages:

pip install -v -U git+https://github.com/facebookresearch/xformers.git@main#egg=xformers

pip install -e . -v
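
After installation, a quick sanity check can confirm that the pinned packages import and report the versions checked out above (mmcv v1.7.0, mmdet v2.28.1). This is a minimal sketch: `check_versions` is a hypothetical helper, it reads each module's `__version__` attribute when present, and it only checks that xformers imports, since no xformers version is pinned here.

```python
import importlib
from typing import Dict, Optional


def check_versions(expected: Dict[str, Optional[str]]) -> Dict[str, str]:
    """Return 'ok', 'missing', or a mismatch note for each module name."""
    report = {}
    for name, want in expected.items():
        try:
            module = importlib.import_module(name)
        except ImportError:
            report[name] = "missing"
            continue
        found = getattr(module, "__version__", "unknown")
        # A pin of None means "any version is acceptable".
        if want is None or found == want:
            report[name] = "ok"
        else:
            report[name] = f"expected {want}, found {found}"
    return report


if __name__ == "__main__":
    # Versions from the git checkouts above; xformers is only checked for presence.
    pins = {"mmcv": "1.7.0", "mmdet": "2.28.1", "xformers": None}
    for name, status in check_versions(pins).items():
        print(f"{name}: {status}")
```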

We conduct experiments on the COCO and LVIS datasets. Some preprocessed JSON files are provided on Drive. Organize the data as follows:

├── data
│   ├── coco
│   │   ├── annotations
│   │   │   ├── instances_train2017.json
│   │   │   ├── panoptic_train2017.json
│   │   │   ├── panoptic_train2017
│   │   ├── train2017
│   │   ├── val2017
│   │   ├── zero-shot  # download from the Drive
│   │   │   ├── instances_val2017_all_2.json
│   ├── lvis_v1
│   │   ├── annotations
│   │   │   ├── lvis_v1_train_seen_1203_cat.json  # download from the Drive
│   │   │   ├── lvis_v1_val.json
│   │   ├── train2017  # same as coco
│   │   ├── val2017  # same as coco

Download the pretrained models from here and organize them as follows:

checkpoints
├── eva_vitb16_coco_clipself_proposals.pt
├── eva_vitl14_coco_clipself_proposals.pt
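
Before training, it can help to verify that the data and checkpoint layout matches the trees above. This is a minimal sketch: `find_missing` and `EXPECTED_PATHS` are hypothetical names, the paths are assumed to be relative to the repository root, and `instances_val2017_all_2.json` is read as living inside `data/coco/zero-shot`. Symlinked directories (e.g. the LVIS image folders shared with COCO) count as present because `Path.exists()` follows links.

```python
from pathlib import Path
from typing import List, Sequence

# Paths transcribed from the directory trees above (assumed relative to the repo root).
EXPECTED_PATHS = (
    "data/coco/annotations/instances_train2017.json",
    "data/coco/annotations/panoptic_train2017.json",
    "data/coco/annotations/panoptic_train2017",
    "data/coco/train2017",
    "data/coco/val2017",
    "data/coco/zero-shot/instances_val2017_all_2.json",
    "data/lvis_v1/annotations/lvis_v1_train_seen_1203_cat.json",
    "data/lvis_v1/annotations/lvis_v1_val.json",
    "data/lvis_v1/train2017",
    "data/lvis_v1/val2017",
    "checkpoints/eva_vitb16_coco_clipself_proposals.pt",
    "checkpoints/eva_vitl14_coco_clipself_proposals.pt",
)


def find_missing(root: str = ".", expected: Sequence[str] = EXPECTED_PATHS) -> List[str]:
    """Return the expected relative paths that do not exist under `root`."""
    base = Path(root)
    return [p for p in expected if not (base / p).exists()]


if __name__ == "__main__":
    missing = find_missing()
    if missing:
        print("Missing paths:")
        for p in missing:
            print("  " + p)
    else:
        print("Data and checkpoint layout looks complete.")
```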

To train the model, run the following command, where $NUM_GPUS is the number of GPUs:

bash tools/dist_train.sh configs/fvit/coco/fvit_vitl14_upsample_fpn_bs64_3e_ovcoco_eva_original.py $NUM_GPUS
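
In MMDetection 2.x the effective batch size is `samples_per_gpu` multiplied by the number of GPUs, so changing $NUM_GPUS changes the total batch unless `samples_per_gpu` is adjusted in the config. Assuming the `bs64` token in the config name denotes a total batch size of 64 (an interpretation of the file name, so check the config itself), the hypothetical helper below shows the per-GPU arithmetic and rejects GPU counts that do not split the batch evenly.

```python
def per_gpu_batch_size(total_batch: int, num_gpus: int) -> int:
    """Images per GPU needed to keep `total_batch` constant across `num_gpus` GPUs."""
    if num_gpus <= 0:
        raise ValueError("num_gpus must be positive")
    if total_batch % num_gpus != 0:
        raise ValueError(f"{total_batch} images cannot be split evenly across {num_gpus} GPUs")
    return total_batch // num_gpus


# Assumed total batch size, read from the "bs64" token in the config file name.
TOTAL_BATCH = 64
for gpus in (1, 2, 4, 8):
    print(f"{gpus} GPU(s): samples_per_gpu = {per_gpu_batch_size(TOTAL_BATCH, gpus)}")
```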

Download the checkpoint file from 🤗 Hugging Face and run the following command to reproduce our results, where $CKPT is the path to the downloaded checkpoint and 8 is the number of GPUs:

bash tools/dist_test.sh configs/fvit/coco/fvit_vitl14_upsample_fpn_bs64_3e_ovcoco_eva_original.py $CKPT 8 --eval bbox


If you find DST-Det helpful in your research, please consider citing it:

@article{xu2023dst,
  title={DST-Det: Simple Dynamic Self-Training for Open-Vocabulary Object Detection},
  author={Xu, Shilin and Li, Xiangtai and Wu, Size and Zhang, Wenwei and Li, Yining and Cheng, Guangliang and Tong, Yunhai and Chen, Kai and Loy, Chen Change},
  journal={arXiv preprint arXiv:2310.01393},
  year={2023}
}

This project is released under the MIT license.

We thank MMDetection, open-clip, and CLIPSelf for their valuable codebases.