Title: Semantic and Spatial Adaptive Pixel-level Classifier for Semantic Segmentation

Authors: Xiaowen Ma, Zhenliang Ni and Xinghao Chen

Citation:

    @misc{ma2024semantic,
      title={Semantic and Spatial Adaptive Pixel-level Classifier for Semantic Segmentation},
      author={Xiaowen Ma and Zhenliang Ni and Xinghao Chen},
      year={2024},
      eprint={2405.06525},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
    }

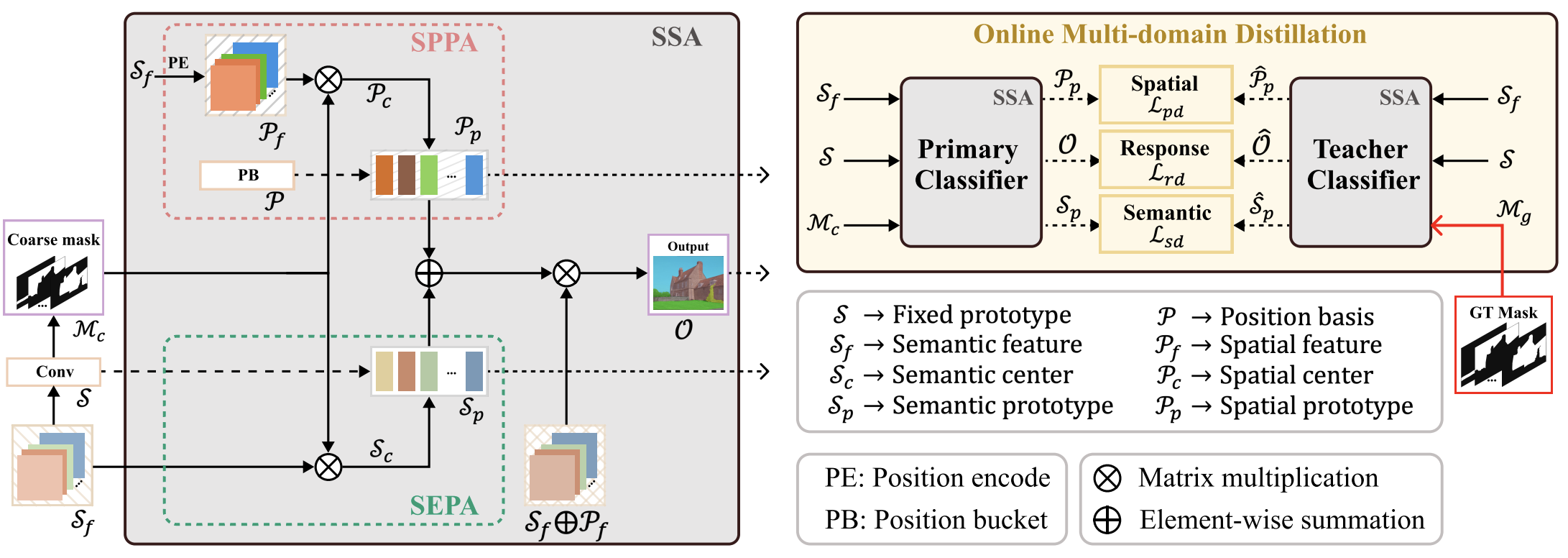

SSA has three key parts: semantic prototype adaptation (SEPA), spatial prototype adaptation (SPPA), and online multi-domain distillation.

Iters: 160000, Input size: 512x512, Batch size: 16


General models

| Method (+SSA) | Backbone | Latency (ms) | FLOPs (G) | mIoU (ss) |
| --- | --- | --- | --- | --- |
| OCRNet | HRNet-W48 | 69.3 | 165.0 | 47.67 |
| UperNet | Swin-T | 54.3 | 236.3 | 47.56 |
| SegFormer | MiT-B5 | 70.1 | 52.6 | 50.74 |
| UperNet | Swin-L | 107.3 | 405.2 | 52.69 |

Lightweight models

| Method (+SSA) | Backbone | Iters | Latency (ms) | FLOPs (G) | mIoU (ss) |
| --- | --- | --- | --- | --- | --- |
| AFFormer-B | AFFormer-B | 160000 | 26.0 | 4.4 | 42.74 |
| SeaFormer-B | SeaFormer-B | 160000 | 27.3 | 1.8 | 42.46 |
| SegNeXt-T | MSCAN-T | 160000 | 23.3 | 6.3 | 43.90 |
| SeaFormer-L | SeaFormer-L | 160000 | 29.9 | 6.4 | 45.36 |

Iters: 80000, Input size: 512x512, Batch size: 16


General models

| Method (+SSA) | Backbone | Latency (ms) | FLOPs (G) | mIoU (ss) |
| --- | --- | --- | --- | --- |
| OCRNet | HRNet-W48 | 69.3 | 165.0 | 37.94 |
| UperNet | Swin-T | 54.3 | 236.3 | 42.30 |
| SegFormer | MiT-B5 | 70.1 | 52.6 | 45.55 |
| UperNet | Swin-L | 107.3 | 405.2 | 48.94 |

Lightweight models

| Method (+SSA) | Backbone | Iters | Latency (ms) | FLOPs (G) | mIoU (ss) |
| --- | --- | --- | --- | --- | --- |
| AFFormer-B | AFFormer-B | 80000 | 26.0 | 4.4 | 36.40 |
| SeaFormer-B | SeaFormer-B | 80000 | 27.3 | 1.8 | 35.92 |
| SegNeXt-T | MSCAN-T | 80000 | 23.3 | 6.3 | 38.91 |
| SeaFormer-L | SeaFormer-L | 80000 | 29.9 | 6.4 | 38.48 |

Iters: 80000, Input size: 480x480, Batch size: 16


General models

| Method (+SSA) | Backbone | Latency (ms) | FLOPs (G) | mIoU (ss) |
| --- | --- | --- | --- | --- |
| OCRNet | HRNet-W48 | 69.3 | 143.3 | 50.21 |
| UperNet | Swin-T | 54.3 | 207.7 | 55.11 |
| SegFormer | MiT-B5 | 70.1 | 45.8 | 59.14 |
| UperNet | Swin-L | 107.3 | 363.2 | 61.83 |

Lightweight models

| Method (+SSA) | Backbone | Latency (ms) | FLOPs (G) | mIoU (ss) |
| --- | --- | --- | --- | --- |
| AFFormer-B | AFFormer-B | 26.0 | 4.4 | 49.72 |
| SeaFormer-B | SeaFormer-B | 27.3 | 1.8 | 47.00 |
| SegNeXt-T | MSCAN-T | 23.3 | 6.3 | 52.58 |
| SeaFormer-L | SeaFormer-L | 29.9 | 6.4 | 49.66 |
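The mIoU (ss) column reports mean intersection-over-union, where "ss" conventionally denotes single-scale testing. As a reminder of what the metric measures, here is a minimal pure-Python sketch. It assumes flat sequences of integer class labels and an ignore index of 255; the helper name `mean_iou` is illustrative and is not part of this repo or of mmsegmentation, whose evaluation accumulates areas over the whole dataset before averaging.

```python
from typing import List, Sequence


def mean_iou(pred: Sequence[int], target: Sequence[int],
             num_classes: int, ignore_index: int = 255) -> float:
    """Mean IoU over the classes that occur in pred or target (hypothetical helper)."""
    intersect = [0] * num_classes
    pred_area = [0] * num_classes
    target_area = [0] * num_classes
    for p, t in zip(pred, target):
        if t == ignore_index:
            continue  # skip unlabeled pixels
        pred_area[p] += 1
        target_area[t] += 1
        if p == t:
            intersect[p] += 1
    ious: List[float] = []
    for c in range(num_classes):
        union = pred_area[c] + target_area[c] - intersect[c]
        if union > 0:  # classes absent from both pred and target are left out
            ious.append(intersect[c] / union)
    return sum(ious) / len(ious) if ious else 0.0


if __name__ == "__main__":
    pred = [0, 0, 1, 1, 2, 2]
    target = [0, 1, 1, 1, 2, 255]
    # Scale by 100 to match the percentage convention used in the tables.
    print(f"mIoU = {100 * mean_iou(pred, target, num_classes=3):.2f}")
```

In the toy example the per-class IoUs are 1/2, 2/3 and 1, so the mean is 13/18.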


Environment

    conda create --name ssa python=3.8 -y
    conda activate ssa
    pip install torch==1.8.2+cu102 torchvision==0.9.2+cu102 torchaudio==0.8.2
    pip install timm==0.6.13
    pip install mmcv-full==1.7.0
    pip install opencv-python==4.1.2.30
    pip install "mmsegmentation==0.30.0"

SSA is built on mmsegmentation 0.30.0; refer to the mmsegmentation documentation for data preparation.


Train

    # Single-gpu training
    python train.py configs/swin/upernet_swin_tiny_ade20k_ssa.py

    # Multi-gpu (4-gpu) training
    bash dist_train.sh configs/swin/upernet_swin_tiny_ade20k_ssa.py 4


Test

    # Single-gpu testing
    python test.py configs/swin/upernet_swin_tiny_ade20k_ssa.py ${CHECKPOINT_FILE} --eval mIoU

    # Multi-gpu (4-gpu) testing
    bash dist_test.sh configs/swin/upernet_swin_tiny_ade20k_ssa.py ${CHECKPOINT_FILE} 4 --eval mIoU


Benchmark

    python benchmark.py configs/swin/upernet_swin_tiny_ade20k_ssa.py ${CHECKPOINT_FILE} --repeat-times 5
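The `--repeat-times 5` flag suggests that timing is repeated and the results averaged. The idea can be sketched in plain Python as below. This is an illustration under stated assumptions, not the logic of `benchmark.py` itself: `run_once` is a hypothetical callable standing in for one forward pass, and the warm-up and iteration counts are arbitrary. A real GPU benchmark would also need device synchronization before reading the clock.

```python
import time
from statistics import mean
from typing import Callable, List


def average_latency_ms(run_once: Callable[[], None], repeat_times: int = 5,
                       iters_per_repeat: int = 20, warmup: int = 5) -> float:
    """Average per-call latency in milliseconds over several timed repeats."""
    per_repeat: List[float] = []
    for _ in range(repeat_times):
        for _ in range(warmup):
            run_once()  # warm-up calls are excluded from the timing
        start = time.perf_counter()
        for _ in range(iters_per_repeat):
            run_once()
        elapsed = time.perf_counter() - start
        per_repeat.append(1000.0 * elapsed / iters_per_repeat)
    return mean(per_repeat)


if __name__ == "__main__":
    # Stand-in workload; replace with a real forward pass (assumption).
    latency = average_latency_ms(lambda: sum(range(10_000)))
    print(f"latency: {latency:.3f} ms")
```

Averaging over repeats smooths out run-to-run noise, which matters when comparing models whose latencies differ by only a few milliseconds.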

Thanks to the following open-source repositories:

SeaFormer, CAC, AFFormer, SegNeXt, mmsegmentation