Xi Chen · Lianghua Huang · Yu Liu · Yujun Shen · Deli Zhao · Hengshuang Zhao

The University of Hong Kong | Alibaba Group | Ant Group

- [2023.12.17] Released training, inference, and demo code, and the pretrained checkpoint.

- [Soon] Release the new version of the paper.

- [Soon] Support an online demo.

- [On-going] Scale up the training data and release stronger models as the foundation model for downstream region-to-region generation tasks.

- [On-going] Release specifically designed models for downstream tasks like virtual try-on, face swap, text and logo transfer, etc.

Install with conda:

conda env create -f environment.yaml

conda activate anydoor

or pip:

pip install -r requirements.txt

Additionally, for training, you need to install panopticapi, pycocotools, and lvis-api.

pip install git+https://github.com/cocodataset/panopticapi.git

pip install pycocotools -i https://pypi.douban.com/simple

pip install lvis

Download the AnyDoor checkpoint:

Note: We include all the optimizer params for Adam, so the checkpoint is big. You can keep only the "state_dict" to make it much smaller.

Download the DINOv2 checkpoint and set its path in /configs/anydoor.yaml (line 83).

Download Stable Diffusion V2.1 if you want to train from scratch.
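The note on checkpoint size above suggests keeping only the "state_dict". A minimal sketch of how that might be done with PyTorch, assuming the checkpoint is a dict written by torch.save with the weights under a "state_dict" key. The function names and the file paths in the usage comment are placeholders for illustration, not names from this repository.

```python
from typing import Any, Dict


def keep_state_dict_only(checkpoint: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new dict that holds only the model weights.

    Assumes the weights live under the "state_dict" key.
    """
    if "state_dict" not in checkpoint:
        raise KeyError('checkpoint has no "state_dict" entry')
    return {"state_dict": checkpoint["state_dict"]}


def prune_checkpoint_file(src_path: str, dst_path: str) -> None:
    """Load a full checkpoint, drop everything but the weights, and save it."""
    import torch  # imported lazily so the helper above works without PyTorch

    # map_location="cpu" avoids needing a GPU just to rewrite the file.
    checkpoint = torch.load(src_path, map_location="cpu")
    torch.save(keep_state_dict_only(checkpoint), dst_path)


# Usage (placeholder paths, replace with your own):
# prune_checkpoint_file("path/to/full_checkpoint.ckpt", "path/to/pruned_checkpoint.ckpt")
```

Recent PyTorch versions may refuse to load checkpoints that contain non-tensor objects unless torch.load is called with weights_only=False; only do that for files you trust.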

We provide inference code in run_inference.py (from line 222 onward) for both single-image inference and dataset inference (VITON-HD Test). Modify the data paths, then run the following command. The generated results are saved in examples/TestDreamBooth/GEN for the single image, and in VITONGEN for VITON-HD Test.

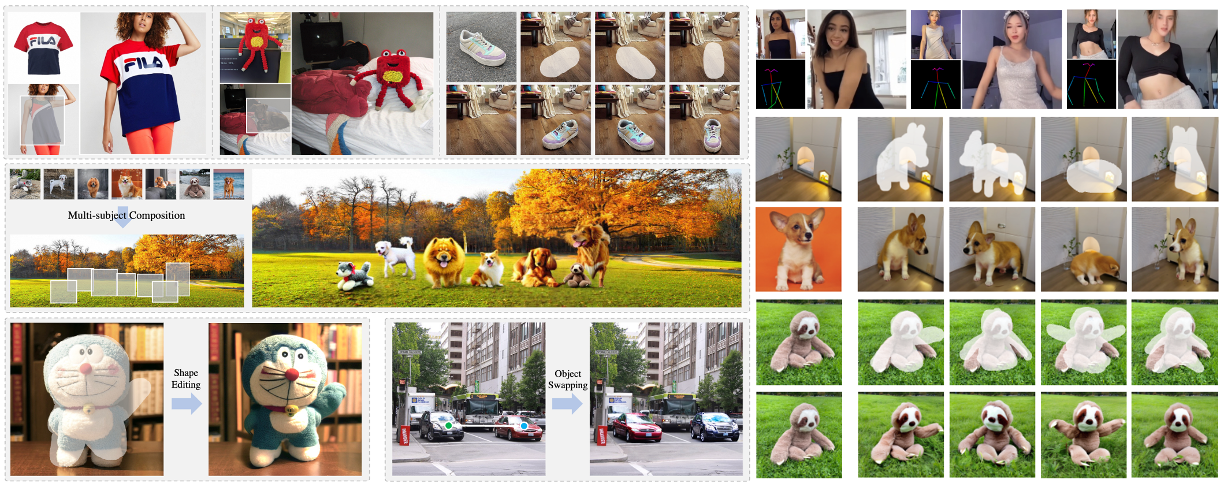

python run_inference.pyThe inferenced results on VITON-Test would be like [garment, ground truth, generation].

Note that AnyDoor does not contain any specific design or tuning for try-on. We think adding skeleton information or a warped garment, and fine-tuning on try-on data, would make the results better :)
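To inspect the [garment, ground truth, generation] results panel by panel, one option is to compute crop boxes for each panel. The sketch below assumes the three panels are concatenated horizontally with equal widths, which the text above does not state explicitly; panel_boxes is a hypothetical helper, and the boxes follow the (left, upper, right, lower) convention of Pillow's Image.crop.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (left, upper, right, lower)


def panel_boxes(width: int, height: int, num_panels: int = 3) -> List[Box]:
    """Split an image of the given size into equal-width side-by-side crop boxes."""
    if num_panels <= 0:
        raise ValueError("num_panels must be positive")
    if width % num_panels != 0:
        raise ValueError("width is not divisible by the number of panels")
    panel_width = width // num_panels
    return [
        (i * panel_width, 0, (i + 1) * panel_width, height)
        for i in range(num_panels)
    ]


# Example: a 1536x512 result image yields three 512x512 panels.
garment_box, ground_truth_box, generation_box = panel_boxes(1536, 512)
print(generation_box)  # (1024, 0, 1536, 512)
```

Each box can then be passed to Image.crop on the loaded result image to extract the corresponding panel.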


Our evaluation data for DreamBooth and COCOEE can be downloaded from Google Drive:

- URL: [to be released]

Currently, we support a local gradio demo. To launch it, first set the path to the pretrained model in /configs/demo.yaml and the path to DINOv2 in /configs/anydoor.yaml (line 83).

Afterwards, run the script:

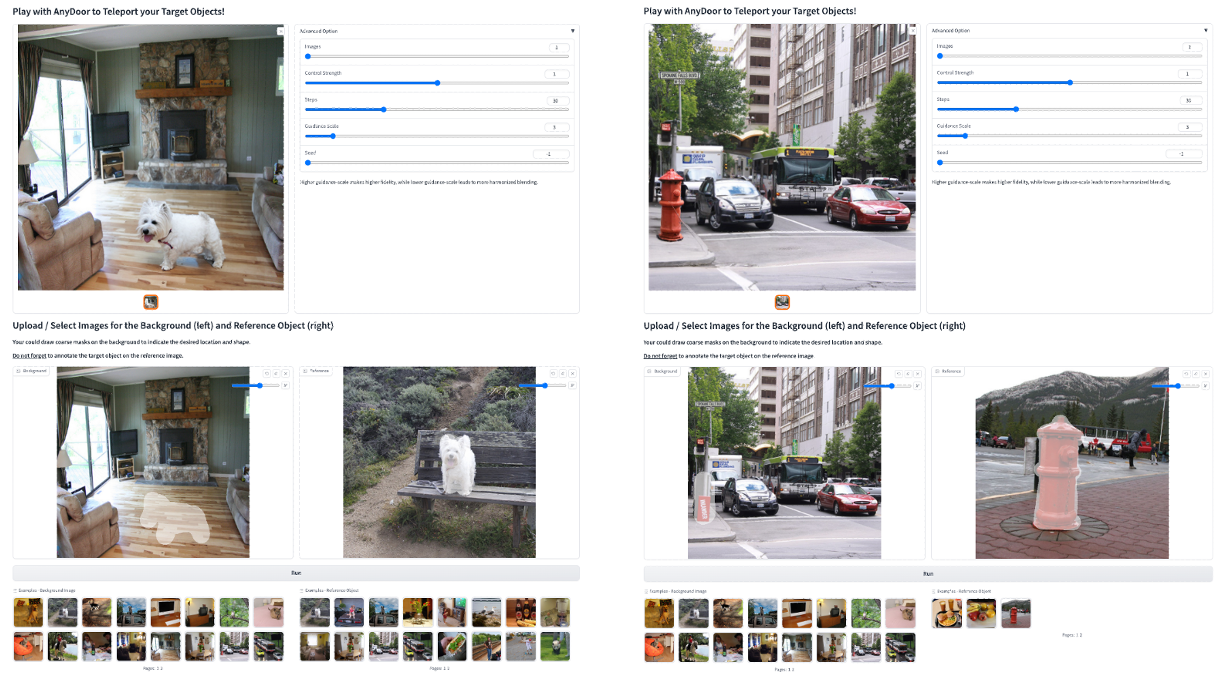

python run_gradio_demo.pyThe gradio demo would look like the UI shown below:

- 📢 This version requires users to annotate the mask of the target object; a mask that is too coarse will degrade the generation quality. We plan to add a mask refinement module or interactive segmentation modules to the demo.

- 📢 We provide a segmentation module to refine the user-annotated reference mask. You can disable it by setting use_interactive_seg: False in /configs/demo.yaml.


- Download the datasets listed in /configs/datasets.yaml and modify the corresponding paths.

- You can prepare your own datasets following the formats of the files in ./datasets.

- If you use the UVO dataset, you need to process the json following ./datasets/Preprocess/uvo_process.py.

- You can refer to run_dataset_debug.py to verify that your data is correct.
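Before running the dataset check, it can help to confirm that the paths you edited actually exist. A minimal sketch, assuming the dataset config loads into nested dicts and lists and that every string value in it is a filesystem path; the layout of /configs/datasets.yaml is not described here, so both helper functions and that assumption are illustrative only.

```python
import os
from typing import Any, Iterator, List, Tuple


def iter_string_entries(node: Any, prefix: str = "") -> Iterator[Tuple[str, str]]:
    """Yield (dotted_key, value) for every string found in a nested dict/list."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{prefix}.{key}" if prefix else str(key)
            yield from iter_string_entries(value, child)
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from iter_string_entries(value, f"{prefix}[{index}]")
    elif isinstance(node, str):
        yield prefix, node


def missing_paths(config: Any) -> List[Tuple[str, str]]:
    """Return the (key, path) pairs whose path does not exist on disk."""
    return [
        (key, path)
        for key, path in iter_string_entries(config)
        if not os.path.exists(path)
    ]


# Usage with PyYAML (assumed to be installed):
# import yaml
# with open("configs/datasets.yaml") as f:
#     for key, path in missing_paths(yaml.safe_load(f)):
#         print(f"missing: {key} -> {path}")
```

If the config also holds non-path strings, they will be reported as missing, so treat the output as a hint rather than a verdict.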

- If you would like to train from scratch, convert the downloaded SD weights to a control copy by running:

sh ./scripts/convert_weight.sh

- Modify the training hyper-parameters in run_train_anydoor.py (lines 26-34) according to your training resources. We verified that using 2 A100 GPUs with batch accumulation=1 gives satisfactory results after 300,000 iterations.

- Start training by executing:

sh ./scripts/train.sh

@bdsqlsz

- AnyDoor for windows: https://github.com/sdbds/AnyDoor-for-windows

- Pruned model: https://modelscope.cn/models/bdsqlsz/AnyDoor-Pruned/summary

This project is developed on the codebase of ControlNet. We appreciate this great work!

If you find this codebase useful for your research, please cite it using the following entry.

@article{chen2023anydoor,

title={Anydoor: Zero-shot object-level image customization},

author={Chen, Xi and Huang, Lianghua and Liu, Yu and Shen, Yujun and Zhao, Deli and Zhao, Hengshuang},

journal={arXiv preprint arXiv:2307.09481},

year={2023}

}