pix2pix

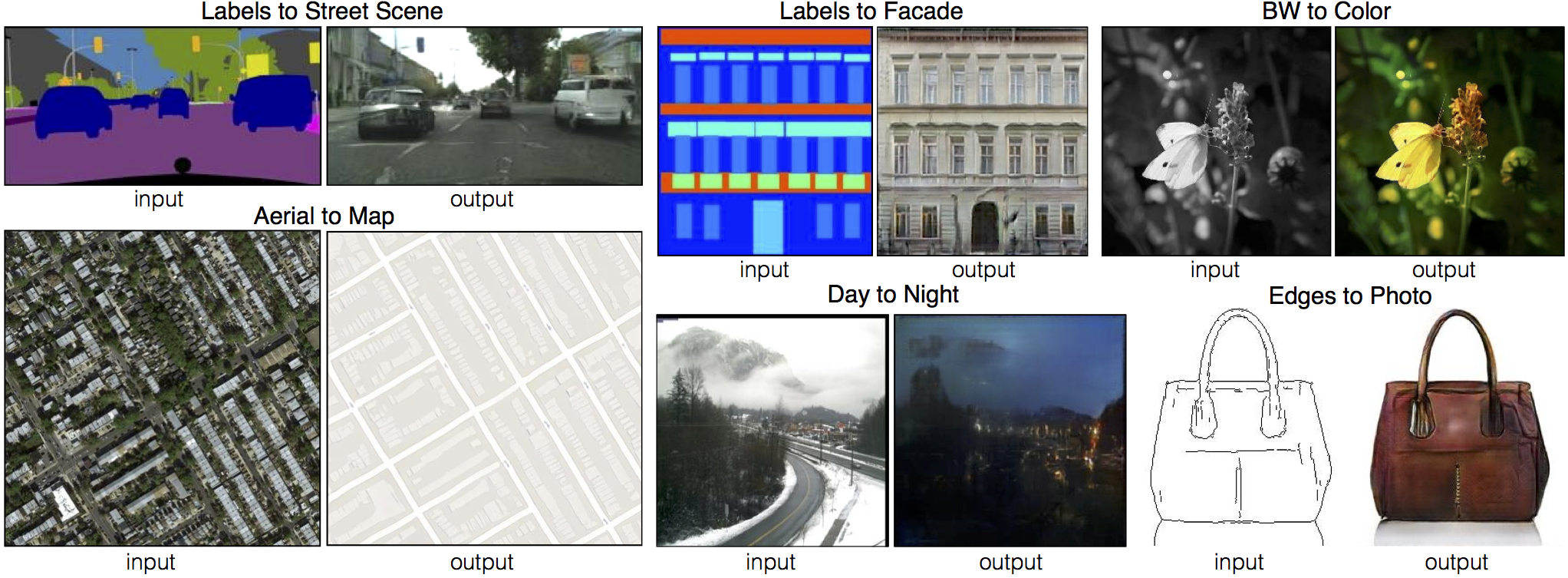

Code for the paper Image-to-Image Translation with Conditional Adversarial Networks. Learns a mapping from input images to output images, as in the example shown above.

On some tasks, decent results can be obtained fairly quickly and on small datasets. For example, to learn to generate facades (example shown above), we trained on just 400 images for about 2 hours (on a single Pascal Titan X GPU).

Setup

Prerequisites

- Linux or OSX

- python with numpy

- NVIDIA GPU, CUDA, and CuDNN (CPU mode and CUDA without CuDNN may work with minimal modification, but untested)

Installation

- Install torch and dependencies from https://github.com/torch/distro

- Clone this repo:

git clone git@github.com:phillipi/pix2pix.git

Setup training and test data

We require training data in the form of pairs of images {A,B}, where A and B are two different depictions of the same underlying scene. For example, these might be pairs {label map, photo} or {bw image, color image}. Then we can learn to translate A to B or B to A:

Create a folder /path/to/data with subfolders A and B. A and B should each have their own subfolders train, val, test, etc. In /path/to/data/A/train, put training images in style A. In /path/to/data/B/train, put the corresponding images in style B. Repeat the same for the other data splits (val, test, etc).

Corresponding images in a pair {A,B} must be the same size and have the same filename, e.g. /path/to/data/A/train/1.jpg is considered to correspond to /path/to/data/B/train/1.jpg.
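Because pairs are matched by filename, it can help to check the folders before combining them. The following is a minimal sketch, not part of this repo: `find_unpaired` is a hypothetical helper name, and it only compares filenames (it does not verify that the two images have the same size).

```python
from pathlib import Path


def find_unpaired(data_root, split="train"):
    """Return (only_in_A, only_in_B): sorted filenames that lack a partner.

    Assumes the layout described above: <data_root>/A/<split> and
    <data_root>/B/<split>, with pairs matched purely by filename.
    """
    root = Path(data_root)
    names_a = {p.name for p in (root / "A" / split).iterdir() if p.is_file()}
    names_b = {p.name for p in (root / "B" / split).iterdir() if p.is_file()}
    return sorted(names_a - names_b), sorted(names_b - names_a)
```

For example, `find_unpaired("/path/to/data", "val")` returns two lists; both are empty when every image in A/val has a same-named counterpart in B/val.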

Once the data is formatted this way, call:

python data/combine_A_and_B.py --fold_A /path/to/data/A --fold_B /path/to/data/B --fold_AB /path/to/data

This will combine each pair of images (A,B) into a single image file, ready for training.
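The text above does not spell out how the two images are arranged in the combined file. As an illustration only, the sketch below assumes a horizontal layout (A on the left, B on the right) and uses plain nested lists instead of real image arrays so that it runs without any image library. `combine_side_by_side` is a hypothetical name, not a function from data/combine_A_and_B.py.

```python
def combine_side_by_side(image_a, image_b):
    """Join two equally sized images horizontally (A left, B right).

    Each image is a list of rows; each row is a list of pixel values.
    The side-by-side layout is an assumption made for illustration.
    """
    if len(image_a) != len(image_b):
        raise ValueError("images must have the same height")
    if any(len(ra) != len(rb) for ra, rb in zip(image_a, image_b)):
        raise ValueError("images must have the same width")
    # Concatenate matching rows so the result is twice as wide.
    return [row_a + row_b for row_a, row_b in zip(image_a, image_b)]
```

Under this assumption, two 2x2 images yield one image with 2 rows and 4 columns, which is why the images in a pair must have the same size.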

Train

DATA_ROOT=/path/to/data/ name=expt_name which_direction=AtoB th train.lua

Switch AtoB to BtoA to train translation in the opposite direction.

Models are saved to ./checkpoints/expt_name (can be changed by modifying opt.checkpoint_dir in train.lua).

See opt in train.lua for additional training options.

Test

DATA_ROOT=/path/to/data/ name=expt_name which_direction=AtoB phase=val th test.lua

This will run the model named expt_name in direction AtoB on all images in /path/to/data/val.

Result images, and a webpage to view them, are saved to ./results/expt_name (can be changed by modifying opt.results_dir in test.lua).

See opt in test.lua for additional testing options.
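The train and test commands above pass their options as environment variables placed before `th`. When launching runs from a script, the same invocation can be assembled programmatically. This is a rough sketch under that reading: `build_invocation` is a hypothetical helper, and it only sets the variables that appear in the commands above (DATA_ROOT, name, which_direction, phase).

```python
import os

VALID_DIRECTIONS = ("AtoB", "BtoA")


def build_invocation(script, data_root, name, which_direction="AtoB", phase=None):
    """Return (argv, env) for running train.lua or test.lua through `th`."""
    if which_direction not in VALID_DIRECTIONS:
        raise ValueError(f"which_direction must be one of {VALID_DIRECTIONS}")
    env = dict(os.environ)  # keep PATH and friends so `th` can be found
    env.update(DATA_ROOT=data_root, name=name, which_direction=which_direction)
    if phase is not None:
        env["phase"] = phase  # e.g. "val", as in the test command above
    return ["th", script], env


# Usage (requires a working torch install, so it is left commented out):
# import subprocess
# argv, env = build_invocation("test.lua", "/path/to/data/", "expt_name", phase="val")
# subprocess.run(argv, env=env, check=True)
```

Rejecting unknown directions up front avoids starting a long training run with a mistyped option.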

Display UI

Optionally, for displaying images during training and test, use the display package.

- Install it with:

luarocks install https://raw.githubusercontent.com/szym/display/master/display-scm-0.rockspec

- Then start the server with:

th -ldisplay.start

- Open this URL in your browser: http://localhost:8000

By default, the server listens on localhost. Pass 0.0.0.0 to allow external connections on any interface:

th -ldisplay.start 8000 0.0.0.0

Then open http://(hostname):(port)/ in your browser to load the remote desktop.

Example usage on facade generation

Let's try an example of training and testing on facade generation, using data from the CMP Facades dataset.

First, grab a copy of the data, already formatted for training:

cd /path/to/data

wget https://people.eecs.berkeley.edu/~isola/pix2pix/facades.tar

tar -xvf facades.tar

rm facades.tar

Next, train:

DATA_ROOT=/path/to/data/facades name=facades_generation which_direction=BtoA th train.lua

Start the display server to view results as the model trains:

th -ldisplay.start 8000 0.0.0.0

Finally, test:

DATA_ROOT=/path/to/data/facades name=facades_generation which_direction=BtoA phase=val th test.lua

The test results will be saved to an html file here: ./results/facades_generation/latest_net_G_val/index.html.

Citation

If you use this code for your research, please cite our paper Image-to-Image Translation with Conditional Adversarial Networks:

@article{pix2pix2016,

title={Image-to-Image Translation with Conditional Adversarial Networks},

author={Isola, Phillip and Zhu, Jun-Yan and Zhou, Tinghui and Efros, Alexei A},

journal={arxiv},

year={2016}

}

Acknowledgments

Code borrows heavily from https://github.com/soumith/dcgan.torch