chat-with-your-doc is a demonstration application that leverages the capabilities of Azure OpenAI GPT-4 and LangChain to enable users to chat with their documents. This repository hosts the codebase, instructions, and resources needed to set up and run the application.

The primary goal of this project is to simplify interaction with documents and to extract valuable information from them using natural language. It is built using LangChain and Azure OpenAI GPT-4/ChatGPT to deliver a smooth and natural conversational experience to the user.

- Upload documents as an external knowledge base for Azure OpenAI GPT-4/ChatGPT.

- Support various formats, including PDF, DOCX, PPTX and TXT.

- Chat with the document content, ask questions, and get relevant answers based on the context.

- User-friendly interface to ensure seamless interaction.

- Show source documents for answers in the web GUI.

- Support streaming of answers.

- Support switching the chain type and streaming LangChain output in the web GUI.

To get started with Chat-with-your-doc, follow these steps:

- Clone the repository:

    git clone https://github.com/linjungz/chat-with-your-doc.git

- Change into the chat-with-your-doc directory:

    cd chat-with-your-doc

- Install the required Python packages:

    pip install -r requirements.txt

- Obtain your Azure OpenAI API key, Endpoint and Deployment Name from the Azure Portal.

- Set the environment variables in the .env file:

    OPENAI_API_BASE=https://your-endpoint.openai.azure.com

    OPENAI_API_KEY=your-key-here

    OPENAI_DEPLOYMENT_NAME=your-deployment-name-here
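As a quick sanity check before running the application, the three settings above can be read and validated with a few lines of Python. This is a minimal sketch using only the standard library; it is not how the repository itself loads its configuration, and the helper names `parse_env_text` and `load_settings` are hypothetical.

```python
import os
from pathlib import Path

# The three variable names come from the .env example above.
REQUIRED_VARS = ("OPENAI_API_BASE", "OPENAI_API_KEY", "OPENAI_DEPLOYMENT_NAME")


def parse_env_text(text: str) -> dict[str, str]:
    """Parse KEY=VALUE lines, skipping blank lines and '#' comments."""
    values = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        # Split on the first '=' only, so values may contain '='.
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def load_settings(env_path: str = ".env") -> dict[str, str]:
    """Combine the .env file (if present) with the process environment."""
    path = Path(env_path)
    file_values = parse_env_text(path.read_text(encoding="utf-8")) if path.exists() else {}
    # Variables already set in the environment take precedence over the file.
    settings = {name: os.environ.get(name, file_values.get(name, "")) for name in REQUIRED_VARS}
    missing = [name for name, value in settings.items() if not value]
    if missing:
        raise RuntimeError(f"Missing settings: {', '.join(missing)}")
    return settings
```

Calling `load_settings()` from the repository root either returns the three values or raises an error naming the ones that are still unset.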

The CLI application supports two commands, ingest and chat. The command-line interface is built with the Python library typer.

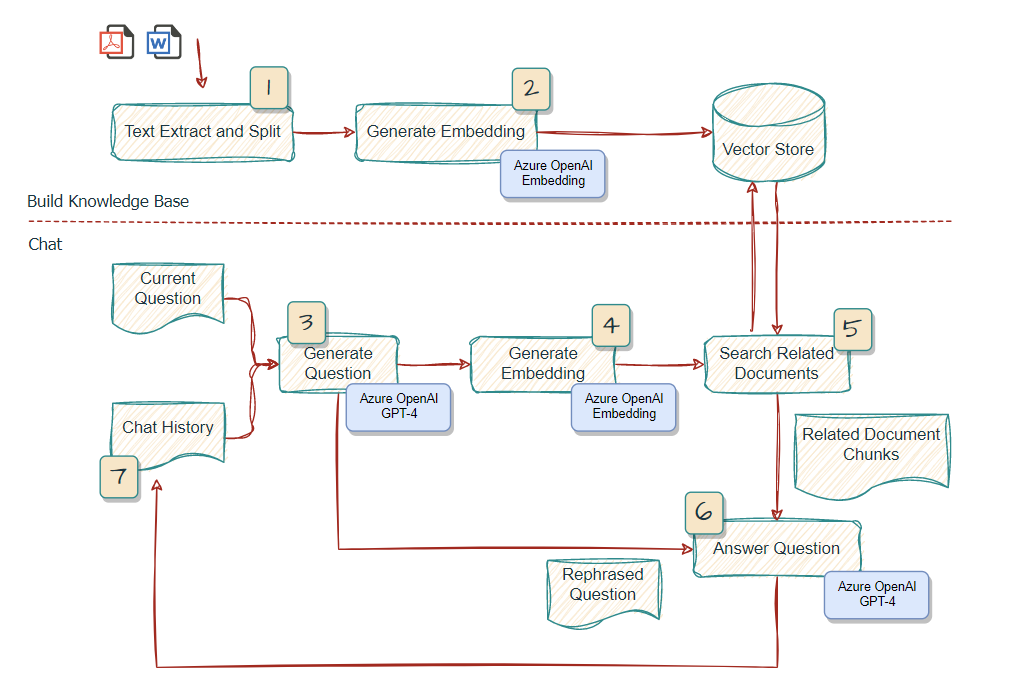

The ingest command takes the documents as input, splits the text, generates embeddings and stores them in a FAISS vector store. The vector store is saved locally so that it can be used later for chat.
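The first two steps of ingestion, finding the documents and splitting their text, can be illustrated with the standard library alone. The sketch below is a simplified stand-in, not the splitter the project uses: it cuts on fixed character counts, and the function names and default sizes are assumptions chosen for illustration. Embedding and FAISS storage are left out.

```python
import glob


def expand_doc_paths(pattern: str) -> list[str]:
    """Expand a glob pattern such as 'docs/*.pdf' into a sorted list of paths."""
    return sorted(glob.glob(pattern, recursive=True))


def split_text(text: str, chunk_size: int = 1000, chunk_overlap: int = 100) -> list[str]:
    """Split text into fixed-size character chunks that overlap their neighbours."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a chunk has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, which tends to help retrieval later on.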

$ python chat_cli.py ingest --help

Usage: chat_cli.py ingest [OPTIONS] DOC_PATH INDEX_NAME

Arguments:

doc_path TEXT Path to the documents to be ingested, support glob pattern [required]

index_name TEXT Name of the index to be created [default: None] [required]

Options:

--help Show this message and exit.

The chat command starts an interactive chat, with the documents in a vector store serving as an external knowledge base. You can choose which knowledge base to load for the chat.

$ python chat_cli.py chat --help

Usage: chat_cli.py chat [OPTIONS]

Options:

--index-name TEXT [default: index]

--help Show this message and exit.
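The shape of the two commands shown in the help output can be mirrored in a short parser. The project builds its CLI with typer; the sketch below deliberately swaps in the standard library's argparse so that it runs without extra dependencies, and `build_parser` is a hypothetical name. It only parses arguments and does not ingest or chat.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a parser with 'ingest' and 'chat' subcommands."""
    parser = argparse.ArgumentParser(prog="chat_cli.py")
    subcommands = parser.add_subparsers(dest="command", required=True)

    # ingest takes two required positional arguments.
    ingest = subcommands.add_parser("ingest", help="Build a local index from documents")
    ingest.add_argument("doc_path", help="Path to the documents to be ingested, glob patterns allowed")
    ingest.add_argument("index_name", help="Name of the index to be created")

    # chat takes one optional argument whose default is 'index'.
    chat = subcommands.add_parser("chat", help="Start an interactive chat")
    chat.add_argument("--index-name", default="index", help="Name of the index to load")
    return parser
```

For example, `build_parser().parse_args(["chat"])` yields `index_name` equal to `index`, matching the default listed in the help text.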

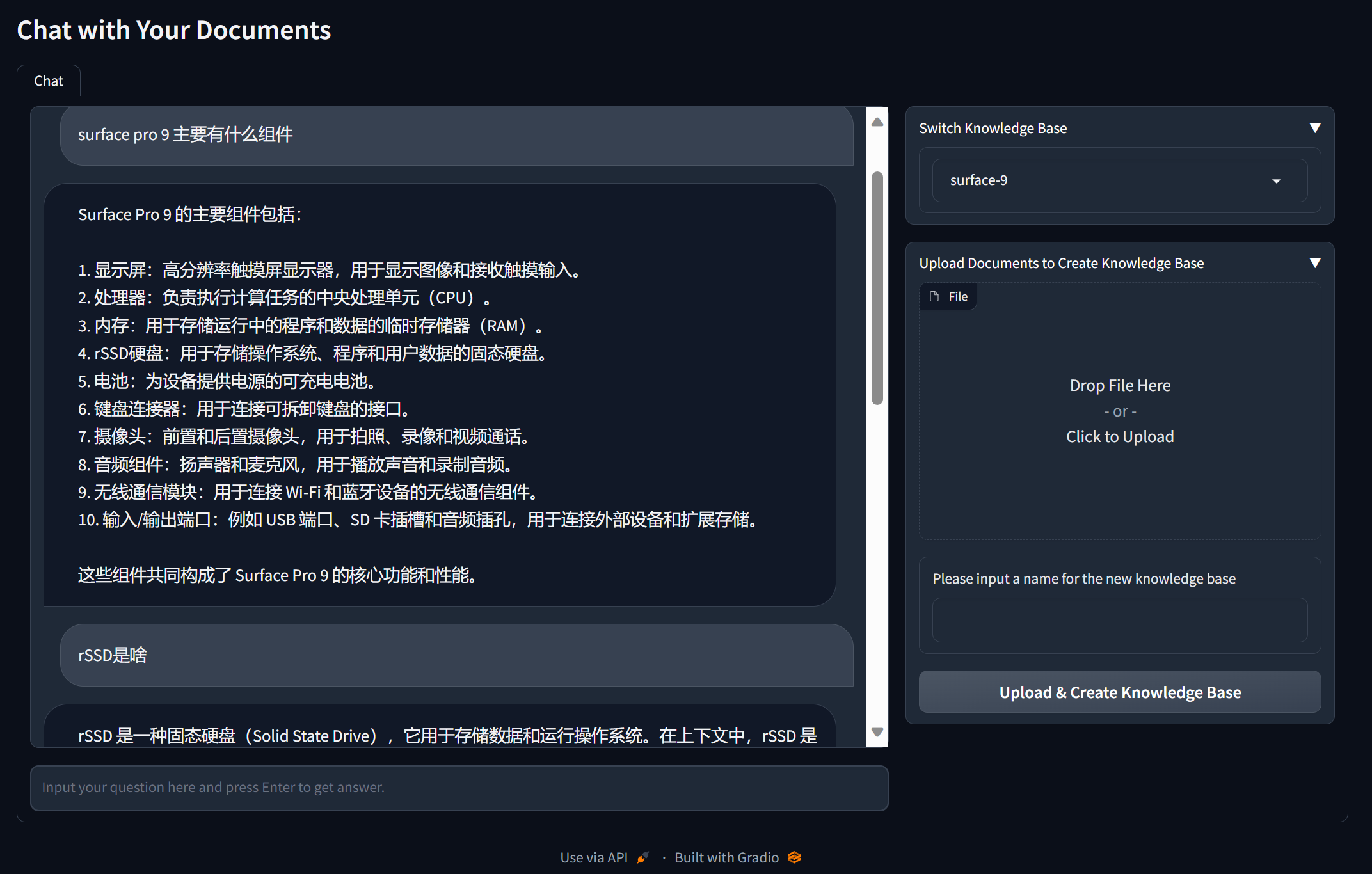

Starting the web GUI initializes the application and opens the user interface in your default web browser. You can now upload a document to create a knowledge base and start a conversation with it.

Gradio is used for quickly building the Web GUI and Hupper is used to ease the development.

For development purposes, you may run python watcher.py to start the web GUI. Alternatively, you may run python chat_web.py directly, without monitoring changes to the source files.

LangChain is used to quickly build a workflow that interacts with Azure OpenAI GPT-4. ConversationalRetrievalChain is used in this particular use case to support chat history. You may refer to this link for more detail.
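The idea behind a conversational retrieval flow is that a follow-up question is first rewritten into a standalone question using the chat history, then used to retrieve document chunks, and finally answered from those chunks. The sketch below shows that control flow in plain Python. It is an illustration under stated assumptions, not LangChain's implementation: `conversational_answer` and its three callable parameters are hypothetical, and in a real setup they would wrap calls to the language model and the vector store.

```python
from typing import Callable

# Each history entry is a (question, answer) pair.
ChatHistory = list[tuple[str, str]]


def conversational_answer(
    question: str,
    chat_history: ChatHistory,
    condense: Callable[[str, ChatHistory], str],
    retrieve: Callable[[str], list[str]],
    answer: Callable[[str, list[str]], str],
) -> tuple[str, list[str]]:
    """Answer a question using retrieved chunks and record it in the history."""
    # With no history, the question already stands on its own.
    standalone = condense(question, chat_history) if chat_history else question
    sources = retrieve(standalone)
    reply = answer(standalone, sources)
    chat_history.append((question, reply))
    return reply, sources
```

Returning the retrieved chunks alongside the reply is what makes it possible to show source documents next to each answer.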

For the chain type, stuff is used by default. For more detail, please refer to this link.
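The stuff chain type places all retrieved chunks into a single prompt and makes one call to the model. A minimal sketch of that prompt construction follows; the function name `build_stuff_prompt` and the prompt wording are assumptions for illustration and do not reproduce LangChain's actual template.

```python
def build_stuff_prompt(question: str, chunks: list[str]) -> str:
    """Concatenate every retrieved chunk into one prompt for a single model call."""
    context = "\n\n".join(chunks)
    return (
        "Use the following context to answer the question.\n\n"
        f"{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )
```

This approach is simple and needs only one model call, but the combined chunks must fit within the model's context window, which is why other chain types exist for larger document sets.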

- The LangChain usage is inspired by gpt4-pdf-chatbot-langchain

- The Web GUI is inspired by langchain-ChatGLM

- The processing of documents is inspired by OpenAIEnterpriseChatBotAndQA

chat-with-your-doc is released under the MIT License. See the LICENSE file for more details.