Build, train, and fine-tune production-ready deep learning SOTA vision models


# Load model with pretrained weights

from super_gradients.training import models

model = models.get("yolox_s", pretrained_weights="coco")

All Computer Vision Models - Pretrained Checkpoints can be found here

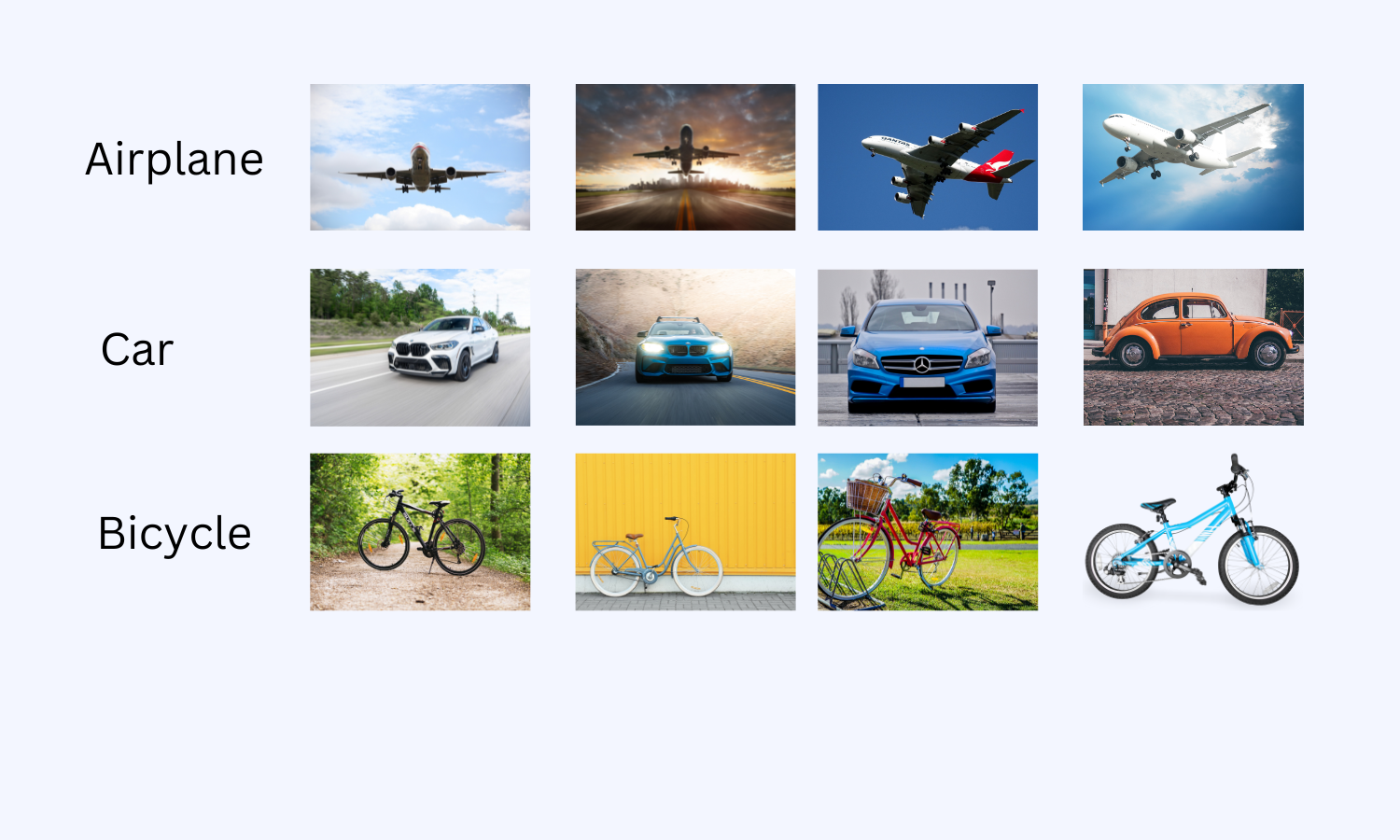

Easily load and fine-tune production-ready, pre-trained SOTA models that incorporate best practices and validated hyper-parameters for achieving best-in-class accuracy. For details, see Getting Started.

python -m super_gradients.train_from_recipe --config-name=imagenet_regnetY architecture=regnetY800 dataset_interface.data_dir=<YOUR_Imagenet_LOCAL_PATH> ckpt_root_dir=<CHECKPOINT_DIRECTORY>

More examples of how and why to use recipes can be found in Recipes.
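The command above appends `key=value` overrides after `--config-name`. As a rough sketch, the same invocation could be assembled from Python, for example inside a launcher script. The helper name `build_recipe_command` and the example paths are hypothetical and not part of SuperGradients; only the module name, recipe name, and override keys are taken from the command above.

```python
import shlex
import sys


def build_recipe_command(config_name, overrides, python=sys.executable):
    """Assemble a train_from_recipe command line (hypothetical helper, not an SG API)."""
    cmd = [python, "-m", "super_gradients.train_from_recipe", f"--config-name={config_name}"]
    # Each override is passed as a single key=value token, as in the command above.
    cmd += [f"{key}={value}" for key, value in overrides.items()]
    return cmd


cmd = build_recipe_command(
    "imagenet_regnetY",
    {
        "architecture": "regnetY800",
        "dataset_interface.data_dir": "/data/imagenet",  # placeholder path
        "ckpt_root_dir": "/checkpoints",  # placeholder path
    },
    python="python",
)
# Print a shell-safe rendering of the command for inspection.
print(" ".join(shlex.quote(token) for token in cmd))
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` once the placeholder paths point at real directories.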

All SuperGradients models are production-ready in the sense that they are compatible with deployment tools such as TensorRT (Nvidia) and OpenVINO (Intel) and can be easily taken into production. With a few lines of code you can integrate the models into your codebase.

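# Load model with pretrained weights

import torch

from super_gradients.training import models

model = models.get("yolox_s", pretrained_weights="coco")

# Prepare model for conversion & create dummy_input

# (assumption: a single 640x640 RGB image; adjust the shape to your use case)

model.eval()

dummy_input = torch.randn(1, 3, 640, 640)

# Convert model to onnx

torch.onnx.export(model, dummy_input, "yolox_s.onnx")

More information on how to take your model to production can be found in Getting Started notebooks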

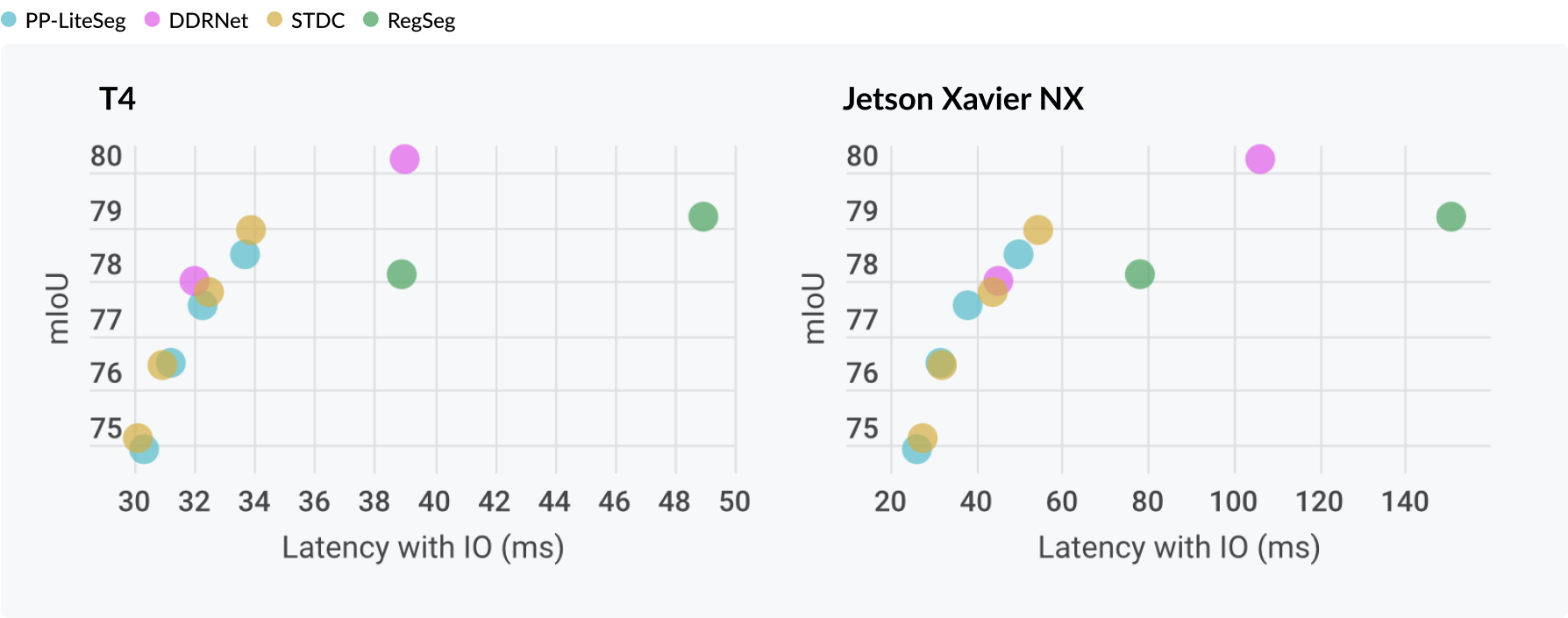

pip install super-gradients

- 【06/9/2022】 PP-LiteSeg - new pre-trained checkpoints for Cityscapes with SOTA mIoU scores (~1.5% above paper)🎯

- 【07/08/2022】DDRNet23 - new pre-trained checkpoints and recipes for Cityscapes with SOTA mIoU scores (~1% above paper)🎯

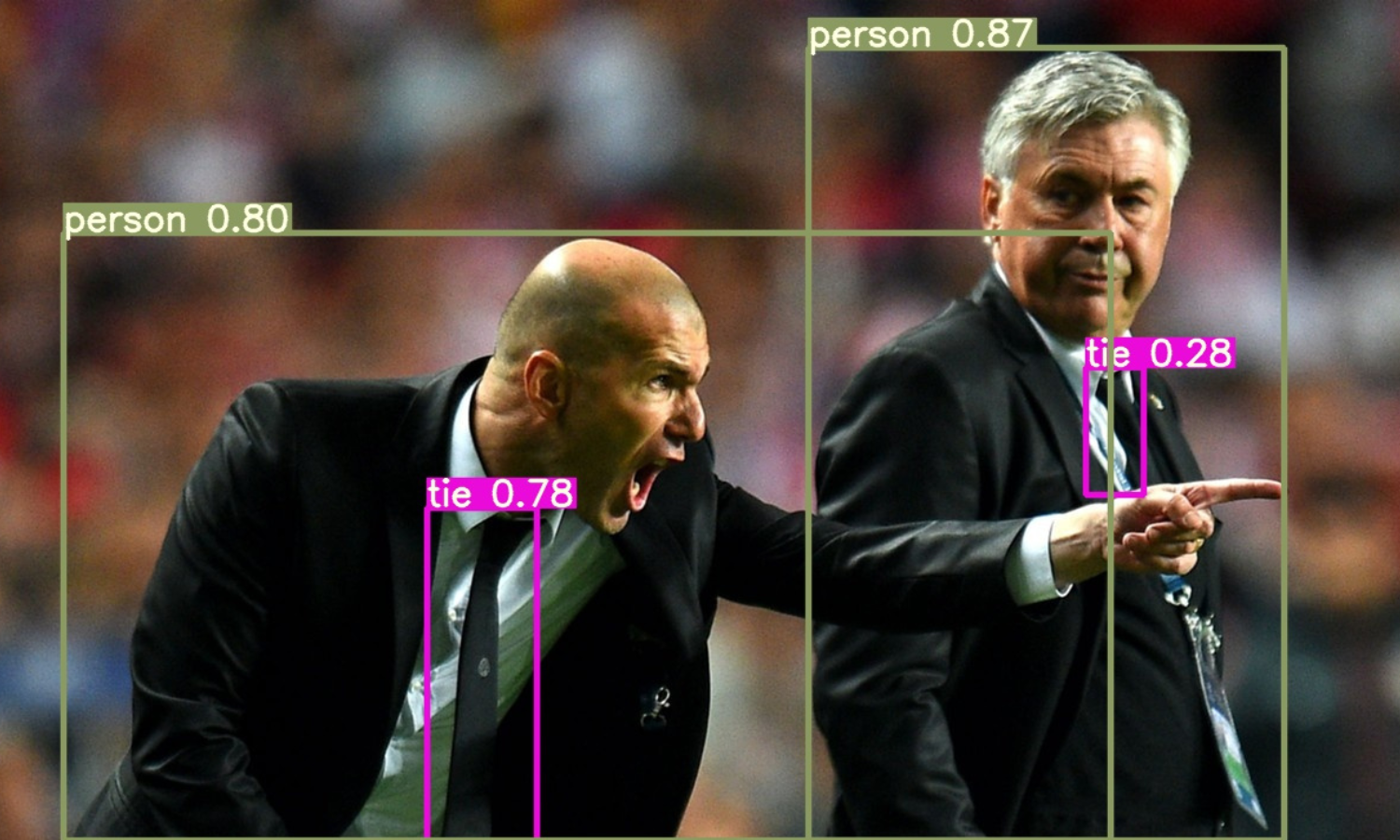

- 【27/07/2022】YOLOX models (object detection) - recipes and pre-trained checkpoints.

- 【07/07/2022】SSD Lite MobileNet V2,V1 - Training recipes and pre-trained checkpoints on COCO - Tailored for edge devices! 📱

- 【07/07/2022】 STDC - new pre-trained checkpoints and recipes for Cityscapes with super SOTA mIoU scores (~2.5% above paper)🎯

Check out SG full release notes.

- PP-LiteSeg recipes for Cityscapes with SOTA mIoU scores (~1.5% above paper)🎯

- Single class detectors (recipes, pre-trained checkpoints) for edge devices deployment.

- Single class segmentation (recipes, pre-trained checkpoints) for edge devices deployment.

- QAT capabilities (Quantization Aware Training).

- Integration with more professional tools.

- Getting Started

- Advanced Features

- Installation Methods

- Implemented Model Architectures

- Contributing

- Citation

- Community

- License

- Deci Platform

The simplest way to start training SOTA-performance models is with SuperGradients reproducible recipes. Just define your dataset path and where you want your checkpoints to be saved, and you are good to go from your terminal!

python -m super_gradients.train_from_recipe --config-name=imagenet_regnetY architecture=regnetY800 dataset_interface.data_dir=<YOUR_Imagenet_LOCAL_PATH> ckpt_root_dir=<CHECKPOINT_DIRECTORY>

Want to try our pre-trained models on your machine? Import SuperGradients, initialize your Trainer, and load your desired architecture and pre-trained weights from our SOTA model zoo.

# The pretrained_weights argument loads weights that were pre-trained on the named dataset

from super_gradients.training import models

model = models.get("model-name", pretrained_weights="pretrained-model-name")

- Classification Transfer Learning (notebook, GitHub source)

- Segmentation Quick Start (notebook, GitHub source)

- Segmentation Transfer Learning (notebook, GitHub source)

- Segmentation How to Connect Custom Dataset (notebook, GitHub source)

- Detection Transfer Learning (notebook, GitHub source)

- Detection How to Connect Custom Dataset (notebook, GitHub source)

- How to Predict Using Pre-trained Model (notebook, GitHub source)

Knowledge distillation is a training technique that uses a large model (the teacher) to improve the performance of a smaller model (the student). To learn more about knowledge distillation training in SuperGradients, see our example notebook on Google Colab, which trains a ResNet18 student with a pre-trained BEiT base teacher on CIFAR10. It is an easy-to-use tutorial that runs on free GPU hardware.

- Knowledge Distillation Training (notebook, GitHub source)
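To make the teacher/student idea concrete, here is a minimal pure-Python sketch of a commonly used distillation loss: hard-label cross-entropy blended with a KL term between temperature-softened teacher and student distributions. It illustrates the general technique only and is not necessarily the loss SuperGradients implements; the function names, `temperature=4.0`, and `alpha=0.5` are assumptions chosen for the example.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label, temperature=4.0, alpha=0.5):
    """Weighted sum of hard-label cross-entropy and teacher/student KL divergence."""
    # Hard-label term: cross-entropy of the student against the ground-truth class.
    student_probs = softmax(student_logits)
    ce = -math.log(student_probs[label])

    # Soft-label term: KL(teacher || student) on temperature-softened distributions.
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(t * math.log(t / s) for t, s in zip(p_teacher, p_student) if t > 0)

    # The T^2 factor keeps the soft term's scale comparable across temperatures.
    return alpha * ce + (1 - alpha) * temperature ** 2 * kl


# Student agrees with the teacher: the KL term vanishes.
loss_same = distillation_loss([2.0, 0.5, -1.0], [2.0, 0.5, -1.0], label=0)
# Student disagrees with the teacher: the KL term adds a penalty.
loss_diff = distillation_loss([2.0, 0.5, -1.0], [-1.0, 0.5, 2.0], label=0)
print(loss_same, loss_diff)
```

When the student already matches the teacher, only the cross-entropy term remains, so the second loss is larger than the first.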

To train a model, you need to configure four main components: dataset, architecture, training, and checkpoint parameters. These components are aggregated into a single "main" recipe .yaml file that inherits from them. It is also possible (and recommended, for flexibility) to override default settings with custom ones.

All recipes can be found here

- How to Use Recipes (notebook, GitHub source)
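The way a main recipe inherits its four components and then accepts overrides can be pictured as a recursive dictionary merge. The sketch below uses plain Python dicts rather than the actual .yaml recipes or the config tooling that composes them; `deep_merge` and every key and value shown are hypothetical and serve only to illustrate the idea.

```python
import copy


def deep_merge(base, overrides):
    """Return a copy of `base` with `overrides` applied recursively (later values win)."""
    merged = copy.deepcopy(base)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Four illustrative component configs; all keys and values are made up for this sketch.
dataset_params = {"dataset_params": {"batch_size": 64, "data_dir": "/data/imagenet"}}
arch_params = {"arch_params": {"num_classes": 1000}}
training_params = {"training_hyperparams": {"max_epochs": 100, "initial_lr": 0.1}}
checkpoint_params = {"checkpoint_params": {"load_checkpoint": False}}

# The "main" recipe starts from the four components...
recipe = {}
for component in (dataset_params, arch_params, training_params, checkpoint_params):
    recipe = deep_merge(recipe, component)

# ...and custom settings override only the keys they name.
recipe = deep_merge(recipe, {"training_hyperparams": {"initial_lr": 0.01}})
print(recipe["training_hyperparams"])
```

Only `initial_lr` changes; the other inherited defaults, and the component dicts themselves, are left untouched.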

from super_gradients import init_trainer

from super_gradients.common import MultiGPUMode

from super_gradients.training.utils.distributed_training_utils import setup_gpu_mode

# Initialize the environment

init_trainer()

# Launch DDP on 1 device (node) with 4 GPUs

setup_gpu_mode(gpu_mode=MultiGPUMode.DISTRIBUTED_DATA_PARALLEL, num_gpus=4)

# Define the objects

# The trainer will run on DDP without anything else to change

from super_gradients.training import models

# instantiate default pretrained resnet18

default_resnet18 = models.get(name="resnet18", num_classes=100, pretrained_weights="imagenet")

# instantiate pretrained resnet18, turning DropPath on with probability 0.5

droppath_resnet18 = models.get(name="resnet18", arch_params={"droppath_prob": 0.5}, num_classes=100, pretrained_weights="imagenet")

# instantiate pretrained resnet18, without classifier head. Output will be from the last stage before global pooling

backbone_resnet18 = models.get(name="resnet18", arch_params={"backbone_mode": True}, pretrained_weights="imagenet")

from super_gradients import Trainer

from torch.optim.lr_scheduler import ReduceLROnPlateau

from super_gradients.training.utils.callbacks import Phase, LRSchedulerCallback

from super_gradients.training.metrics.classification_metrics import Accuracy

# define PyTorch train and validation loaders and optimizer

# define what to be called in the callback

rop_lr_scheduler = ReduceLROnPlateau(optimizer, mode="max", patience=10, verbose=True)

# define phase callbacks, they will fire as defined in Phase

phase_callbacks = [LRSchedulerCallback(scheduler=rop_lr_scheduler,

                                       phase=Phase.VALIDATION_EPOCH_END,

                                       metric_name="Accuracy")]

# create a trainer object, see the class declaration for more parameters

trainer = Trainer("experiment_name")

# define phase_callbacks as part of the training parameters

train_params = {"phase_callbacks": phase_callbacks}

from super_gradients import Trainer

# create a trainer object, see the class declaration for more parameters

trainer = Trainer("experiment_name")

train_params = { ... # training parameters

    "sg_logger": "wandb_sg_logger", # Weights&Biases Logger, see class WandBSGLogger for details

    "sg_logger_params": # parameters that will be passed to __init__ of the logger

    {

        "project_name": "project_name", # W&B project name

        "save_checkpoints_remote": True,

        "save_tensorboard_remote": True,

        "save_logs_remote": True

    }

}

General requirements

- Python 3.7, 3.8 or 3.9 installed.

- torch>=1.9.0

- The Python packages specified in requirements.txt.

To train on Nvidia GPUs

- Nvidia CUDA Toolkit >= 11.2

- CuDNN >= 8.1.x

- Nvidia Driver with CUDA >= 11.2 support (≥460.x)
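Before installing, the requirements above can be checked with a few lines of Python. This is only a sketch using the standard library: `parse_version`, `meets_minimum`, and `python_supported` are hypothetical helper names, and the comparison looks at the leading numeric components of a version string only (it is not a full PEP 440 parser).

```python
import re
import sys


def parse_version(text):
    """Turn a version string such as '1.13.1+cu117' into a comparable tuple of ints."""
    core = text.split("+")[0]  # drop local build tags such as '+cu117'
    return tuple(int(number) for number in re.findall(r"\d+", core)[:3])


def meets_minimum(installed, minimum):
    """True if the installed version is at least the minimum (numeric comparison)."""
    return parse_version(installed) >= parse_version(minimum)


def python_supported(version_info=sys.version_info):
    """True if the interpreter is one of the Python versions listed above."""
    return tuple(version_info[:2]) in {(3, 7), (3, 8), (3, 9)}


print("Python supported:", python_supported())
print("torch 1.10.0 satisfies >=1.9.0:", meets_minimum("1.10.0", "1.9.0"))
```

In an environment where PyTorch is already installed, `torch.__version__` can be passed as the first argument to `meets_minimum`. Comparing tuples rather than raw strings avoids treating "1.10.0" as older than "1.9.0".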

Install using GitHub

pip install git+https://github.com/Deci-AI/super-gradients.git@stable

A detailed list of the implemented model architectures can be found here

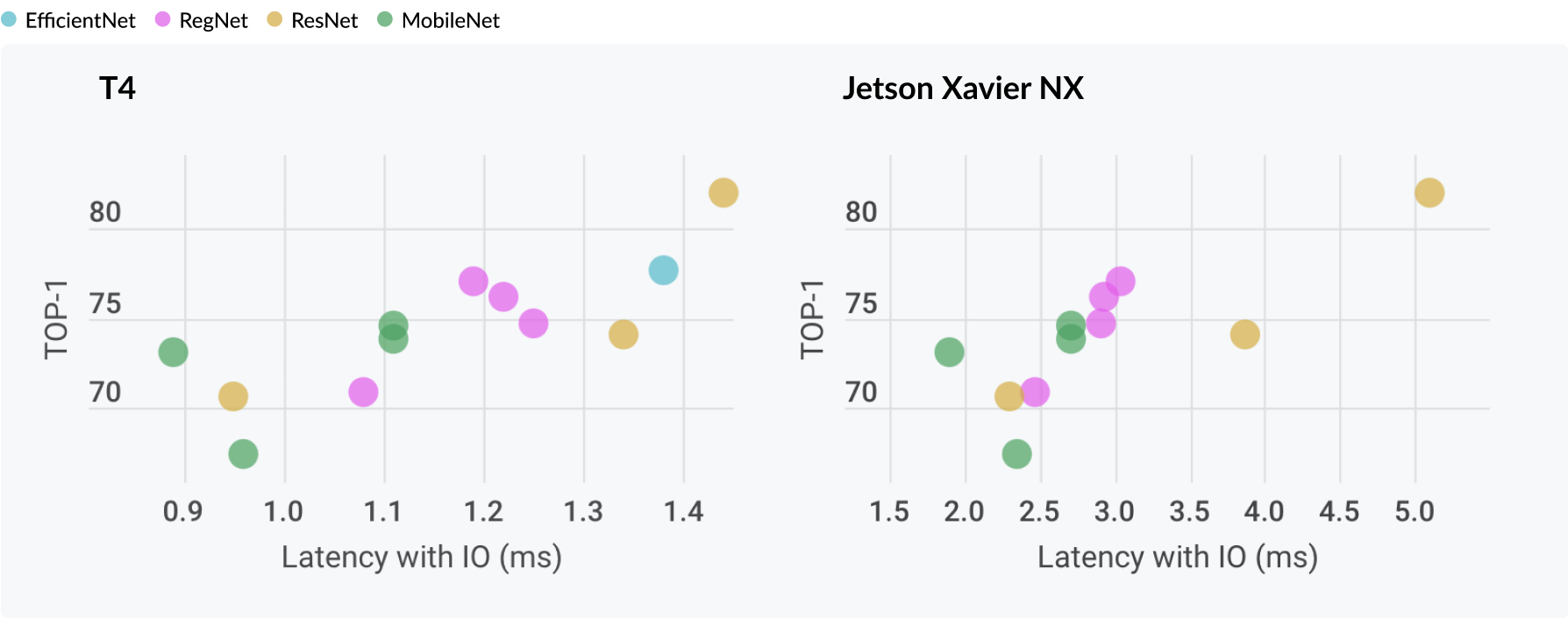

- DenseNet (Densely Connected Convolutional Networks)

- DPN

- EfficientNet

- LeNet

- MobileNet

- MobileNet v2

- MobileNet v3

- PNASNet

- Pre-activation ResNet

- RegNet

- RepVGG

- ResNet

- ResNeXt

- SENet

- ShuffleNet

- ShuffleNet v2

- VGG

- PP-LiteSeg - https://arxiv.org/pdf/2204.02681v1.pdf

- DDRNet (Deep Dual-resolution Networks)

- LadderNet

- RegSeg

- ShelfNet

- STDC

Check SuperGradients Docs for full documentation, user guide, and examples.

To learn about making a contribution to SuperGradients, please see our Contribution page.


If you use the SuperGradients library or benchmarks in your research, please cite the SuperGradients deep learning training library.

If you want to be part of the growing SuperGradients community, hear about news and updates, get help, request advanced features, or file a bug or issue report, we would love to welcome you aboard!

- Slack is the place to ask questions about SuperGradients and get support. Click here to join our Slack

- To report a bug, file an issue on GitHub.

- Join the SG Newsletter to stay up to date with new features and models, important announcements, and upcoming events.

- For a short meeting with us, use this link and choose your preferred time.

This project is released under the Apache 2.0 license.

Deci Platform is our end-to-end platform for building, optimizing, and deploying deep learning models to production.

Request a free trial to enjoy immediate improvement in throughput, latency, memory footprint, and model size.

Features:

- Automatically compile and quantize your models with just a few clicks (TensorRT, OpenVINO).

- Gain up to 10X improvement in throughput, latency, memory and model size.

- Easily benchmark your models’ performance on different hardware and batch sizes.

- Invite co-workers to collaborate on models and communicate your progress.

- Deci supports all common frameworks and hardware, from Intel CPUs to Nvidia's GPUs and Jetsons.

Request free trial here