conda create --name coqui python=3.9

conda activate coqui

conda install pytorch torchvision torchaudio cudatoolkit=11.3 -c pytorch-nightly

cd "your path to clone the Hakka version of Coqui TTS"

git clone https://github.com/yfliao/TTS

cd TTS

pip install -e .

conda activate coqui

cd "your path to the Hakka version of Coqui TTS"

cd TTS/recipes/hakka/tacotron2-DDC

# to generate "config.json"

python train_tacotron_ddc.py # expected to crash on this first run, because "scale_stats.npy" does not exist yet

python ../../../TTS/bin/compute_statistics.py config.json scale_stats.npy

nohup python train_tacotron_ddc.py &> train_tacotron_ddc.py.log &
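The order of these steps matters: the first run exists only to write config.json, and scale_stats.npy has to be in place before the real training run. A minimal Python sketch of that ordering follows. The helper name `training_commands` is hypothetical, and the sketch assumes both files end up in the recipe directory, as the commands above suggest.

```python
from pathlib import Path
from typing import List


def training_commands(recipe_dir: Path) -> List[List[str]]:
    """Return the commands that still need to run, in order (hypothetical helper)."""
    commands: List[List[str]] = []
    if not (recipe_dir / "config.json").exists():
        # First run: writes config.json, then is expected to crash.
        commands.append(["python", "train_tacotron_ddc.py"])
    if not (recipe_dir / "scale_stats.npy").exists():
        # Compute the normalization statistics that training needs.
        commands.append(
            [
                "python",
                "../../../TTS/bin/compute_statistics.py",
                "config.json",
                "scale_stats.npy",
            ]
        )
    # The actual training run always comes last.
    commands.append(["python", "train_tacotron_ddc.py"])
    return commands
```

On a fresh checkout this returns all three commands; once both files exist, only the final training command remains. Each entry could be passed to `subprocess.run(cmd, cwd=recipe_dir)`, keeping in mind that the first one is expected to exit with an error.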

PS: To mitigate the impact of configuration inconsistencies between different recording sessions in the given Hakka corpus, some compromises have been made (mainly sample_rate=16000 and mel_max=4000; please check the code or config.json).
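To confirm that a generated config.json really carries these values, a small check like the one below may help. It is only a sketch: it assumes the settings live under an `audio` section with the keys `sample_rate` and `mel_fmax` (the name "mel_max" above is taken to mean `mel_fmax`), and the function names are hypothetical.

```python
import json
from typing import Any, Dict, List

# Values named in the note above; the key names are an assumption.
EXPECTED = {"sample_rate": 16000, "mel_fmax": 4000}


def check_audio_settings(config: Dict[str, Any]) -> List[str]:
    """Return human-readable problems found in the audio section."""
    audio = config.get("audio", {})
    problems: List[str] = []
    for key, expected in EXPECTED.items():
        actual = audio.get(key)
        if actual != expected:
            problems.append(f"audio.{key} is {actual!r}, expected {expected!r}")
    sample_rate = audio.get("sample_rate")
    mel_fmax = audio.get("mel_fmax")
    # A mel filter bank cannot usefully extend past the Nyquist frequency.
    if sample_rate and mel_fmax and mel_fmax > sample_rate / 2:
        problems.append("audio.mel_fmax exceeds sample_rate / 2")
    return problems


def check_config_file(path: str = "config.json") -> List[str]:
    """Load a config file and run the same check on it."""
    with open(path, encoding="utf-8") as f:
        return check_audio_settings(json.load(f))
```

An empty list means the file matches the values described here.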

- Download the pre-trained model from https://drive.google.com/drive/folders/1zK6j2nmbGKV8q6rPQXbTI_MUtfqTOimT?usp=sharing

- Command line:

tts --text "ngai11 ham55 bun24 ng11 tang24 loi11 io24" --model_path best_model.pth --config_path config.json --out_path speech.wav
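The input text in this example is romanized Hakka, one syllable per token, each followed by tone digits. The sketch below splits such a string into (syllable, tone) pairs so that malformed tokens can be caught before synthesis. The token shape (lowercase letters followed by digits) is inferred from this single example only; the model's actual text front end may accept more than this, and `split_syllables` is a hypothetical helper.

```python
import re
from typing import List, Tuple

# Assumed token shape: lowercase letters, then tone digits (e.g. "ngai11").
_SYLLABLE = re.compile(r"^([a-z]+)(\d+)$")


def split_syllables(text: str) -> List[Tuple[str, str]]:
    """Split tone-numbered romanization into (syllable, tone) pairs."""
    pairs: List[Tuple[str, str]] = []
    for token in text.split():
        match = _SYLLABLE.match(token)
        if match is None:
            raise ValueError(f"unexpected token: {token!r}")
        pairs.append((match.group(1), match.group(2)))
    return pairs
```

For instance, `split_syllables("ngai11 ham55")` yields `[("ngai", "11"), ("ham", "55")]`, while a token without tone digits raises `ValueError`.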

- To mitigate the impact of configuration inconsistencies between different recording sessions, some compromises have been made (mainly mel_max=4000; please check the code or config.json).

tts-server --model_path best_model.pth --config_path config.json

http://[::1]:5002/
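Besides the browser page, the running server can be queried from a script. The sketch below assumes the server exposes an `/api/tts` endpoint that takes the input as a `text` query parameter and returns WAV bytes, which is how the upstream 🐸TTS demo server behaves to the best of my knowledge; please verify this against the fork's server code. The function names are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import urlopen


def build_tts_url(text: str, host: str = "[::1]", port: int = 5002) -> str:
    """Build the request URL for the assumed /api/tts endpoint."""
    return f"http://{host}:{port}/api/tts?{urlencode({'text': text})}"


def synthesize(text: str, out_path: str = "speech.wav") -> None:
    """Fetch synthesized audio from a running tts-server and save it."""
    with urlopen(build_tts_url(text)) as response, open(out_path, "wb") as f:
        f.write(response.read())
```

With the server started as shown above, `synthesize("ngai11 ham55 bun24 ng11 tang24 loi11 io24")` would write the response to speech.wav.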

🐸TTS is a library for advanced Text-to-Speech generation. It is built on the latest research and designed to achieve the best trade-off among ease of training, speed, and quality. 🐸TTS comes with pretrained models and tools for measuring dataset quality, and it is already used in 20+ languages for products and research projects.

📰 Subscribe to 🐸Coqui.ai Newsletter

📢 English Voice Samples and SoundCloud playlist

📄 Text-to-Speech paper collection

Please use our dedicated channels for questions and discussion. Help is much more valuable if it's shared publicly so that more people can benefit from it.

| Type | Platforms |
|---|---|
| 🚨 Bug Reports | GitHub Issue Tracker |
| 🎁 Feature Requests & Ideas | GitHub Issue Tracker |
| 👩‍💻 Usage Questions | GitHub Discussions |
| 🗯 General Discussion | GitHub Discussions or Gitter Room |

| Type | Links |
|---|---|
| 💼 Documentation | ReadTheDocs |
| 💾 Installation | TTS/README.md |
| 👩‍💻 Contributing | CONTRIBUTING.md |
| 📌 Road Map | Main Development Plans |
| 🚀 Released Models | TTS Releases and Experimental Models |

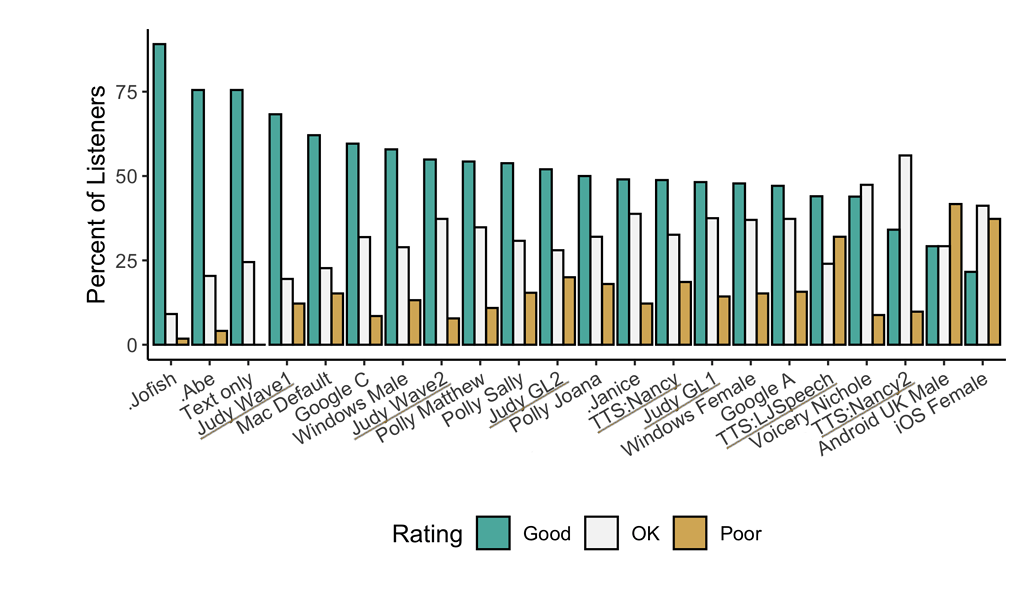

Underlined "TTS*" and "Judy*" are 🐸TTS models

- High-performance Deep Learning models for Text2Speech tasks.

- Text2Spec models (Tacotron, Tacotron2, Glow-TTS, SpeedySpeech).

- Speaker Encoder to compute speaker embeddings efficiently.

- Vocoder models (MelGAN, Multiband-MelGAN, GAN-TTS, ParallelWaveGAN, WaveGrad, WaveRNN)

- Fast and efficient model training.

- Detailed training logs on the terminal and Tensorboard.

- Support for Multi-speaker TTS.

- Efficient, flexible, lightweight but feature-complete Trainer API.

- Released and ready-to-use models.

- Tools to curate Text2Speech datasets under dataset_analysis.

- Utilities to use and test your models.

- Modular (but not too much) code base enabling easy implementation of new ideas.

- Tacotron: paper

- Tacotron2: paper

- Glow-TTS: paper

- Speedy-Speech: paper

- Align-TTS: paper

- FastPitch: paper

- FastSpeech: paper

- VITS: paper

- Guided Attention: paper

- Forward Backward Decoding: paper

- Graves Attention: paper

- Double Decoder Consistency: blog

- Dynamic Convolutional Attention: paper

- Alignment Network: paper

- MelGAN: paper

- MultiBandMelGAN: paper

- ParallelWaveGAN: paper

- GAN-TTS discriminators: paper

- WaveRNN: origin

- WaveGrad: paper

- HiFiGAN: paper

- UnivNet: paper

You can also help us implement more models.

🐸TTS is tested on Ubuntu 18.04 with python >= 3.6, < 3.9.

If you are only interested in synthesizing speech with the released 🐸TTS models, installing from PyPI is the easiest option.

pip install TTS

If you plan to code or train models, clone 🐸TTS and install it locally.

git clone https://github.com/coqui-ai/TTS

pip install -e .[all,dev,notebooks] # Select the relevant extras

If you are on Ubuntu (Debian), you can also run the following commands for installation.

$ make system-deps # intended to be used on Ubuntu (Debian). Let us know if you have a different OS.

$ make install

If you are on Windows, 👑@GuyPaddock wrote installation instructions here.

- List provided models:

  $ tts --list_models

- Run TTS with default models:

  $ tts --text "Text for TTS"

- Run a TTS model with its default vocoder model:

  $ tts --text "Text for TTS" --model_name "<language>/<dataset>/<model_name>"

- Run with specific TTS and vocoder models from the list:

  $ tts --text "Text for TTS" --model_name "<language>/<dataset>/<model_name>" --vocoder_name "<language>/<dataset>/<model_name>" --out_path output/path/speech.wav

- Run your own TTS model (using the Griffin-Lim vocoder):

  $ tts --text "Text for TTS" --model_path path/to/model.pth.tar --config_path path/to/config.json --out_path output/path/speech.wav

- Run your own TTS and vocoder models:

  $ tts --text "Text for TTS" --model_path path/to/model.pth.tar --config_path path/to/config.json --out_path output/path/speech.wav --vocoder_path path/to/vocoder.pth.tar --vocoder_config_path path/to/vocoder_config.json

- List the available speakers and choose a <speaker_id> among them:

  $ tts --model_name "<language>/<dataset>/<model_name>" --list_speaker_idxs

- Run the multi-speaker TTS model with the target speaker ID:

  $ tts --text "Text for TTS." --out_path output/path/speech.wav --model_name "<language>/<dataset>/<model_name>" --speaker_idx <speaker_id>

- Run your own multi-speaker TTS model:

  $ tts --text "Text for TTS" --out_path output/path/speech.wav --model_path path/to/model.pth.tar --config_path path/to/config.json --speakers_file_path path/to/speaker.json --speaker_idx <speaker_id>
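When these commands are driven from a script, it is easy to mix up the two path arguments: `--model_path` takes the checkpoint and `--config_path` takes the JSON config. The sketch below builds the argument list for the "own model" case and rejects obviously swapped paths. It uses only flags that appear in the commands above; `build_tts_command` itself is a hypothetical helper, not part of 🐸TTS.

```python
import shlex
from typing import List, Optional


def build_tts_command(
    text: str,
    model_path: str,
    config_path: str,
    out_path: str,
    vocoder_path: Optional[str] = None,
    vocoder_config_path: Optional[str] = None,
) -> List[str]:
    """Build an argv list for the `tts` CLI, guarding against swapped paths."""
    if model_path.endswith(".json") or not config_path.endswith(".json"):
        raise ValueError("--model_path takes the checkpoint, --config_path the JSON config")
    if (vocoder_path is None) != (vocoder_config_path is None):
        raise ValueError("pass both vocoder paths, or neither")
    command = [
        "tts",
        "--text", text,
        "--model_path", model_path,
        "--config_path", config_path,
        "--out_path", out_path,
    ]
    if vocoder_path is not None and vocoder_config_path is not None:
        command += ["--vocoder_path", vocoder_path]
        command += ["--vocoder_config_path", vocoder_config_path]
    return command


# Print a shell-quoted version of the command for inspection.
print(shlex.join(build_tts_command("Text for TTS", "best_model.pth", "config.json", "speech.wav")))
```

The returned list can be passed directly to `subprocess.run(command, check=True)`; passing a list rather than a single string avoids shell quoting problems with the input text.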

|- notebooks/       (Jupyter Notebooks for model evaluation, parameter selection and data analysis.)
|- utils/           (common utilities.)
|- TTS
    |- bin/             (folder for all the executables.)
        |- train*.py                  (train your target model.)
        |- distribute.py              (train your TTS model using multiple GPUs.)
        |- compute_statistics.py      (compute dataset statistics for normalization.)
        |- ...
    |- tts/             (text to speech models)
        |- layers/          (model layer definitions)
        |- models/          (model definitions)
        |- utils/           (model specific utilities.)
    |- speaker_encoder/ (Speaker Encoder models.)
        |- (same)
    |- vocoder/         (Vocoder models.)
        |- (same)