Prometheus-based Kubernetes Resource Recommendations

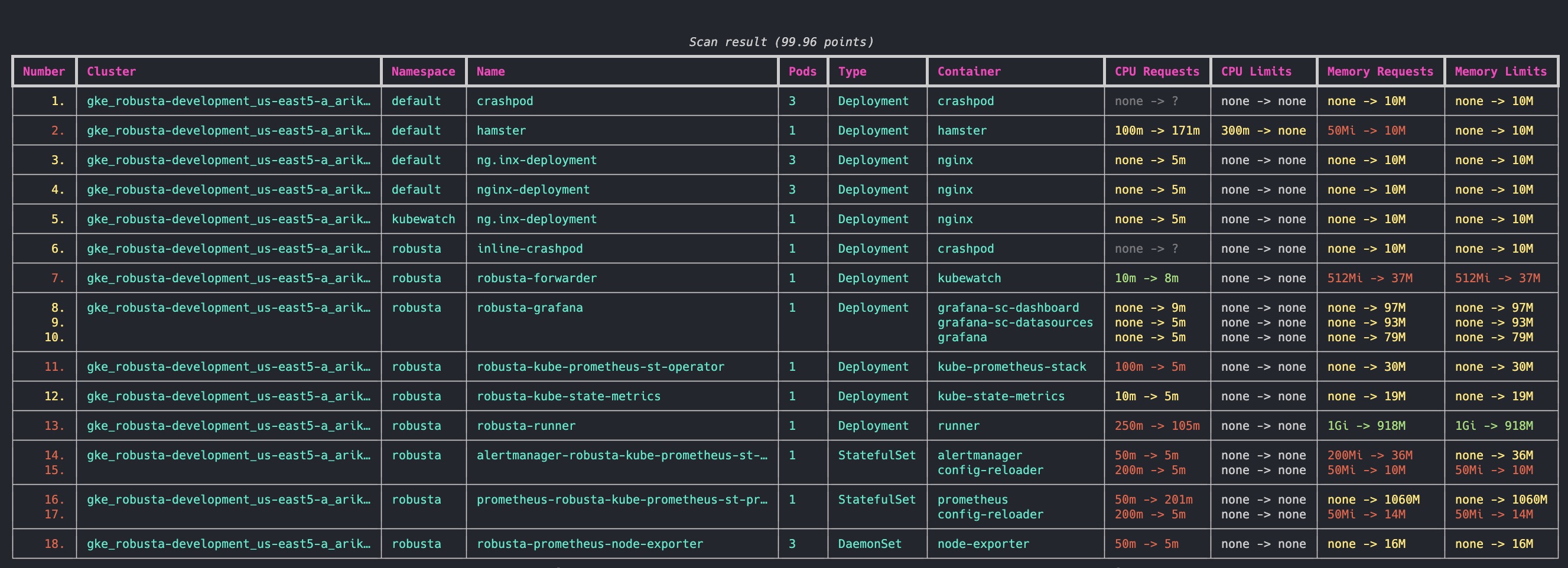

Robusta KRR (Kubernetes Resource Recommender) is a CLI tool for optimizing resource allocation in Kubernetes clusters. It gathers pod usage data from Prometheus and recommends requests and limits for CPU and memory. This reduces costs and improves performance.

- No Agent Required: Robusta KRR is a CLI tool that runs on your local machine. It does not require running Pods in your cluster.

- Prometheus Integration: Gather resource usage data using built-in Prometheus queries, with support for custom queries coming soon.

- Extensible Strategies: Easily create and use your own strategies for calculating resource recommendations.

- Future Support: Upcoming versions will support custom resources (e.g. GPUs) and custom metrics.

According to a recent Sysdig study, on average, Kubernetes clusters have:

- 69% unused CPU

- 18% unused memory

By right-sizing your containers with KRR, you can save an average of 69% on cloud costs.

Robusta KRR uses the following Prometheus queries to gather usage data:

- CPU Usage:

sum(node_namespace_pod_container:container_cpu_usage_seconds_total:sum_irate{namespace="{object.namespace}", pod="{pod}", container="{object.container}"})

- Memory Usage:

sum(container_memory_working_set_bytes{job="kubelet", metrics_path="/metrics/cadvisor", image!="", namespace="{object.namespace}", pod="{pod}", container="{object.container}"})
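To make the placeholders in these queries concrete, here is a minimal Python sketch of how such a template could be filled in and turned into a request URL for the Prometheus instant-query endpoint (`/api/v1/query`). The `WorkloadContainer` class and the `render_query` and `instant_query_url` helpers are hypothetical names chosen for illustration; they are not taken from the KRR code base, and KRR may build its queries differently.

```python
from dataclasses import dataclass
from urllib.parse import urlencode

# Templates copied from the queries above. Literal PromQL braces are doubled
# so that str.format only fills the {object...} and {pod} placeholders.
CPU_QUERY = (
    "sum(node_namespace_pod_container:container_cpu_usage_seconds_total:sum_irate"
    '{{namespace="{object.namespace}", pod="{pod}", container="{object.container}"}})'
)
MEMORY_QUERY = (
    'sum(container_memory_working_set_bytes{{job="kubelet", '
    'metrics_path="/metrics/cadvisor", image!="", '
    'namespace="{object.namespace}", pod="{pod}", container="{object.container}"}})'
)


@dataclass
class WorkloadContainer:
    """Hypothetical stand-in for the object referenced by the templates."""
    namespace: str
    container: str


def render_query(template: str, obj: WorkloadContainer, pod: str) -> str:
    """Fill the namespace, pod and container placeholders of a template."""
    return template.format(object=obj, pod=pod)


def instant_query_url(base_url: str, query: str) -> str:
    """Build a URL for the Prometheus HTTP API instant-query endpoint."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': query})}"


if __name__ == "__main__":
    container = WorkloadContainer(namespace="default", container="app")
    query = render_query(CPU_QUERY, container, pod="web-0")
    print(query)
    print(instant_query_url("http://127.0.0.1:9090", query))
```

The sketch only builds strings, so it can be run without a cluster. Sending the resulting URL to a real Prometheus instance is left out on purpose.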

Need to customize the metrics? Tell us and we'll add support.

By default, we use a simple strategy to calculate resource recommendations. It works as follows (the exact numbers can be customized with CLI arguments):

- For CPU, we set the request at the 99th percentile of usage and set no limit. This means the CPU request will be sufficient 99% of the time. For the remaining 1%, the absence of a limit lets your pod burst and use any CPU available on the node, e.g. CPU that other pods requested but aren't using right now.

- For memory, we take the maximum value over the past week and add a 5% buffer.
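The arithmetic behind the simple strategy can be sketched in a few lines of Python. This is an illustration under stated assumptions, not KRR's implementation: the function names are hypothetical, and the nearest-rank percentile used here is one common definition, so KRR may compute its percentile differently (for example with interpolation).

```python
import math
from typing import Optional, Sequence


def nearest_rank_percentile(samples: Sequence[float], pct: float) -> float:
    """Return the nearest-rank percentile of samples (assumed definition)."""
    if not samples:
        raise ValueError("at least one sample is required")
    ordered = sorted(samples)
    # Rank is 1-based; clamp to 1 so that very small percentiles still work.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def simple_recommendation(
    cpu_samples: Sequence[float],
    memory_samples: Sequence[float],
    cpu_percentile: float = 99,
    memory_buffer_pct: float = 5,
) -> dict:
    """Sketch of the rules above: percentile CPU request, buffered peak memory."""
    cpu_limit: Optional[float] = None  # no CPU limit, so the pod can burst
    return {
        "cpu_request": nearest_rank_percentile(cpu_samples, cpu_percentile),
        "cpu_limit": cpu_limit,
        # Peak memory usage plus the percentage buffer.
        "memory": max(memory_samples) * (100 + memory_buffer_pct) / 100,
    }


if __name__ == "__main__":
    cpu = [i / 100 for i in range(1, 101)]       # cores, made-up samples
    memory = [150_000_000, 180_000_000, 200_000_000]  # bytes, made-up samples
    print(simple_recommendation(cpu, memory))
```

The two keyword arguments mirror the idea that the percentile and the buffer are tunable; their names here are illustrative and do not correspond to actual CLI flags.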

For details on how KRR tries to find the default Prometheus to connect to, see the auto-discovery notes later in this document.

| Feature 🛠️ | Robusta KRR 🚀 | Kubernetes VPA 🌐 |
|---|---|---|
| Resource Recommendations 💡 | ✅ CPU/Memory requests and limits | ✅ CPU/Memory requests and limits |
| Installation Location 🌍 | ✅ Not required to be installed inside the cluster, can be used on your own device, connected to a cluster | ❌ Must be installed inside the cluster |
| Workload Configuration 🔧 | ✅ No need to configure a VPA object for each workload | ❌ Requires VPA object configuration for each workload |
| Immediate Results ⚡ | ✅ Gets results immediately (given Prometheus is running) | ❌ Requires time to gather data and provide recommendations |
| Reporting 📊 | ✅ Detailed CLI Report, web UI in Robusta.dev | ❌ Not supported |
| Extensibility 🔧 | ✅ Add your own strategies with a few lines of Python | |
| Custom Metrics 📏 | 🔄 Support in future versions | ❌ Not supported |
| Custom Resources 🎛️ | 🔄 Support in future versions (e.g., GPU) | ❌ Not supported |
| Explainability 📖 | 🔄 Support in future versions (Robusta will send you additional graphs) | ❌ Not supported |
| Autoscaling 🔀 | 🔄 Support in future versions | ✅ Automatic application of recommendations |

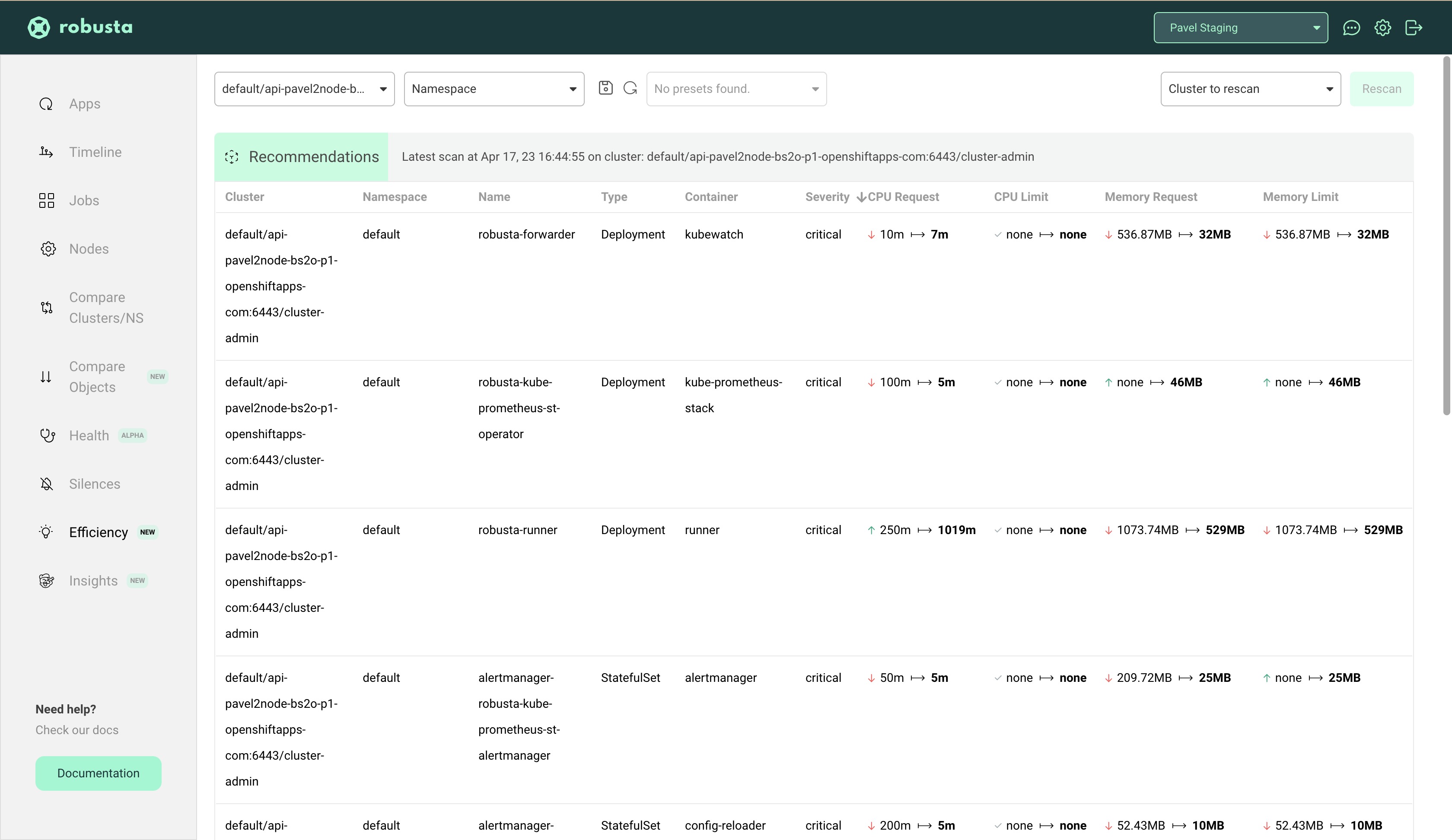

If you are using Robusta SaaS, KRR is integrated starting from v0.10.15. You can view all your recommendations, including previous ones, and filter and sort them by cluster, namespace, or name.

More features, such as viewing the graphs that recommendations were based on, are coming soon. Tell us what you need the most!

- Make sure you have Python 3.9 (or greater) installed

- Clone the repo:

git clone https://github.com/robusta-dev/krr

- Navigate to the project root directory (cd ./krr)

- Install requirements:

pip install -r requirements.txt

- Run the tool:

python krr.py --help

Straightforward usage, to run the simple strategy:

python krr.py simple

If you want only specific namespaces (default and ingress-nginx):

python krr.py simple -n default -n ingress-nginx

By default krr will run in the current context. If you want to run it in a different context:

python krr.py simple -c my-cluster-1 -c my-cluster-2

If you want to get the output in JSON format (--logtostderr is required so no logs go to the result file):

python krr.py simple --logtostderr -f json > result.json

If you want to get the output in YAML format:

python krr.py simple --logtostderr -f yaml > result.yaml

If you want to see additional debug logs:

python krr.py simple -v

More specific information on Strategy Settings can be found using

python krr.py simple --help

By default, KRR will try to auto-discover the running Prometheus by scanning for the following labels:

"app=kube-prometheus-stack-prometheus"

"app=prometheus,component=server"

"app=prometheus-server"

"app=prometheus-operator-prometheus"

"app=prometheus-msteams"

"app=rancher-monitoring-prometheus"

"app=prometheus-prometheus"

If none of those labels result in finding Prometheus, you will get an error and will have to pass the working URL explicitly (using the -p flag).
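The discovery behaviour described above amounts to a first-match scan over a list of label selectors, with an explicit URL taking precedence. The following Python sketch shows that control flow only. The `discover_prometheus` function and its `find_url` callback are hypothetical and stand in for whatever cluster lookup KRR actually performs; the order of the scan is also an assumption based on the order of the list.

```python
from typing import Callable, Optional, Sequence

# Label selectors listed above, in the order they appear.
PROMETHEUS_SELECTORS = [
    "app=kube-prometheus-stack-prometheus",
    "app=prometheus,component=server",
    "app=prometheus-server",
    "app=prometheus-operator-prometheus",
    "app=prometheus-msteams",
    "app=rancher-monitoring-prometheus",
    "app=prometheus-prometheus",
]


def discover_prometheus(
    find_url: Callable[[str], Optional[str]],
    explicit_url: Optional[str] = None,
    selectors: Sequence[str] = PROMETHEUS_SELECTORS,
) -> str:
    """Return the explicit URL if given, else the first selector match.

    find_url is a hypothetical callback that maps a label selector to a
    Prometheus URL, or None when nothing in the cluster matches it.
    """
    if explicit_url:
        return explicit_url
    for selector in selectors:
        url = find_url(selector)
        if url:
            return url
    raise RuntimeError(
        "Prometheus was not found by label; pass its URL explicitly with -p"
    )


if __name__ == "__main__":
    # Fake lookup for demonstration: only one selector matches.
    fake_cluster = {"app=prometheus-server": "http://prometheus-server.monitoring:80"}
    print(discover_prometheus(fake_cluster.get))
```

Passing the lookup as a callback keeps the sketch runnable without cluster access and makes the fallback path easy to exercise.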

If Prometheus is not discovered automatically, you can use kubectl port-forward to forward it manually.

For example, if you have a Prometheus Pod called kube-prometheus-st-prometheus-0, then run this command to port-forward it:

kubectl port-forward pod/kube-prometheus-st-prometheus-0 9090

Then, open another terminal and run krr in it, giving an explicit Prometheus URL:

python krr.py simple -p http://127.0.0.1:9090

Look into the examples directory for examples on how to create a custom strategy/formatter.

We are planning to use pyinstaller to build binaries for distribution. Right now you can build the binaries yourself, but we're not distributing them yet.

- Install the project manually (see above)

- Navigate to the project root directory

- Install poetry (https://python-poetry.org/docs/#installing-with-the-official-installer)

- Install requirements with dev dependencies:

poetry install --group dev

- Build the binary:

poetry run pyinstaller krr.py

- The binary will be located in the dist directory. Test that it works:

cd ./dist/krr

./krr --help

We use pytest to run tests.

- Install the project manually (see above)

- Navigate to the project root directory

- Install poetry (https://python-poetry.org/docs/#installing-with-the-official-installer)

- Install dev dependencies:

poetry install --group dev

- Install robusta_krr as an editable dependency:

pip install -e .

- Run the tests:

poetry run pytest

Contributions are what make the open source community such an amazing place to learn, inspire, and create. Any contributions you make are greatly appreciated.

If you have a suggestion that would make this better, please fork the repo and create a pull request. You can also simply open an issue with the tag "enhancement". Don't forget to give the project a star! Thanks again!

- Fork the Project

- Create your Feature Branch (git checkout -b feature/AmazingFeature)

- Commit your Changes (git commit -m 'Add some AmazingFeature')

- Push to the Branch (git push origin feature/AmazingFeature)

- Open a Pull Request

Distributed under the MIT License. See LICENSE.txt for more information.

If you have any questions, feel free to contact support@robusta.dev

Project Link: https://github.com/robusta-dev/krr