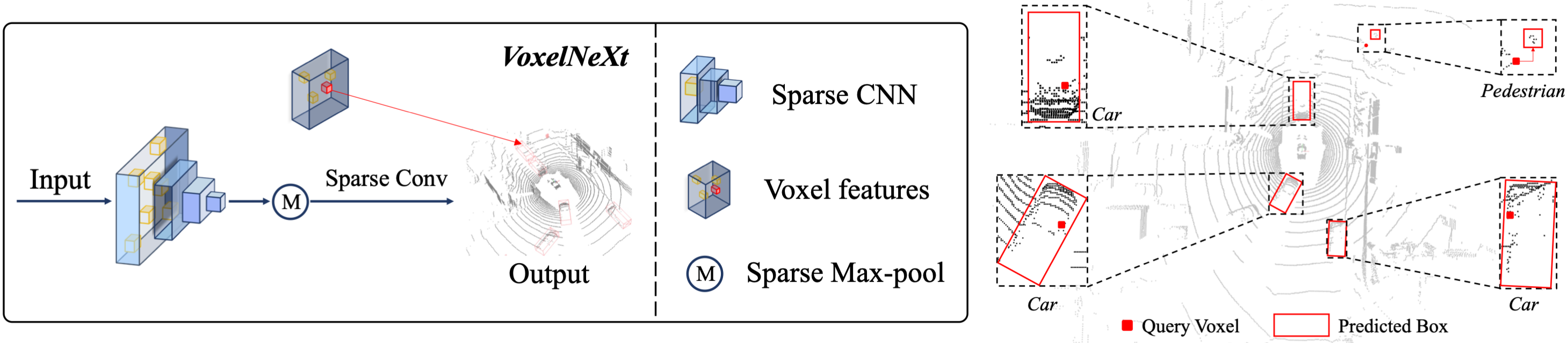

This is the official implementation of VoxelNeXt (CVPR 2023). VoxelNeXt is a clean, simple, and fully sparse 3D object detector. Its core idea is to predict objects directly from sparse voxel features, with no sparse-to-dense conversion, anchors, or center proxies. For more details, please refer to:

VoxelNeXt: Fully Sparse VoxelNet for 3D Object Detection and Tracking [Paper]

Yukang Chen, Jianhui Liu, Xiangyu Zhang, Xiaojuan Qi, Jiaya Jia
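To make "predicting objects directly from sparse voxel features" concrete, here is a minimal plain-Python sketch. It assumes each occupied voxel already carries per-voxel head outputs (a class score, a center offset inside the voxel, and a box size), and that `voxel_size` already accounts for any downsampling stride of the head. The names `SparseVoxel`, `decode_boxes`, `voxel_size`, `pc_min`, and `score_thresh` are hypothetical and are not taken from the VoxelNeXt or OpenPCDet code. Real heads run on GPU tensors and also predict heading and velocity.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class SparseVoxel:
    """One occupied voxel with hypothetical per-voxel head outputs."""
    coord: Tuple[int, int, int]  # integer voxel index (x, y, z)
    score: float                 # classification score for a single class
    offset: Vec3                 # predicted center offset inside the voxel, in voxel units
    size: Vec3                   # predicted box size (l, w, h), in meters


def decode_boxes(voxels: Sequence[SparseVoxel], voxel_size: Vec3, pc_min: Vec3,
                 score_thresh: float = 0.3) -> List[Tuple[Vec3, Vec3, float]]:
    """Decode boxes by visiting only occupied voxels (no dense BEV map is built)."""
    boxes = []
    for v in voxels:
        if v.score < score_thresh:
            continue  # low-confidence voxels produce no box
        # Metric center = grid origin + (voxel index + offset) * voxel size.
        center = tuple(pc_min[i] + (v.coord[i] + v.offset[i]) * voxel_size[i]
                       for i in range(3))
        boxes.append((center, v.size, v.score))
    return boxes


if __name__ == "__main__":
    voxels = [
        SparseVoxel((10, 20, 1), 0.9, (0.5, 0.5, 0.5), (4.5, 1.9, 1.6)),
        SparseVoxel((11, 20, 1), 0.1, (0.2, 0.4, 0.5), (4.4, 1.9, 1.6)),  # filtered out
    ]
    for box in decode_boxes(voxels, voxel_size=(0.1, 0.1, 0.2), pc_min=(-54.0, -54.0, -5.0)):
        print(box)
```

Because the loop only touches occupied voxels, its cost in this sketch scales with the number of non-empty voxels rather than with the size of the full grid.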

- [2023-01-28] VoxelNeXt achieved state-of-the-art performance on Argoverse2 3D object detection.

- [2022-11-11] VoxelNeXt ranked 1st on the nuScenes LiDAR tracking leaderboard.

| nuScenes Detection | Set | mAP | NDS | Download |
|---|---|---|---|---|
| VoxelNeXt | val | 60.0 | 67.1 | Pre-trained |
| VoxelNeXt | test | 64.5 | 70.0 | Submission |
| +double-flip | test | 66.2 | 71.4 | Submission |

| nuScenes Tracking | Set | AMOTA | AMOTP | Download |
|---|---|---|---|---|
| VoxelNeXt | val | 70.2 | 64.0 | Results |
| VoxelNeXt | test | 69.5 | 56.8 | Submission |
| +double-flip | test | 71.0 | 51.1 | Submission |

| Argoverse2 | Head Kernel | mAP | Download |
|---|---|---|---|
| VoxelNeXt | 1x1x1 | 30.0 | Pre-trained |
| VoxelNeXt-K3 | 3x3x3 | 30.7 | Pre-trained |

| Waymo | Vec_L1 | Vec_L2 | Ped_L1 | Ped_L2 | Cyc_L1 | Cyc_L2 |
|---|---|---|---|---|---|---|
| VoxelNeXt-2D | 77.94/77.47 | 69.68/69.25 | 80.24/73.47 | 72.23/65.88 | 73.33/72.20 | 70.66/69.56 |
| VoxelNeXt | 78.16/77.70 | 69.86/69.42 | 81.47/76.30 | 73.48/68.63 | 76.06/74.90 | 73.29/72.18 |

- We cannot release the pre-trained VoxelNeXt models for the Waymo dataset due to the Waymo Open Dataset (WOD) license.

- During inference, VoxelNeXt can use either sparse max pooling or NMS post-processing. Sparse-max-pooling inference requires our spconv-plus library; if it is not installed, please use the default NMS post-processing.
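The sparse-max-pooling option can be understood as max pooling restricted to occupied voxels: a voxel is kept as a detection only if its score is the largest among the occupied voxels in its kernel x kernel x kernel neighborhood, so duplicate predictions are suppressed without the pairwise box-overlap checks of NMS. The plain-Python sketch below illustrates that idea only. It is not the spconv-plus kernel, and `sparse_max_pool_keep`, `kernel`, and `score_thresh` are hypothetical names.

```python
from itertools import product
from typing import Dict, List, Tuple

Coord = Tuple[int, int, int]


def sparse_max_pool_keep(scores: Dict[Coord, float], kernel: int = 3,
                         score_thresh: float = 0.1) -> List[Coord]:
    """Return occupied voxels whose score is the maximum in their neighborhood.

    Only keys of `scores` (occupied voxels) are compared; empty positions are
    never materialized, mirroring pooling on a sparse tensor.
    """
    r = kernel // 2
    offsets = list(product(range(-r, r + 1), repeat=3))  # includes (0, 0, 0)
    kept = []
    for (x, y, z), s in scores.items():
        if s < score_thresh:
            continue  # too weak to be a detection at all
        # Empty neighbors contribute -inf, so they never suppress anything.
        neighbor_max = max(scores.get((x + dx, y + dy, z + dz), float("-inf"))
                           for dx, dy, dz in offsets)
        if s >= neighbor_max:  # local maximum (ties are all kept)
            kept.append((x, y, z))
    return sorted(kept)


if __name__ == "__main__":
    scores = {
        (0, 0, 0): 0.9, (1, 0, 0): 0.6,  # one object: only (0, 0, 0) survives
        (5, 5, 0): 0.7, (6, 5, 0): 0.8,  # another object: only (6, 5, 0) survives
        (9, 9, 0): 0.05,                 # below the score threshold
    }
    print(sparse_max_pool_keep(scores))  # [(0, 0, 0), (6, 5, 0)]
```

Each voxel needs only kernel^3 dictionary lookups here, whereas NMS compares predicted boxes pairwise; voxels that tie for the local maximum are all kept in this sketch, and a real implementation may break ties differently.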

```bash
git clone https://github.com/dvlab-research/VoxelNeXt && cd VoxelNeXt
```

Then follow the installation documents for OpenPCDet.

For the nuScenes and Waymo datasets, please follow the data-preparation document in OpenPCDet.

For the Argoverse2 dataset, please follow the steps in the instructions.

We provide trained weight files, so you can evaluate with them directly. You can also evaluate a model you trained yourself.

```bash
cd tools
bash scripts/dist_test.sh NUM_GPUS --cfg_file PATH_TO_CONFIG_FILE --ckpt PATH_TO_MODEL
# For example,
bash scripts/dist_test.sh 8 --cfg_file PATH_TO_CONFIG_FILE --ckpt PATH_TO_MODEL
```

To train a model, run the following, also from the `tools` directory:

```bash
bash scripts/dist_train.sh NUM_GPUS --cfg_file PATH_TO_CONFIG_FILE
# For example,
bash scripts/dist_train.sh 8 --cfg_file PATH_TO_CONFIG_FILE
```

If you find this project useful in your research, please consider citing:

```bibtex
@inproceedings{chen2023voxenext,
  title={VoxelNeXt: Fully Sparse VoxelNet for 3D Object Detection and Tracking},
  author={Yukang Chen and Jianhui Liu and Xiangyu Zhang and Xiaojuan Qi and Jiaya Jia},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year={2023}
}
```

- This work is built upon the OpenPCDet and spconv.

- This work's fully sparse direction is motivated by FSD.

- VoxelNeXt (CVPR 2023) [Paper] [Code] Fully Sparse VoxelNet for 3D Object Detection and Tracking.

- Focal Sparse Conv (CVPR 2022 Oral) [Paper] [Code] Dynamic sparse convolution for high performance.

- Spatial Pruned Conv (NeurIPS 2022) [Paper] [Code] 50% FLOPs saving for efficient 3D object detection.

- LargeKernel3D (CVPR 2023) [Paper] [Code] Large-kernel 3D sparse CNN backbone.

- SphereFormer (CVPR 2023) Spherical window 3D transformer backbone.

- spconv-plus A library that integrates our works into spconv.

- SparseTransformer A library that includes high-efficiency transformer implementations for sparse point cloud or voxel data.

This project is released under the Apache 2.0 license.