Yunqing Zhao¹, Chao Du², Milad Abdollahzadeh¹, Tianyu Pang², Min Lin², Shuicheng Yan², Ngai-Man Cheung¹

¹ Singapore University of Technology and Design (SUTD)

² Sea AI Lab (SAIL), Singapore

The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2023;

Vancouver Convention Center, Vancouver, British Columbia, Canada.

[Project Page] [Poster] [Poster_source] [Slides] [Paper]

- Platform: Linux

- NVIDIA A100 PCIe 40GB, CuDNN 11.4

- lmdb, tqdm, wandb

A suitable conda environment named fsig can be created and activated with:

conda env create -f environment.yml -n fsig

conda activate fsig
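After activating the environment, a quick check that the listed packages are importable can save a failed run later. The snippet below is a minimal sketch, not part of this repository: the helper name `missing_packages` is hypothetical, and it assumes the import names match the package names listed above (`lmdb`, `tqdm`, `wandb`).

```python
import importlib.util


def missing_packages(names):
    """Return the names that cannot be located as importable top-level modules."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Package names taken from the requirements list above.
missing = missing_packages(["lmdb", "tqdm", "wandb"])
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All listed packages are importable.")
```

If anything is reported missing, recreating the environment from environment.yml is usually the simplest fix.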

Prepare the few-shot training dataset using lmdb format

For example, download the 10-shot target sets Babies (Link) and AFHQ-Cat (Link), and organize your directory as follows:

10-shot-{babies/afhq_cat}
└── images
    ├── image-1.png
    ├── image-2.png
    ├── ...
    └── image-10.png

Then, transform to lmdb format:

python prepare_data.py --input_path [your_data_path_of_{babies/afhq_cat}] --output_path ./_processed_train/[your_lmdb_data_path_of_{babies/afhq_cat}]
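Before converting, it can help to confirm that the folder matches the layout described above. The following is a minimal sketch under stated assumptions: the helper `check_fewshot_dir` is hypothetical (it is not part of this repository), and it assumes the images sit directly under an `images` subfolder with common image extensions.

```python
from pathlib import Path


def check_fewshot_dir(root, expected=10, exts=(".png", ".jpg", ".jpeg")):
    """Return the sorted image paths under <root>/images.

    Raises FileNotFoundError if the images folder is missing and
    ValueError if the number of images differs from `expected`.
    Pass expected=None to skip the count check (e.g. for the full dataset).
    """
    image_dir = Path(root) / "images"
    if not image_dir.is_dir():
        raise FileNotFoundError(f"missing directory: {image_dir}")
    images = sorted(
        p for p in image_dir.iterdir()
        if p.is_file() and p.suffix.lower() in exts
    )
    if expected is not None and len(images) != expected:
        raise ValueError(
            f"expected {expected} images, found {len(images)} in {image_dir}"
        )
    return images
```

For a 10-shot set, `check_fewshot_dir("10-shot-babies")` should return ten paths; a different count raises an error before any lmdb file is written.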

Prepare the entire target dataset for evaluation

For example, download the entire datasets Babies (Link) and AFHQ-Cat (Link), and organize your directory as follows:

entire-{babies/afhq_cat}
└── images
    ├── image-1.png
    ├── image-2.png
    ├── ...
    └── image-n.png

Then, transform to lmdb format for evaluation:

python prepare_data.py --input_path [your_data_path_of_entire_{babies/afhq_cat}] --output_path ./_processed_test/[your_lmdb_data_path_of_entire_{babies/afhq_cat}]

Download the GAN model pretrained on FFHQ from here. Then, save it to ./_pretrained/style_gan_source_ffhq.pt.

FFHQ -> Babies:

python train_dynamic_update_prune.py \
--exp dynamic_prune_ffhq_babies --data_path babies --n_sample_train 10 \
--iter 1750 --batch 2 --num_fisher_img 5 \
--fisher_freq 50 --warmup_iter 250 --fisher_quantile 40 --prune_quantile 0.1 \
--ckpt_source style_gan_source_ffhq.pt --source_key ffhq \
--store_samples --samples_freq 50 \
--eval_in_training --eval_in_training_freq 50 --n_sample_test 5000 \
--wandb --wandb_project_name babies --wandb_run_name rick

FFHQ -> AFHQ-Cat:

python train_dynamic_update_prune.py \
--exp dynamic_prune_ffhq_afhq_cat --data_path afhq_cat --n_sample_train 10 \
--iter 2250 --batch 2 --num_fisher_img 5 \
--fisher_freq 50 --warmup_iter 250 --fisher_quantile 85 --prune_quantile 0.075 \
--ckpt_source style_gan_source_ffhq.pt --source_key ffhq \
--store_samples --samples_freq 50 \
--eval_in_training --eval_in_training_freq 50 --n_sample_test 5000 \
--wandb --wandb_project_name afhq_cat --wandb_run_name rick
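Long multi-line shell commands are easy to break with a misplaced backslash, so some users may prefer to assemble the arguments in Python. The sketch below is a hypothetical convenience wrapper, not part of this repository: the function `build_train_command` is an invented name, it uses only the flags that appear in the two commands above, and it assumes `--store_samples`, `--eval_in_training`, and `--wandb` are boolean switches because they are passed without values.

```python
import shlex


def build_train_command(exp, data_path, iters, fisher_quantile, prune_quantile,
                        wandb_run_name="rick", python="python"):
    """Assemble the argument list for train_dynamic_update_prune.py."""
    # Flags that take a value; the defaults mirror the commands shown above.
    valued = {
        "--exp": exp,
        "--data_path": data_path,
        "--n_sample_train": 10,
        "--iter": iters,
        "--batch": 2,
        "--num_fisher_img": 5,
        "--fisher_freq": 50,
        "--warmup_iter": 250,
        "--fisher_quantile": fisher_quantile,
        "--prune_quantile": prune_quantile,
        "--ckpt_source": "style_gan_source_ffhq.pt",
        "--source_key": "ffhq",
        "--samples_freq": 50,
        "--eval_in_training_freq": 50,
        "--n_sample_test": 5000,
        "--wandb_project_name": data_path,
        "--wandb_run_name": wandb_run_name,
    }
    # Assumed to be boolean switches (they carry no value in the commands above).
    switches = ["--store_samples", "--eval_in_training", "--wandb"]

    cmd = [python, "train_dynamic_update_prune.py"]
    for flag, value in valued.items():
        cmd += [flag, str(value)]
    return cmd + switches


# FFHQ -> Babies settings from the command above.
babies_cmd = build_train_command("dynamic_prune_ffhq_babies", "babies", 1750, 40, 0.1)
print(shlex.join(babies_cmd))
```

The returned list can be passed to `subprocess.run(cmd, check=True)` from the repository root; the AFHQ-Cat run differs only in `exp`, `data_path`, `iters` (2250), `fisher_quantile` (85), and `prune_quantile` (0.075).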

The training dynamics are logged to wandb as training progresses.

If you find this project useful in your research, please consider citing our paper:

@inproceedings{zhao2023exploring,

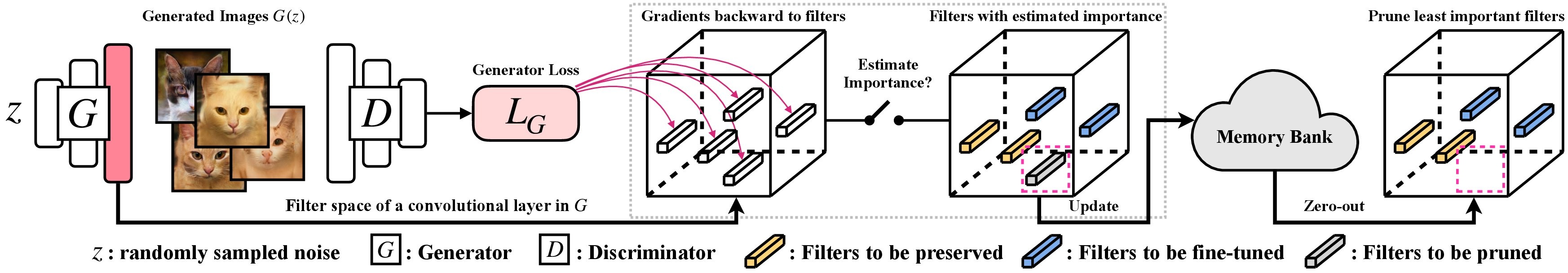

title={Exploring incompatible knowledge transfer in few-shot image generation},

author={Zhao, Yunqing and Du, Chao and Abdollahzadeh, Milad and Pang, Tianyu and Lin, Min and Yan, Shuicheng and Cheung, Ngai-Man},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={7380--7391},

year={2023}

}

We also present related work on few-shot image generation, which fine-tunes pretrained generators via parameter-efficient Adaptation-Aware kernel Modulation (AdAM):

@inproceedings{zhao2022fewshot,

title={Few-shot Image Generation via Adaptation-Aware Kernel Modulation},

author={Yunqing Zhao and Keshigeyan Chandrasegaran and Milad Abdollahzadeh and Ngai-man Cheung},

booktitle={Advances in Neural Information Processing Systems},

editor={Alice H. Oh and Alekh Agarwal and Danielle Belgrave and Kyunghyun Cho},

year={2022},

url={https://openreview.net/forum?id=Z5SE9PiAO4t}

}

We appreciate the wonderful base implementation of StyleGAN-V2 from @rosinality. We thank @mseitzer, @Ojha and @richzhang for their implementations of the FID score and intra-LPIPS.

We also thank @Miaoyun for the useful training and evaluation tools used in this work.