Fangqi Zhu1,2, Hongtao Wu1†*, Song Guo2*, Yuxiao Liu1, Chilam Cheang1, Tao Kong1

1ByteDance Research, 2Hong Kong University of Science and Technology

*Corresponding authors †Project Lead


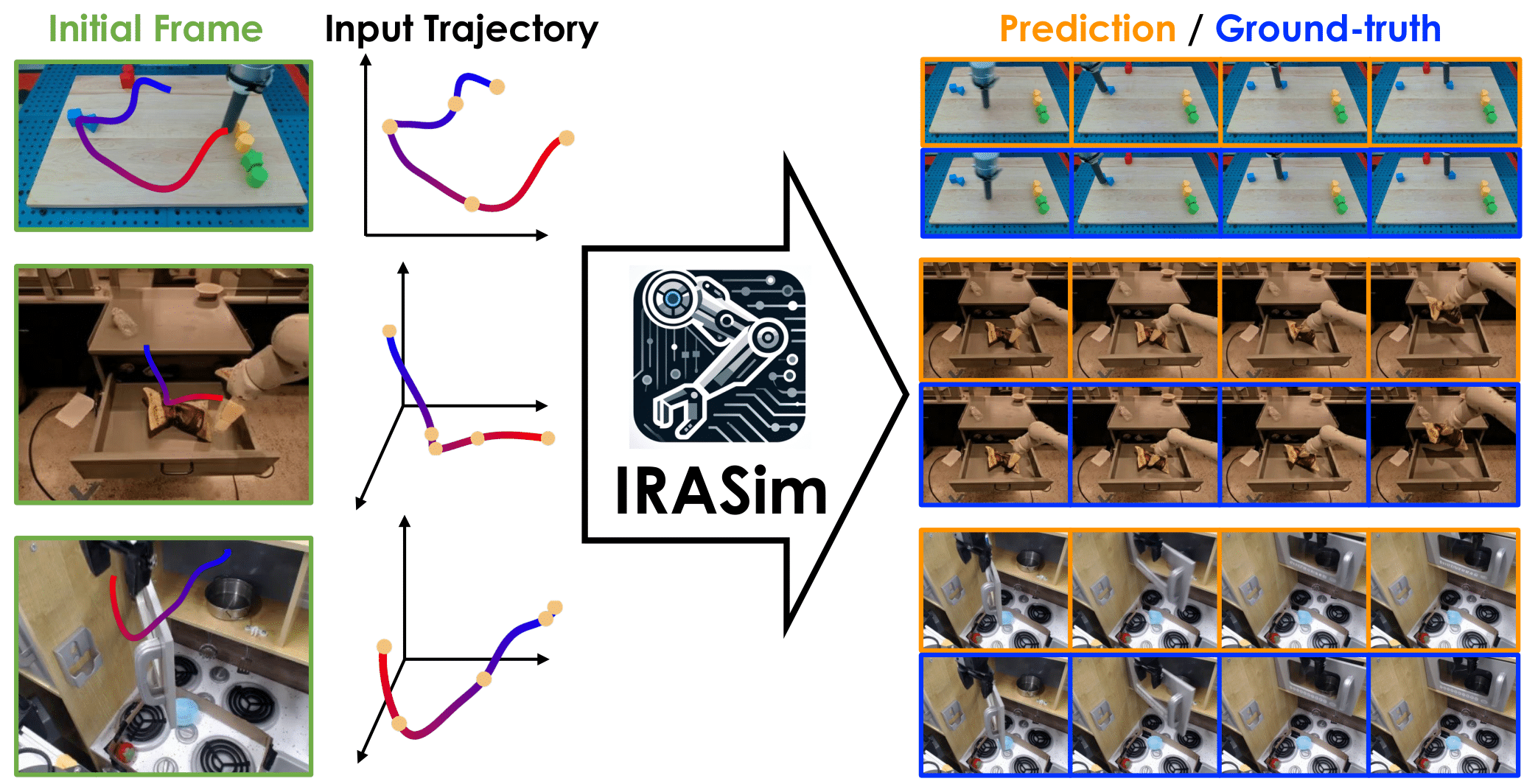

Scalable robot learning in the real world is limited by the cost and safety issues of real robots. In addition, rolling out robot trajectories in the real world can be time-consuming and labor-intensive. In this paper, we propose to learn an interactive real-robot action simulator as an alternative. We introduce a novel method, IRASim, which leverages the power of generative models to generate extremely realistic videos of a robot arm executing a given action trajectory, starting from a given initial frame. To validate the effectiveness of our method, we create a new benchmark, IRASim Benchmark, based on three real-robot datasets and perform extensive experiments on it. Results show that IRASim outperforms all the baseline methods and is preferred in human evaluations. We hope that IRASim can serve as an effective and scalable approach to enhance robot learning in the real world. To promote research on generative real-robot action simulators, we open-source the code, benchmark, and checkpoints.

To set up the environment, run the following command:

bash scripts/install.sh

To download the complete dataset, run:

bash scripts/download.sh

The table below lists the download links and file sizes for the RT-1, Bridge, and Language-Table datasets, split into training data, evaluation data, and checkpoints.

| Dataset | Train | Size | Evaluation | Size | Checkpoints | Size |
|---|---|---|---|---|---|---|
| RT-1 | rt1_train_data.tar.gz | 86G | rt1_evaluation_data.tar.gz | 100G | rt1_checkpoints_data.tar.gz | 29G |
| Bridge | bridge_train_data.tar.gz | 31G | bridge_evaluation_data.tar.gz | 63G | bridge_checkpoints_data.tar.gz | 32G |
| Language-Table | languagetable_train_data.tar.gz | 200G | languagetable_evaluation_data.tar.gz | 194G | languagetable_checkpoints_data.tar.gz | 34G |

The complete dataset structure can be found in dataset_structure.txt.
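Before downloading, it can help to check how much disk space the archives need. The sketch below simply adds up the sizes from the table above; it assumes "G" means gigabytes, and the helper name `total_gb` is illustrative and not part of the repository. Extracted data will need additional space on top of these totals.

```python
# Archive sizes in GB, copied from the table above.
SIZES_GB = {
    "RT-1": {"train": 86, "evaluation": 100, "checkpoints": 29},
    "Bridge": {"train": 31, "evaluation": 63, "checkpoints": 32},
    "Language-Table": {"train": 200, "evaluation": 194, "checkpoints": 34},
}


def total_gb(datasets=None, categories=None):
    """Sum the archive sizes for the chosen datasets and categories.

    Passing None for either argument selects everything.
    """
    datasets = datasets or list(SIZES_GB)
    total = 0
    for name in datasets:
        for category, size in SIZES_GB[name].items():
            if categories is None or category in categories:
                total += size
    return total


if __name__ == "__main__":
    print("everything:", total_gb(), "GB")
    print("RT-1 only:", total_gb(["RT-1"]), "GB")
    print("all checkpoints:", total_gb(categories={"checkpoints"}), "GB")
```

With the numbers above, the full download comes to 769G, RT-1 alone to 215G, and the three checkpoint archives to 95G.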

We recommend starting with the Language Table application. This application provides a user-friendly keyboard interface to control the robotic arm in an initial image on a 2D plane:

python3 application/languagetable.py

Below are example scripts for training the IRASim-Frame-Ada model on the RT-1 dataset.

To accelerate training, we recommend encoding videos into latent videos first. Our code also supports direct training by setting pre_encode to false.
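The speed-up from pre-encoding comes from running the video encoder once per video instead of once per video in every epoch. The toy sketch below counts encoder calls in both modes. Only the `pre_encode` name comes from this README; `make_encoder`, `train`, and the fake data are hypothetical stand-ins and not the repository's code.

```python
def make_encoder():
    """Return a stand-in encoder plus a counter of how often it ran."""
    calls = {"n": 0}

    def encode(video):
        calls["n"] += 1
        # Placeholder for an expensive video-to-latent encoder.
        return [pixel / 255.0 for pixel in video]

    return encode, calls


def train(videos, epochs, pre_encode):
    """Iterate over the data and return the number of encoder calls."""
    encode, calls = make_encoder()
    latents = None
    if pre_encode:
        # Encode every video once up front and reuse the cached latents.
        latents = [encode(video) for video in videos]
    for _ in range(epochs):
        for i, video in enumerate(videos):
            latent = latents[i] if pre_encode else encode(video)
            _ = latent  # a real training step would consume the latent here
    return calls["n"]


if __name__ == "__main__":
    videos = [[0, 128, 255]] * 4
    print("pre-encoded:", train(videos, epochs=3, pre_encode=True), "encoder calls")
    print("on the fly:", train(videos, epochs=3, pre_encode=False), "encoder calls")
```

In this toy setting the encoder runs once per video when latents are cached, and once per video per epoch otherwise.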

Launch directly with Python:

python3 main.py --config configs/train/rt1/frame_ada.yaml

Launch with torchrun (8 processes per node, 1 node):

torchrun --nproc_per_node 8 --nnodes 1 --node_rank 0 --rdzv_endpoint {node_address}:{port} --rdzv_id 107 --rdzv_backend c10d main.py --config configs/train/rt1/frame_ada.yaml

Below are example scripts for evaluating the IRASim-Frame-Ada model on the RT-1 dataset.
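The `torchrun` commands in this README (for training and for evaluation) share the same flags and leave `{node_address}` and `{port}` as placeholders. A minimal sketch for filling them in from Python follows. The helper name `build_torchrun_command` and the example address and port are hypothetical; the flags and default values are copied from the commands in this README.

```python
import shlex


def build_torchrun_command(config, node_address, port,
                           nproc_per_node=8, nnodes=1, node_rank=0,
                           rdzv_id=107):
    """Return the torchrun invocation as an argument list."""
    return [
        "torchrun",
        "--nproc_per_node", str(nproc_per_node),
        "--nnodes", str(nnodes),
        "--node_rank", str(node_rank),
        "--rdzv_endpoint", f"{node_address}:{port}",
        "--rdzv_id", str(rdzv_id),
        "--rdzv_backend", "c10d",
        "main.py",
        "--config", config,
    ]


if __name__ == "__main__":
    # "localhost" and 29500 are example values; use your own node and a free port.
    cmd = build_torchrun_command(
        "configs/train/rt1/frame_ada.yaml", "localhost", 29500
    )
    print(shlex.join(cmd))
    # To launch from Python instead of printing:
    #   import subprocess; subprocess.run(cmd, check=True)
```

Building the command as a list avoids shell-quoting problems, and swapping the config path for `configs/evaluation/rt1/frame_ada.yaml` gives the evaluation launch.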

To quantitatively evaluate the model in the short trajectory setting, we first need to generate all evaluation videos.

Generate evaluation videos:

torchrun --nproc_per_node 8 --nnodes 1 --node_rank 0 --rdzv_endpoint {node_address}:{port} --rdzv_id 107 --rdzv_backend c10d main.py --config configs/evaluation/rt1/frame_ada.yaml

We provide an automated script to calculate the metrics of the generated short videos:

python3 evaluate/evaluation_short_script.py

Generate all long videos in an autoregressive manner.
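Autoregressive generation can be pictured as chaining short clips: each clip is generated from one initial frame and a chunk of actions, and the last generated frame seeds the next clip. The sketch below assumes exactly that chaining rule and uses a hypothetical `generate_clip` stand-in in which frames and actions are plain numbers; it is an illustration of the idea, not the repository's implementation.

```python
def generate_clip(initial_frame, actions):
    """Stand-in for a short-video model: one new frame per action.

    Frames and actions are plain numbers here so the example runs anywhere.
    """
    frames, frame = [], initial_frame
    for action in actions:
        frame = frame + action
        frames.append(frame)
    return frames


def generate_long_video(initial_frame, actions, clip_len):
    """Roll out a long trajectory clip by clip."""
    video = [initial_frame]
    for start in range(0, len(actions), clip_len):
        chunk = actions[start:start + clip_len]
        # Condition each clip on the last frame generated so far.
        video.extend(generate_clip(video[-1], chunk))
    return video


if __name__ == "__main__":
    print(generate_long_video(0, [1, 1, 1, 1, 1], clip_len=2))
```

Because every clip starts from a generated frame rather than a real one, errors can accumulate over a long rollout, which is one reason to evaluate long videos separately from short ones.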

Generate the scripts for generating long videos in a multi-process manner:

python3 scripts/generate_command.py

Run:

bash scripts/generate_long_video_rt1_frame_ada.sh

Use the automated script to calculate the metrics of the generated long videos:

python3 evaluate/evaluation_long_script.py

If you find this code useful in your work, please consider citing:

@article{FangqiIRASim2024,

title={IRASim: Learning Interactive Real-Robot Action Simulators},

author={Fangqi Zhu and Hongtao Wu and Song Guo and Yuxiao Liu and Chilam Cheang and Tao Kong},

year={2024},

journal={arXiv:2406.12802}

}

- Our implementation is largely adapted from Latte.

- Our FVD implementation is adapted from stylegan-v.

- Our FID implementation is adapted from pytorch-fid.

- Our RT-1, Bridge, and Language-Table datasets are adapted from RT-1, Bridge, and open_x_embodiment.

If you have any questions while trying out, running, or deploying the project, or any ideas or suggestions for it, feel free to join our WeChat group discussion!