Facial Recognition & Facial Analysis for Retail and Security Using AWS Rekognition

We hear plenty about computer vision in competitions, articles, and coursework, but we rarely run into the technology in everyday life. When we go to the mall, attend school, travel through transit hubs, stay at hotels, or contemplate choices in prison, we almost never see computer vision in use, despite decades of exceptional growth. We know these tools are valuable, because manufacturing and tech companies are rapidly adopting computer vision with great returns.

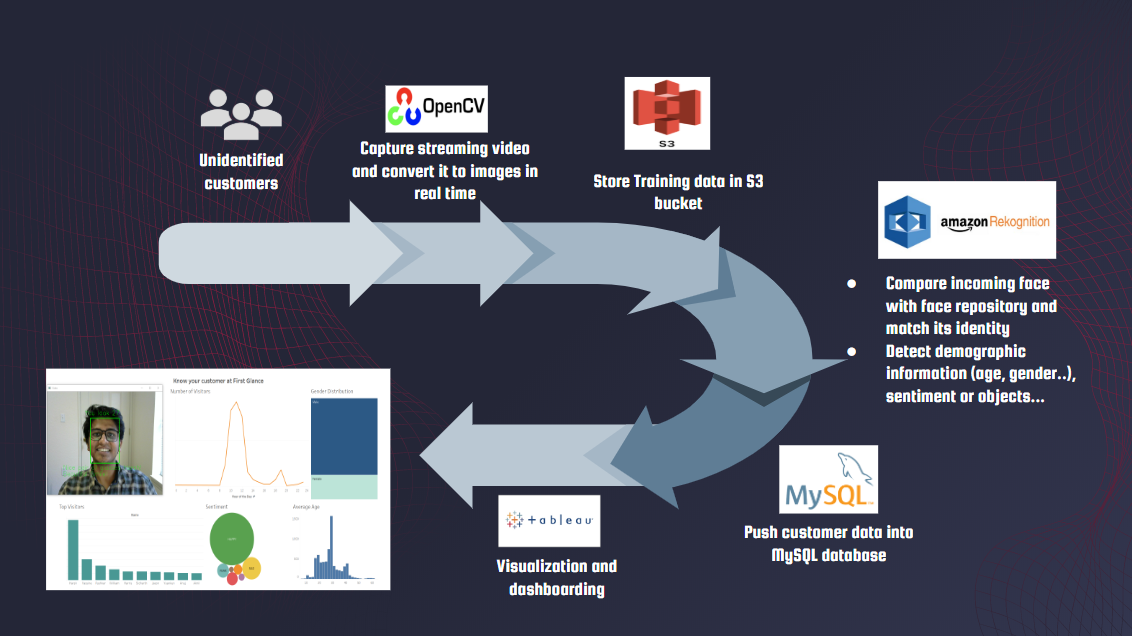

This is why we built a simple computer vision platform that anyone can deploy. Its key features are:

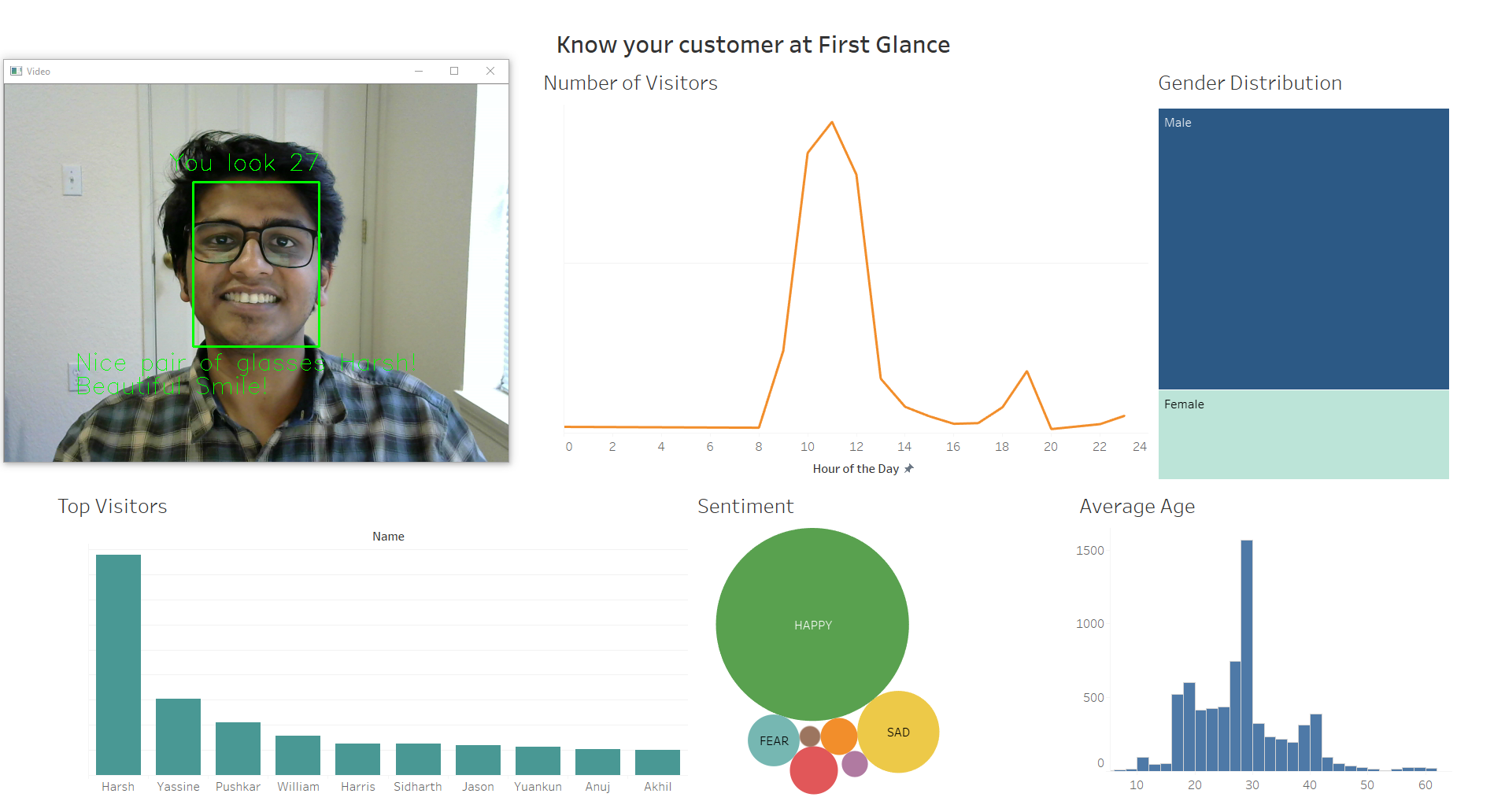

- Recognize individuals from a single training image with approximately 100% accuracy (LinkedIn headshots were used as the example).

- Facial analysis including age, gender, sentiment, facial hair, and accessories such as glasses.

- Personalised message to the customer based on facial analysis and recognition.

- Logs stored in MySQL database.

- Insights visualized using Tableau.

- Low cost of setup: all you need is an AWS account ($150 in credits for students) and a Tableau license (free for students). Even if you are not a student, AWS charges for this application are as low as 1 cent for analysing 100 images and $5 for storing up to 1M faces.
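To make the facial analysis and personalised message features more concrete, here is a minimal sketch of how one face returned by Rekognition's DetectFaces call (requested with `Attributes=["ALL"]`) could be flattened into the attributes listed above. The function names `summarize_face` and `greeting`, the message wording, and the sample values are assumptions for illustration; they are not taken from the project's code.

```python
from typing import Any, Dict, Optional


def summarize_face(face: Dict[str, Any]) -> Dict[str, Any]:
    """Flatten one entry of a DetectFaces `FaceDetails` list into a small dict."""
    # Emotions arrive as a list of {"Type", "Confidence"}; keep the most confident.
    emotions = face.get("Emotions", [])
    top = max(emotions, key=lambda e: e["Confidence"]) if emotions else None
    age = face.get("AgeRange", {})
    return {
        "age_low": age.get("Low"),
        "age_high": age.get("High"),
        "gender": face.get("Gender", {}).get("Value"),
        "emotion": top["Type"] if top else None,
        "beard": face.get("Beard", {}).get("Value", False),
        "eyeglasses": face.get("Eyeglasses", {}).get("Value", False),
    }


def greeting(summary: Dict[str, Any], name: Optional[str] = None) -> str:
    """Hypothetical personalised message; the wording is illustrative only."""
    who = name or "there"
    if summary["emotion"] == "HAPPY":
        return f"Hi {who}, great to see you smiling!"
    return f"Hi {who}, welcome!"


# Hand-written sample in the shape of a DetectFaces FaceDetail (not real output).
SAMPLE_FACE = {
    "AgeRange": {"Low": 25, "High": 35},
    "Gender": {"Value": "Female", "Confidence": 99.2},
    "Emotions": [
        {"Type": "CALM", "Confidence": 12.0},
        {"Type": "HAPPY", "Confidence": 85.5},
    ],
    "Beard": {"Value": False, "Confidence": 98.0},
    "Eyeglasses": {"Value": True, "Confidence": 97.4},
}

print(greeting(summarize_face(SAMPLE_FACE), "Alex"))
```

Keeping the summary as a flat dict makes it straightforward to write the same values to the logs table and to chart them later.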

Sample dashboard:

This application has a diverse set of use cases in security, retail, and education. Here are a few examples:

This application is simple to set up and use.

Create a logs table by running customer_demographics.sql

Upload your face images to an S3 bucket: https://docs.aws.amazon.com/AmazonS3/latest/user-guide/upload-objects.html

Update face_collection.py with the correct AWS credentials, collection name, and S3 bucket name, then run it to add the faces to the collection.
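For readers who want to see what this step amounts to, the following is a rough sketch of indexing S3 images into a Rekognition collection with boto3. It is not the contents of face_collection.py: the helper names `external_id_for` and `add_faces`, the `main` wrapper, and the placeholder collection and bucket names are all assumptions. The sketch also assumes boto3 finds credentials in the environment or in the AWS config files, as an alternative to writing them in the script.

```python
import re
from typing import Any, Dict, Iterable


def external_id_for(key: str) -> str:
    """Turn an S3 key such as 'faces/Jane Doe.jpg' into a usable ExternalImageId."""
    stem = key.rsplit("/", 1)[-1].rsplit(".", 1)[0]
    # ExternalImageId accepts only letters, digits and the characters _ . - :
    return re.sub(r"[^a-zA-Z0-9_.\-:]", "_", stem)


def add_faces(client: Any, collection_id: str, bucket: str,
              keys: Iterable[str]) -> Dict[str, str]:
    """Index one face per S3 image; return a mapping of S3 key to FaceId."""
    indexed: Dict[str, str] = {}
    for key in keys:
        response = client.index_faces(
            CollectionId=collection_id,
            Image={"S3Object": {"Bucket": bucket, "Name": key}},
            ExternalImageId=external_id_for(key),  # label returned on later matches
            MaxFaces=1,  # one headshot per person
        )
        records = response.get("FaceRecords", [])
        if records:  # images with no detectable face are skipped
            indexed[key] = records[0]["Face"]["FaceId"]
    return indexed


def main(collection_id: str = "my-collection", bucket: str = "my-bucket") -> None:
    """Placeholder names; needs boto3 and valid AWS credentials."""
    import boto3  # imported here so the helpers above work without it

    rekognition = boto3.client("rekognition")
    s3 = boto3.client("s3")
    try:
        rekognition.create_collection(CollectionId=collection_id)
    except rekognition.exceptions.ResourceAlreadyExistsException:
        pass  # collection was created on an earlier run
    # list_objects_v2 returns at most 1,000 keys per call; enough for a demo.
    listing = s3.list_objects_v2(Bucket=bucket)
    keys = [obj["Key"] for obj in listing.get("Contents", [])]
    print(add_faces(rekognition, collection_id, bucket, keys))
```

Calling `main()` with your own collection and bucket names runs the whole step. Deriving the ExternalImageId from the file name means a later match can be reported by the person's name rather than by an opaque FaceId.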

Run letsgetstart.py, which opens your web camera and starts storing the data. Remember to update the MySQL password in the file.
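The capture-and-recognize loop can be pictured roughly as below. This is a sketch under assumptions rather than the code in letsgetstart.py: it assumes OpenCV (`cv2`) for the webcam, a boto3 Rekognition client passed in as `client`, and a hypothetical logs table `customer_demographics` with columns `person` and `similarity`. The real table layout is whatever customer_demographics.sql defines.

```python
from typing import Any, Optional, Tuple

# Hypothetical table and column names; adjust to the schema you actually created.
INSERT_SQL = "INSERT INTO customer_demographics (person, similarity) VALUES (%s, %s)"


def capture_jpeg(camera_index: int = 0) -> bytes:
    """Grab a single webcam frame and return it as JPEG bytes."""
    import cv2  # opencv-python, imported lazily so the rest runs without it

    camera = cv2.VideoCapture(camera_index)
    try:
        ok, frame = camera.read()
        if not ok:
            raise RuntimeError("could not read a frame from the webcam")
        ok, encoded = cv2.imencode(".jpg", frame)
        if not ok:
            raise RuntimeError("could not encode the frame as JPEG")
        return encoded.tobytes()
    finally:
        camera.release()


def identify(client: Any, collection_id: str, image_bytes: bytes,
             threshold: float = 90.0) -> Optional[Tuple[str, float]]:
    """Return (label, similarity) for the best match, or None if nothing matches."""
    # Rekognition raises InvalidParameterException when the image has no face;
    # a long-running loop would need to catch that and carry on.
    response = client.search_faces_by_image(
        CollectionId=collection_id,
        Image={"Bytes": image_bytes},
        MaxFaces=1,
        FaceMatchThreshold=threshold,
    )
    matches = response.get("FaceMatches", [])
    if not matches:
        return None
    best = max(matches, key=lambda m: m["Similarity"])
    face = best["Face"]
    return face.get("ExternalImageId", face["FaceId"]), best["Similarity"]


def log_params(match: Optional[Tuple[str, float]]) -> Tuple[str, Optional[float]]:
    """Build the parameter tuple for INSERT_SQL; unmatched visitors are 'unknown'."""
    if match is None:
        return ("unknown", None)
    return (match[0], round(match[1], 2))
```

With a MySQL driver such as mysql-connector-python or PyMySQL, one row per frame would be written with `cursor.execute(INSERT_SQL, log_params(match))` followed by a commit. Passing the values as parameters instead of formatting them into the SQL string avoids quoting problems with names.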

Use the sample dashboard or create your own visualization from the MySQL database.

Get in touch with us if you need help implementing or want to contribute to this project.

(L-R) Harsh Seksaria, Hamed Khoojinian, Yingzi Wang, Yassine Manane, Peiyuan Wu