We have expanded on the original Grounding DINO repository (https://github.com/IDEA-Research/GroundingDINO) by adding the ability to train the model with image-to-text grounding. This capability is essential in applications where textual descriptions must align with regions of an image. For instance, given the caption "a cat on the sofa," the model should be able to localize both the "cat" and the "sofa" in the image.

- Fine-tuning DINO: This extension allows you to fine-tune DINO on your custom dataset.

- Bounding Box Regression: Uses Generalized IoU and Smooth L1 loss for improved bounding box prediction.

- Position-aware Logit Losses: The model learns not only to detect objects but also to associate them with their positions in the caption.

- NMS: We also implemented phrase-based NMS to remove redundant boxes for the same object.
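
The phrase-based NMS listed above can be illustrated with a minimal sketch. It assumes boxes in `(x1, y1, x2, y2)` format and runs greedy NMS separately for each predicted phrase, so overlapping boxes with different phrases do not suppress each other. The names `box_iou` and `phrase_nms` and the `iou_threshold` default are hypothetical and are not taken from this repository's code.

```python
from collections import defaultdict


def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def phrase_nms(boxes, scores, phrases, iou_threshold=0.5):
    """Return sorted indices of boxes kept after per-phrase greedy NMS."""
    # Group box indices by phrase (illustrative assumption: exact string match).
    groups = defaultdict(list)
    for i, phrase in enumerate(phrases):
        groups[phrase].append(i)

    keep = []
    for idxs in groups.values():
        # Visit boxes from highest to lowest score within the phrase group.
        idxs.sort(key=lambda i: scores[i], reverse=True)
        kept = []
        for i in idxs:
            # Keep a box only if it does not overlap too much with a kept one.
            if all(box_iou(boxes[i], boxes[j]) <= iou_threshold for j in kept):
                kept.append(i)
        keep.extend(kept)
    return sorted(keep)


boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (11, 11, 51, 51), (100, 100, 150, 150)]
scores = [0.9, 0.8, 0.7, 0.6]
phrases = ["fruit", "fruit", "peduncle", "fruit"]
kept = phrase_nms(boxes, scores, phrases)
print(kept)  # [0, 2, 3]
```

In this toy input the second "fruit" box is suppressed by the first, while the overlapping "peduncle" box survives because it belongs to a different phrase.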

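The box regression objective listed above combines Generalized IoU with Smooth L1. The following is a minimal pure-Python sketch for a single predicted/target box pair, assuming boxes in `(x1, y1, x2, y2)` format. The function names, the `beta` parameter, and the weights `w_l1` and `w_giou` are illustrative assumptions and do not reflect the values used by the training script.

```python
def smooth_l1(pred, target, beta=1.0):
    """Mean Smooth L1 loss over the four box coordinates."""
    total = 0.0
    for p, t in zip(pred, target):
        d = abs(p - t)
        # Quadratic near zero, linear for large errors.
        total += 0.5 * d * d / beta if d < beta else d - 0.5 * beta
    return total / len(pred)


def giou(a, b):
    """Generalized IoU of two (x1, y1, x2, y2) boxes, in the range (-1, 1]."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    iou = inter / union if union > 0 else 0.0
    # Smallest axis-aligned box enclosing both inputs.
    cx1, cy1 = min(a[0], b[0]), min(a[1], b[1])
    cx2, cy2 = max(a[2], b[2]), max(a[3], b[3])
    c_area = (cx2 - cx1) * (cy2 - cy1)
    if c_area <= 0:
        return iou
    return iou - (c_area - union) / c_area


def box_loss(pred, target, w_l1=1.0, w_giou=1.0):
    """Weighted sum of Smooth L1 and (1 - GIoU); weights are hypothetical."""
    return w_l1 * smooth_l1(pred, target) + w_giou * (1.0 - giou(pred, target))


a = (0.0, 0.0, 1.0, 1.0)
b = (2.0, 0.0, 3.0, 1.0)
print(box_loss(a, a))  # 0.0 for a perfect prediction
print(giou(a, b))      # negative for disjoint boxes
```

Unlike plain IoU, the GIoU term still changes with the distance between non-overlapping boxes, which is why it is commonly paired with a coordinate-wise loss such as Smooth L1.
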
See the original repository for instructions on installing the required dependencies. Essentially, we need to install the prerequisites and

- Prepare your dataset with images and associated textual captions. A tiny dataset is provided in multimodal-data to demonstrate the expected data format.

- Run train.py to start training:

python train.py

Visualize the training results on test images:

python test.py

- Currently supports only one image at a time; add batching support

- Add model evaluations

- We did not add the auxiliary losses mentioned in the original paper, since we are only fine-tuning an already trained model. Feel free to add auxiliary losses and compare the results.

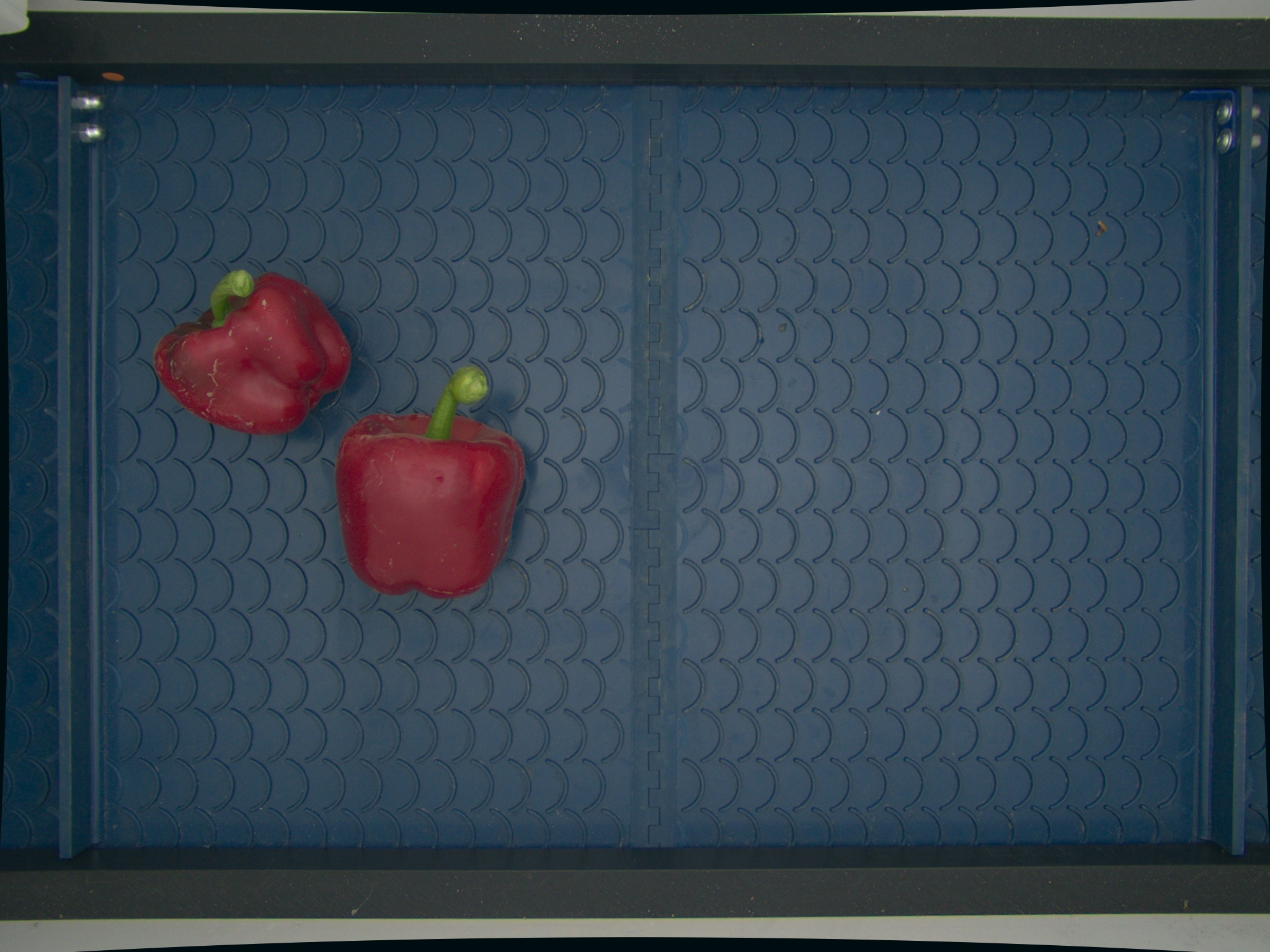

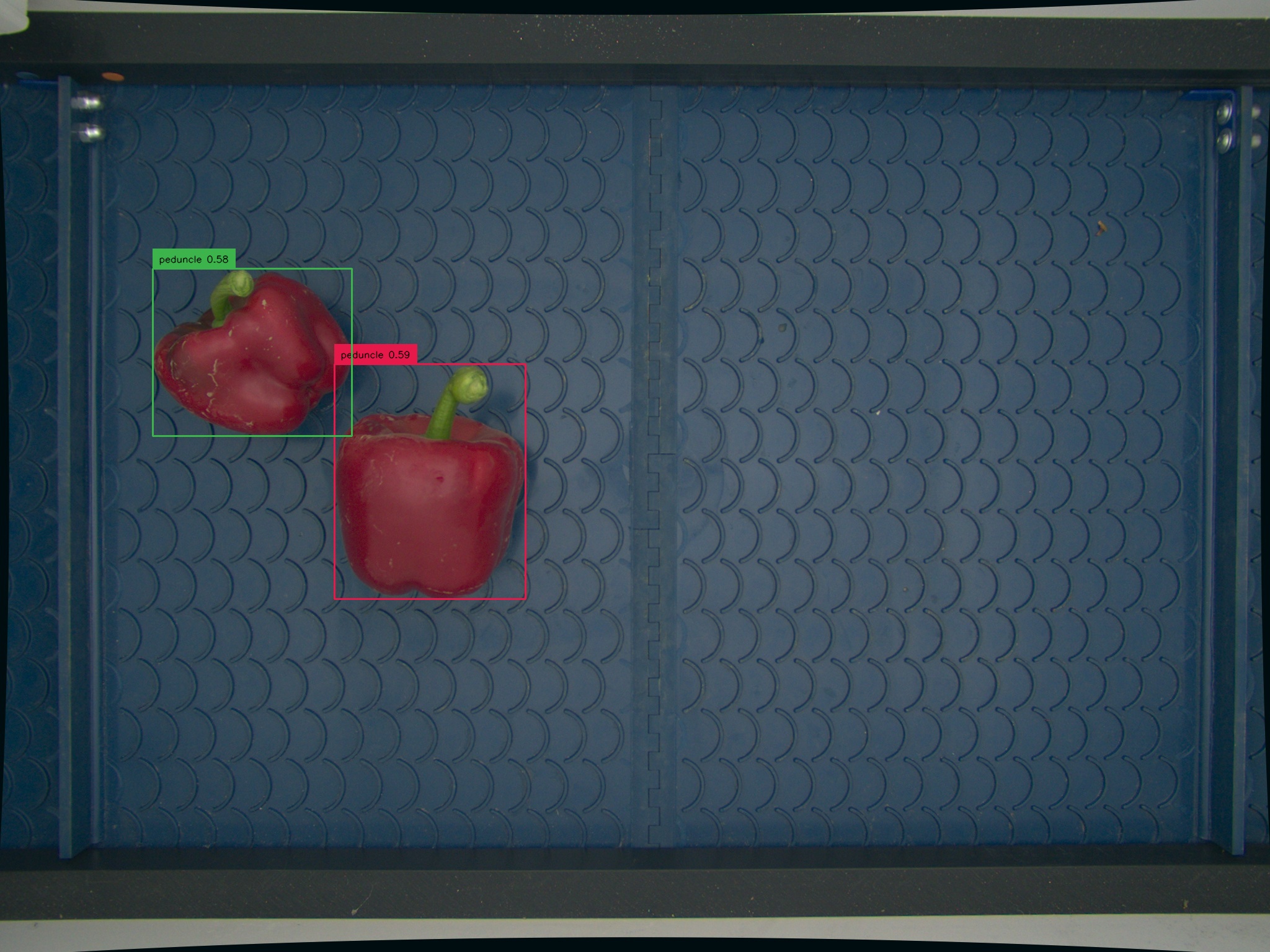

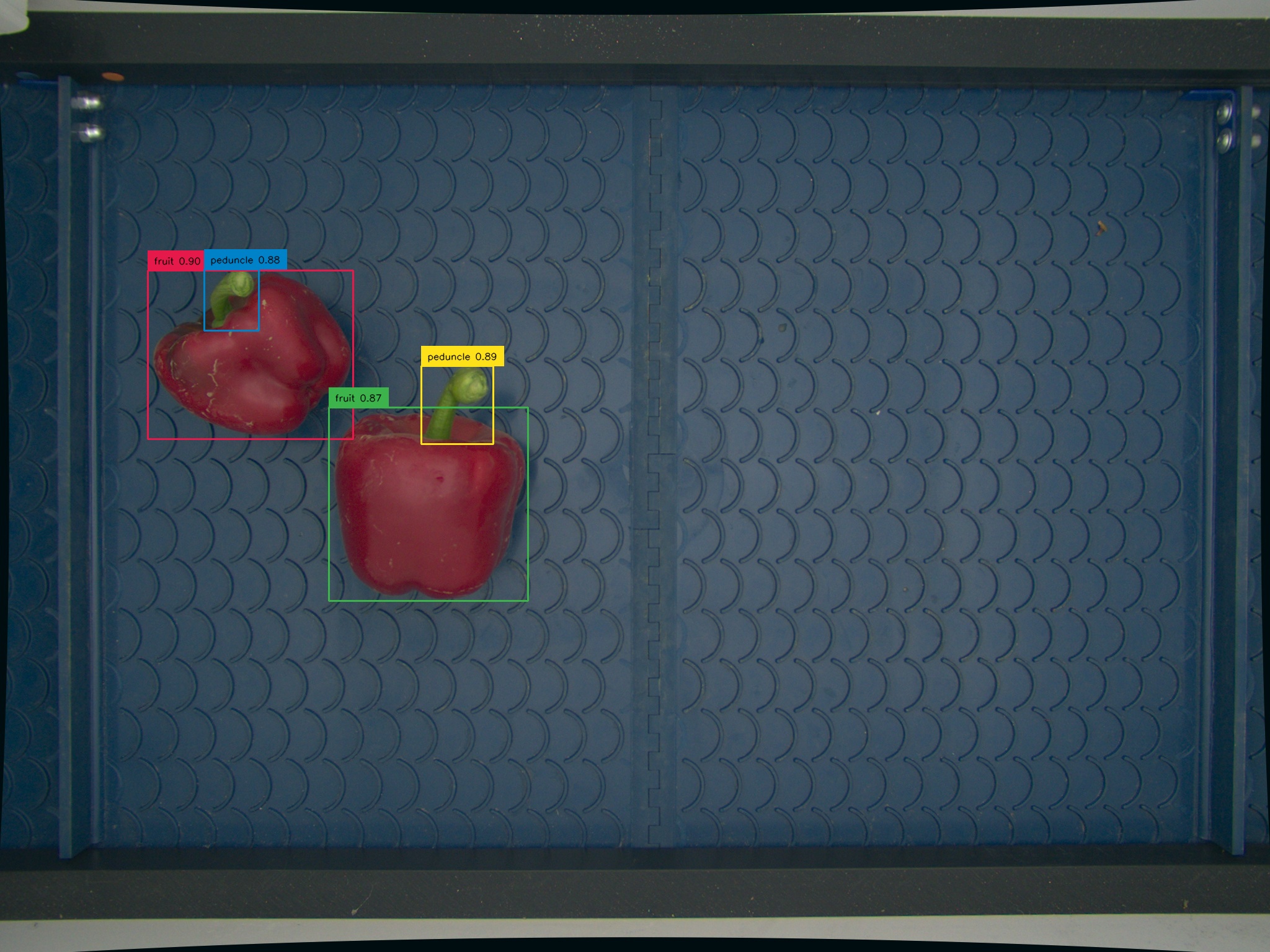

For the input text "peduncle.fruit." and the input test image:

Initially, the model detects the wrong category and does not detect the peduncle (green part) of the fruits.

After fine-tuning, the model detects the correct object categories with high confidence and detects all parts of the fruits mentioned in the text.
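
The text prompt in the example above lists category names separated by periods. As a small sketch of that convention, the hypothetical helpers below build such a prompt from a list of categories and split it back into phrases. The lowercasing and whitespace stripping are assumptions made for illustration, not behavior confirmed by this repository.

```python
def build_caption(categories):
    """Join category names into a period-delimited prompt such as 'peduncle.fruit.'."""
    # Assumption: names are lowercased and stripped; empty names are skipped.
    names = [c.strip().lower() for c in categories if c.strip()]
    return "".join(name + "." for name in names)


def split_caption(caption):
    """Recover the individual phrases from a period-delimited prompt."""
    return [part.strip() for part in caption.split(".") if part.strip()]


caption = build_caption(["peduncle", "fruit"])
print(caption)                 # peduncle.fruit.
print(split_caption(caption))  # ['peduncle', 'fruit']
```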

Feel free to open issues, suggest improvements, or submit pull requests. If you found this repository useful, consider giving it a star to make it more visible to others!